## Diagram: Predicate Identification and Grounding

### Overview

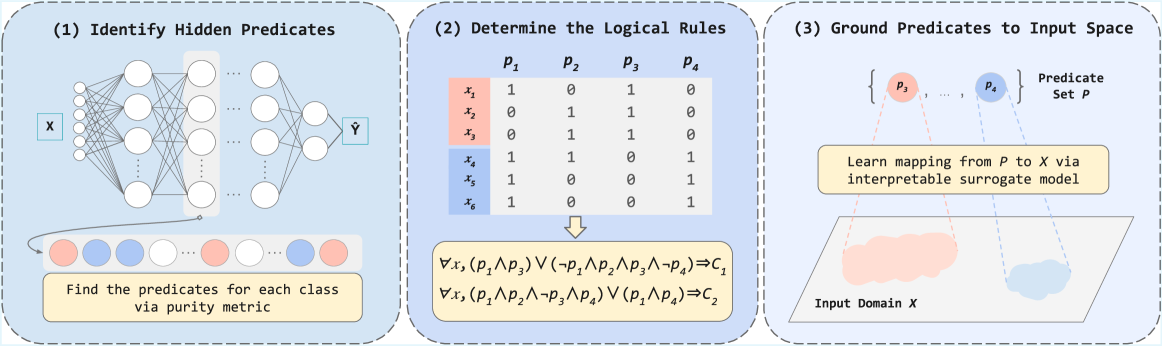

The image is a diagram illustrating a three-step process for identifying hidden predicates, determining logical rules, and grounding those predicates to an input space. It appears to be related to explainable AI, specifically how to extract logical rules from a trained neural network.

### Components/Axes

* **Panel 1: (1) Identify Hidden Predicates**

* A neural network diagram with input *X* and output *Ŷ*.

* A series of colored circles (predicates) below the network, some red and some blue.

* Text: "Find the predicates for each class via purity metric"

* **Panel 2: (2) Determine the Logical Rules**

* A table showing the relationship between input variables *x<sub>i</sub>* and predicates *p<sub>j</sub>*.

* Arrow pointing downwards from the table to logical rules.

* Table Rows: *x<sub>1</sub>*, *x<sub>2</sub>*, *x<sub>3</sub>* (highlighted in light red), *x<sub>4</sub>*, *x<sub>5</sub>*, *x<sub>6</sub>* (highlighted in light blue)

* Table Columns: *p<sub>1</sub>*, *p<sub>2</sub>*, *p<sub>3</sub>*, *p<sub>4</sub>*

* Logical Rules:

* ∀x, (p<sub>1</sub> ∧ p<sub>3</sub>) ∨ (¬p<sub>1</sub> ∧ p<sub>2</sub> ∧ p<sub>3</sub> ∧ ¬p<sub>4</sub>) ⇒ C<sub>1</sub>

* ∀x, (p<sub>1</sub> ∧ p<sub>2</sub> ∧ ¬p<sub>3</sub> ∧ p<sub>4</sub>) ∨ (p<sub>1</sub> ∧ p<sub>4</sub>) ⇒ C<sub>2</sub>

* **Panel 3: (3) Ground Predicates to Input Space**

* A set containing predicates *p<sub>3</sub>*, ..., *p<sub>4</sub>*.

* Text: "Predicate Set P"

* Text: "Learn mapping from P to X via interpretable surrogate model"

* A trapezoidal shape representing "Input Domain X" with two colored regions inside (red and blue).

### Detailed Analysis or ### Content Details

**Panel 1: Identify Hidden Predicates**

* The neural network takes an input *X* and produces an output *Ŷ*.

* The colored circles represent predicates. Red circles likely correspond to one class, and blue circles to another.

* The text indicates that a "purity metric" is used to find these predicates.

**Panel 2: Determine the Logical Rules**

* The table shows the relationship between input variables *x<sub>i</sub>* and predicates *p<sub>j</sub>*.

* *x<sub>1</sub>*: p<sub>1</sub>=1, p<sub>2</sub>=0, p<sub>3</sub>=1, p<sub>4</sub>=0

* *x<sub>2</sub>*: p<sub>1</sub>=0, p<sub>2</sub>=1, p<sub>3</sub>=1, p<sub>4</sub>=0

* *x<sub>3</sub>*: p<sub>1</sub>=0, p<sub>2</sub>=1, p<sub>3</sub>=1, p<sub>4</sub>=0

* *x<sub>4</sub>*: p<sub>1</sub>=1, p<sub>2</sub>=1, p<sub>3</sub>=0, p<sub>4</sub>=1

* *x<sub>5</sub>*: p<sub>1</sub>=1, p<sub>2</sub>=0, p<sub>3</sub>=0, p<sub>4</sub>=1

* *x<sub>6</sub>*: p<sub>1</sub>=1, p<sub>2</sub>=0, p<sub>3</sub>=0, p<sub>4</sub>=1

* The logical rules are derived from the table and represent conditions for classes C<sub>1</sub> and C<sub>2</sub>.

**Panel 3: Ground Predicates to Input Space**

* The predicates are mapped to the input domain *X*.

* An "interpretable surrogate model" is used to learn this mapping.

* The colored regions within the input domain likely represent areas where specific predicates are active.

### Key Observations

* The diagram outlines a process for extracting logical rules from a neural network.

* Predicates are identified and then grounded to the input space.

* The goal is to create an interpretable model that explains the network's behavior.

### Interpretation

The diagram illustrates a method for making neural networks more transparent and understandable. By identifying hidden predicates and grounding them to the input space, it becomes possible to extract logical rules that govern the network's decision-making process. This approach can be valuable for debugging, verifying, and explaining AI systems. The use of a "purity metric" and an "interpretable surrogate model" suggests a focus on both accuracy and interpretability. The logical rules provide a human-readable representation of the network's internal logic.