TECHNICAL ASSET FINGERPRINT

7992e7de7af2b36940b3cb93

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

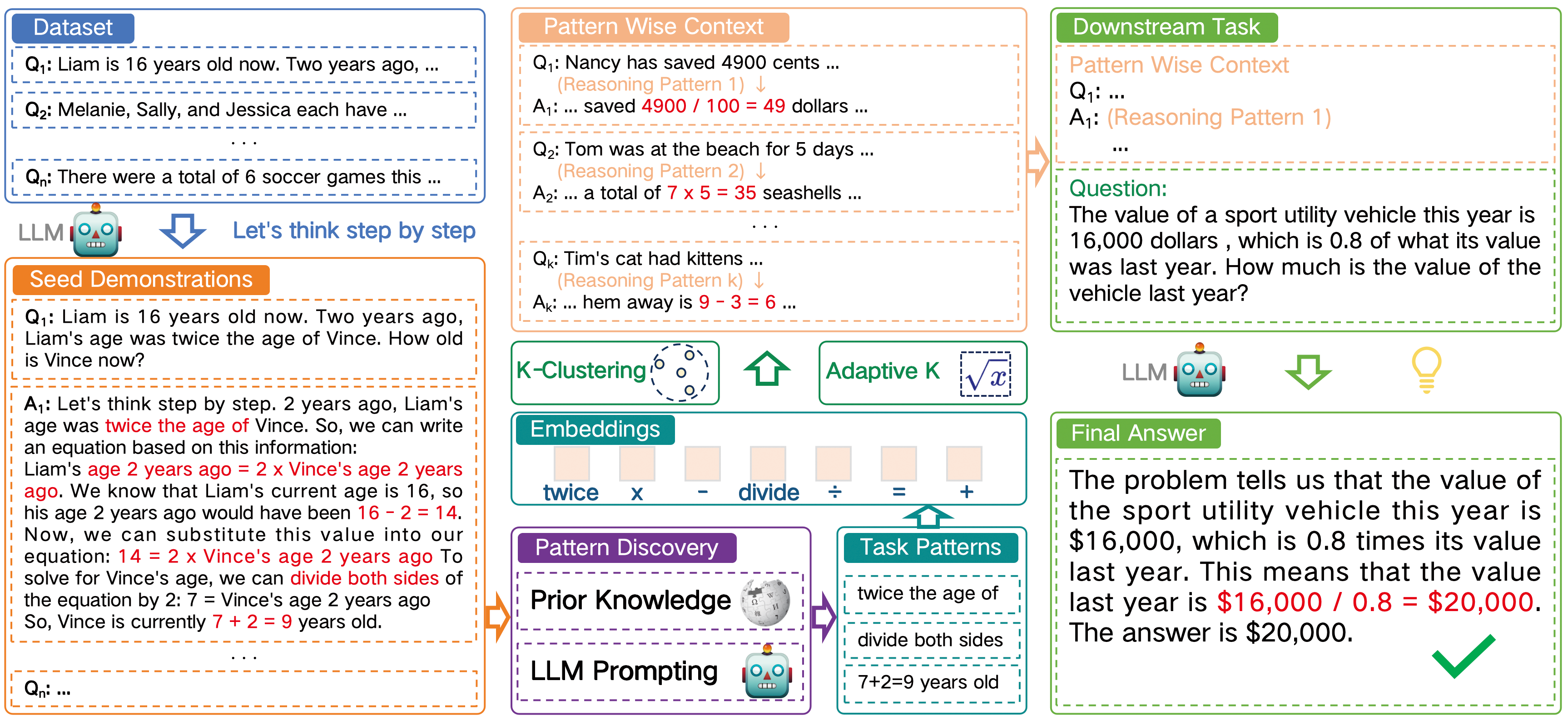

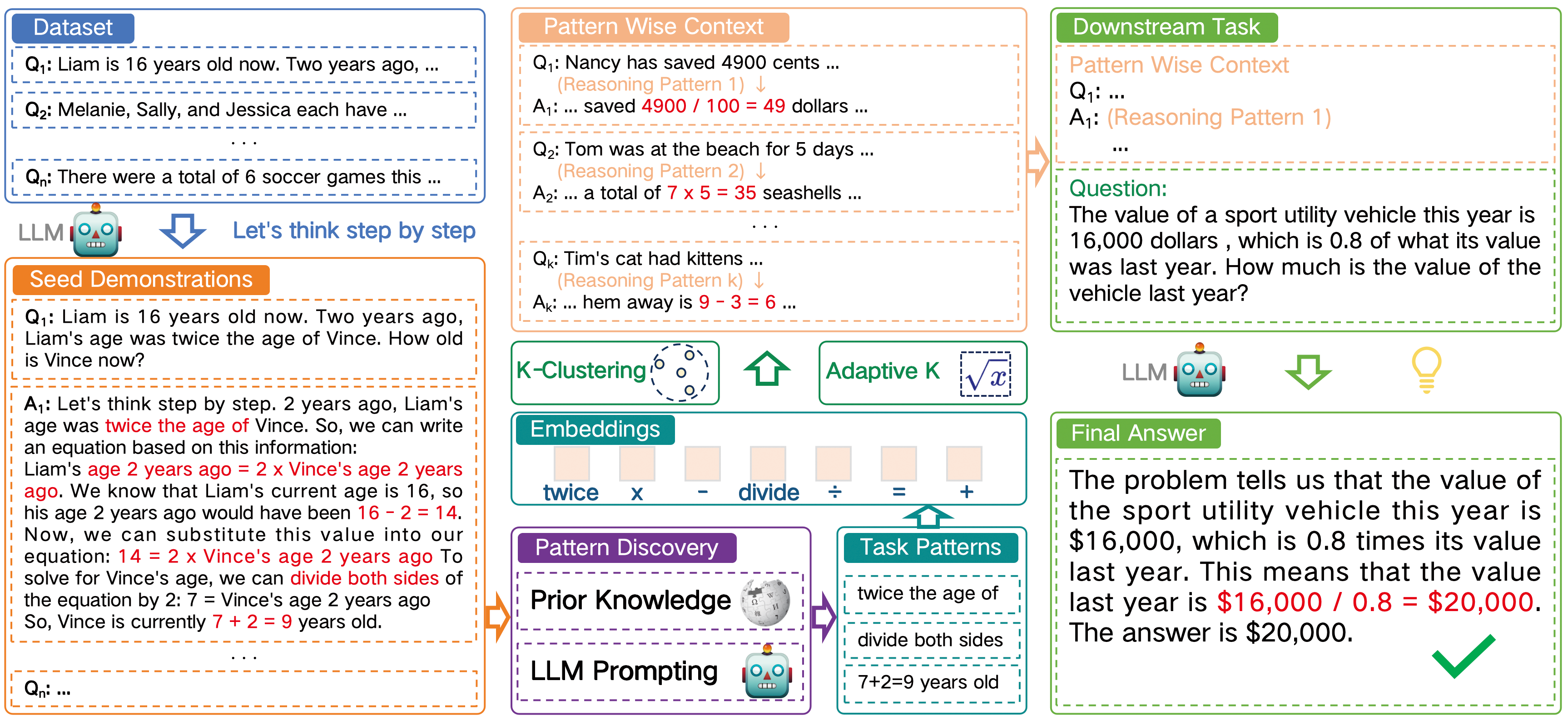

## Flow Diagram: LLM Reasoning Process

### Overview

The image presents a flow diagram illustrating how a Large Language Model (LLM) processes and solves a reasoning problem. It outlines the steps from initial data input to the final answer, incorporating pattern recognition, knowledge integration, and step-by-step reasoning.

### Components/Axes

The diagram is divided into several key components, each represented by a bordered box:

1. **Dataset:** Contains example questions.

2. **Seed Demonstrations:** Provides detailed examples of how to solve similar problems.

3. **Pattern Wise Context:** Shows examples of reasoning patterns.

4. **Downstream Task:** Presents the specific question to be solved.

5. **Final Answer:** Displays the solution to the problem.

6. **Embeddings:** Represents the numerical representation of the text.

7. **Pattern Discovery:** Involves prior knowledge and LLM prompting.

8. **Task Patterns:** Lists common mathematical operations.

The diagram also includes the following elements:

* **LLM Robot Icon:** Appears at multiple stages, representing the LLM's involvement.

* **Arrows:** Indicate the flow of information and processing steps.

* **Mathematical Symbols:** Represent operations like multiplication, division, addition, and square root.

### Detailed Analysis

**1. Dataset (Top-Left, Blue Border):**

* Q1: Liam is 16 years old now. Two years ago, ...

* Q2: Melanie, Sally, and Jessica each have ...

* Qn: There were a total of 6 soccer games this ...

**2. Seed Demonstrations (Bottom-Left, Orange Border):**

* Q1: Liam is 16 years old now. Two years ago, Liam's age was twice the age of Vince. How old is Vince now?

* A1: Let's think step by step. 2 years ago, Liam's age was twice the age of Vince. So, we can write an equation based on this information: Liam's age 2 years ago = 2 x Vince's age 2 years ago. We know that Liam's current age is 16, so his age 2 years ago would have been 16 - 2 = 14. Now, we can substitute this value into our equation: 14 = 2 x Vince's age 2 years ago To solve for Vince's age, we can divide both sides of the equation by 2: 7 = Vince's age 2 years ago So, Vince is currently 7 + 2 = 9 years old.

* Qn: ...
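The arithmetic in the seed demonstration can be checked mechanically. The following is a minimal sketch that retraces the steps of A1; the variable names are illustrative and do not appear in the diagram.

```python
# Retrace the steps of the seed demonstration A1 (variable names are illustrative).
liam_now = 16
years_ago = 2

liam_then = liam_now - years_ago      # 16 - 2 = 14
vince_then = liam_then // 2           # 14 = 2 x Vince's age two years ago, so 7
vince_now = vince_then + years_ago    # 7 + 2 = 9

# Sanity check of the stated relationship two years ago.
assert liam_then == 2 * vince_then

print(vince_now)
```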

**3. Pattern Wise Context (Top-Center, Orange Border):**

* Q1: Nancy has saved 4900 cents ... (Reasoning Pattern 1) ↓

* A1: ... saved 4900 / 100 = 49 dollars ...

* Q2: Tom was at the beach for 5 days ... (Reasoning Pattern 2) ↓

* A2: ... a total of 7 x 5 = 35 seashells ...

* Qk: Tim's cat had kittens ... (Reasoning Pattern k) ↓

* Ak: ... hem away is 9-3 = 6 ...

**4. Downstream Task (Top-Right, Green Border):**

* Pattern Wise Context

* Q1: ...

* A1: (Reasoning Pattern 1)

* Question: The value of a sport utility vehicle this year is 16,000 dollars, which is 0.8 of what its value was last year. How much is the value of the vehicle last year?

**5. Final Answer (Bottom-Right, Green Border):**

* The problem tells us that the value of the sport utility vehicle this year is $16,000, which is 0.8 times its value last year. This means that the value last year is $16,000 / 0.8 = $20,000. The answer is $20,000.
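The division in the final answer can be verified with exact rational arithmetic. This is a small sketch, not part of the diagram; the variable names are assumptions made for illustration.

```python
from fractions import Fraction

# Exact arithmetic avoids any floating-point rounding of 0.8.
value_this_year = Fraction(16000)
ratio = Fraction("0.8")  # this year's value as a fraction of last year's

value_last_year = value_this_year / ratio

# Check the relationship stated in the question.
assert value_last_year * ratio == value_this_year

print(value_last_year)
```

Using `Fraction` rather than `float` is a design choice for the check only; it keeps 0.8 exact as 4/5.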

**6. Embeddings (Center, No Border):**

* K-Clustering: A diagram of circles clustered together.

* Adaptive K: A square root symbol.

* The words "twice", "x", "divide", "+", "-", and "÷" are present.

**7. Pattern Discovery (Bottom-Center, Purple Border):**

* Prior Knowledge: A globe icon.

* LLM Prompting: An LLM robot icon.

**8. Task Patterns (Center-Right, Purple Border):**

* twice the age of

* divide both sides

* 7+2=9 years old
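The diagram does not say how the task patterns are obtained beyond naming "Prior Knowledge" and "LLM Prompting". As a rough illustration only, the sketch below pulls operation cues out of a rationale by simple token matching. The cue vocabulary is taken from the labels shown in the Embeddings area; the function name `extract_cues` and the matching approach are assumptions, not the method depicted.

```python
import re

# Cue vocabulary copied from the Embeddings labels; treating it as a fixed
# lookup set is an assumption made for this sketch.
CUES = {"twice", "x", "-", "divide", "÷", "=", "+"}

def extract_cues(rationale: str) -> list[str]:
    """Return the cue tokens found in a rationale, in order of first appearance."""
    tokens = re.findall(r"[A-Za-z']+|[+\-=÷]", rationale)
    found = []
    for token in tokens:
        lowered = token.lower()
        if lowered in CUES and lowered not in found:
            found.append(lowered)
    return found

# Seed demonstration A1 as transcribed from the diagram.
rationale = (
    "Let's think step by step. 2 years ago, Liam's age was twice the age of Vince. "
    "So, we can write an equation based on this information: "
    "Liam's age 2 years ago = 2 x Vince's age 2 years ago. "
    "We know that Liam's current age is 16, so his age 2 years ago would have been 16 - 2 = 14. "
    "Now, we can substitute this value into our equation: 14 = 2 x Vince's age 2 years ago. "
    "To solve for Vince's age, we can divide both sides of the equation by 2: "
    "7 = Vince's age 2 years ago. So, Vince is currently 7 + 2 = 9 years old."
)

print(extract_cues(rationale))
```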

### Key Observations

* The diagram illustrates a step-by-step approach to problem-solving using an LLM.

* The LLM utilizes seed demonstrations and pattern recognition to understand and solve the given problem.

* The process involves converting text into numerical embeddings and clustering them, with an "Adaptive K" step that presumably adjusts the number of clusters.

* The final answer is derived through a series of mathematical operations based on the problem's context.

### Interpretation

The diagram demonstrates how an LLM can be used to solve reasoning problems by breaking them down into smaller, manageable steps. The LLM leverages prior knowledge, pattern recognition, and mathematical operations to arrive at the correct solution. The use of seed demonstrations helps the LLM understand the problem's context and apply appropriate reasoning strategies. This approach highlights the potential of LLMs in automating complex problem-solving tasks.

EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite

INTEL_VERIFIED

## Diagram: LLM Reasoning Process for Mathematical Word Problems

### Overview

This diagram illustrates a proposed process for a Large Language Model (LLM) to solve mathematical word problems. It outlines the flow of information from a dataset of problems, through pattern recognition and contextual understanding, to a final answer. The process involves several stages: Dataset, Seed Demonstrations, Pattern Wise Context, Downstream Task, and Final Answer, with intermediate steps like K-Clustering, Embeddings, Pattern Discovery, and Task Patterns.

### Components/Axes

The diagram is structured into distinct blocks, each representing a stage or component of the LLM's reasoning process. There are no traditional axes or legends as this is not a chart.

**Blocks and their content:**

1. **Dataset:**

* `Q₁: Liam is 16 years old now. Two years ago, ...`

* `Q₂: Melanie, Sally, and Jessica each have ...`

* `...`

* `Qn: There were a total of 6 soccer games this ...`

2. **LLM (with an icon of a robot):**

* Text: `Let's think step by step`

* An arrow pointing downwards from "LLM" to "Seed Demonstrations".

3. **Seed Demonstrations:**

* `Q₁: Liam is 16 years old now. Two years ago, Liam's age was twice the age of Vince. How old is Vince now?`

* `A₁: Let's think step by step. 2 years ago, Liam's age was twice the age of Vince. So, we can write an equation based on this information: Liam's age 2 years ago = 2 x Vince's age 2 years ago. We know that Liam's current age is 16, so his age 2 years ago would have been 16 - 2 = 14. Now, we can substitute this value into our equation: 14 = 2 x Vince's age 2 years ago. To solve for Vince's age, we can divide both sides of the equation by 2: 7 = Vince's age 2 years ago. So, Vince is currently 7 + 2 = 9 years old.`

* `Qn: ...`

4. **Pattern Wise Context:**

* `Q₁: Nancy has saved 4900 cents ...`

* `(Reasoning Pattern 1) ↓`

* `A₁: ... saved 4900 / 100 = 49 dollars ...`

* `Q₂: Tom was at the beach for 5 days ...`

* `(Reasoning Pattern 2) ↓`

* `A₂: ... a total of 7 x 5 = 35 seashells ...`

* `...`

* `Qk: Tim's cat had kittens ...`

* `(Reasoning Pattern k) ↓`

* `Ak: ... hem away is 9 - 3 = 6 ...`

5. **K-Clustering (with an icon of clustered circles):**

* An upward-pointing arrow.

6. **Embeddings:**

* Three square boxes, each containing a smaller square.

* Text labels below the boxes: `twice`, `x`, `-`, `divide`, `=`, `+`.

7. **Adaptive K (with an icon of a square root symbol):**

8. **Pattern Discovery:**

* **Prior Knowledge:** (with an icon of Wikipedia logo)

* **LLM Prompting:** (with an icon of a robot)

9. **Task Patterns:**

* `twice the age of`

* `divide both sides`

* `7+2=9 years old`

10. **Downstream Task:**

* **Pattern Wise Context:**

* `Q₁: ...`

* `A₁: (Reasoning Pattern 1)`

* `...`

* **Question:** `The value of a sport utility vehicle this year is 16,000 dollars, which is 0.8 of what its value was last year. How much is the value of the vehicle last year?`

* **LLM (with an icon of a robot):**

* A downward-pointing arrow.

* An icon of a lightbulb.

11. **Final Answer:**

* `The problem tells us that the value of the sport utility vehicle this year is $16,000, which is 0.8 times its value last year. This means that the value last year is $16,000 / 0.8 = $20,000. The answer is $20,000.`

* A green checkmark icon.

### Detailed Analysis

The diagram depicts a multi-stage process:

* **Dataset:** Provides example mathematical word problems (`Q₁`, `Q₂`, `Qn`).

* **LLM & Step-by-Step Reasoning:** An LLM is introduced, with an emphasis on its ability to "think step by step".

* **Seed Demonstrations:** A detailed example of a word problem (`Q₁` about Liam's age) and its step-by-step solution (`A₁`) are provided. This demonstrates how the LLM might break down a problem, identify relationships (e.g., "twice the age of"), form equations, and solve them.

* **Pattern Wise Context:** This section shows how the LLM might extract specific reasoning patterns from different problems and their solutions. Examples include:

* Unit conversion (`cents` to `dollars`) with calculation `4900 / 100 = 49`.

* Multiplication for total quantity (`7 x 5 = 35`).

* Subtraction for difference (`9 - 3 = 6`).

* Each example is associated with a "Reasoning Pattern" and its corresponding answer.

* **Intermediate Processes:**

* **K-Clustering & Adaptive K:** These suggest methods for grouping similar patterns or adapting parameters, possibly for identifying relevant reasoning strategies.

* **Embeddings:** Visualized with abstract boxes and mathematical operators (`twice`, `x`, `-`, `divide`, `=`, `+`), this likely represents the LLM's internal representation of mathematical concepts and operations.

* **Pattern Discovery:** This stage combines "Prior Knowledge" (represented by the Wikipedia icon, suggesting general knowledge or learned facts) and "LLM Prompting" (robot icon, indicating the LLM's ability to generate or utilize prompts) to identify relevant patterns.

* **Task Patterns:** This block lists specific patterns extracted or generated, such as "twice the age of", "divide both sides", and a specific arithmetic result "7+2=9 years old". These are likely derived from the Seed Demonstrations and Pattern Wise Context.

* **Downstream Task:** This represents the application of the learned process to a new problem.

* It includes a "Pattern Wise Context" placeholder, implying that context from previous examples is relevant.

* A specific "Question" is posed: "The value of a sport utility vehicle this year is 16,000 dollars, which is 0.8 of what its value was last year. How much is the value of the vehicle last year?"

* The LLM icon is shown again, with a downward arrow and a lightbulb, suggesting it is processing the question and generating a solution.

* **Final Answer:** The solution to the downstream task is presented. It explicitly states the problem, the relationship (0.8 times value last year), and the calculation to find the value last year: `$16,000 / 0.8 = $20,000`. The final answer is confirmed as $20,000 with a checkmark.

### Key Observations

* The diagram emphasizes a structured, step-by-step approach for LLMs to solve word problems, moving from general examples to specific pattern extraction and application.

* "Seed Demonstrations" play a crucial role in providing concrete examples for the LLM to learn from.

* The process involves identifying and abstracting "Reasoning Patterns" from solved examples.

* "Embeddings" and "Pattern Discovery" represent internal LLM mechanisms for understanding and utilizing these patterns.

* The "Downstream Task" demonstrates the application of the learned process to a novel problem, which is then solved using a similar step-by-step logic.

* The final answer for the downstream task is explicitly calculated and verified.

### Interpretation

This diagram outlines a methodology for enhancing LLM capabilities in mathematical reasoning, particularly for word problems. It suggests that by providing explicit examples ("Seed Demonstrations") and then abstracting the underlying "Reasoning Patterns," an LLM can learn to decompose complex problems into manageable steps. The "Pattern Wise Context" and "Task Patterns" sections highlight the LLM's ability to generalize and apply learned arithmetic and algebraic relationships.

The inclusion of "Prior Knowledge" and "LLM Prompting" under "Pattern Discovery" indicates that the LLM leverages both its pre-existing knowledge base and its ability to generate or interpret instructions to find relevant solution strategies. The "Embeddings" represent the internal numerical or vector representations that the LLM uses to process and compare mathematical concepts and operations.

The "Downstream Task" serves as a test case, demonstrating how the entire pipeline, from pattern recognition to application, leads to a correct solution. The problem of the sport utility vehicle's value is a typical algebraic word problem that requires understanding proportional relationships and performing division. The diagram shows that the LLM, by following its learned process, can correctly identify that if the current value is 0.8 times the past value, then the past value can be found by dividing the current value by 0.8. This suggests that the LLM is not just pattern-matching but is capable of performing logical deductions and calculations based on the problem's structure. The overall process aims to make LLM reasoning more transparent and robust for mathematical tasks.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: LLM Prompting & Reasoning Process

### Overview

This diagram illustrates a process for Large Language Model (LLM) prompting and reasoning, showcasing how a dataset is used to generate pattern-wise context, which then informs a downstream task. The diagram highlights a step-by-step thinking process facilitated by the LLM, including seed demonstrations, pattern discovery, and final answer generation.

### Components/Axes

The diagram is divided into three main columns: "Dataset", "Pattern Wise Context", and "Downstream Task". Within each column, there are sections for questions (Q<sub>i</sub>) and answers (A<sub>i</sub>). A central section depicts the LLM processing flow, including K-Clustering, Embeddings, Pattern Discovery, and Prior Knowledge. Mathematical operations ("twice", "divide", "+", "=") are visually represented as connecting elements.

### Detailed Analysis

**Dataset (Left Column):**

* Q<sub>1</sub>: Liam is 16 years old now. Two years ago…

* Q<sub>2</sub>: Melanie, Sally, and Jessica each have…

* Q<sub>n</sub>: There were a total of 6 soccer games this…

* Seed Demonstrations:

* Q<sub>1</sub>: Liam is 16 years old now. Two years ago, Liam’s age was twice the age of Vince. How old is Vince now?

* A<sub>1</sub>: Let’s think step by step. 2 years ago, Liam’s age was twice the age of Vince. So, we can write an equation based on this information: Liam’s age 2 years ago = 2 x Vince’s age 2 years ago. We know that Liam’s current age is 16, so his age 2 years ago would have been 16 - 2 = 14. Now, we can substitute this value into our equation: 14 = 2 x Vince’s age 2 years ago. To solve for Vince’s age, we can divide both sides of the equation by 2: 7 = Vince’s age 2 years ago. So, Vince is currently 7 + 2 = 9 years old.

**Pattern Wise Context (Center Column):**

* Q<sub>1</sub>: Nancy has saved 4900 cents … (Reasoning Pattern 1)

* A<sub>1</sub>: … saved 4900 / 100 = 49 dollars …

* Q<sub>2</sub>: Tom was at the beach for 5 days … (Reasoning Pattern 2)

* A<sub>2</sub>: … a total of 7 x 5 = 35 seashells …

* Q<sub>k</sub>: Tim’s cat had kittens … (Reasoning Pattern k)

* A<sub>k</sub>: … hem away is 9 - 3 = 6 …

* K-Clustering: Visual representation of clustering with labeled points.

* Embeddings: Visual representation of embeddings.

* Pattern Discovery: Visual representation of pattern discovery.

* Prior Knowledge: Visual representation of prior knowledge.

* Mathematical Operations: "twice", "divide", "+", "=" are visually connected.

**Downstream Task (Right Column):**

* Question: The value of a sport utility vehicle this year is 16,000 dollars, which is 0.8 of what its value was last year. How much is the value of the vehicle last year?

* Final Answer: The problem tells us that the value of the sport utility vehicle this year is $16,000, which is 0.8 times its value last year. This means that the value last year is $16,000 / 0.8 = $20,000. The answer is $20,000.

**LLM Prompting Flow (Central Area):**

* "Let's think step by step" is prominently displayed.

* "LLM Prompting" is labeled above the flow.

* "7+2=9 years old" is displayed at the bottom.

### Key Observations

* The diagram demonstrates a multi-step reasoning process.

* The "Pattern Wise Context" section shows examples of different reasoning patterns.

* The downstream task involves a simple division problem.

* The LLM is presented as a central component facilitating the reasoning process.

* The diagram uses visual cues (arrows, connecting lines) to illustrate the flow of information.

### Interpretation

The diagram illustrates a methodology for improving LLM performance by providing structured context and guiding the model through a step-by-step reasoning process. The "Dataset" provides initial examples, the "Pattern Wise Context" extracts underlying reasoning patterns, and the "Downstream Task" applies these patterns to solve a new problem. The LLM acts as the engine for this process, leveraging techniques like K-Clustering and embeddings to identify and apply relevant knowledge. The inclusion of mathematical operations as visual elements suggests that the LLM is capable of performing quantitative reasoning. The "Let's think step by step" prompt is a key element, encouraging the model to articulate its reasoning process, which can improve accuracy and transparency. The final answer demonstrates the successful application of this methodology to solve a practical problem. The diagram suggests a focus on teaching the LLM *how* to reason, rather than simply providing it with facts.
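The role of the "Let's think step by step" trigger in producing seed demonstrations can be sketched as follows. This is only an illustration under stated assumptions: `make_seed_demos`, the `Q:`/`A:` layout, and the `placeholder_model` stub are hypothetical, and no real model API is called.

```python
from typing import Callable, List, Tuple

TRIGGER = "Let's think step by step."

def make_seed_demos(
    questions: List[str],
    generate: Callable[[str], str],
) -> List[Tuple[str, str]]:
    """Pair each question with a rationale produced after the trigger phrase."""
    demos = []
    for question in questions:
        prompt = f"Q: {question}\nA: {TRIGGER}"
        rationale = generate(prompt)  # any text-generation callable
        demos.append((question, f"{TRIGGER} {rationale}"))
    return demos

def placeholder_model(prompt: str) -> str:
    # Stand-in for a real model call; returns a fixed placeholder string.
    return "<rationale produced by the model>"

questions = [
    "Liam is 16 years old now. Two years ago, Liam's age was twice the age "
    "of Vince. How old is Vince now?",
]
seed_demos = make_seed_demos(questions, placeholder_model)
print(seed_demos[0][1])
```

Passing the generator in as a callable keeps the sketch independent of any particular model or client library.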

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: LLM-Based Math Word Problem Solving Pipeline

### Overview

This image is a technical flowchart illustrating a pipeline for solving math word problems using a Large Language Model (LLM) enhanced with pattern recognition and clustering. The process flows from left to right, starting with a dataset of problems, extracting reasoning patterns, and applying them to solve a new downstream task. The diagram uses color-coded boxes and arrows to denote different stages and data flow.

### Components/Axes

The diagram is segmented into several key components, arranged spatially as follows:

1. **Left Column (Input & Demonstration):**

* **Top-Left (Blue Box):** `Dataset`. Contains example questions (Q₁, Q₂, ... Qₙ).

* **Bottom-Left (Orange Box):** `Seed Demonstrations`. Contains a detailed worked example (Q₁ and A₁) showing step-by-step reasoning.

* **Icon & Text:** An LLM robot icon with the text `Let's think step by step` points from the Dataset to the Seed Demonstrations.

2. **Center Column (Pattern Processing):**

* **Top-Center (Orange Box):** `Pattern Wise Context`. Contains multiple question-answer pairs (Q₁/A₁, Q₂/A₂, ... Qₖ/Aₖ), each labeled with a `(Reasoning Pattern X)`.

* **Middle-Center (Green Boxes):**

* `K-Clustering` (with a cluster icon).

* `Adaptive K` (with a square root of x icon).

* `Embeddings` (with a row of colored squares labeled: `twice`, `x`, `-`, `divide`, `÷`, `=`, `+`).

* **Bottom-Center (Purple & Teal Boxes):**

* `Pattern Discovery` containing `Prior Knowledge` (globe icon) and `LLM Prompting` (robot icon).

* `Task Patterns` containing example phrases: `twice the age of`, `divide both sides`, `7+2=9 years old`.

3. **Right Column (Application & Output):**

* **Top-Right (Green Box):** `Downstream Task`. Contains a `Pattern Wise Context` section (Q₁, A₁) and a new `Question` about a sport utility vehicle's value.

* **Bottom-Right (Green Box):** `Final Answer`. Contains the LLM's step-by-step solution to the downstream task question, culminating in the answer `$20,000` with a green checkmark.

* **Icons:** An LLM robot icon and a lightbulb icon are positioned between the Downstream Task and Final Answer boxes.

**Flow Arrows:** Orange arrows connect the major stages: Dataset -> Seed Demonstrations -> Pattern Wise Context -> Downstream Task -> Final Answer. Green arrows connect the pattern processing components (K-Clustering, Embeddings, Pattern Discovery) to the main flow.

### Detailed Analysis

**Textual Content Extraction:**

* **Dataset Box:**

* `Q₁: Liam is 16 years old now. Two years ago, ...`

* `Q₂: Melanie, Sally, and Jessica each have ...`

* `...`

* `Qₙ: There were a total of 6 soccer games this ...`

* **Seed Demonstrations Box:**

* `Q₁: Liam is 16 years old now. Two years ago, Liam's age was twice the age of Vince. How old is Vince now?`

* `A₁: Let's think step by step. 2 years ago, Liam's age was twice the age of Vince. So, we can write an equation based on this information: Liam's age 2 years ago = 2 x Vince's age 2 years ago. We know that Liam's current age is 16, so his age 2 years ago would have been 16 - 2 = 14. Now, we can substitute this value into our equation: 14 = 2 x Vince's age 2 years ago To solve for Vince's age, we can divide both sides of the equation by 2: 7 = Vince's age 2 years ago So, Vince is currently 7 + 2 = 9 years old.`

* `...`

* `Qₙ: ...`

* **Pattern Wise Context Box:**

* `Q₁: Nancy has saved 4900 cents ... (Reasoning Pattern 1) ↓`

* `A₁: ... saved 4900 / 100 = 49 dollars ...`

* `Q₂: Tom was at the beach for 5 days ... (Reasoning Pattern 2) ↓`

* `A₂: ... a total of 7 x 5 = 35 seashells ...`

* `...`

* `Qₖ: Tim's cat had kittens ... (Reasoning Pattern k) ↓`

* `Aₖ: ... hem away is 9 - 3 = 6 ...`

* **Embeddings Box:** Labels under colored squares: `twice`, `x`, `-`, `divide`, `÷`, `=`, `+`.

* **Task Patterns Box:** Phrases in dashed boxes: `twice the age of`, `divide both sides`, `7+2=9 years old`.

* **Downstream Task Box:**

* `Pattern Wise Context`

* `Q₁: ...`

* `A₁: (Reasoning Pattern 1)`

* `...`

* `Question:`

* `The value of a sport utility vehicle this year is 16,000 dollars , which is 0.8 of what its value was last year. How much is the value of the vehicle last year?`

* **Final Answer Box:**

* `The problem tells us that the value of the sport utility vehicle this year is $16,000, which is 0.8 times its value last year. This means that the value last year is $16,000 / 0.8 = $20,000. The answer is $20,000.`

### Key Observations

1. **Pattern-Centric Approach:** The core of the pipeline is the extraction and reuse of "Reasoning Patterns" (e.g., unit conversion, multiplication, subtraction) from solved examples.

2. **Adaptive Clustering:** The system uses `K-Clustering` and `Adaptive K` on problem `Embeddings` to group similar problems, suggesting it dynamically determines the number of relevant patterns to apply.

3. **Hierarchical Pattern Application:** Patterns are discovered from `Prior Knowledge` and `LLM Prompting`, stored as `Task Patterns`, and then injected as `Pattern Wise Context` into the prompt for the downstream task.

4. **Chain-of-Thought Emphasis:** The `Seed Demonstrations` and `Final Answer` explicitly show step-by-step reasoning, highlighted with red text for key operations and numbers.

5. **Visual Flow:** The process is linear for a single problem (left to right) but involves a feedback loop where pattern discovery (center-bottom) informs the context provided to the main solving pipeline.
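To make the clustering observation concrete, here is a minimal pure-Python sketch of k-means with a simple rule for choosing K. The diagram does not specify how "Adaptive K" works, so the selection rule (smallest K whose within-cluster error drops below a fraction of the K=1 error), the farthest-point initialisation, the toy 2-D points standing in for embeddings, and all function names are assumptions.

```python
from typing import List, Tuple

Point = Tuple[float, float]

def sq_dist(a: Point, b: Point) -> float:
    return (a[0] - b[0]) ** 2 + (a[1] - b[1]) ** 2

def init_centers(points: List[Point], k: int) -> List[Point]:
    """Deterministic farthest-point initialisation (an assumption of this sketch)."""
    centers = [points[0]]
    while len(centers) < k:
        centers.append(max(points, key=lambda p: min(sq_dist(p, c) for c in centers)))
    return centers

def kmeans(points: List[Point], k: int, iters: int = 20) -> Tuple[List[int], float]:
    """Plain Lloyd iterations; returns (labels, within-cluster squared error)."""
    centers = init_centers(points, k)
    labels = [0] * len(points)
    for _ in range(iters):
        labels = [min(range(k), key=lambda j: sq_dist(p, centers[j])) for p in points]
        for j in range(k):
            members = [p for p, label in zip(points, labels) if label == j]
            if members:
                centers[j] = (
                    sum(m[0] for m in members) / len(members),
                    sum(m[1] for m in members) / len(members),
                )
    error = sum(sq_dist(p, centers[label]) for p, label in zip(points, labels))
    return labels, error

def adaptive_k(points: List[Point], max_k: int = 5, keep: float = 0.1) -> Tuple[int, List[int]]:
    """Pick the smallest K whose error is at most `keep` times the K=1 error."""
    base_error = kmeans(points, 1)[1]
    labels: List[int] = []
    for k in range(1, max_k + 1):
        labels, error = kmeans(points, k)
        if error <= keep * base_error:
            return k, labels
    return max_k, labels

# Toy stand-ins for rationale embeddings: three well-separated groups.
points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10), (20, 0), (20, 1), (21, 0)]
chosen_k, labels = adaptive_k(points)
print(chosen_k, labels)
```

One demonstration per resulting cluster could then be selected as a representative of that reasoning pattern, which is one plausible reading of how the clusters feed the pattern-wise context.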

### Interpretation

This diagram represents a sophisticated method for improving an LLM's mathematical reasoning. Instead of relying solely on its parametric knowledge, the system:

1. **Decomposes** problems into reusable reasoning patterns.

2. **Organizes** these patterns via clustering based on semantic embeddings.

3. **Contextualizes** the LLM by providing relevant patterns (`Pattern Wise Context`) alongside the new problem.

The **key innovation** is the structured, adaptive retrieval of reasoning strategies. The `Adaptive K` component is particularly notable, as it implies the system can decide how many and which patterns are relevant for a given new problem, moving beyond a static few-shot prompt. The red-highlighted text in the examples explicitly marks the mathematical operations and results that make up each pattern, which the model is expected to reproduce from context.

The pipeline demonstrates a move towards more **modular and interpretable AI problem-solving**. By isolating "Task Patterns," the system's reasoning steps become more transparent and potentially editable. The final answer is not just a number but a justified solution that mirrors the structure of the seed demonstrations, indicating successful pattern transfer.
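The "Contextualizes" step described above amounts to assembling a few-shot prompt from the pattern-wise demonstrations and the new question. The sketch below shows one way this could look; the `Q:`/`A:` layout and the function name `build_prompt` are assumptions, and the demonstration texts are kept truncated exactly as they appear in the diagram.

```python
from typing import List, Tuple

def build_prompt(demos: List[Tuple[str, str]], question: str) -> str:
    """Concatenate pattern-wise demonstrations, then append the new question."""
    blocks = [f"Q: {q}\nA: {a}" for q, a in demos]
    blocks.append(f"Q: {question}\nA:")
    return "\n\n".join(blocks)

# Demonstrations as shown in the Pattern Wise Context box (truncated in the source).
pattern_wise_context = [
    ("Nancy has saved 4900 cents ...", "... saved 4900 / 100 = 49 dollars ..."),
    ("Tom was at the beach for 5 days ...", "... a total of 7 x 5 = 35 seashells ..."),
]

question = (
    "The value of a sport utility vehicle this year is 16,000 dollars, which is "
    "0.8 of what its value was last year. How much is the value of the vehicle last year?"
)

prompt = build_prompt(pattern_wise_context, question)
print(prompt)
```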

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: LLM Reasoning Process for Mathematical Problem Solving

### Overview

The image depicts a multi-stage flowchart illustrating how a Large Language Model (LLM) processes and solves mathematical problems through pattern recognition, contextual reasoning, and step-by-step deduction. The diagram integrates text, symbolic representations, and visual metaphors to demonstrate the model's cognitive pipeline.

### Components/Axes

1. **Left Column (Input/Context)**

- **Dataset**: Contains truncated example questions (Q1, Q2, ..., Qn), including one about ages and one about soccer games.

- **Pattern Wise Context**: Shows reasoning patterns (e.g., division for currency conversion, multiplication for counting objects).

- **Seed Demonstrations**: Step-by-step solution for an age-related problem involving Liam and Vince.

2. **Center Column (Processing)**

- **K-Clustering & Adaptive K**: Symbolic representations of clustering algorithms with mathematical operations (√x, x).

- **Embeddings**: Boxes labeled "twice," "x," "divide," "=", and "+" representing mathematical operations.

- **Pattern Discovery**: Combines prior knowledge (globe icon) and LLM prompting (robot icon) to identify reasoning patterns.

3. **Right Column (Output)**

- **Downstream Task**: Presents a vehicle value problem with contextual information.

- **Final Answer**: Solution to the vehicle value problem ($20,000) with a green checkmark.

### Detailed Analysis

1. **Dataset Section**

- Q1: Liam's current age (16) and age two years ago.

- Q2: Three individuals (Melanie, Sally, Jessica) with unspecified quantities.

- Qn: Total soccer games (6) with unspecified distribution.

2. **Pattern Wise Context**

- Q1: Nancy's savings (4900 cents) converted to dollars (4900/100 = 49).

- Q2: Tom's beach visit duration (5 days) multiplied by seashells per day (7×5=35).

- Qk: Cat kittens problem with subtraction (9-3=6).

3. **Seed Demonstrations**

- Step-by-step solution for Liam's age problem:

- Current age: 16

- Two years ago: 16-2=14

- Equation: 14 = 2×Vince's age two years ago

- Solution: Vince's current age = 9

4. **Embeddings & Task Patterns**

- Mathematical operations visualized as boxes:

- "twice" (multiplication)

- "divide" (division)

- "=" (equality)

- "+" (addition)

- Task Patterns list:

- "twice the age of"

- "divide both sides"

- "7+2=9 years old"

5. **Final Answer**

- Vehicle value problem:

- Current value: $16,000 (0.8×last year's value)

- Calculation: $16,000 / 0.8 = $20,000

### Key Observations

1. The flowchart demonstrates hierarchical reasoning, moving from raw data (Dataset) to abstract patterns (Pattern Discovery) and finally to concrete solutions (Final Answer).

2. Mathematical operations are visually represented through labeled boxes in the Embeddings section.

3. The robot icon in LLM Prompting is an anthropomorphic stand-in for the model at that step.

4. The globe icon in Prior Knowledge implies integration of external information sources.

### Interpretation

This diagram illustrates how LLMs transform unstructured problems into structured reasoning chains. The Embeddings section acts as a symbolic representation of mathematical operations, while Task Patterns show the model's ability to identify and apply reasoning templates. The Final Answer demonstrates successful application of these patterns to solve novel problems, highlighting the model's capacity for:

- Pattern recognition across different problem types

- Contextual adaptation (e.g., unit conversion in Pattern Wise Context)

- Step-by-step logical deduction (Seed Demonstrations)

- Integration of prior knowledge with new information (Pattern Discovery)

The visual metaphors (robot, globe) suggest personification of the model's cognitive processes, while the mathematical operations emphasize the algorithmic nature of the reasoning. The flowchart effectively communicates the LLM's problem-solving methodology through a combination of textual examples and symbolic representations.
