## Diagram: LLM Prompting & Reasoning Process

### Overview

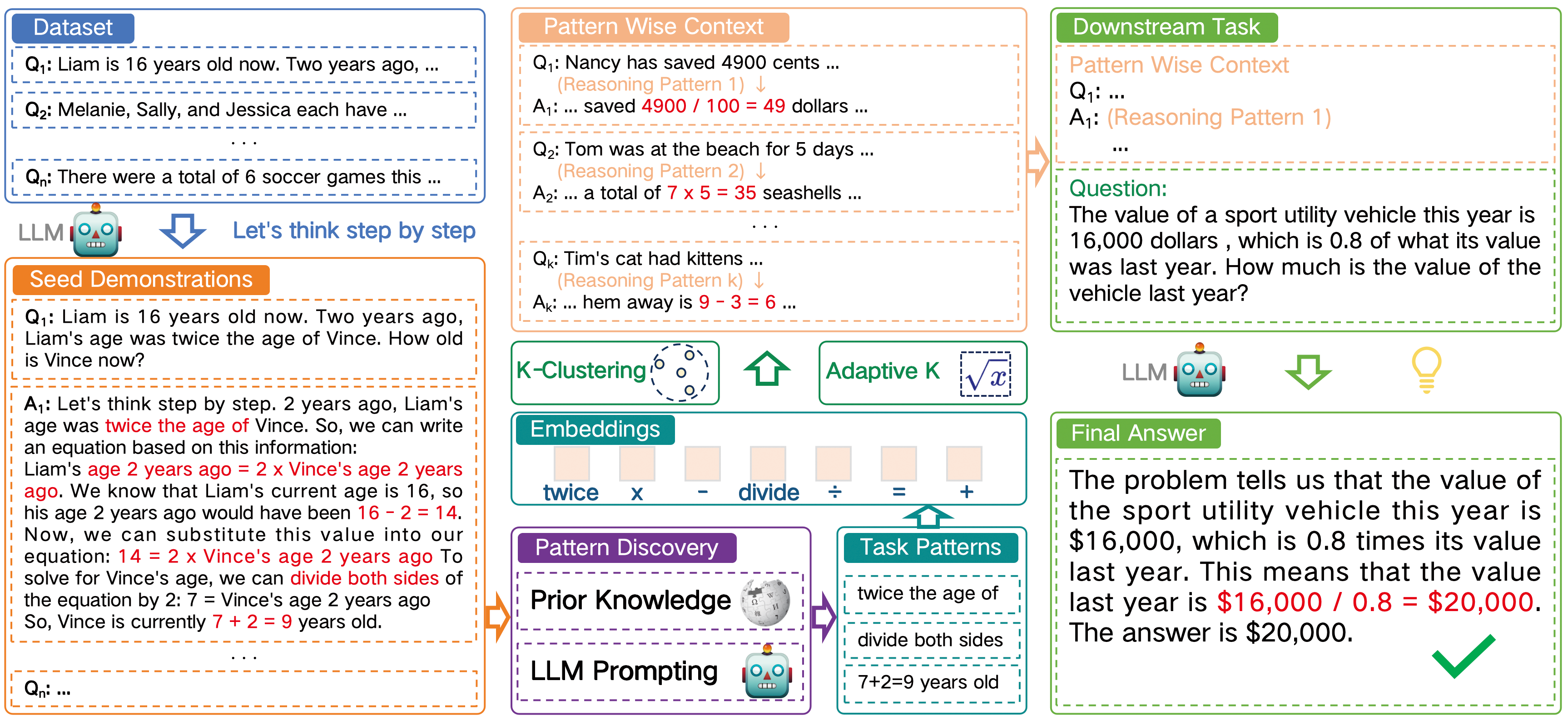

This diagram illustrates a process for Large Language Model (LLM) prompting and reasoning, showcasing how a dataset is used to generate pattern-wise context, which then informs a downstream task. The diagram highlights a step-by-step thinking process facilitated by the LLM, including seed demonstrations, pattern discovery, and final answer generation.

### Components

The diagram is divided into three main columns: "Dataset", "Pattern Wise Context", and "Downstream Task". Within each column, there are sections for questions (Q<sub>i</sub>) and answers (A<sub>i</sub>). A central section depicts the LLM processing flow, including K-Clustering, Embeddings, Pattern Discovery, and Prior Knowledge. Mathematical operations ("twice", "divide", "+", "=") are visually represented as connecting elements.

### Detailed Analysis or Content Details

**Dataset (Left Column):**

* Q<sub>1</sub>: Liam is 16 years old now. Two years ago…

* Q<sub>2</sub>: Melanie, Sally, and Jessica each have…

* Q<sub>3</sub>: There were a total of 6 soccer games this…

* Seed Demonstrations:

    * Q<sub>1</sub>: Liam is 16 years old now. Two years ago, Liam’s age was twice the age of Vince. How old is Vince now?

    * A<sub>1</sub>: Let’s think step by step. 2 years ago, Liam’s age was twice the age of Vince. So, we can write an equation based on this information: Liam’s age 2 years ago = 2 x Vince’s age 2 years ago. We know that Liam’s current age is 16, so his age 2 years ago would have been 16 - 2 = 14. Now, we can substitute this value into our equation: 14 = 2 x Vince’s age 2 years ago. To solve for Vince’s age, we can divide both sides of the equation by 2: 7 = Vince’s age 2 years ago. So, Vince is currently 7 + 2 = 9 years old.
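The arithmetic in the seed demonstration can be checked mechanically. The snippet below is only a verification sketch: the variable names are illustrative and do not appear in the diagram.

```python
# Re-trace the seed demonstration for Q1 one step at a time.
liam_now = 16
years_ago = 2

liam_then = liam_now - years_ago    # Liam's age two years ago: 16 - 2 = 14
vince_then = liam_then // 2         # Liam was twice Vince's age: 14 / 2 = 7
vince_now = vince_then + years_ago  # Vince's current age: 7 + 2 = 9

print(vince_now)
```

Each assignment mirrors one sentence of the rationale, which is the property a step-by-step demonstration is meant to have.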

**Pattern Wise Context (Center Column):**

* Q<sub>1</sub>: Nancy has saved 4900 cents … (Reasoning Pattern 1)

* A<sub>1</sub>: … saved 4900 / 100 = 49 dollars …

* Q<sub>2</sub>: Tom was at the beach for 5 days … (Reasoning Pattern 2)

* A<sub>2</sub>: … a total of 7 x 5 = 35 seashells …

* Q<sub>3</sub>: Tim’s cat had kittens … (Reasoning Pattern 3)

* A<sub>3</sub>: … hem away is 9 - 3 = 6 …

* K-Clustering: Visual representation of clustering with labeled points.

* Embeddings: Visual representation of embeddings.

* Pattern Discovery: Visual representation of pattern discovery.

* Prior Knowledge: Visual representation of prior knowledge.

* Mathematical Operations: "twice", "divide", "+", "=" are visually connected.
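One way to read the K-Clustering, Embeddings, and Pattern Discovery elements is as a pipeline that embeds each rationale, clusters the embeddings, and keeps one representative per cluster. The sketch below is a toy illustration of that idea, not the method behind the diagram: the operation-count embedding, the `OPS` vocabulary, the first-k centroid seeding, and the last three rationales are all assumptions made for this example.

```python
from math import dist

# Hypothetical operation vocabulary (assumption; the diagram shows
# only the labels "twice", "divide", "+", "=").
OPS = ["/", "x", "-", "+"]

def embed(rationale):
    """Toy embedding: count how often each operation token appears."""
    tokens = rationale.split()
    return [float(tokens.count(op)) for op in OPS]

def k_means(points, k, iters=10):
    """Plain k-means; the first k points seed the centroids (assumption)."""
    centroids = [list(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iters):
        # Assign every point to its nearest centroid.
        labels = [min(range(k), key=lambda c: dist(p, centroids[c]))
                  for p in points]
        # Move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centroids[c] = [sum(col) / len(members)
                                for col in zip(*members)]
    return labels, centroids

def pick_demonstrations(rationales, k):
    """Return the index of the rationale closest to each centroid."""
    points = [embed(r) for r in rationales]
    labels, centroids = k_means(points, k)
    picks = []
    for c in range(k):
        members = [i for i, lab in enumerate(labels) if lab == c]
        if members:
            picks.append(min(members,
                             key=lambda i: dist(points[i], centroids[c])))
    return picks

rationales = [
    "4900 / 100 = 49",  # arithmetic shown in the diagram
    "7 x 5 = 35",       # arithmetic shown in the diagram
    "9 - 3 = 6",        # arithmetic shown in the diagram
    "80 / 4 = 20",      # hypothetical
    "6 x 2 = 12",       # hypothetical
    "10 - 4 = 6",       # hypothetical
]
print(pick_demonstrations(rationales, k=3))
```

With this toy data each cluster collects the rationales that share an operation, so the selected demonstrations cover division, multiplication, and subtraction once each. A real pipeline would presumably use a learned sentence embedding instead of token counts.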

**Downstream Task (Right Column):**

* Question: The value of a sport utility vehicle this year is $16,000, which is 0.8 of what its value was last year. How much is the value of the vehicle last year?

* Final Answer: The problem tells us that the value of the sport utility vehicle this year is $16,000, which is 0.8 times its value last year. This means that the value last year is $16,000 / 0.8 = $20,000. The answer is $20,000.
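The final answer rests on a single rearrangement: if this year's value equals 0.8 times last year's value, then last year's value is this year's value divided by 0.8. A minimal check follows, using `fractions.Fraction` so the division is exact; the variable names are illustrative.

```python
from fractions import Fraction

value_this_year = 16_000
ratio = Fraction("0.8")  # this year's value as a fraction of last year's

# this_year = ratio * last_year  =>  last_year = this_year / ratio
value_last_year = value_this_year / ratio

print(value_last_year)
```

Multiplying the result by the ratio recovers the $16,000 starting value, which confirms the rearrangement.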

**LLM Prompting Flow (Central Area):**

* "Let's think step by step" is prominently displayed.

* "LLM Prompting" is labeled above the flow.

* "7 + 2 = 9 years old" is displayed at the bottom, matching the last step of the seed demonstration's answer.
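The prompting flow can be sketched as plain string assembly: the pattern-wise demonstrations come first, followed by the new question and the step-by-step trigger. The `Q:`/`A:` layout and the `build_prompt` helper are assumptions for illustration. The diagram does not specify a prompt template, and the demonstration text is truncated here exactly as it is in the diagram.

```python
TRIGGER = "Let's think step by step."

def build_prompt(demonstrations, question):
    """Join (question, rationale) pairs, then append the new question."""
    parts = [f"Q: {q}\nA: {a}" for q, a in demonstrations]
    parts.append(f"Q: {question}\nA: {TRIGGER}")
    return "\n\n".join(parts)

# One demonstration per reasoning pattern, truncated as in the diagram.
demos = [
    ("Nancy has saved 4900 cents …", "… saved 4900 / 100 = 49 dollars …"),
    ("Tom was at the beach for 5 days …", "… a total of 7 x 5 = 35 seashells …"),
    ("Tim’s cat had kittens …", "… hem away is 9 - 3 = 6 …"),
]

question = (
    "The value of a sport utility vehicle this year is $16,000, which is "
    "0.8 of what its value was last year. How much is the value of the "
    "vehicle last year?"
)

prompt = build_prompt(demos, question)
print(prompt)
```

The resulting string would be sent to the model, whose completion continues from the trigger phrase.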

### Key Observations

* The diagram demonstrates a multi-step reasoning process.

* The "Pattern Wise Context" section shows examples of different reasoning patterns.

* The downstream task involves a simple division problem.

* The LLM is presented as a central component facilitating the reasoning process.

* The diagram uses visual cues (arrows, connecting lines) to illustrate the flow of information.

### Interpretation

The diagram illustrates a method for improving LLM performance on reasoning tasks by selecting demonstrations according to their reasoning pattern and prompting the model to work step by step. The "Dataset" supplies the candidate questions and seed demonstrations, the "Pattern Wise Context" groups examples by the operation each rationale relies on (division, multiplication, subtraction), and the "Downstream Task" uses those examples as context for a new question. K-Clustering and embeddings appear as the mechanism for discovering the patterns; the diagram does not specify which embedding model or how many clusters are used.

The operation labels ("twice", "divide", "+", "=") drawn as connecting elements suggest that patterns are identified from the operations in a rationale rather than from the topic of the question. The "Let's think step by step" prompt elicits the intermediate reasoning, which can improve accuracy and makes the answer easier to check. In the downstream example, the final answer applies a single division ($16,000 / 0.8 = $20,000), consistent with the division pattern shown in the context. Overall, the diagram emphasizes showing the LLM *how* to reason rather than supplying it with facts.