# Technical Document Extraction: Long-Horizon Task Abstraction

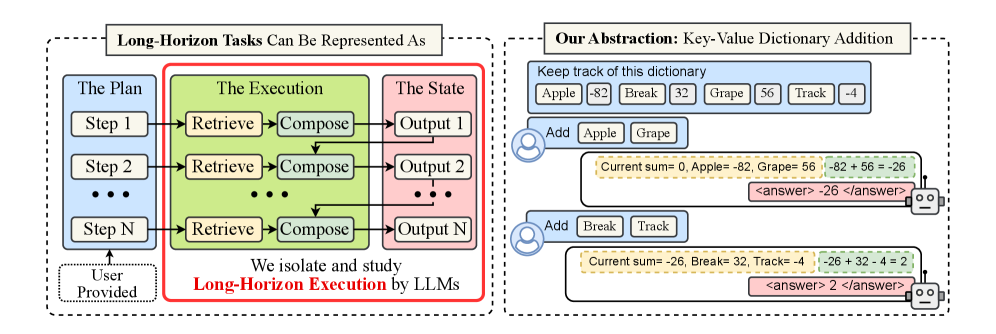

This image contains two primary panels enclosed in dashed borders, illustrating a conceptual framework for representing long-horizon tasks and a specific mathematical abstraction used to study them.

---

## Panel 1: Conceptual Framework

**Header:** Long-Horizon Tasks Can Be Represented As

### Component Isolation

This panel is divided into three vertical segments representing the flow of a task.

#### 1. The Plan (Blue Segment)

* **Input Source:** A dashed box at the bottom labeled "User Provided" points upward to this segment.
* **Components:**
    * **Step 1**: First task instruction.
    * **Step 2**: Intermediate task instruction.
    * **...**: Ellipsis indicating multiple intermediate steps.
    * **Step N**: Final task instruction.
* **Flow:** Each step in "The Plan" points horizontally to a corresponding "Retrieve" block in the next segment.

#### 2. The Execution (Green Segment)

* **Context:** This segment is enclosed in a thick red border with the caption: "We isolate and study **Long-Horizon Execution** by LLMs".
* **Internal Logic (Repeated for Steps 1 through N):**
    * **Retrieve**: Receives input from "The Plan".
    * **Compose**: Receives input from "Retrieve".
* **Inter-step Flow:** A downward arrow connects the "Compose" block of one step to the "Compose" block of the subsequent step, indicating state or context carry-over.

#### 3. The State (Red Segment)

* **Components:**
    * **Output 1**: Result of Step 1 execution.
    * **Output 2**: Result of Step 2 execution.
    * **...**: Ellipsis indicating intermediate outputs.
    * **Output N**: Final result.
* **Flow:** Each "Compose" block in the Execution segment points horizontally to its corresponding "Output" block.
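The three segments described above can be read as a simple loop: for each plan step, retrieve the relevant values, compose them with the state carried over from the previous step, and record the result as that step's output. The following is a minimal sketch of that reading, not an implementation taken from the figure. The names `execute_plan`, `knowledge`, `compose`, and `initial_state` are hypothetical and chosen only for illustration.

```python
from typing import Callable, Dict, List, Sequence, TypeVar

S = TypeVar("S")  # type of the running state (assumed; the figure does not specify one)
V = TypeVar("V")  # type of a retrieved value


def execute_plan(
    plan: Sequence[Sequence[str]],
    knowledge: Dict[str, V],
    compose: Callable[[S, List[V]], S],
    initial_state: S,
) -> List[S]:
    """Run every step of a user-provided plan and record the state after each step."""
    state = initial_state
    outputs: List[S] = []
    for step in plan:
        # Retrieve: look up what this plan step refers to.
        retrieved = [knowledge[key] for key in step]
        # Compose: combine the retrieved values with the state carried over
        # from the previous step (the downward arrow between Compose blocks).
        state = compose(state, retrieved)
        # State: the result of this step becomes Output i.
        outputs.append(state)
    return outputs


# Hypothetical usage with made-up keys and a summing compose function.
example_outputs = execute_plan(
    plan=[["a", "b"], ["c"]],
    knowledge={"a": 1, "b": 2, "c": 3},
    compose=lambda state, values: state + sum(values),
    initial_state=0,
)
print(example_outputs)  # [3, 6]
```

Passing `compose` as a parameter keeps the loop independent of any particular task, which mirrors how the panel separates the plan (given by the user) from the execution being studied.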

---

## Panel 2: Mathematical Abstraction

**Header:** Our Abstraction: Key-Value Dictionary Addition

This panel illustrates a specific task designed to test the execution logic described in Panel 1.

### Data Table: The Dictionary

A blue box at the top contains a key-value dictionary that the system must "Keep track of":

| Key | Value |
| :--- | ---: |
| Apple | -82 |
| Break | 32 |
| Grape | 56 |
| Track | -4 |

### Interaction Flow (User and AI Agent)

#### Interaction 1

* **User Input (User Icon):** "Add Apple Grape"
* **AI Reasoning (Robot Icon):**
    * **Retrieval/State Check (Yellow dashed box):** "Current sum= 0, Apple= -82, Grape= 56"
    * **Computation (Green dashed box):** "-82 + 56 = -26"
* **Final Output (Pink box):** `<answer> -26 </answer>`

#### Interaction 2

* **User Input (User Icon):** "Add Break Track"
* **AI Reasoning (Robot Icon):**
    * **Retrieval/State Check (Yellow dashed box):** "Current sum= -26, Break= 32, Track= -4" (Note: The current sum is carried over from the previous interaction).
    * **Computation (Green dashed box):** "-26 + 32 - 4 = 2"
* **Final Output (Pink box):** `<answer> 2 </answer>`
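The two interactions above can be reproduced with a short script. This is a sketch under stated assumptions: the dictionary values and the `<answer>` tag format come from the figure, while the function names (`run_turn`, `format_answer`, `run_dialogue`) and the parsing of "Add ..." instructions by whitespace are illustrative choices, not something the figure specifies.

```python
from typing import Dict, List

# Dictionary shown in the blue box of the figure.
DICTIONARY: Dict[str, int] = {"Apple": -82, "Break": 32, "Grape": 56, "Track": -4}


def run_turn(current_sum: int, instruction: str, dictionary: Dict[str, int]) -> int:
    """Handle one 'Add <key> <key> ...' instruction and return the new running sum."""
    command, *keys = instruction.split()
    if command != "Add":
        raise ValueError(f"unsupported instruction: {instruction!r}")
    values = [dictionary[key] for key in keys]  # retrieval step
    return current_sum + sum(values)            # composition step


def format_answer(value: int) -> str:
    """Wrap a value in the answer tags used in the figure."""
    return f"<answer> {value} </answer>"


def run_dialogue(instructions: List[str], dictionary: Dict[str, int]) -> List[str]:
    """Process instructions in order, carrying the running sum across turns."""
    running_sum = 0  # initial state
    answers: List[str] = []
    for instruction in instructions:
        running_sum = run_turn(running_sum, instruction, dictionary)
        answers.append(format_answer(running_sum))
    return answers


answers = run_dialogue(["Add Apple Grape", "Add Break Track"], DICTIONARY)
print(answers)  # ['<answer> -26 </answer>', '<answer> 2 </answer>']
```

The only state that survives between turns is `running_sum`, which is the carry-over highlighted in the second interaction.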

---

## Summary of Technical Information

* **Primary Objective:** To isolate and study how Large Language Models (LLMs) execute long-horizon tasks by maintaining state across multiple steps.
* **Task Logic:** The task involves a "Key-Value Dictionary Addition" where the model must retrieve values for specific keys and maintain a running sum across sequential user prompts.
* **Key Data Points:**
    * Initial State: Sum = 0.
    * Step 1: Apple (-82) + Grape (56) = -26.
    * Step 2: Previous Sum (-26) + Break (32) + Track (-4) = 2.