# Technical Diagram Analysis: Token Processing and KV Cache

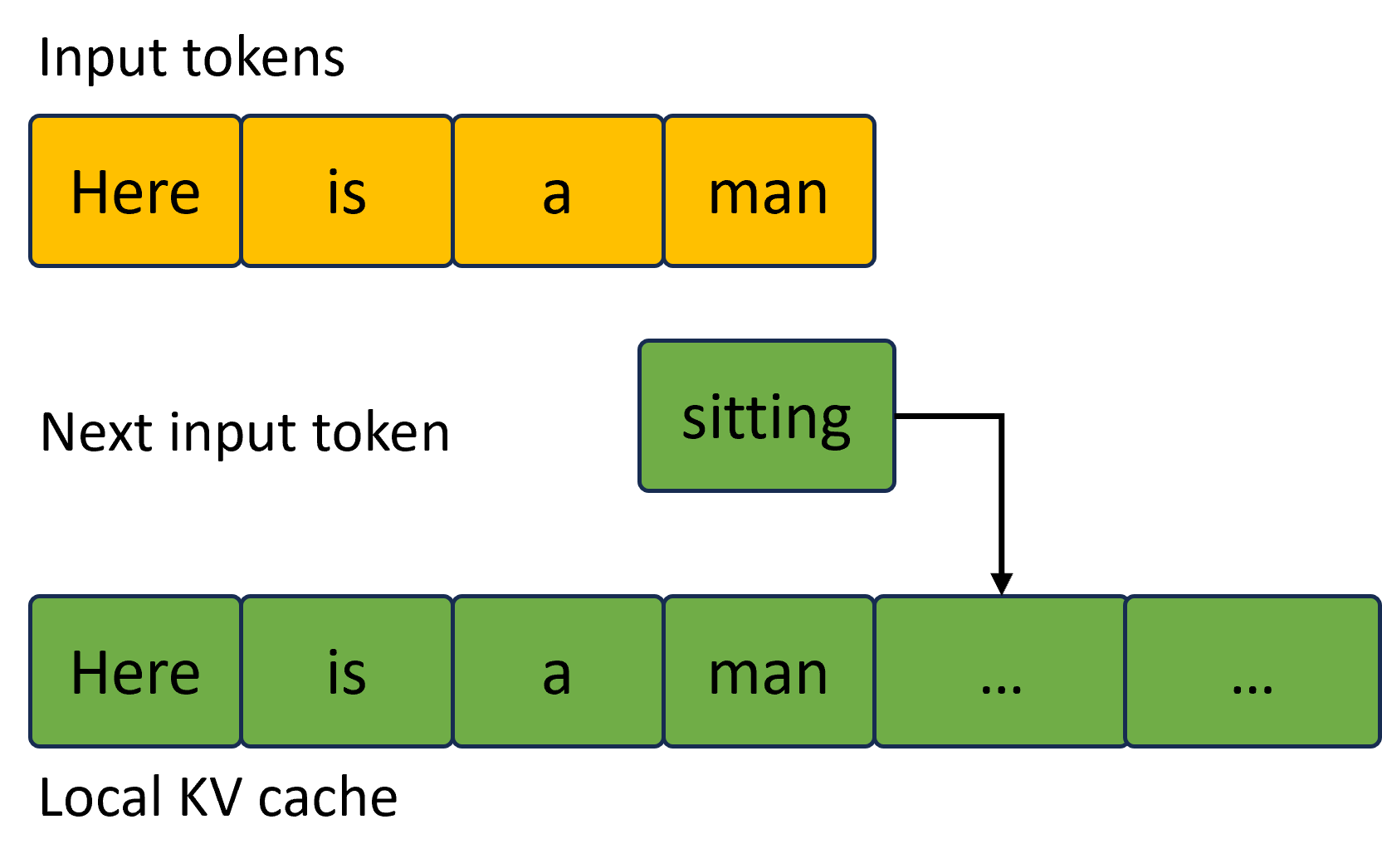

This diagram illustrates the relationship between input tokens, the next predicted token, and the local Key-Value (KV) cache in a Large Language Model (LLM) inference context.

## 1. Component Isolation

The image is organized into three horizontal layers:

* **Top Layer (Header):** The input token sequence.

* **Middle Layer (Transition):** The next input token (the model's prediction) and its flow into the cache.

* **Bottom Layer (Footer):** The state of the Local KV cache.

---

## 2. Detailed Content Extraction

### A. Input Tokens (Top Layer)

This section represents the initial prompt or context provided to the model.

* **Label:** "Input tokens"

* **Visual Representation:** Four contiguous yellow rectangular blocks.

* **Text Content (Left to Right):**

    1. `Here`
    2. `is`
    3. `a`
    4. `man`

### B. Next Input Token (Middle Layer)

This section represents the token generated by the model that will serve as the input for the next step of autoregressive generation.

* **Label:** "Next input token"

* **Visual Representation:** A single green rectangular block positioned to the right of the original input sequence.

* **Text Content:** `sitting`

* **Flow Indicator:** A black right-angled arrow originates from the right side of the "sitting" block and points downward to the fifth position in the KV cache.

### C. Local KV Cache (Bottom Layer)

This section represents the cache of per-token attention key and value tensors, which the model keeps so that earlier positions do not have to be recomputed at every decoding step.

* **Label:** "Local KV cache"

* **Visual Representation:** A sequence of six contiguous green rectangular blocks.

* **Text Content (Left to Right):**

    1. `Here`
    2. `is`
    3. `a`
    4. `man`
    5. `...` (Indicated by the arrow as the destination for the new token)
    6. `...`
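The slot layout above can be sketched as a small fixed-capacity buffer. This is a toy illustration, not how any particular inference engine implements its cache: the class name `LocalKVCache`, its `capacity` parameter, and the `append` method are hypothetical, and each slot holds a token string as a stand-in for the key and value tensors a real cache would store.

```python
from typing import List, Optional


class LocalKVCache:
    """Toy fixed-capacity cache with one slot per token position (hypothetical)."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        # Empty slots play the role of the `...` placeholders in the diagram.
        self.slots: List[Optional[str]] = [None] * capacity
        self.length = 0  # number of filled slots

    def append(self, token: str) -> int:
        """Fill the next free slot and return its 0-based index."""
        if self.length >= self.capacity:
            raise IndexError("cache is full")
        self.slots[self.length] = token
        self.length += 1
        return self.length - 1


# Six slots, as drawn in the diagram; the four prompt tokens fill the first four.
cache = LocalKVCache(capacity=6)
for tok in ["Here", "is", "a", "man"]:
    cache.append(tok)

# The new token lands in the fifth slot (index 4), where the arrow points.
position = cache.append("sitting")
```

After this runs, one slot is still empty, matching the final `...` block reserved for a future token.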

---

## 3. Diagram Logic and Flow

1. **Color Coding:**

    * **Yellow** represents the original "Input tokens."
    * **Green** represents the "Next input token" and the contents of the "Local KV cache."

2. **Process Flow:**

    * The model processes the initial input sequence: "Here is a man".
    * The model predicts the next token: "sitting".
    * The diagram shows the "sitting" token being appended to the existing sequence within the **Local KV cache**.
    * The KV cache contains the history of the sequence, including the original words (now colored green to indicate they are cached) and placeholders (`...`) for newly added and future tokens.
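The process flow can be sketched as a single-head attention step over cached keys and values. This is a minimal sketch under loud assumptions: `embed` and `project_kv` are hypothetical toy functions rather than a real model, the predicted token "sitting" is hard-coded instead of sampled, and the new token's embedding doubles as its query. The point is only that appending one key/value pair to the cache gives the same attention output as recomputing every position from scratch.

```python
import math
from typing import List, Tuple

Vector = List[float]


def embed(token: str) -> Vector:
    """Hypothetical deterministic toy embedding (not a trained model)."""
    return [(sum(ord(c) for c in token) % 7) / 7.0, len(token) / 10.0]


def project_kv(x: Vector) -> Tuple[Vector, Vector]:
    """Toy key and value projections with fixed, made-up weights."""
    key = [x[0] + x[1], x[0] - x[1]]
    value = [2.0 * x[0], 0.5 * x[1]]
    return key, value


def attend(query: Vector, keys: List[Vector], values: List[Vector]) -> Vector:
    """Scaled dot-product attention of one query over all keys and values."""
    scale = 1.0 / math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) * scale for key in keys]
    top = max(scores)  # subtract the max for a numerically stable softmax
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total for i in range(dim)]


prompt = ["Here", "is", "a", "man"]

# Prefill: compute and cache a key/value pair for every prompt token.
keys: List[Vector] = []
values: List[Vector] = []
for tok in prompt:
    key, value = project_kv(embed(tok))
    keys.append(key)
    values.append(value)

# Decode step: only the new token is projected; earlier entries are reused.
new_x = embed("sitting")
new_key, new_value = project_kv(new_x)
keys.append(new_key)
values.append(new_value)
cached_out = attend(new_x, keys, values)

# Reference: recompute keys and values for the whole sequence without a cache.
full = [project_kv(embed(tok)) for tok in prompt + ["sitting"]]
recomputed_out = attend(new_x, [kv[0] for kv in full], [kv[1] for kv in full])
```

Here `cached_out` and `recomputed_out` agree, but the cached path projected one token in the decode step instead of five.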