## Dual Line Charts Comparing Model Performance

### Overview

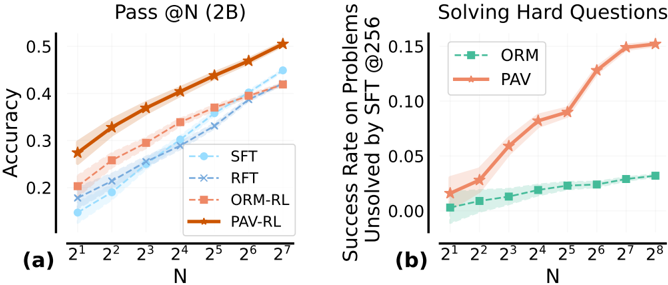

The image contains two side-by-side line charts, labeled (a) and (b), comparing the performance of different methods (likely language model training or decoding strategies) on two distinct metrics as a function of the number of samples or attempts, denoted by N. The charts use a logarithmic scale for the x-axis (N). Both charts include shaded regions around the lines, indicating confidence intervals or variance.

### Components/Axes

**Chart (a): "Pass @N (2B)"**

* **Title:** Pass @N (2B)

* **Y-axis:** Label: "Accuracy". Scale: Linear, from 0.2 to 0.5, with major ticks at 0.2, 0.3, 0.4, 0.5.

* **X-axis:** Label: "N". Scale: Logarithmic base 2. Ticks: 2¹, 2², 2³, 2⁴, 2⁵, 2⁶, 2⁷.

* **Legend:** Located in the bottom-right corner. Contains four entries:

1. `SFT` - Light blue line with circle markers.

2. `RFT` - Blue line with 'x' markers.

3. `ORM-RL` - Red-orange line with square markers.

4. `PAV-RL` - Orange line with star markers.

**Chart (b): "Solving Hard Questions"**

* **Title:** Solving Hard Questions

* **Y-axis:** Label: "Success Rate on Problems Unsolved by SFT @256". Scale: Linear, from 0.00 to 0.15, with major ticks at 0.00, 0.05, 0.10, 0.15.

* **X-axis:** Label: "N". Scale: Logarithmic base 2. Ticks: 2¹, 2², 2³, 2⁴, 2⁵, 2⁶, 2⁷, 2⁸.

* **Legend:** Located in the top-left corner. Contains two entries:

1. `ORM` - Green line with square markers.

2. `PAV` - Orange line with star markers.

### Detailed Analysis

**Chart (a) Data Points & Trends:**

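* **Trend Verification:** All four lines show a clear upward trend, indicating that accuracy increases with N. Based on the approximate endpoint values below, the RL methods do not rise the fastest: SFT (~+0.30) and RFT (~+0.27) gain the most between N=2¹ and N=2⁷, while PAV-RL and ORM-RL each gain about 0.22.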

* **PAV-RL (Orange, Stars):** Highest performance across all N. Starts at ~0.28 (N=2¹) and rises steadily to ~0.50 (N=2⁷).

* **ORM-RL (Red-Orange, Squares):** Second highest at small N. Starts at ~0.20 (N=2¹) and rises to ~0.42 (N=2⁷), which places it below both RFT and SFT at the largest N.

* **RFT (Blue, 'x'):** Third at small N. Starts at ~0.18 (N=2¹) and rises to ~0.45 (N=2⁷). At N=2⁷, it appears to slightly surpass ORM-RL.

* **SFT (Light Blue, Circles):** Lowest at small N. Starts at ~0.15 (N=2¹) and rises to ~0.45 (N=2⁷), converging with RFT and also finishing slightly above ORM-RL.
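As a quick consistency check, the approximate endpoint readings listed above can be turned into absolute gains and into PAV-RL's margin over the best competing method. The sketch below is plain Python over the eyeballed values from this description; the `readings` dictionary and the helper names are illustrative, not data exported from the figure. With these values, SFT and RFT gain the most over the plotted range, and PAV-RL's lead shrinks from about 0.08 at N=2¹ to about 0.05 at N=2⁷.

```python
# Approximate chart (a) readings from the descriptions above (eyeballed, not exact data).
# Each tuple is (accuracy at N=2**1, accuracy at N=2**7).
readings = {
    "PAV-RL": (0.28, 0.50),
    "ORM-RL": (0.20, 0.42),
    "RFT": (0.18, 0.45),
    "SFT": (0.15, 0.45),
}


def gains(readings):
    """Absolute accuracy gain from N=2**1 to N=2**7 for each method."""
    return {m: round(hi - lo, 2) for m, (lo, hi) in readings.items()}


def leader_margin(readings, index, leader="PAV-RL"):
    """Margin of `leader` over the best other method at one endpoint (0: N=2**1, 1: N=2**7)."""
    best_other = max(v[index] for m, v in readings.items() if m != leader)
    return round(readings[leader][index] - best_other, 2)


print(gains(readings))
print(leader_margin(readings, 0), leader_margin(readings, 1))
```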

**Chart (b) Data Points & Trends:**

* **Trend Verification:** Both lines show an upward trend. PAV exhibits a pronounced, accelerating (convex) curve, while ORM shows a shallow, near-linear increase.

* **PAV (Orange, Stars):** Shows dramatic improvement. Starts at ~0.02 (N=2¹), increases slowly until N=2³ (~0.06), then accelerates sharply, reaching ~0.15 at N=2⁸.

* **ORM (Green, Squares):** Shows modest, steady improvement. Starts at ~0.00 (N=2¹) and rises roughly linearly on the log-scaled axis to only ~0.03 at N=2⁸.
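The same arithmetic applied to the chart (b) readings shows how the PAV-ORM gap changes with N. This is a minimal sketch over the approximate values stated above, with N written out as 2, 8, and 256; the dictionary names are illustrative. The ratio is computed only at N=2⁸, because ORM's ~0.00 reading at N=2¹ would make a ratio meaningless there.

```python
# Approximate chart (b) readings from the description above (eyeballed, not exact data).
# Keys are N values: 2**1 = 2, 2**3 = 8, 2**8 = 256.
pav = {2: 0.02, 8: 0.06, 256: 0.15}
orm = {2: 0.00, 256: 0.03}

# Absolute gap in success rate at the smallest and largest plotted N.
gap_small = round(pav[2] - orm[2], 2)
gap_large = round(pav[256] - orm[256], 2)

# Relative gap only at N = 256; ORM's ~0.00 at N = 2 rules out a meaningful ratio there.
ratio_large = round(pav[256] / orm[256], 1)

print(f"gap at N=2: {gap_small}, gap at N=256: {gap_large}, ratio at N=256: {ratio_large}x")
```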

### Key Observations

1. **Performance Hierarchy:** In chart (a), the order at small N is PAV-RL > ORM-RL > RFT > SFT. The gaps narrow as N grows: by N=2⁷, RFT and SFT (both ~0.45) converge and slightly overtake ORM-RL (~0.42), while PAV-RL (~0.50) stays on top.

2. **Scaling Advantage:** PAV(-RL) leads in both charts, but its advantage scales differently in each. In chart (a), its margin over the next-best method narrows from roughly 0.08 at N=2¹ to roughly 0.05 at N=2⁷. In the "Solving Hard Questions" metric of chart (b), its gap over ORM widens from ~0.02 to ~0.12 as N increases.

3. **Hard Problem Specialization:** Chart (b) reveals a large performance gap between PAV and ORM on problems that the baseline SFT method fails to solve even with 256 samples. At N=2⁸, PAV's success rate on these hard problems (~0.15) is approximately 5 times ORM's (~0.03).

4. **Variance:** The shaded bands are relatively narrow for all lines, which suggests that the differences between methods exceed the run-to-run spread, although the figure does not state exactly what the bands represent.
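The y-axis label of chart (b), "Success Rate on Problems Unsolved by SFT @256", implies a two-step computation: first find the problems where none of 256 SFT samples is correct, then measure how often another method solves those problems within N samples. The sketch below assumes per-problem lists of boolean sample outcomes and counts a problem as solved if any of the first N samples is correct. The data layout, the toy data, and the function names are hypothetical, and the original evaluation may instead average an unbiased pass@N estimate over many samples.

```python
from typing import Dict, List

# Hypothetical layout: problem id -> per-sample correctness (True = correct).
Outcomes = Dict[str, List[bool]]


def unsolved_by_baseline(baseline: Outcomes, budget: int = 256) -> List[str]:
    """IDs of problems with no correct answer among the baseline's first `budget` samples."""
    return [pid for pid, samples in baseline.items() if not any(samples[:budget])]


def success_rate_on_subset(method: Outcomes, problem_ids: List[str], n: int) -> float:
    """Fraction of the given problems solved by at least one of the method's first n samples."""
    if not problem_ids:
        return 0.0
    solved = sum(any(method[pid][:n]) for pid in problem_ids)
    return solved / len(problem_ids)


# Toy data (not from the figure): 3 problems, 256 samples per method.
sft = {
    "p1": [False] * 256,
    "p2": [True] + [False] * 255,
    "p3": [False] * 256,
}
other = {
    "p1": [False, True] + [False] * 254,  # first correct sample is the 2nd one
    "p2": [True] * 256,
    "p3": [False] * 256,
}

hard = unsolved_by_baseline(sft)  # p1 and p3: SFT never succeeds on them
for n in (1, 2, 256):
    print(n, success_rate_on_subset(other, hard, n))
```

Because the hard subset is fixed by the baseline's 256-sample budget, any method's curve on this metric can only stay flat or rise as N grows, which matches the shape of both lines in chart (b).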

### Interpretation

These charts likely evaluate methods for improving the problem-solving capability of a 2-billion parameter (2B) language model through techniques like Reinforcement Learning (RL) or different sampling strategies. "Pass @N" measures the probability that at least one of N generated samples is correct.
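Pass@N is usually estimated rather than measured with exactly N samples. A common choice is the unbiased estimator introduced with the HumanEval benchmark: draw n ≥ N samples per problem, count the c correct ones, compute 1 − C(n−c, N)/C(n, N), and average over problems. The figure does not say which estimator was used, so treat this as an illustrative sketch; the function names and example counts are hypothetical.

```python
from math import comb
from typing import List, Tuple


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem with n samples, c of them correct.

    Computes 1 - C(n - c, k) / C(n, k): one minus the probability that a
    uniformly random size-k subset of the n samples contains no correct sample.
    """
    if not (0 <= c <= n and 1 <= k <= n):
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        return 1.0  # every size-k subset must include a correct sample
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results: List[Tuple[int, int]], k: int) -> float:
    """Average pass@k over problems, given one (n, c) pair per problem."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# Hypothetical example: 3 problems, 8 samples each, with 0, 1 and 4 correct.
results = [(8, 0), (8, 1), (8, 4)]
for k in (1, 2, 4, 8):
    print(k, round(mean_pass_at_k(results, k), 3))
```

Estimating from a larger pool of n samples and averaging over all size-N subsets gives lower variance than literally drawing N samples once, which is why curves such as those in chart (a) can be plotted smoothly across many values of N.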

The data suggest that the **PAV** method (and its RL variant PAV-RL) is more effective than the alternatives shown (**SFT**, **RFT**, **ORM**). Its advantage is not just in overall accuracy (chart a), where it leads at every N, but especially in its ability to solve *hard problems* that stump the baseline model (chart b). In chart (b), PAV's gap over ORM widens as N grows, so its benefit becomes more valuable the more attempts are allowed. One plausible explanation is that PAV explores the solution space more effectively or has learned a more robust reasoning strategy, although the charts alone cannot confirm the mechanism. In chart (a), supervised fine-tuning (SFT) and RFT (perhaps Rejection Fine-Tuning) converge at high N and, by N=2⁷, slightly exceed ORM-RL, which suggests similar asymptotic limits for these methods; only PAV-RL stays clearly above them across the plotted range. Taken together, the charts argue for the PAV approach, particularly on challenging problems where reaching the same success rate through brute-force sampling alone (increasing N) would be computationally expensive.