## Heatmap: Average Jensen-Shannon Divergence Across Model Layers

### Overview

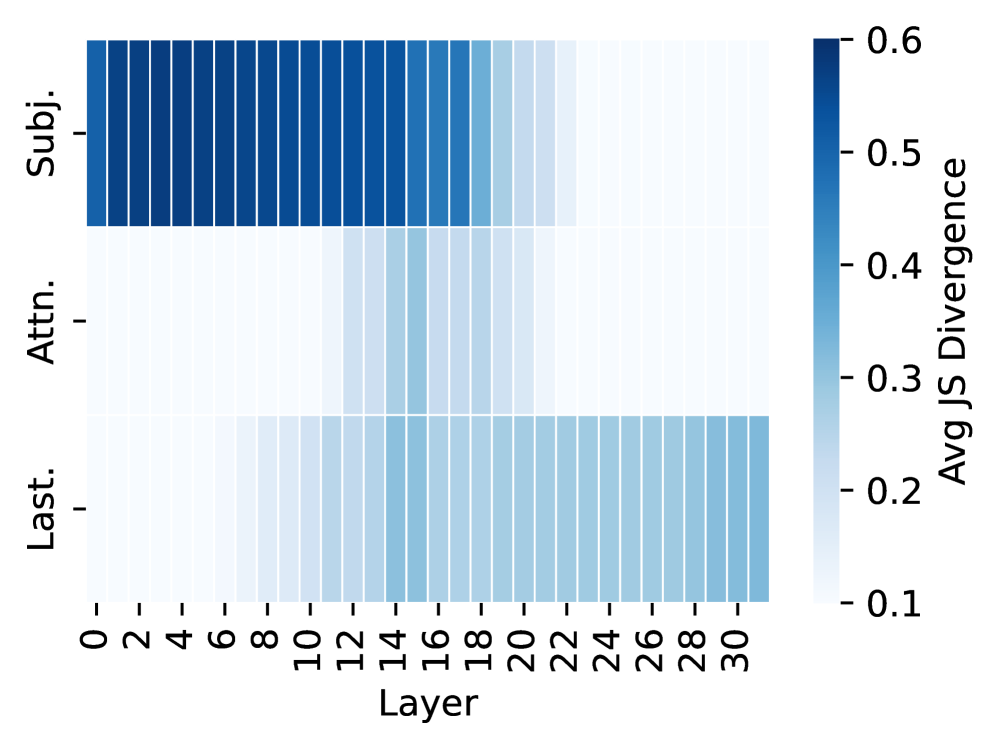

The image is a heatmap visualizing the average Jensen-Shannon (JS) Divergence across 31 layers (0-30) of a model for three distinct components or metrics. The heatmap uses a blue color gradient to represent the magnitude of divergence, with a corresponding color scale bar on the right.
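For reference, the quantity on the color scale can be sketched as follows. This is a minimal illustration that assumes the standard definition of JS divergence over discrete probability distributions, natural logarithms, and a plain mean over examples. The figure does not state which distributions are compared, which log base is used, or how the average is taken, and the function names here are hypothetical.

```python
import math


def kl_divergence(p, q):
    """KL(p || q) in nats; terms where p_i == 0 contribute nothing."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js_divergence(p, q):
    """Jensen-Shannon divergence between two discrete distributions.

    Assumes p and q have the same length and each sums to 1.
    """
    # Mixture distribution: m_i > 0 whenever p_i > 0 or q_i > 0,
    # so the KL terms below never divide by zero.
    m = [0.5 * (pi + qi) for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)


def average_js(pairs):
    """Mean JS divergence over (p, q) pairs, e.g. one pair per input example."""
    values = [js_divergence(p, q) for p, q in pairs]
    return sum(values) / len(values)
```

With natural logarithms the JS divergence is bounded above by ln 2 (about 0.693), which would be consistent with a color scale topping out at `0.6`; with base-2 logarithms the bound is 1.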

### Components/Axes

* **Y-Axis (Vertical):** Lists three categories, positioned on the left side of the chart.
  * `Subj.` (Top row)
  * `Attn.` (Middle row)
  * `Last.` (Bottom row)

* **X-Axis (Horizontal):** Labeled "Layer" at the bottom center. It displays numerical markers from 0 to 30, incrementing by 2 (0, 2, 4, ..., 30). Each integer layer from 0 to 30 is represented by a vertical column in the heatmap.

* **Color Scale (Legend):** Positioned on the right side of the chart. It is a vertical bar labeled "Avg JS Divergence". The scale ranges from a light blue/white at the bottom (value `0.1`) to a dark blue at the top (value `0.6`), with intermediate markers at `0.2`, `0.3`, `0.4`, and `0.5`.

### Detailed Analysis

The heatmap displays the following patterns for each row (component) across the layers (columns):

1. **Row: `Subj.`**

   * **Trend:** Starts with very high divergence in the earliest layers, then gradually fades to very low divergence in the later layers.

   * **Approximate Values:** Layers 0-12 show the darkest blue, indicating Avg JS Divergence values at or near the scale maximum (`~0.6`). The color begins to lighten noticeably around layer 14 (`~0.5`), continues to fade through layers 16-20 (`~0.4` to `~0.2`), and becomes very light (near `0.1`) from layer 22 onward.

2. **Row: `Attn.`**

   * **Trend:** Shows generally low divergence across most layers, with a subtle, localized increase in the middle layers.

   * **Approximate Values:** Layers 0-10 are very light, indicating values near `0.1`. A slight darkening is visible from approximately layer 12 to layer 20, suggesting a modest increase in divergence to `~0.2` to `~0.3`. The divergence returns to very low levels (`~0.1`) from layer 22 to layer 30.

3. **Row: `Last.`**

   * **Trend:** Starts with very low divergence in the early layers and increases steadily across the subsequent layers.

   * **Approximate Values:** Layers 0-6 are very light (`~0.1`). A gradual darkening begins around layer 8 (`~0.15`) and becomes more pronounced through the middle layers (e.g., layer 16 is `~0.3`, layer 22 is `~0.4`). The divergence continues to increase, with the final layers (28-30) showing a medium blue, corresponding to values of `~0.45` to `~0.5`.

### Key Observations

* **Inverse Relationship:** The `Subj.` and `Last.` rows exhibit a near-inverse relationship. `Subj.` divergence is highest in early layers and decays, while `Last.` divergence is lowest in early layers and grows.

* **Localized Activity:** The `Attn.` row shows a distinct, bounded region of slightly elevated divergence in the model's middle layers (approx. 12-20), unlike the broad trends of the other two rows.

* **Maximum Divergence:** The highest divergence values (`~0.6`) are found only in the `Subj.` row, in layers 0-12.

* **Layer 22 Transition:** Layer 22 appears to be a transition point: the `Subj.` row's divergence has nearly vanished (near `0.1`), the `Attn.` row's minor elevation ends, and the `Last.` row's divergence has risen to roughly `0.4`.
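The opposing trends of `Subj.` and `Last.` can be checked roughly with a correlation over the values quoted in this description. The sketch below is illustrative only: the numbers are the approximate color readings given above, not the underlying data, and it uses only layers where a value is stated for both rows. Where the text gives a range, one endpoint is taken as an assumption. The `pearson` helper is a hypothetical name.

```python
import math

# Approximate readings quoted in the description above, not measured data.
# Assumption: for quoted ranges, a single endpoint is used (e.g. layer 30 of
# Last. is taken as 0.5 from the stated "~0.45 to ~0.5").
layers = [0, 8, 16, 22, 30]
subj = [0.6, 0.6, 0.4, 0.1, 0.1]
last = [0.1, 0.15, 0.3, 0.4, 0.5]


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


r = pearson(subj, last)
print(f"Pearson r between Subj. and Last. at layers {layers}: {r:.2f}")
```

A strongly negative `r` would be consistent with the opposing trends described above. With only five hand-read points, it should be treated as a sanity check rather than a measurement.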

### Interpretation

This heatmap likely visualizes how different types of information or representations evolve within a deep neural network (e.g., a transformer model) across its layers.

* **`Subj.` (Subject/Subject Representation):** The high early-layer divergence suggests that subject-related information is highly variable, or "divergent", in the initial stages of the network. Its decay suggests that this representation becomes more stable or converges as information flows deeper.

* **`Attn.` (Attention):** The localized increase in the middle layers aligns with the hypothesis that attention mechanisms perform significant, focused computations in the intermediate processing stages of the model, before the representations are finalized.

* **`Last.` (Final Representation; the figure does not expand the label, and because the row spans all layers it may refer to the last token position rather than the last layer):** The steadily increasing divergence suggests that this representation becomes progressively more distinct or specialized layer by layer, accumulating information from earlier processing stages. The high divergence in later layers may reflect the model's preparation for a specific, fine-grained prediction task.

**Overall Narrative:** The data suggests a processing pipeline in which early layers work heavily on subject-related features (`Subj.`), middle layers engage in focused relational computations (`Attn.`), and later layers build up a complex, divergent final representation (`Last.`) suited to the model's ultimate objective. The opposing trends of `Subj.` and `Last.` could indicate a transformation from raw, variable input features to a refined, task-specific output.