EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

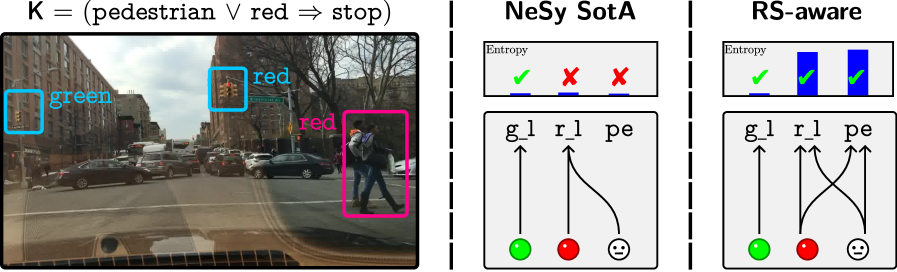

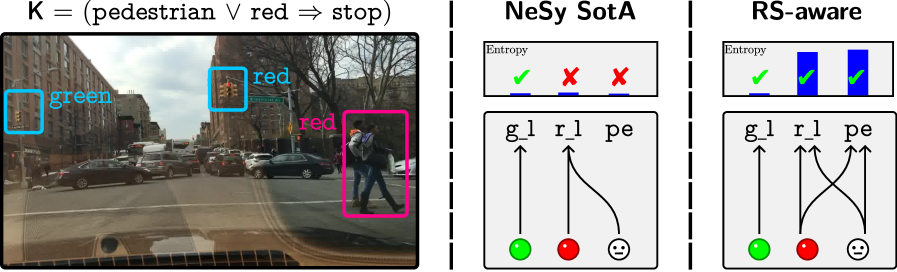

## Image Analysis: Scene Understanding and Reasoning

### Overview

The image presents a scene understanding and reasoning comparison between two approaches: "NeSy SotA" and "RS-aware". The image is divided into three sections. The left section shows a street scene with bounding boxes around objects, and an associated logical rule. The middle and right sections are diagrams representing the reasoning process of the two approaches, including entropy visualizations and connections between ground-level perceptions and higher-level concepts.

### Components/Axes

**Left Section (Street Scene):**

* **Image:** A photograph of a street scene with cars, buildings, traffic lights, and pedestrians.

* **Bounding Boxes:**

    * A cyan box around a green traffic light, labeled "green".

    * A cyan box around a red traffic light, labeled "red".

    * A magenta box around two pedestrians, labeled "red".

* **Logical Rule:** "K = (pedestrian ∨ red ⇒ stop)"

**Middle Section (NeSy SotA):**

* **Title:** "NeSy SotA"

* **Entropy Visualization:** A horizontal bar with three segments.

    * First segment: A green checkmark.

    * Second segment: A red "X".

    * Third segment: A red "X".

    * Label: "Entropy" above the bar.

* **Diagram:**

    * Labels: "g_l", "r_l", "pe" (likely representing green light, red light, and pedestrian, respectively)

    * Nodes: Green circle, red circle, and a smiley face icon.

    * Arrows: An arrow from the green circle to "g_l", an arrow from the red circle to "r_l", and a curved arrow from the smiley face to "r_l".

**Right Section (RS-aware):**

* **Title:** "RS-aware"

* **Entropy Visualization:** A horizontal bar with three segments.

    * First segment: A green checkmark.

    * Second segment: A blue bar.

    * Third segment: A blue bar.

    * Label: "Entropy" above the bar.

* **Diagram:**

    * Labels: "g_l", "r_l", "pe"

    * Nodes: Green circle, red circle, and a smiley face icon.

    * Arrows: An arrow from the green circle to "g_l", an arrow from the red circle to "r_l", and two crossing arrows, one from the smiley face to "r_l" and one from the red circle to "pe".

### Detailed Analysis or Content Details

**Left Section (Street Scene):**

* The street scene depicts a typical urban environment with traffic and pedestrians.

* The bounding boxes highlight the objects of interest for the reasoning task: traffic lights and pedestrians.

* The logical rule "K = (pedestrian ∨ red ⇒ stop)" states that the system should stop if there are pedestrians or the traffic light is red.
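The rule can be read as a propositional implication whose premise is the disjunction `pedestrian ∨ red`. A minimal Python sketch of that reading follows; the function names `rule_holds` and `required_action` are illustrative and do not come from the image.

```python
from itertools import product


def rule_holds(pedestrian: bool, red: bool, stop: bool) -> bool:
    """Return True when the assignment satisfies K = (pedestrian OR red) => stop."""
    # An implication A => B is logically equivalent to (not A) or B.
    return (not (pedestrian or red)) or stop


def required_action(pedestrian: bool, red: bool) -> str:
    """Action forced by K; the rule says nothing when its premise is false."""
    return "stop" if (pedestrian or red) else "unconstrained"


if __name__ == "__main__":
    # Print the full truth table of K over its three variables.
    for pedestrian, red, stop in product([False, True], repeat=3):
        print(pedestrian, red, stop, rule_holds(pedestrian, red, stop))
```

Only the assignments in which a pedestrian or a red light is present but the vehicle does not stop violate the rule; when neither is present, K leaves the action open.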

**Middle Section (NeSy SotA):**

* The entropy visualization shows a green checkmark for the first segment and red "X"s for the second and third segments. This suggests that the system correctly identifies the green light but fails to correctly identify the red light and pedestrian.

* The diagram shows that the green light is correctly associated with "g_l", the red light is correctly associated with "r_l", but the pedestrian is incorrectly associated with "r_l" instead of "pe".

**Right Section (RS-aware):**

* The entropy visualization shows a green checkmark for the first segment and blue bars for the second and third segments. This suggests that the system correctly identifies the green light and also identifies the red light and pedestrian.

* The diagram shows that the green light is associated with "g_l" and the red light with "r_l". In addition, crossing arrows link the pedestrian to "r_l" and the red circle to "pe", so this panel does not depict a clean one-to-one association between the pedestrian and "pe".

### Key Observations

* The image compares the performance of two scene understanding and reasoning approaches.

* "NeSy SotA" struggles to correctly identify the red light and pedestrian, while "RS-aware" performs better.

* The entropy visualizations provide a measure of uncertainty for each approach.

* The diagrams illustrate the connections between ground-level perceptions and higher-level concepts.
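To make the notion of entropy as an uncertainty measure concrete, here is a small sketch of Shannon entropy over a predicted concept distribution. The probability vectors are hypothetical illustrations, not values read from the figure, and the function name `shannon_entropy` is an assumption of this sketch.

```python
import math


def shannon_entropy(probs):
    """Shannon entropy (in bits) of a discrete distribution given as weights."""
    total = sum(probs)
    if total <= 0:
        raise ValueError("probabilities must sum to a positive value")
    entropy = 0.0
    for p in probs:
        q = p / total  # normalise so the weights sum to 1
        if q > 0:      # by convention, 0 * log(0) contributes nothing
            entropy -= q * math.log2(q)
    return entropy


if __name__ == "__main__":
    # Hypothetical distributions over the labels (g_l, r_l, pe).
    confident = [0.98, 0.01, 0.01]  # almost all mass on one label
    uncertain = [1 / 3, 1 / 3, 1 / 3]  # mass spread evenly
    print(shannon_entropy(confident))
    print(shannon_entropy(uncertain))
```

A distribution concentrated on one label yields entropy near zero, while a uniform distribution over three labels yields the maximum, `log2(3)` bits.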

### Interpretation

The image demonstrates the importance of robust scene understanding and reasoning for autonomous systems. The "NeSy SotA" approach, while being state-of-the-art, fails to correctly identify the red light and pedestrian, which could lead to dangerous situations. The "RS-aware" approach, on the other hand, performs better by correctly identifying the objects of interest and associating them with the appropriate concepts. This suggests that "RS-aware" is a more reliable approach for scene understanding and reasoning in this scenario. The logical rule "K = (pedestrian ∨ red ⇒ stop)" highlights the importance of safety in autonomous systems, as the system should stop if there are pedestrians or the traffic light is red.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: Traffic Scene Analysis with Uncertainty Quantification

### Overview

The image presents a comparison of two approaches – NeSy (State-of-the-Art) and RS-aware – for analyzing a traffic scene, focusing on uncertainty quantification related to pedestrian detection and traffic light recognition. The left side shows a real-world street scene with bounding boxes around detected objects. The right two sections illustrate the uncertainty representation for each approach using diagrams.

### Components/Axes

The image is divided into three main sections:

1. **Real-World Scene:** A photograph of a street with cars, pedestrians, and traffic lights. Bounding boxes with labels are overlaid on the image.

2. **NeSy SotA:** A diagram representing the uncertainty quantification of the NeSy approach. It includes a visual representation of entropy and a directed graph.

3. **RS-aware:** A diagram representing the uncertainty quantification of the RS-aware approach, similar in structure to the NeSy diagram.

The diagrams share the following components:

* **Entropy:** Represented by a bar graph.

* **Nodes:** Representing 'gl' (green light), 'rl' (red light), and 'pe' (pedestrian).

* **Edges:** Representing relationships between the nodes, with arrows indicating direction.

* **Node Indicators:** Green checkmarks and red crosses indicate confidence levels.

### Detailed Analysis or Content Details

**Real-World Scene:**

* A bounding box labeled "green" (blue outline) surrounds a traffic light displaying a green signal.

* Two bounding boxes labeled "red" (cyan outline) surround traffic lights displaying a red signal.

* A bounding box labeled "red" (magenta outline) surrounds a pedestrian.

* The equation at the top reads: K = (pedestrian ∨ red ⇒ stop). This suggests a rule-based system where the system should stop if a pedestrian or a red light is detected.

**NeSy SotA:**

* **Entropy:** The bar graph shows two bars. The first bar (corresponding to the green light) is short and marked with a green checkmark. The second bar (corresponding to the red light) is long and marked with a red cross.

* **Directed Graph:**

    * 'gl' (green light) node is connected to 'pe' (pedestrian) with a red line and a sad face.

    * 'rl' (red light) node is connected to 'pe' (pedestrian) with a red line and a sad face.

    * 'gl' (green light) node has an upward arrow.

    * 'rl' (red light) node has an upward arrow.

    * 'pe' (pedestrian) node has an upward arrow.

**RS-aware:**

* **Entropy:** The bar graph shows two bars. The first bar (corresponding to the green light) is tall and marked with a green checkmark. The second bar (corresponding to the red light) is short and marked with a green checkmark.

* **Directed Graph:**

    * 'gl' (green light) node is connected to 'pe' (pedestrian) with a black line and a neutral face.

    * 'rl' (red light) node is connected to 'pe' (pedestrian) with a black line and a neutral face.

    * 'gl' (green light) node has an upward arrow.

    * 'rl' (red light) node has an upward arrow.

    * 'pe' (pedestrian) node has an upward arrow.

### Key Observations

* The NeSy approach indicates high uncertainty regarding the red light (long red bar and red cross) and a negative correlation between the green light and pedestrian detection (red line and sad face).

* The RS-aware approach marks both the green and red lights with green checkmarks and shows a neutral relationship between the lights and pedestrian detection (black lines and neutral faces).

* The entropy bars in RS-aware are inverted compared to NeSy.

* The RS-aware approach appears to provide a more consistent and confident assessment of the scene.

### Interpretation

The image demonstrates a comparison between two methods for assessing uncertainty in a traffic scene. The NeSy approach seems to struggle with accurately identifying the red light, leading to high uncertainty. The RS-aware approach, on the other hand, provides a more confident and consistent assessment of both traffic lights. The directed graphs illustrate the relationships between the detected objects and the level of confidence in those detections. The use of checkmarks, crosses, and neutral faces provides a quick visual indication of the system's confidence. The equation at the top suggests that the system is designed to stop when a pedestrian or red light is detected, and the diagrams illustrate how each approach quantifies the uncertainty associated with these detections. The RS-aware approach appears to be more robust and reliable in this scenario. The difference in entropy values and the direction of the lines in the graphs suggest that the RS-aware method is better at resolving ambiguities and providing a more accurate representation of the scene.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Diagram: Comparison of Neural-Symbolic (NeSy) Approaches for Autonomous Driving Logic

### Overview

The image is a technical diagram comparing two approaches to implementing a logical rule for autonomous driving. The left panel shows a real-world driving scene with annotated objects. The middle and right panels contrast a "State-of-the-Art" (SotA) Neural-Symbolic (NeSy) method with a proposed "RS-aware" method (RS most plausibly abbreviates "reasoning shortcuts", a standard term in the NeSy literature), using entropy charts and connection diagrams to illustrate their performance and internal reasoning.

### Components/Axes

The image is divided into three vertical panels separated by dashed lines.

**1. Left Panel (Problem Definition):**

* **Header Text:** `K = (pedestrian ∨ red ⇒ stop)`

    * This is a logical rule: "If there is a pedestrian OR a red light, then stop."

* **Image Content:** A street scene from a vehicle's perspective.

* **Annotations:**

    * A cyan bounding box around a traffic light on the left, labeled `green` in cyan text.

    * A cyan bounding box around a traffic light further ahead, labeled `red` in cyan text.

    * A magenta bounding box around two pedestrians crossing the street, labeled `red` in magenta text. This label likely indicates the logical condition "red" (as in red light) is being triggered by the presence of pedestrians, or it's a mislabel highlighting the conflict.

**2. Middle Panel (NeSy SotA):**

* **Header Text:** `NeSy SotA`

* **Top Chart - "Entropy":**

    * A bar chart with three bars.

    * **Bar 1 (Left):** Short blue bar with a green checkmark (✓) above it.

    * **Bar 2 (Middle):** Taller blue bar with a red cross (✗) above it.

    * **Bar 3 (Right):** Taller blue bar (similar height to Bar 2) with a red cross (✗) above it.

* **Bottom Diagram:**

    * Three nodes at the top labeled: `g_l` (green light), `r_l` (red light), `pe` (pedestrian).

    * Three colored circles at the bottom: a green circle, a red circle, and a neutral face (😐) circle.

    * **Connections (Arrows):**

        * `g_l` → green circle.

        * `r_l` → red circle.

        * `pe` → red circle.

        * `pe` → neutral face circle.

**3. Right Panel (RS-aware):**

* **Header Text:** `RS-aware`

* **Top Chart - "Entropy":**

    * A bar chart with three bars.

    * **All Three Bars:** Are of equal, moderate height (shorter than the tall bars in the NeSy SotA chart). Each has a green checkmark (✓) above it.

* **Bottom Diagram:**

    * Same three nodes at the top: `g_l`, `r_l`, `pe`.

    * Same three colored circles at the bottom: green, red, neutral face.

    * **Connections (Arrows):**

        * `g_l` → green circle.

        * `r_l` → red circle.

        * `r_l` → neutral face circle.

        * `pe` → red circle.

        * `pe` → neutral face circle.

### Detailed Analysis

* **Logical Rule Application:** The left panel establishes the ground truth rule `K`. The scene contains a `green` light, a `red` light, and `pedestrians`. According to the rule, the presence of pedestrians (`pe`) should trigger the `stop` condition.

* **NeSy SotA Performance (Middle Panel):**

    * **Entropy Chart:** Shows low entropy (certainty) for the first condition (likely `g_l`), but high entropy (uncertainty) for the second and third conditions (likely `r_l` and `pe`). The red crosses indicate failure or high uncertainty in correctly classifying or reasoning about the red light and pedestrian.

    * **Connection Diagram:** Shows a direct, one-to-one mapping for `g_l` and `r_l`. The `pe` node connects *only* to the `red` circle and the `neutral` circle. This suggests the model is uncertain whether the pedestrian should map to the "red" (stop) condition or a neutral state, failing to confidently link it to the required logical outcome.

* **RS-aware Performance (Right Panel):**

    * **Entropy Chart:** Shows moderate, equal entropy across all three conditions, with green checkmarks indicating successful handling or correct classification.

    * **Connection Diagram:** Shows a more complex, cross-connected mapping. Both `r_l` and `pe` connect to *both* the `red` circle and the `neutral` circle. This indicates the model acknowledges the ambiguity or shared semantic role between a red light and a pedestrian in triggering the stop rule, distributing its reasoning across both relevant outputs.
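The two connection diagrams, as listed in this description, can be written down as small mappings and compared programmatically. The sketch below is only an encoding of the arrows reported above; the dictionary layout and the helper names `ambiguous_labels` and `shared_targets` are assumptions made for illustration.

```python
# Arrows as listed in this description: label -> set of circles it points to.
nesy_sota = {
    "g_l": {"green"},
    "r_l": {"red"},
    "pe": {"red", "face"},
}
rs_aware = {
    "g_l": {"green"},
    "r_l": {"red", "face"},
    "pe": {"red", "face"},
}


def ambiguous_labels(connections):
    """Labels that point to more than one circle."""
    return sorted(label for label, targets in connections.items() if len(targets) > 1)


def shared_targets(connections):
    """Circles that are reached from more than one label."""
    counts = {}
    for targets in connections.values():
        for target in targets:
            counts[target] = counts.get(target, 0) + 1
    return sorted(target for target, count in counts.items() if count > 1)


if __name__ == "__main__":
    for name, diagram in [("NeSy SotA", nesy_sota), ("RS-aware", rs_aware)]:
        print(name, ambiguous_labels(diagram), shared_targets(diagram))
```

Under this encoding, only `pe` fans out in the NeSy SotA diagram, whereas both `r_l` and `pe` fan out in the RS-aware diagram, which matches the "cross-connected" reading given above.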

### Key Observations

1. **Spatial Grounding:** Each entropy-chart symbol (✓/✗) is placed directly above its corresponding bar. The connection diagrams are positioned directly below their respective entropy charts.

2. **Trend Verification:** The NeSy SotA entropy trend is "low, high, high". The RS-aware entropy trend is "moderate, moderate, moderate". The connection complexity increases from NeSy SotA (mostly separate lines) to RS-aware (crossed lines).

3. **Component Isolation:** The left panel defines the problem. The middle and right panels are direct comparisons of solution methods, each with a performance metric (entropy) and a model of internal reasoning (connection diagram).

4. **Anomaly/Highlight:** The magenta label `red` on the pedestrians in the left image is a critical annotation. It visually forces the connection between the object "pedestrian" and the logical condition "red" (stop), which is the core challenge the diagrams explore.

### Interpretation

This diagram argues that a standard Neural-Symbolic (NeSy) approach struggles with the semantic ambiguity inherent in real-world rules. The rule `pedestrian ∨ red ⇒ stop` requires the system to understand that two different physical objects (a traffic light showing red, and a pedestrian) map to the same logical condition (`stop`). The NeSy SotA model shows high uncertainty (high entropy) when processing these ambiguous cases (`r_l` and `pe`), as its internal connections are too rigid.

The proposed "RS-aware" model is designed to handle this rule semantics explicitly. Its lower, uniform entropy indicates greater confidence. The cross-connected diagram reveals its strategy: it doesn't force a one-to-one mapping. Instead, it allows both `r_l` and `pe` to influence both the `red` (stop) and `neutral` outputs, better modeling the "OR" logic where either input can trigger the same outcome. The diagram suggests that explicitly modeling the semantics of logical rules (how inputs relate to outputs) improves the robustness and certainty of AI systems in safety-critical tasks like autonomous driving.
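The ambiguity discussed here can be illustrated with a toy enumeration: a concept extractor that reports every pedestrian as a red light still produces the right stop decision in every case, so the final decision alone cannot reveal the confusion. This is a sketch under stated assumptions: the stop label is taken to be exactly `pedestrian ∨ red`, and the `intended` and `shortcut` extractors are hypothetical constructions, not models from the figure.

```python
from itertools import product


def stop_label(pedestrian: bool, red: bool) -> bool:
    """Assumed labelling: stop exactly when the premise of K holds."""
    return pedestrian or red


def intended(ped_present: bool, red_present: bool) -> dict:
    """Extractor that reports each concept faithfully."""
    return {"pedestrian": ped_present, "red": red_present}


def shortcut(ped_present: bool, red_present: bool) -> dict:
    """Hypothetical extractor that reports pedestrians as red lights."""
    return {"pedestrian": False, "red": red_present or ped_present}


def label_agreement(extractor) -> bool:
    """True if the extractor yields the correct stop decision in every scene."""
    return all(
        stop_label(**extractor(p, r)) == stop_label(p, r)
        for p, r in product([False, True], repeat=2)
    )


def concept_agreement(extractor) -> bool:
    """True if the extractor reports the true concepts in every scene."""
    return all(
        extractor(p, r) == intended(p, r)
        for p, r in product([False, True], repeat=2)
    )


if __name__ == "__main__":
    print(label_agreement(shortcut), concept_agreement(shortcut))
```

The shortcut extractor agrees with the intended one on every stop decision while disagreeing on the concepts themselves, which is why a per-concept uncertainty signal such as entropy carries information that the decision does not.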

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Traffic Scenario Analysis with Decision Logic Diagrams

### Overview

The image presents a multi-part technical visualization analyzing traffic decision-making logic. The left section shows a street scene with annotated traffic elements, while the two right sections contain decision logic diagrams comparing the two approaches.

### Components/Axes

**Left Image (Traffic Scene):**

- Annotated elements:

    - Traffic lights:

        - Green light (blue box, left side)

        - Red light (blue box, center)

    - Pedestrian: Pink box (right side)

- Text annotations:

    - "K = (pedestrian ∨ red ⇒ stop)" (top-left)

    - "red" labels (blue and pink boxes)

**Right Diagrams:**

1. **NeSy SotA Diagram:**

    - Table:

        - Header: "Entropy"

        - Entries:

            - Green checkmark (✓)

            - Red X (×)

            - Red X (×)

    - Flow diagram:

        - Nodes:

            - Green circle (g_l)

            - Red circle (r_l)

            - Pedestrian symbol (pe)

        - Arrows:

            - g_l → pe

            - r_l → pe

2. **RS-aware Diagram:**

    - Table:

        - Header: "Entropy"

        - Entries:

            - Green checkmark (✓)

            - Green checkmark (✓)

            - Green checkmark (✓)

    - Flow diagram:

        - Nodes:

            - Green circle (g_l)

            - Red circle (r_l)

            - Pedestrian symbol (pe)

        - Arrows:

            - g_l → pe

            - r_l → pe

            - pe → pe (self-loop)

### Detailed Analysis

**Traffic Scene:**

- Urban street view from vehicle perspective

- Three traffic lights visible:

    - Left: Green (annotated)

    - Center: Red (annotated)

    - Right: Red (annotated)

- Pedestrian crossing street (pink box)

- Vehicles: Multiple cars in traffic lanes

**NeSy SotA Diagram:**

- Entropy table shows:

    - Green light: ✓ (valid)

    - Red light: × (invalid)

    - Pedestrian: × (invalid)

- Flow diagram shows:

    - Green and red lights influence pedestrian decision

    - No self-loop or feedback mechanism

**RS-aware Diagram:**

- Entropy table shows:

    - All elements (green, red, pedestrian): ✓ (valid)

- Flow diagram shows:

    - Bidirectional influence between elements

    - Pedestrian symbol has self-loop (pe → pe)

    - More complex interdependencies

### Key Observations

1. **Decision Logic:**

    - K rule enforces stop when pedestrian OR red light present

    - NeSy SotA only validates green/red lights

    - RS-aware validates all three elements (green, red, pedestrian)

2. **System Differences:**

    - NeSy SotA: Simpler, single-direction influence

    - RS-aware: More comprehensive, includes pedestrian awareness

    - RS-aware's self-loop suggests continuous monitoring

3. **Entropy Validation:**

    - Green light consistently valid in both systems

    - Red light invalid in NeSy SotA but valid in RS-aware

    - Pedestrian validation only in RS-aware

### Interpretation

The diagrams demonstrate two approaches to traffic decision-making:

1. **NeSy SotA** focuses on traffic light states but neglects pedestrian presence in entropy calculations, potentially leading to unsafe decisions when pedestrians are present.

2. The **RS-aware** system shows improved safety by:

    - Validating all three elements (green/red lights + pedestrian)

    - Implementing bidirectional influence between components

    - Including self-monitoring through pedestrian self-loop

3. The logical rule K (pedestrian OR red ⇒ stop) is foundational to both systems but implemented with different granularity. RS-aware's comprehensive validation aligns more closely with real-world safety requirements, suggesting better suitability for autonomous vehicle systems where pedestrian awareness is critical.
