## Diagram: Comparison of AI Model Responses to an "Unreasonable Question"

### Overview

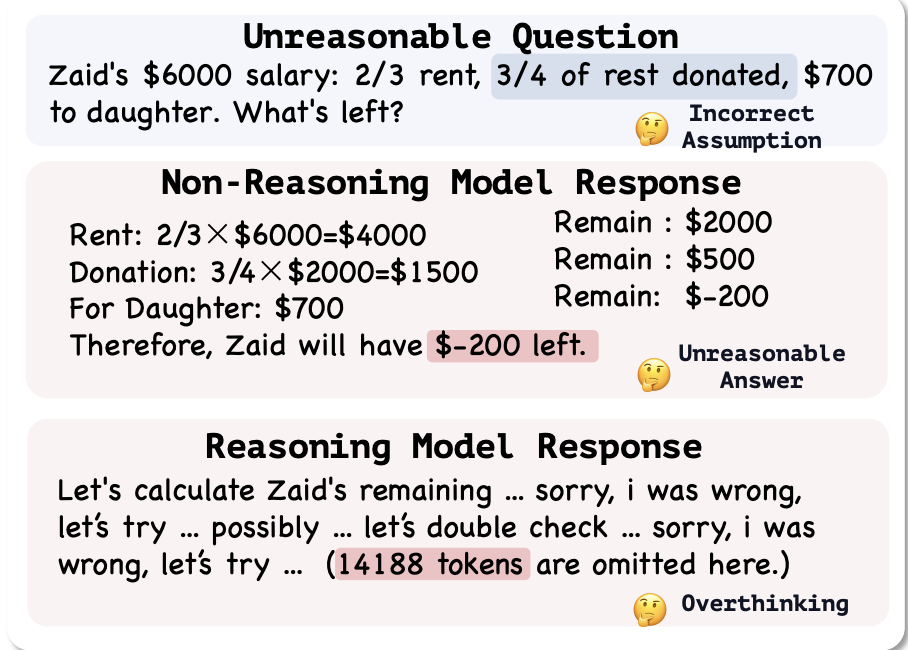

The image is a structured diagram comparing how two different types of AI models—a "Non-Reasoning Model" and a "Reasoning Model"—respond to the same mathematically flawed word problem. The diagram is divided into three horizontal sections, each with a title, content, and an emoji-based annotation.

### Components/Axes

The diagram has three primary components, arranged vertically:

1. **Top Section (Header/Problem Statement):** Titled "Unreasonable Question". Contains the problem text and an annotation.

2. **Middle Section:** Titled "Non-Reasoning Model Response". Contains a step-by-step calculation and a final answer, with an annotation.

3. **Bottom Section:** Titled "Reasoning Model Response". Contains a text snippet representing a model's internal monologue, with an annotation.

**Annotations (using 🤔 emoji):**

* Top-right of the first section: "Incorrect Assumption"

* Bottom-right of the second section: "Unreasonable Answer"

* Bottom-right of the third section: "Overthinking"

### Detailed Analysis / Content Details

**1. Top Section: Unreasonable Question**

* **Text:** "Zaid's $6000 salary: 2/3 rent, 3/4 of rest donated, $700 to daughter. What's left?"

* **Annotation:** 🤔 Incorrect Assumption

* **Analysis:** The problem is presented as a standard arithmetic word problem. The annotation suggests the premise or the question itself is flawed.

**2. Middle Section: Non-Reasoning Model Response**

This section shows a direct, linear calculation.

* **Calculation Steps:**

* `Rent: 2/3 × $6000 = $4000`

* `Donation: 3/4 × $2000 = $1500`

* `For Daughter: $700`

* **Intermediate Results (listed to the right):**

* `Remain : $2000` (after rent)

* `Remain : $500` (after donation)

* `Remain: $-200` (after giving to daughter)

* **Final Answer:** "Therefore, Zaid will have **$-200 left.**" (The "$-200" is highlighted with a pink background).

* **Annotation:** 🤔 Unreasonable Answer

* **Trend/Logic Check:** The model follows the operations sequentially: subtract 2/3 of the total, then subtract 3/4 of the remainder, then subtract a fixed amount. The trend leads to a negative final value, which is mathematically correct based on the operations but logically unreasonable in a real-world context (having negative money left).

**3. Bottom Section: Reasoning Model Response**

This section shows a text-based representation of a model's thought process.

* **Text:** "Let's calculate Zaid's remaining ... sorry, i was wrong, let's try ... possibly ... let's double check ... sorry, i was wrong, let's try ... (14188 tokens are omitted here.)"

* **Highlighted Text:** "(14188 tokens are omitted here.)" is highlighted with a pink background.

* **Annotation:** 🤔 Overthinking

* **Analysis:** The text depicts a model engaging in extensive self-correction and verification, leading to a very long internal process (14188 tokens) without arriving at or presenting a final answer. The trend is one of circular deliberation.

### Key Observations

1. **Problem Flaw:** The question is labeled as "Unreasonable," implying the scenario or the expected answer is nonsensical.

2. **Divergent Failure Modes:** The diagram contrasts two distinct failure modes:

* **Non-Reasoning Model:** Produces a mathematically consistent but contextually absurd answer (`$-200`) without recognizing the absurdity.

* **Reasoning Model:** Gets stuck in a loop of self-doubt and verification, consuming excessive computational resources (14188 tokens) without reaching a conclusion.

3. **Visual Emphasis:** The pink highlighting draws attention to the critical outputs: the unreasonable numerical answer and the excessive token count.

### Interpretation

This diagram serves as a critique or a case study on the limitations of different AI architectures when faced with ill-posed or "unreasonable" problems.

* **What the data suggests:** It demonstrates that both simplistic, direct-computation models and complex, self-reflective models can fail, but in fundamentally different ways. The non-reasoning model fails by being **literal and uncontextual**, while the reasoning model fails by being **inefficient and indecisive**.

* **How elements relate:** The three sections form a logical sequence: Problem -> Flawed Direct Solution -> Flawed Deliberative Process. The annotations ("Incorrect Assumption," "Unreasonable Answer," "Overthinking") create a narrative of cascading failure.

* **Notable implications:** The image highlights a key challenge in AI development: creating systems that can both **recognize the context and reasonableness of a problem** and **solve it efficiently without getting trapped in unproductive loops**. The omitted 14188 tokens symbolize the potential for massive resource waste in pursuit of a perfect answer to a bad question. It argues for a balance between reasoning capability and pragmatic problem-assessment.