## Diagram: Behavioral Frameworks in Reinforcement Learning

### Overview

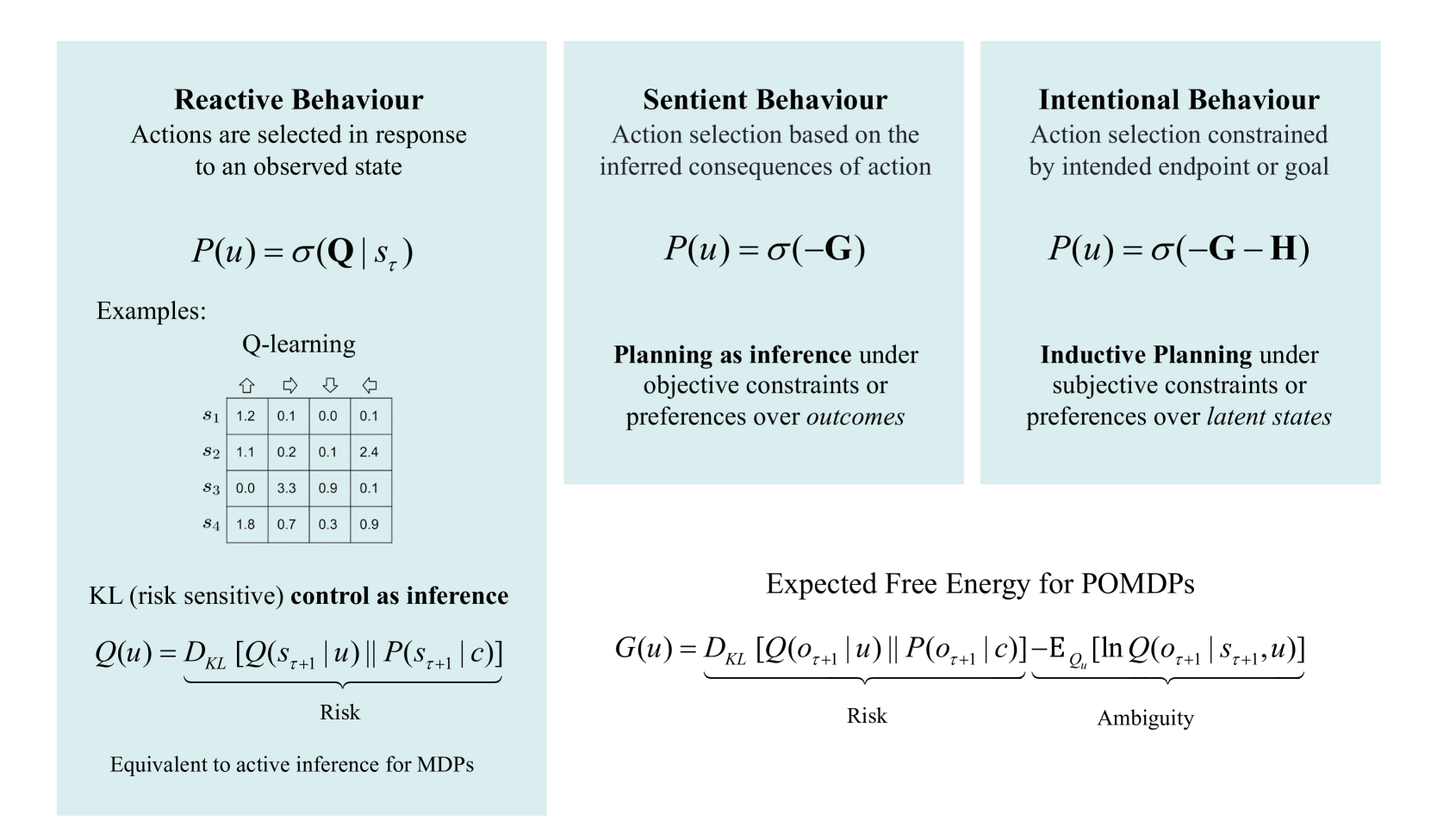

The image presents a comparative diagram of three behavioral frameworks in reinforcement learning: Reactive Behaviour, Sentient Behaviour, and Intentional Behaviour. Each framework is defined by its action selection process and an associated mathematical formulation. The diagram also includes a Q-learning example and equations for KL (risk-sensitive) control and Expected Free Energy.

### Components/Axes

The diagram is structured into three main columns, each representing a behavioral framework. Each column contains:

* **Title:** Indicating the type of behavior (Reactive, Sentient, Intentional).

* **Definition:** A textual description of the action selection process.

* **Mathematical Formulation:** An equation representing the framework.

* **Example/Extension:** Further elaboration or a related equation.

The bottom of the diagram includes a note stating equivalence to active inference for MDPs.

### Detailed Analysis

**1. Reactive Behaviour (Left Column)**

* **Definition:** "Actions are selected in response to an observed state."

* **Equation:** `P(u) = σ(Q | sₜ)`

* **Example: Q-learning**

* A 4×4 table of Q-values is presented, with one row per state (`s₁` to `s₄`) and one column per action (up, right, down, left). The approximate values are:

| State | Up | Right | Down | Left |
|-------|-----|-------|------|------|
| `s₁` | 1.2 | 0.1 | 0.0 | 0.1 |
| `s₂` | 1.1 | 0.2 | 2.4 | 0.1 |
| `s₃` | 0.0 | 3.3 | 0.9 | 0.1 |
| `s₄` | 1.8 | 0.7 | 0.9 | 0.9 |

* **Equation (KL, risk-sensitive control):** `Q(u) = DKL [Q(sₜ₊₁ | u) || P(sₜ₊₁ | c)]`

* **Label:** "Risk" is placed under the equation.

* **Note:** "Equivalent to active inference for MDPs"
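
The reactive rule `P(u) = σ(Q | sₜ)` can be illustrated with the Q-values transcribed above. The following is a minimal sketch, assuming that `σ` denotes a softmax (the diagram does not define it) and that the table columns are ordered up, right, down, left. The names `Q_TABLE`, `softmax`, and `action_distribution` are hypothetical and chosen for illustration only.

```python
import math

# Q-values transcribed from the diagram's table (rows: states, columns: actions).
ACTIONS = ["up", "right", "down", "left"]
Q_TABLE = {
    "s1": [1.2, 0.1, 0.0, 0.1],
    "s2": [1.1, 0.2, 2.4, 0.1],
    "s3": [0.0, 3.3, 0.9, 0.1],
    "s4": [1.8, 0.7, 0.9, 0.9],
}


def softmax(values):
    """Normalised exponential, shifted by the maximum for numerical stability."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def action_distribution(state):
    """P(u) = softmax(Q | s_t): action probabilities for one observed state.

    Assumption: sigma in the diagram is a softmax over the row of Q-values.
    """
    return dict(zip(ACTIONS, softmax(Q_TABLE[state])))


probs = action_distribution("s3")
best_action = max(probs, key=probs.get)  # action with the highest probability
```

Because the softmax is monotonic, the most probable action in each state is the one with the largest Q-value in that row; the distribution only depends on the currently observed state, which is what makes the scheme reactive.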

**2. Sentient Behaviour (Center Column)**

* **Definition:** "Action selection based on the inferred consequences of action."

* **Equation:** `P(u) = σ(–G)`

* **Extension:** "Planning as inference under objective constraints or preferences over outcomes."

* **Equation (Expected Free Energy):** `G(u) = DKL [Q(oₜ₊₁ | u) || P(oₜ₊₁ | c)] – E Qω [ln Q(oₜ₊₁ | sₜ₊₁ , u)]`

* **Labels:** "Risk" and "Ambiguity" are placed under the equation.
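
The decomposition of `G(u)` into risk and ambiguity can be made concrete with discrete distributions. This is a sketch under stated assumptions, not the diagram's own implementation: the likelihood is assumed not to depend on the action, the expectation is taken over the predicted joint distribution of states and outcomes, and the two-state, two-outcome model and all numbers are hypothetical.

```python
import math


def kl_divergence(q, p):
    """D_KL[q || p] for discrete distributions (terms with q_i = 0 contribute 0)."""
    return sum(qi * math.log(qi / pi) for qi, pi in zip(q, p) if qi > 0)


def expected_free_energy(q_states, likelihood, preferred_outcomes):
    """Return (risk, ambiguity) for one action; G(u) is their sum.

    q_states:           Q(s_{t+1} | u), predicted next-state distribution
    likelihood:         likelihood[s][o] = Q(o_{t+1} | s_{t+1})
    preferred_outcomes: P(o_{t+1} | c), prior preferences over outcomes
    """
    n_states = len(q_states)
    n_outcomes = len(preferred_outcomes)
    # Predicted outcomes: Q(o | u) = sum_s Q(o | s) Q(s | u)
    q_outcomes = [
        sum(q_states[s] * likelihood[s][o] for s in range(n_states))
        for o in range(n_outcomes)
    ]
    # Risk: divergence between predicted and preferred outcomes
    risk = kl_divergence(q_outcomes, preferred_outcomes)
    # Ambiguity: -E_Q[ln Q(o | s)], the expected entropy of the likelihood
    ambiguity = -sum(
        q_states[s] * sum(p * math.log(p) for p in likelihood[s] if p > 0)
        for s in range(n_states)
    )
    return risk, ambiguity


# Hypothetical toy model: state 0 yields crisp outcomes, state 1 yields noisy ones.
likelihood = [[0.9, 0.1], [0.5, 0.5]]
preferences = [0.8, 0.2]
risk_a, amb_a = expected_free_energy([1.0, 0.0], likelihood, preferences)
risk_b, amb_b = expected_free_energy([0.0, 1.0], likelihood, preferences)
G_a, G_b = risk_a + amb_a, risk_b + amb_b
```

In this toy setting the action leading to the noisy state has both higher risk and higher ambiguity, so it receives the larger `G` and therefore the lower probability under `P(u) = σ(–G)`.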

**3. Intentional Behaviour (Right Column)**

* **Definition:** "Action selection constrained by intended endpoint or goal."

* **Equation:** `P(u) = σ(–G – H)`

* **Extension:** "Inductive Planning under subjective constraints or preferences over latent states."
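
The effect of the extra term in `P(u) = σ(–G – H)` can be sketched numerically. The diagram does not define `H`, so the example below makes a loud assumption: `H` is treated as a non-negative penalty that is zero for actions compatible with the intended goal and large for actions that are not. The function name `intentional_policy` and all numbers are hypothetical.

```python
import math


def softmax(values):
    """Normalised exponential, shifted by the maximum for numerical stability."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def intentional_policy(G, H):
    """P(u) = softmax(-G - H), evaluated per action.

    Assumption: H is a non-negative penalty on actions that are
    incompatible with the intended goal (not defined in the diagram).
    """
    return softmax([-(g + h) for g, h in zip(G, H)])


G = [0.4, 0.9, 0.5]    # hypothetical expected free energy per action
H = [0.0, 0.0, 32.0]   # hypothetical penalty: the third action is ruled out by the goal

p_sentient = intentional_policy(G, [0.0, 0.0, 0.0])  # reduces to softmax(-G)
p_intentional = intentional_policy(G, H)
```

With `H` set to zero the rule reduces to the sentient case `σ(–G)`; with the penalty in place, the third action is effectively excluded while the relative ordering of the remaining actions is unchanged.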

### Key Observations

* The diagram presents a progression from simpler (Reactive) to more complex (Intentional) behavioral frameworks.

* Each framework builds upon the previous one, adding layers of inference and constraint.

* The use of the Kullback-Leibler divergence (`DKL`) in the equations suggests a focus on information-theoretic approaches to reinforcement learning.

* The Q-learning example provides concrete values for a simple state-action space.

* The inclusion of "Risk" and "Ambiguity" as separate components in the Sentient Behaviour framework highlights the importance of uncertainty in decision-making.

### Interpretation

The diagram illustrates a hierarchical view of behavior in reinforcement learning agents. Reactive behavior represents the most basic level, where actions are directly determined by the current state. Sentient behavior introduces the ability to anticipate consequences, while Intentional behavior adds goal-directedness. The mathematical formulations provide a formal way to represent these different levels of behavior.

The Q-learning example shows how the reactive framework can be implemented in practice: a table of state-action values determines the distribution over actions for each observed state. The equations for KL control and Expected Free Energy extend action selection to account for risk and ambiguity. The overall message is that intelligent behavior combines reactivity, anticipation, and goal-directedness, and that each of these aspects can be modeled formally with probabilistic and information-theoretic tools. The note about equivalence to active inference for MDPs suggests a broader theoretical connection to Bayesian brain theory.