## Multi-Chart Comparison: PKU Exam Performance

### Overview

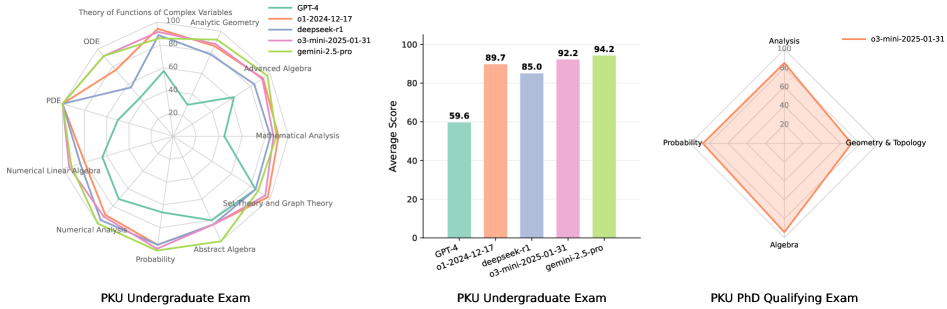

The image displays three charts comparing the performance of AI models on academic mathematics examinations from Peking University (PKU). The left and middle charts cover the "PKU Undergraduate Exam," while the right chart covers the "PKU PhD Qualifying Exam." The models compared are GPT-4, o1-2024-12-17, deepseek-r1, o3-mini-2025-01-31, and gemini-2.5-pro. The right chart shows only o3-mini-2025-01-31.

### Components/Axes

The image is composed of three distinct charts arranged horizontally.

**1. Left Chart: Radar Chart - PKU Undergraduate Exam**

* **Type:** Radar/Spider Chart

* **Title:** PKU Undergraduate Exam (bottom center)

* **Axes (12 subjects, clockwise from top):**

    * Theory of Functions of Complex Variables

    * Analytic Geometry

    * Advanced Algebra

    * Mathematical Analysis

    * Set Theory and Graph Theory

    * Abstract Algebra

    * Probability

    * Numerical Analysis

    * Numerical Linear Algebra

    * PDE (Partial Differential Equations)

    * ODE (Ordinary Differential Equations)

    * (The 12th axis label is partially obscured but appears to be another advanced mathematics topic.)

* **Scale:** Concentric circles marked at 0, 20, 40, 60, 80, 100.

* **Legend (Top Right):**

    * GPT-4 (Teal line)

    * o1-2024-12-17 (Orange line)

    * deepseek-r1 (Blue line)

    * o3-mini-2025-01-31 (Pink line)

    * gemini-2.5-pro (Light Green line)

**2. Middle Chart: Bar Chart - PKU Undergraduate Exam**

* **Type:** Vertical Bar Chart

* **Title:** PKU Undergraduate Exam (bottom center)

* **Y-Axis:** "Average Score" (Scale: 0 to 100, increments of 20).

* **X-Axis:** Model names.

* **Data Series (Bars, left to right):**

    * GPT-4 (Teal bar)

    * o1-2024-12-17 (Orange bar)

    * deepseek-r1 (Blue bar)

    * o3-mini-2025-01-31 (Pink bar)

    * gemini-2.5-pro (Light Green bar)

**3. Right Chart: Radar Chart - PKU PhD Qualifying Exam**

* **Type:** Radar/Spider Chart

* **Title:** PKU PhD Qualifying Exam (bottom center)

* **Axes (4 subjects):**

    * Analysis (Top)

    * Geometry & Topology (Right)

    * Algebra (Bottom)

    * Probability (Left)

* **Scale:** Concentric circles marked at 0, 20, 40, 60, 80, 100.

* **Legend (Top Right):**

    * o3-mini-2025-01-31 (Orange line)

### Detailed Analysis

**Left Chart: PKU Undergraduate Exam (Radar)**

* **Trend Verification:** The lines for o3-mini-2025-01-31 (pink) and gemini-2.5-pro (light green) form the outermost shapes, indicating the highest overall performance. The GPT-4 (teal) line is the innermost, indicating the lowest overall performance. The o1-2024-12-17 (orange) and deepseek-r1 (blue) lines are in the middle tier.

* **Data Points (Approximate values by subject and model):**

    * **Theory of Functions of Complex Variables:** o3-mini & gemini ~95, o1 ~85, deepseek ~80, GPT-4 ~70.

    * **Analytic Geometry:** o3-mini & gemini ~90, o1 ~85, deepseek ~80, GPT-4 ~65.

    * **Advanced Algebra:** o3-mini & gemini ~95, o1 ~90, deepseek ~85, GPT-4 ~60.

    * **Mathematical Analysis:** o3-mini & gemini ~90, o1 ~85, deepseek ~80, GPT-4 ~55.

    * **Set Theory and Graph Theory:** o3-mini & gemini ~85, o1 ~80, deepseek ~75, GPT-4 ~50.

    * **Abstract Algebra:** o3-mini & gemini ~90, o1 ~85, deepseek ~80, GPT-4 ~55.

    * **Probability:** o3-mini & gemini ~85, o1 ~80, deepseek ~75, GPT-4 ~60.

    * **Numerical Analysis:** o3-mini & gemini ~80, o1 ~75, deepseek ~70, GPT-4 ~50.

    * **Numerical Linear Algebra:** o3-mini & gemini ~85, o1 ~80, deepseek ~75, GPT-4 ~55.

    * **PDE:** o3-mini & gemini ~80, o1 ~75, deepseek ~70, GPT-4 ~45.

    * **ODE:** o3-mini & gemini ~85, o1 ~80, deepseek ~75, GPT-4 ~50.
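
The per-subject estimates can be tabulated to check which axes are weakest for each model and what an unweighted average over the legible axes looks like. The sketch below is a minimal Python illustration that assumes the eye-read approximations listed above. The obscured 12th axis is omitted, and o3-mini and gemini share one series because they were read jointly. The names `SUBJECTS`, `RADAR_ESTIMATES`, and `summarize` are hypothetical and exist only for this example.

```python
# Eye-read approximations from the left radar chart (NOT exact values).
# Assumption: the obscured 12th axis is omitted; o3-mini and gemini were read as one series.
SUBJECTS = [
    "Theory of Functions of Complex Variables",
    "Analytic Geometry",
    "Advanced Algebra",
    "Mathematical Analysis",
    "Set Theory and Graph Theory",
    "Abstract Algebra",
    "Probability",
    "Numerical Analysis",
    "Numerical Linear Algebra",
    "PDE",
    "ODE",
]

RADAR_ESTIMATES = {
    "o3-mini & gemini": [95, 90, 95, 90, 85, 90, 85, 80, 85, 80, 85],
    "o1": [85, 85, 90, 85, 80, 85, 80, 75, 80, 75, 80],
    "deepseek": [80, 80, 85, 80, 75, 80, 75, 70, 75, 70, 75],
    "GPT-4": [70, 65, 60, 55, 50, 55, 60, 50, 55, 45, 50],
}


def summarize(estimates: dict[str, list[int]]) -> dict[str, tuple[float, list[str]]]:
    """Return each model's unweighted mean and the subject(s) where it scores lowest."""
    summary = {}
    for model, scores in estimates.items():
        if len(scores) != len(SUBJECTS):
            raise ValueError(f"{model}: expected {len(SUBJECTS)} scores, got {len(scores)}")
        mean = round(sum(scores) / len(scores), 1)
        low = min(scores)
        # Keep every subject tied for the minimum, not just the first one.
        weakest = [subject for subject, value in zip(SUBJECTS, scores) if value == low]
        summary[model] = (mean, weakest)
    return summary


if __name__ == "__main__":
    for model, (mean, weakest) in summarize(RADAR_ESTIMATES).items():
        print(f"{model}: mean ~{mean}, weakest: {', '.join(weakest)}")
```

With these estimates, Numerical Analysis and PDE tie for the lowest axis in every series except GPT-4, whose single lowest axis is PDE. The unweighted means (about 87.3, 81.8, 76.8, and 55.9) fall several points below the bar chart's averages. The radar readings are therefore best treated as a rough relative ordering rather than as exact scores.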

**Middle Chart: PKU Undergraduate Exam (Bar)**

* **Data Points (Exact values displayed on bars):**

    * GPT-4: 59.6

    * o1-2024-12-17: 89.7

    * deepseek-r1: 85.0

    * o3-mini-2025-01-31: 92.2

    * gemini-2.5-pro: 94.2
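
The bar chart reports exact averages, so the performance hierarchy and the size of each gap can be reproduced directly. The following minimal Python sketch uses values transcribed from the bars above. The names `BAR_SCORES` and `rank_with_gaps` are hypothetical and exist only for this example.

```python
# Average scores transcribed from the middle bar chart (PKU Undergraduate Exam).
BAR_SCORES = {
    "GPT-4": 59.6,
    "o1-2024-12-17": 89.7,
    "deepseek-r1": 85.0,
    "o3-mini-2025-01-31": 92.2,
    "gemini-2.5-pro": 94.2,
}


def rank_with_gaps(scores: dict[str, float]) -> list[tuple[str, float, float]]:
    """Sort models by score (highest first) and report each one's gap to the leader."""
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    best = ranked[0][1]
    # Round the gap to one decimal place to hide floating-point subtraction noise.
    return [(model, score, round(best - score, 1)) for model, score in ranked]


if __name__ == "__main__":
    for model, score, gap in rank_with_gaps(BAR_SCORES):
        print(f"{model:<20} {score:5.1f}  ({gap:.1f} behind the leader)")
```

This puts gemini-2.5-pro first. It leads o3-mini-2025-01-31 by 2.0 points, o1-2024-12-17 by 4.5, deepseek-r1 by 9.2, and GPT-4 by 34.6.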

**Right Chart: PKU PhD Qualifying Exam (Radar)**

* **Trend Verification:** The single orange line for o3-mini-2025-01-31 forms a diamond shape skewed towards the top (Analysis) and right (Geometry & Topology).

* **Data Points (Approximate values for o3-mini-2025-01-31):**

    * Analysis: ~95

    * Geometry & Topology: ~90

    * Algebra: ~80

    * Probability: ~85
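
The same approach can summarize the single PhD-exam profile. This is a minimal sketch that assumes the approximate readings above. Because those readings are eye-estimated, the resulting mean is only indicative and should not be compared directly with the exact bar-chart averages. The names `PHD_ESTIMATES` and `profile` are hypothetical and exist only for this example.

```python
# Eye-read approximations for o3-mini-2025-01-31 on the PKU PhD Qualifying Exam radar (NOT exact).
PHD_ESTIMATES = {
    "Analysis": 95,
    "Geometry & Topology": 90,
    "Algebra": 80,
    "Probability": 85,
}


def profile(scores: dict[str, int]) -> tuple[float, str, str]:
    """Return the unweighted mean, the strongest area, and the weakest area."""
    mean = sum(scores.values()) / len(scores)
    strongest = max(scores, key=scores.get)
    weakest = min(scores, key=scores.get)
    return mean, strongest, weakest


if __name__ == "__main__":
    mean, strongest, weakest = profile(PHD_ESTIMATES)
    print(f"mean ~{mean:.1f}, strongest: {strongest}, weakest: {weakest}")
```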

### Key Observations

1. **Performance Hierarchy:** On the undergraduate exam, gemini-2.5-pro (94.2) and o3-mini-2025-01-31 (92.2) are the top performers, followed by o1-2024-12-17 (89.7), deepseek-r1 (85.0), and GPT-4 (59.6).

2. **Subject Strengths/Weaknesses:** All models show a relative dip in performance on "Numerical Analysis" and "PDE" in the radar chart. GPT-4's performance is notably weaker across all subjects compared to the other models.

3. **PhD Exam Performance:** o3-mini-2025-01-31 performed well on the undergraduate exam and is the only model shown on the PhD qualifying exam chart. It also performs strongly there, with its highest score (~95) in "Analysis" and its lowest (~80) in "Algebra."

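4. **Visual Consistency:** The color coding is consistent across the first two charts (e.g., the pink line for o3-mini corresponds to the pink bar with a score of 92.2). The right chart does not follow this scheme. It draws o3-mini-2025-01-31 in orange, which is the color assigned to o1-2024-12-17 in the other two charts.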

### Interpretation

This set of charts benchmarks recent AI models on demanding university-level mathematics examinations. The data suggests substantial progress in capability: the newest models (o3-mini-2025-01-31 and gemini-2.5-pro) score in the low 90s on the undergraduate exam. However, the charts include no human baseline, so they cannot show whether this approaches expert human performance. GPT-4 scores below every other listed model in every subject, which illustrates how quickly the field has advanced. The PhD qualifying exam chart tests a higher level of specialized knowledge, but it covers only o3-mini-2025-01-31. The charts also show that performance is not uniform across mathematical sub-disciplines: PDE and Numerical Analysis appear harder for every model shown. Together, these results offer a snapshot of current AI capability in mathematical reasoning and point to areas for further improvement.