TECHNICAL ASSET FINGERPRINT

7be59fbebd3b17f6104a658e

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

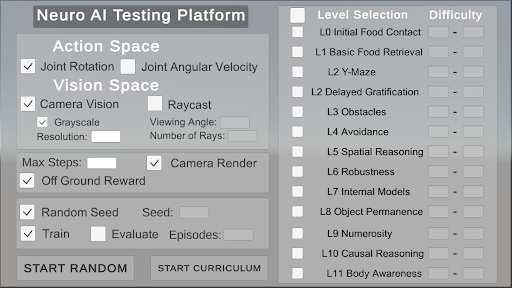

## GUI: Neuro AI Testing Platform

### Overview

The image is a screenshot of a GUI for a "Neuro AI Testing Platform". It features sections for configuring the action space, vision space, reward system, training parameters, and level selection. The interface allows users to define various aspects of the AI's environment and training regime.

### Components/Axes

* **Title:** Neuro AI Testing Platform

* **Action Space:**
    * Joint Rotation (Checkbox: Selected)
    * Joint Angular Velocity (Checkbox: Unselected)
* **Vision Space:**
    * Camera Vision (Checkbox: Selected)
    * Grayscale (Checkbox: Selected)
    * Resolution: (Text Input Field)
    * Raycast (Checkbox: Unselected)
    * Viewing Angle: (Text Input Field)
    * Number of Rays: (Text Input Field)
* **Reward:**
    * Max Steps: (Text Input Field)
    * Camera Render (Checkbox: Selected)
    * Off Ground Reward (Checkbox: Selected)
* **Training:**
    * Random Seed (Checkbox: Selected)
    * Seed: (Text Input Field)
    * Train (Checkbox: Selected)
    * Evaluate (Checkbox: Unselected)
    * Episodes: (Text Input Field)
* **Level Selection:**
    * Level Selection (Header)
    * Difficulty (Header)
    * L0 Initial Food Contact (Checkbox: Unselected, Difficulty: Text Input Field with "-")
    * L1 Basic Food Retrieval (Checkbox: Unselected, Difficulty: Text Input Field with "-")
    * L2 Y-Maze (Checkbox: Unselected, Difficulty: Text Input Field with "-")
    * L2 Delayed Gratification (Checkbox: Unselected, Difficulty: Text Input Field with "-")
    * L3 Obstacles (Checkbox: Unselected, Difficulty: Text Input Field with "-")
    * L4 Avoidance (Checkbox: Unselected, Difficulty: Text Input Field with "-")
    * L5 Spatial Reasoning (Checkbox: Unselected, Difficulty: Text Input Field with "-")
    * L6 Robustness (Checkbox: Unselected, Difficulty: Text Input Field with "-")
    * L7 Internal Models (Checkbox: Unselected, Difficulty: Text Input Field with "-")
    * L8 Object Permanence (Checkbox: Unselected, Difficulty: Text Input Field with "-")
    * L9 Numerosity (Checkbox: Unselected, Difficulty: Text Input Field with "-")
    * L10 Causal Reasoning (Checkbox: Unselected, Difficulty: Text Input Field with "-")
    * L11 Body Awareness (Checkbox: Unselected, Difficulty: Text Input Field with "-")
* **Buttons:**
    * START RANDOM
    * START CURRICULUM

### Detailed Analysis

The GUI is structured into distinct sections, each controlling a specific aspect of the AI testing environment.

* **Action Space:** The AI can control its "Joint Rotation". "Joint Angular Velocity" is an available option but is currently unselected.

* **Vision Space:** The AI uses "Camera Vision" and processes it in "Grayscale". Parameters like "Resolution", "Viewing Angle", and "Number of Rays" can be configured, but the values are not specified in the provided image.

* **Reward:** The AI receives a reward for being "Off Ground". The "Camera Render" option is enabled. The "Max Steps" parameter is configurable.

* **Training:** The training process uses a "Random Seed". The "Train" option is enabled, while "Evaluate" is disabled. The number of "Episodes" can be specified.

* **Level Selection:** A series of levels (L0 to L11) are listed, each with a checkbox for selection and a field to specify the difficulty. None of the levels are currently selected. The difficulty for each level is set to "-".

### Key Observations

* The GUI provides a comprehensive set of options for configuring an AI testing environment.

* The user has selected "Joint Rotation" for the action space and "Camera Vision" with "Grayscale" for the vision space.

* The AI receives a reward for being "Off Ground".

* The training process is configured to use a random seed and is currently set to train.

* No specific levels are selected for training.

### Interpretation

The "Neuro AI Testing Platform" GUI allows researchers and developers to set up and run experiments for training AI agents. The configuration options cover a range of aspects, from the agent's action and perception capabilities to the reward structure and training regime. The level selection feature suggests a curriculum learning approach, where the AI is gradually exposed to more complex tasks. The current configuration indicates a setup focused on joint rotation control, grayscale camera vision, and a reward for being off the ground, with the training process enabled but no specific levels selected.
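The configuration described above can be written down as a small data structure. The following is only a sketch: `PlatformConfig` and all of its field names are hypothetical, chosen to mirror the labels reported in this description, and are not taken from the platform's actual code. Empty text fields are modeled as `None`.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class PlatformConfig:
    """Hypothetical mirror of the GUI state reported in this description."""
    # Action space
    joint_rotation: bool = True
    joint_angular_velocity: bool = False
    # Vision space
    camera_vision: bool = True
    grayscale: bool = True
    raycast: bool = False
    resolution: Optional[int] = None        # text field, value not visible
    viewing_angle: Optional[float] = None   # text field, value not visible
    number_of_rays: Optional[int] = None    # text field, value not visible
    # Reward
    max_steps: Optional[int] = None         # text field, value not visible
    camera_render: bool = True
    off_ground_reward: bool = True
    # Training
    random_seed: bool = True
    seed: Optional[int] = None              # text field, value not visible
    train: bool = True
    evaluate: bool = False
    episodes: Optional[int] = None          # text field, value not visible
    # Level selection (nothing is ticked in the screenshot)
    selected_levels: List[str] = field(default_factory=list)

    def enabled_options(self) -> List[str]:
        """Names of all checkbox-style options that are switched on."""
        return [name for name, value in vars(self).items() if value is True]


config = PlatformConfig()
print(config.enabled_options())
```

Listing the enabled options this way makes it easy to compare one reading of the screenshot against another, field by field.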

EXPERT: gemma-3-27b-it-free VERSION 2

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Screenshot: Neuro AI Testing Platform Interface

### Overview

The image is a screenshot of a user interface for a "Neuro AI Testing Platform". It appears to be a configuration panel allowing users to set parameters for training and evaluating artificial intelligence agents. The interface is divided into sections for Action Space, Vision Space, general settings (Max Steps, Reward), and Level Selection. It features checkboxes, input fields, and buttons for controlling the simulation environment.

### Components/Axes

The interface is structured into the following sections:

* **Header:** "Neuro AI Testing Platform"

* **Action Space:** Contains checkboxes for "Joint Rotation" and "Joint Angular Velocity".

* **Vision Space:** Contains checkboxes for "Camera Vision" and "Raycast". Sub-options include "Grayscale" (checkbox), "Resolution" (input field), "Viewing Angle" (input field), and "Number of Rays" (input field).

* **Max Steps:** Contains a checkbox for "Off Ground Reward" and a checkbox for "Camera Render".

* **Random Seed:** Contains a checkbox for "Random Seed" and an input field labeled "Seed:".

* **Train/Evaluate:** Contains checkboxes for "Train" and "Evaluate", and an input field labeled "Episodes:".

* **Buttons:** "START RANDOM" and "START CURRICULUM".

* **Level Selection:** A list of levels labeled "L0" through "L11", each with a checkbox. The levels are:

    * L0 Initial Food Contact
    * L1 Basic Food Retrieval
    * L2 Y-Maze
    * L2 Delayed Gratification
    * L3 Obstacles
    * L4 Avoidance
    * L5 Spatial Reasoning
    * L6 Robustness
    * L7 Internal Models
    * L8 Object Permanence
    * L9 Numerosity
    * L10 Causal Reasoning
    * L11 Body Awareness

* **Difficulty:** A column of sliders next to the Level Selection list.

### Detailed Analysis

The interface presents a series of configurable options.

* **Action Space:** Both "Joint Rotation" and "Joint Angular Velocity" are unchecked by default.

* **Vision Space:** "Camera Vision" is checked, while "Raycast" is unchecked. "Grayscale" is unchecked. The "Resolution", "Viewing Angle", and "Number of Rays" input fields are empty.

* **Max Steps:** "Off Ground Reward" is checked, and "Camera Render" is checked.

* **Random Seed:** "Random Seed" is checked. The "Seed:" input field is empty.

* **Train/Evaluate:** "Train" and "Evaluate" are checked. The "Episodes:" input field is empty.

* **Level Selection:** All levels (L0-L11) are unchecked by default. The difficulty sliders are all set to their minimum value.

* **Buttons:** The buttons "START RANDOM" and "START CURRICULUM" are present.

### Key Observations

The interface is designed for controlling a simulation environment. The options allow for customization of the agent's action space, sensory input (vision), training parameters, and the complexity of the environment (level selection). The presence of both "Train" and "Evaluate" checkboxes suggests the platform supports both training and testing of AI agents. The "START RANDOM" and "START CURRICULUM" buttons indicate different approaches to training – random exploration versus a pre-defined curriculum.
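The screenshot does not show what the two buttons actually do, so the following is only one plausible reading, sketched under stated assumptions: "curriculum" is taken to mean running the selected levels in their listed order, and "random" to mean running them in a shuffled order. The function names and the shortened `LEVELS` list are hypothetical.

```python
import random
from typing import List, Optional

# Shortened, illustrative subset of the level list shown in the screenshot.
LEVELS = [
    "L0 Initial Food Contact",
    "L1 Basic Food Retrieval",
    "L2 Y-Maze",
    "L3 Obstacles",
]


def curriculum_order(selected: List[str]) -> List[str]:
    """Return the selected levels in the order they appear in LEVELS."""
    return [level for level in LEVELS if level in selected]


def random_order(selected: List[str], seed: Optional[int] = None) -> List[str]:
    """Return the selected levels shuffled; a fixed seed repeats the order."""
    order = curriculum_order(selected)
    random.Random(seed).shuffle(order)
    return order


selected = ["L3 Obstacles", "L0 Initial Food Contact", "L2 Y-Maze"]
print(curriculum_order(selected))
print(random_order(selected, seed=0))
```

Using a dedicated `random.Random(seed)` instance keeps the shuffle reproducible without touching the global random state, which matches the presence of a "Seed" field in the interface.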

### Interpretation

This interface is likely part of a larger system for developing and testing reinforcement learning agents. The configurable options allow researchers to experiment with different settings and observe their impact on agent performance. The level selection provides a way to gradually increase the complexity of the task, potentially following a curriculum learning approach. The "Neuro AI Testing Platform" name suggests a focus on neural network-based AI. The interface is relatively simple and straightforward, suggesting it is intended for users with some familiarity with reinforcement learning concepts. The empty input fields indicate that the user has not yet configured the simulation. The checked boxes for "Camera Vision", "Off Ground Reward", "Random Seed", "Train", and "Evaluate" suggest a default configuration that utilizes camera input, provides a reward for staying off the ground, uses a random seed for reproducibility, and initiates both training and evaluation. The levels listed suggest a progression of tasks designed to test various aspects of intelligence, from basic food retrieval to more complex reasoning abilities like causality and body awareness.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Screenshot: Neuro AI Testing Platform Interface

### Overview

This image is a screenshot of a graphical user interface (GUI) for a software application titled "Neuro AI Testing Platform." The interface is designed for configuring parameters and selecting tasks for testing or training an artificial intelligence system, likely in a simulated environment. The layout is divided into two primary vertical panels: a configuration panel on the left and a level selection panel on the right.

### Components/Axes

The interface is composed of the following major sections and elements:

**1. Header:**

* **Title:** "Neuro AI Testing Platform" (centered at the top).

**2. Left Configuration Panel:**

This panel contains multiple grouped settings with checkboxes, labels, and input fields.

* **Action Space:**
    * Checkbox: `[✓] Joint Rotation`
    * Checkbox: `[ ] Joint Angular Velocity`
* **Vision Space:**
    * Checkbox: `[✓] Camera Vision`
    * Checkbox: `[ ] Raycast`
    * Checkbox: `[✓] Grayscale` (indented under Camera Vision)
    * Label & Input Field: `Viewing Angle: [ ]`
    * Label & Input Field: `Resolution: [ ]`
    * Label & Input Field: `Number of Rays: [ ]`
* **General Settings:**
    * Label & Input Field: `Max Steps: [ ]`
    * Checkbox: `[✓] Camera Render`
    * Checkbox: `[✓] Off Ground Reward`
* **Training Control:**
    * Checkbox: `[✓] Random Seed`
    * Label & Input Field: `Seed: [ ]`
    * Checkbox: `[✓] Train`
    * Checkbox: `[ ] Evaluate`
    * Label & Input Field: `Episodes: [ ]`
* **Action Buttons:**
    * Button: `START RANDOM`
    * Button: `START CURRICULUM`

**3. Right Level Selection Panel:**

This panel is a vertical list of selectable tasks or training levels.

* **Column Headers:** `Level Selection` and `Difficulty`

* **List of Levels (each with a checkbox):**
    * `[ ] L0 Initial Food Contact` | Difficulty: `-`
    * `[ ] L1 Basic Food Retrieval` | Difficulty: `-`
    * `[ ] L2 Y-Maze` | Difficulty: `-`
    * `[ ] L2 Delayed Gratification` | Difficulty: `-`
    * `[ ] L3 Obstacles` | Difficulty: `-`
    * `[ ] L4 Avoidance` | Difficulty: `-`
    * `[ ] L5 Spatial Reasoning` | Difficulty: `-`
    * `[ ] L6 Robustness` | Difficulty: `-`
    * `[ ] L7 Internal Models` | Difficulty: `-`
    * `[ ] L8 Object Permanence` | Difficulty: `-`
    * `[ ] L9 Numerosity` | Difficulty: `-`
    * `[ ] L10 Causal Reasoning` | Difficulty: `-`
    * `[ ] L11 Body Awareness` | Difficulty: `-`

### Detailed Analysis

* **State of Controls:** Several checkboxes are pre-selected (marked with `✓`), indicating default or active settings: `Joint Rotation`, `Camera Vision`, `Grayscale`, `Camera Render`, `Off Ground Reward`, `Random Seed`, and `Train`.

* **Empty Input Fields:** All numerical input fields (`Viewing Angle`, `Resolution`, `Number of Rays`, `Max Steps`, `Seed`, `Episodes`) are empty, represented by blank boxes `[ ]`.

* **Level List Structure:** The level list contains 13 entries carrying 12 distinct labels (L0 through L11), because two separate entries are labeled "L2": "L2 Y-Maze" and "L2 Delayed Gratification." All checkboxes in this list are unselected (`[ ]`).

* **Difficulty Column:** The "Difficulty" column for every level contains only a hyphen (`-`), suggesting this value is either not set, not applicable, or to be determined by the system or user.

* **Spatial Layout:** The configuration panel occupies approximately the left 60% of the window. The level selection panel occupies the right 40%. The two action buttons are positioned at the bottom of the left panel.

### Key Observations

1. **Dual L2 Levels:** The presence of two separate "L2" levels is a notable structural anomaly. This could indicate a versioning error, two sub-tasks within the same difficulty tier, or a simple labeling mistake.

2. **Default Configuration:** The interface loads with a specific set of features enabled (vision, certain rewards, training mode), suggesting a common starting point for users.

3. **Unpopulated Parameters:** All configurable numerical values are blank, requiring user input before execution. The difficulty ratings are also unpopulated.

4. **Task Progression:** The level names (L0-L11) suggest a curriculum or progression of complexity, starting from basic sensory-motor tasks ("Initial Food Contact") and advancing to abstract cognitive challenges ("Causal Reasoning," "Body Awareness").
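The duplicated "L2" label noted in the first observation can be confirmed mechanically. The sketch below copies the level names from the list above and counts how often each label prefix occurs; `level_prefix` is a hypothetical helper that assumes the label is the first space-separated token of each name.

```python
from collections import Counter

# Level names as transcribed from the screenshot description.
LEVEL_NAMES = [
    "L0 Initial Food Contact",
    "L1 Basic Food Retrieval",
    "L2 Y-Maze",
    "L2 Delayed Gratification",
    "L3 Obstacles",
    "L4 Avoidance",
    "L5 Spatial Reasoning",
    "L6 Robustness",
    "L7 Internal Models",
    "L8 Object Permanence",
    "L9 Numerosity",
    "L10 Causal Reasoning",
    "L11 Body Awareness",
]


def level_prefix(name: str) -> str:
    """Return the label token (e.g. 'L2') at the start of a level name."""
    return name.split(" ", 1)[0]


prefix_counts = Counter(level_prefix(name) for name in LEVEL_NAMES)
duplicated = [prefix for prefix, count in prefix_counts.items() if count > 1]

print(len(LEVEL_NAMES), "entries,", len(prefix_counts), "distinct labels")
print("duplicated labels:", duplicated)
```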

### Interpretation

This interface is the control panel for a neuro-evolutionary or reinforcement learning AI testing platform. Its purpose is to allow researchers or engineers to define the **morphology** (action and vision spaces) and **environmental parameters** of an AI agent, and then select a specific **task or competency** (from the Level Selection list) to train or evaluate it on.

* **Relationship Between Elements:** The left panel defines the agent's capabilities and the rules of its world. The right panel defines the specific challenge it must solve. The "START" buttons initiate the process, either with randomized parameters (`START RANDOM`) or following a predefined curriculum (`START CURRICULUM`).

* **Implied Workflow:** A user would: 1) Configure the agent's sensors and actuators, 2) Set training parameters like step limits and random seeds, 3) Select one or more competency levels from the list, and 4) Launch the simulation.

* **Underlying Purpose:** The platform appears designed to systematically test and develop increasingly sophisticated cognitive abilities in artificial agents, moving from reflexive behaviors to higher-order reasoning. The empty difficulty fields imply that the challenge of each level may be dynamic or assessed during testing.

* **Notable Absence:** There is no visible output console, visualization window, or data readout in this screenshot. This suggests it is purely a configuration screen, with results likely displayed in a separate window or after execution.
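The implied workflow above can be sketched as a pre-launch check that reports what is still missing. This is an illustration only: the platform's real validation rules are not visible in the screenshot, `launch_problems` is a hypothetical function, and treating every blank field as a problem may be stricter than the actual application.

```python
from typing import Dict, List, Optional


def launch_problems(
    fields: Dict[str, Optional[str]], selected_levels: List[str]
) -> List[str]:
    """List the reasons a run could not yet be launched (empty list if none)."""
    problems = []
    for name, value in fields.items():
        # A field counts as empty if it is missing or contains only whitespace.
        if value is None or not value.strip():
            problems.append(f"{name} is empty")
    if not selected_levels:
        problems.append("no level selected")
    return problems


# State of the text fields as reported for the screenshot: all blank.
screenshot_fields = {
    "Viewing Angle": "",
    "Resolution": "",
    "Number of Rays": "",
    "Max Steps": "",
    "Seed": "",
    "Episodes": "",
}

problems = launch_problems(screenshot_fields, selected_levels=[])
for problem in problems:
    print(problem)
```

Returning a list of messages instead of raising on the first failure lets a caller show everything that needs attention at once.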

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Screenshot: Neuro AI Testing Platform Interface

### Overview

The image depicts a configuration interface for a Neuro AI Testing Platform, featuring two primary sections: a **Settings Panel** on the left and a **Level Selection Panel** on the right. The interface includes checkboxes, input fields, and buttons for configuring AI testing parameters and selecting simulation levels.

---

### Components/Axes

#### Left Panel (Settings)

1. **Action Space**
    - **Joint Rotation**: Checked (✓)
    - **Joint Angular Velocity**: Unchecked (⬜)
2. **Vision Space**
    - **Camera Vision**: Checked (✓)
    - **Raycast**: Unchecked (⬜)
    - **Grayscale**: Checked (✓)
    - **Resolution**: Empty input field
    - **Viewing Angle**: Empty input field
    - **Number of Rays**: Empty input field
3. **General Parameters**
    - **Max Steps**: Empty input field
    - **Camera Render**: Checked (✓)
    - **Off Ground Reward**: Checked (✓)
    - **Random Seed**: Checked (✓), with an empty "Seed" field
    - **Train**: Checked (✓)
    - **Evaluate**: Unchecked (⬜)
    - **Episodes**: Empty input field

#### Right Panel (Level Selection)

1. **Level Selection**
    - **L0 Initial Food Contact**
    - **L1 Basic Food Retrieval**
    - **L2 Y-Maze**
    - **L2 Delayed Gratification**
    - **L3 Obstacles**
    - **L4 Avoidance**
    - **L5 Spatial Reasoning**
    - **L6 Robustness**
    - **L7 Internal Models**
    - **L8 Object Permanence**
    - **L9 Numerosity**
    - **L10 Causal Reasoning**
    - **L11 Body Awareness**
    - All levels have unchecked checkboxes (⬜).
2. **Difficulty**
    - Dropdown menu with a placeholder value ("-"), indicating no selection.

#### Buttons

- **Start Random** (bottom-left)

- **Start Curriculum** (bottom-center)

---

### Detailed Analysis

- **Action Space**: Joint Rotation is enabled, but Joint Angular Velocity is disabled, suggesting the AI will control rotational movement but not angular speed.

- **Vision Space**: Camera Vision is active with Grayscale enabled, but Raycast is disabled. Resolution, Viewing Angle, and Number of Rays are unspecified, leaving these parameters undefined.

- **General Parameters**:

    - Max Steps and Episodes are empty, so no step limit or episode count has been entered yet.

    - Off Ground Reward and Camera Render are enabled, indicating that an off-ground reward term and camera rendering are both active.

- **Level Selection**: All levels (L0–L11) are unselected, and no difficulty is chosen, suggesting the interface is in a pre-configuration state.

---

### Key Observations

1. **Unspecified Parameters**: Critical fields like Resolution, Viewing Angle, and Max Steps are empty, which could lead to undefined behavior during testing.

2. **Checkbox Consistency**: All checkboxes in the Level Selection Panel are unchecked, indicating no levels are currently active.

3. **Dropdown Ambiguity**: The Difficulty dropdown shows a placeholder ("-"), implying no difficulty level is assigned.

---

### Interpretation

This interface is designed for configuring AI training and testing scenarios. The **Action Space** and **Vision Space** settings define the AI's interaction with its environment (e.g., movement constraints and sensory input). The **Level Selection** panel allows incremental testing of AI capabilities across increasing complexity (L0–L11). However, the absence of selected levels and unspecified parameters (e.g., Resolution, Max Steps) suggests the platform is in an initial setup phase. The **Start Random** and **Start Curriculum** buttons likely initiate training or testing workflows, but their behavior depends on the configured parameters. The interface emphasizes modularity, enabling users to isolate specific skills (e.g., spatial reasoning, object permanence) for targeted AI evaluation.
