TECHNICAL ASSET FINGERPRINT

7c2e70041d20a371704b61b4


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

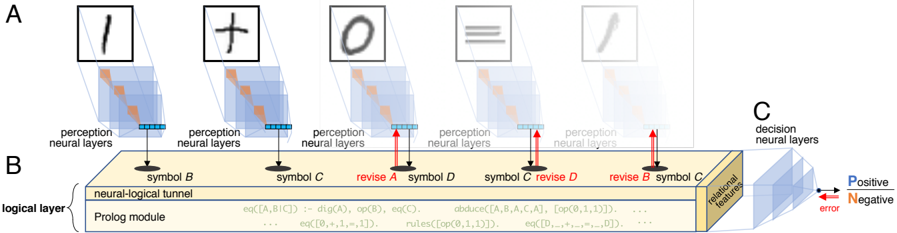

## Neural-Logical Reasoning Diagram

### Overview

The image illustrates a neural-logical reasoning system, processing visual input through perception neural layers, integrating it with a Prolog module via a neural-logical tunnel, and making decisions based on relational features. The diagram shows how visual symbols are processed and revised using logical rules.

### Components/Axes

* **A:** Represents the input layer with handwritten symbols (1, +, 0, =, /). Each symbol is processed by perception neural layers.

    * **Labels:** "perception neural layers"

* **B:** Represents the logical layer, consisting of a "neural-logical tunnel" and a "Prolog module". This layer processes the symbols and revises them based on logical rules.

    * **Labels:** "symbol B", "symbol C", "revise A", "symbol D", "revise D", "revise B", "symbol C", "neural-logical tunnel", "Prolog module", "logical layer"

* **C:** Represents the decision neural layers, which output a decision based on relational features.

    * **Labels:** "decision neural layers", "relational features", "Positive", "Negative", "error"

* **Arrows:** Red arrows indicate error signals or revisions. Black arrows indicate the flow of information.

### Detailed Analysis

* **Input Symbols (A):**

    * The diagram shows five handwritten symbols: '1', '+', '0', '=', and '/'.

    * Each symbol is fed into a stack of "perception neural layers", represented as a cube.

    * The output of these layers is a set of features, represented as a row of blue squares.

* **Logical Layer (B):**

    * The "neural-logical tunnel" connects the perception neural layers to the "Prolog module".

    * The Prolog module contains logical rules, such as:

        * `eq([A,B,C]) :- dig(A), op(B), eq(C).`

        * `eq([0,+,1,-,1]).`

        * `abduce([A, B, A,C,A], [op(0,1,1)]).`

        * `rules([op(0,1,1)]).`

        * `eq([D]).`

        * `eq([D,+,*,=,D]).`

    * The symbols are processed and potentially revised based on these rules. For example, "revise A", "revise B", and "revise D" are explicitly labeled.

* **Decision Layer (C):**

    * The "decision neural layers" receive "relational features" from the logical layer.

    * Based on these features, the system makes a decision, indicated by the "Positive" and "Negative" outputs.

    * An "error" signal is shown as a red arrow pointing back into the decision layer.
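
The first transcribed rule, `eq([A,B,C]) :- dig(A), op(B), eq(C).`, can be read loosely as "a digit, then an operator, then a remaining sequence that is itself valid". The Python sketch below mirrors that recursive shape as a well-formedness check. The names `DIGITS`, `OPERATORS`, and `is_well_formed` are hypothetical, and the choice of which tokens count as digits or operators is an assumption, since the rule text in the image is only partially legible.

```python
# Hypothetical token classes; the diagram does not spell these out.
DIGITS = {"0", "1"}
OPERATORS = {"+", "="}


def is_well_formed(tokens):
    """Return True if tokens alternate digit, operator, digit, ... and end on a digit.

    This follows the recursive shape of the transcribed rule: check the head
    digit, check the operator, then recurse on the rest of the sequence.
    """
    if not tokens:
        return False
    head, *rest = tokens
    if head not in DIGITS:
        return False
    if not rest:
        return True  # a lone digit closes the sequence
    operator, *tail = rest
    return operator in OPERATORS and is_well_formed(tail)


print(is_well_formed(["1", "+", "0", "=", "1"]))
print(is_well_formed(["1", "+", "+"]))
```

A check of this kind only constrains the structure of the sequence; it says nothing about whether the arithmetic is true.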

### Key Observations

* The diagram illustrates a hybrid system combining neural networks for perception and logical reasoning using Prolog.

* The "revise" labels indicate that the system can correct or refine its understanding of the input symbols based on logical rules.

* The error signal suggests a feedback loop for learning or adaptation.

### Interpretation

The diagram demonstrates a system that integrates visual perception with logical reasoning. The neural networks handle the initial processing of visual input, while the Prolog module applies logical rules to interpret and revise the symbols. This approach allows the system to handle noisy or ambiguous input by leveraging logical constraints. The error signal suggests that the system can learn from its mistakes and improve its performance over time. The system is designed to process and understand symbolic information, potentially for tasks such as mathematical problem-solving or logical inference.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Conceptual Model of a Cognitive Architecture

### Overview

The image depicts a conceptual diagram of a cognitive architecture, illustrating the flow of information from perception to decision-making. It appears to represent a system combining neural network-like perception layers with a logical reasoning component (Prolog module) and a decision-making layer. The diagram is segmented into three main areas labeled A, B, and C.

### Components/Axes

* **A: Perception Neural Layers:** This section shows five instances of "perception neural layers" represented as translucent blue grids. Each layer is associated with a different symbol presented above it.

* **B: Logical Layer:** This section represents a "logical layer" containing a "neural-logical tunnel" and a "Prolog module". The Prolog module displays a series of logical expressions.

* **C: Decision Neural Layers:** This section represents "decision neural layers" and is colored with a gradient from blue to red, indicating "Positive" and "Negative" error.

* **Symbols:** Five symbols are presented above the perception layers: a vertical line, a plus sign, a circle, an equals sign, and a forward slash.

* **Connections:** Red and blue arrows connect the perception layers to the logical layer and the logical layer to the decision layers.

* **Labels:** "symbol B", "symbol C", "revise A", "symbol D", "revise C", "revise B" are labels associated with the connections between the perception and logical layers.

* **Legend:** A color gradient indicates "Positive" (blue) and "Negative" (red) error in the decision neural layers.

* **Text within Prolog Module:** A series of logical expressions are visible within the Prolog module, including "eq(A,B) :- dig(A), op(B), eq(C)", "abduce(A,B,A,C,A)", "rules(op(0,1,1))", and others.

### Detailed Analysis / Content Details

The diagram shows a sequential processing flow.

1. **Perception:** Five different symbols (vertical line, plus sign, circle, equals sign, forward slash) are input into separate "perception neural layers".

2. **Logical Processing:** Each perception layer is connected to the "neural-logical tunnel" within the logical layer. The connections are labeled with symbols and revisions (e.g., "symbol B", "revise A"). The Prolog module contains a series of logical rules and expressions.

3. **Decision Making:** The output of the logical layer is fed into the "decision neural layers". The color of the decision layers indicates the presence of positive or negative error.
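
The three steps above can be read as a composition of three functions. The sketch below shows only that wiring; every stage body is a hypothetical stand-in (the "images" are plain strings, and the reasoning and decision stubs are toy rules), not the behavior of the system in the diagram.

```python
from typing import Callable, List, Sequence


def run_pipeline(
    images: Sequence[str],
    perceive: Callable[[str], str],
    reason: Callable[[List[str]], List[float]],
    decide: Callable[[List[float]], str],
) -> str:
    """Chain the three stages: perception (A), logical layer (B), decision (C)."""
    symbols = [perceive(image) for image in images]  # A: one symbol per input
    features = reason(symbols)                       # B: symbols -> features
    return decide(features)                          # C: features -> label


# Hypothetical stand-ins so the wiring can be exercised end to end.
def perceive(image: str) -> str:
    # Assumption: the "image" is already its own glyph, padded with spaces.
    return image.strip()


def reason(symbols: List[str]) -> List[float]:
    # Toy features: has five symbols, and contains an equals sign.
    return [float(len(symbols) == 5), float("=" in symbols)]


def decide(features: List[float]) -> str:
    return "Positive" if all(f == 1.0 for f in features) else "Negative"


print(run_pipeline([" 1", "+ ", "0", "=", "1"], perceive, reason, decide))
```

Passing the stages in as arguments keeps the sketch neutral about how each one is implemented.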

The Prolog module contains the following expressions (transcribed as accurately as possible):

* `eq(A,B) :- dig(A), op(B), eq(C)`

* `abduce(A,B,A,C,A)`

* `rules(op(0,1,1))`

* `eq(B, ... ,11)`

* `eq(C, ... ,11)`

* `rules(op(0,1,11))`

* `eq(B, ... ,0)`

The connections between the perception layers and the logical layer are differentiated by color: blue arrows are associated with "symbol B" and "symbol C", while red arrows are associated with "revise A", "symbol D", "revise C", and "revise B".

### Key Observations

* The diagram suggests a hybrid cognitive architecture combining neural network-like processing with symbolic reasoning.

* The "revise" labels indicate a feedback or iterative process where the logical layer refines its understanding based on the input from the perception layers.

* The color gradient in the decision layers suggests a continuous spectrum of error, rather than a binary classification.

* The Prolog module appears to implement a set of logical rules and inference mechanisms.

### Interpretation

This diagram illustrates a cognitive architecture that attempts to bridge the gap between sub-symbolic (neural network) and symbolic (logical reasoning) processing. The perception layers extract features from the input symbols, which are then processed by the Prolog module using logical rules. The decision layers then evaluate the output of the logical module and provide feedback in the form of error signals.

The "revise" connections suggest a mechanism for learning and adaptation, where the logical layer refines its rules based on the input from the perception layers. The Prolog module likely implements a form of abductive reasoning, where it attempts to find the best explanation for the observed data.

The diagram highlights the importance of integrating different levels of processing in a cognitive architecture. By combining neural networks with symbolic reasoning, the system can potentially achieve a more robust and flexible form of intelligence. The presence of "Positive" and "Negative" error suggests a learning mechanism where the system adjusts its parameters to minimize error. The specific logical expressions within the Prolog module would need further analysis to understand the precise functionality of the system.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Neuro-Symbolic AI System Architecture

### Overview

The image is a technical diagram illustrating the architecture of a neuro-symbolic artificial intelligence system. It depicts a pipeline that processes handwritten symbols through neural perception layers, integrates them with a logical reasoning module (Prolog), and feeds the results into decision-making neural layers. The system features a feedback mechanism for iterative revision of symbols based on logical constraints.

### Components/Axes

The diagram is segmented into three primary labeled regions: **A**, **B**, and **C**.

**Region A (Top - Perception Stage):**

* **Content:** Five sequential panels, each showing a handwritten symbol inside a square frame.

* **Symbols (from left to right):**

    1. A vertical line, resembling the digit "1" or letter "l".

    2. A plus sign "+".

    3. A circle, resembling the digit "0" or letter "O".

    4. Three horizontal lines, resembling the "≡" symbol.

    5. A diagonal line, resembling a slash "/".

* **Labels:** Below each symbol panel is the text "perception neural layers". Arrows point from these layers down to the logical layer in Region B.

* **Annotations:** Red arrows point upward from the logical layer (B) back to the perception layers for the 1st, 3rd, 4th, and 5th symbols. These are labeled:

    * `revise A` (pointing to the 1st symbol's perception layer)

    * `revise D` (pointing to the 4th symbol's perception layer)

    * `revise B` (pointing to the 5th symbol's perception layer)

    * (The 3rd symbol also has a red upward arrow, but its label is partially obscured; it likely follows the pattern).

**Region B (Center - Logical Layer):**

* **Title:** `logical layer` (written vertically on the left).

* **Main Component:** A large, beige rectangular block labeled `neural-logical tunnel`.

* **Sub-component:** Inside the tunnel is a section labeled `Prolog module`.

* **Content within Prolog module:** Several lines of Prolog-like logical rules and facts. The text is small but partially legible:

    * `eq([A,B,C]) :- dig(A), op(B), eq(C).`

    * `eq([0,+,1,1]).`

    * `rules([op(0,+,1,1)]).`

    * `abduce([A,B,C,A,A]), [op(0,+,1,1)]...`

    * `eq([0,+,+,+,1]).`

    * `rules([op(0,+,+,+,1)]).`

* **Connections:**

    * **Inputs:** Black arrows from Region A's perception layers point down into the tunnel, labeled with symbol names: `symbol B`, `symbol C`, `symbol A`, `symbol D`, `symbol C`, `symbol D`, `symbol B`, `symbol C`.

    * **Feedback:** Red upward arrows (labeled `revise`) originate from the tunnel and point back to specific perception layers in Region A.

    * **Output:** An arrow points from the right end of the tunnel to Region C.

**Region C (Right - Decision Stage):**

* **Title:** `decision neural layers`.

* **Content:** A stack of blue, semi-transparent rectangular planes, representing neural network layers.

* **Output Labels:** Three arrows point out from the right side of the decision layers:

    * A **blue** arrow labeled `Positive`.

    * A **red** arrow labeled `Negative`.

    * A **black** arrow labeled `error`.

* **Spatial Grounding:** The legend for the output arrows (Positive/Negative/error) is located at the bottom-right of the diagram, adjacent to the decision layers.

### Detailed Analysis

The diagram outlines a closed-loop, iterative process:

1. **Perception:** Handwritten symbols are initially processed by dedicated "perception neural layers."

2. **Symbol Grounding & Logical Processing:** The outputs of these perception layers (labeled as `symbol B`, `symbol C`, etc.) are fed into the `neural-logical tunnel`. Here, a `Prolog module` attempts to ground these perceptual symbols into a formal logical representation using rules and facts (e.g., defining equations like `eq([0,+,1,1])`).

3. **Decision:** The processed information from the logical tunnel is passed to `decision neural layers` to produce a final classification (`Positive` or `Negative`) or flag an `error`.

4. **Revision Loop (Key Feature):** The system does not process symbols in a single pass. The logical module can identify inconsistencies or ambiguities. When this happens, it sends a `revise` signal (red arrows) back to specific perception layers. This forces the perception of a particular symbol (e.g., `symbol A`, `symbol D`) to be re-evaluated, creating an iterative refinement cycle until a consistent logical interpretation is achieved.
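
One way to picture the revision loop is as a search for the smallest set of symbol changes that makes the sequence logically consistent. The sketch below uses brute-force enumeration over a tiny alphabet; this is an illustrative stand-in, not the mechanism shown in the diagram. The names `ALPHABET`, `is_consistent`, and `revise`, and the toy constraint "a + b = c over the digits 0 and 1", are all assumptions.

```python
from itertools import combinations, product

# Hypothetical symbol alphabet for the toy task.
ALPHABET = ["0", "1", "+", "="]


def is_consistent(symbols):
    """Toy logical constraint: the sequence reads 'a + b = c' and a + b == c."""
    if len(symbols) != 5 or symbols[1] != "+" or symbols[3] != "=":
        return False
    a, b, c = symbols[0], symbols[2], symbols[4]
    if any(s not in ("0", "1") for s in (a, b, c)):
        return False
    return int(a) + int(b) == int(c)


def revise(symbols):
    """Return a consistent sequence that changes as few positions as possible.

    Tries 0 changes first, then every single position, then every pair, and
    so on. Returns None if no assignment satisfies the constraint.
    """
    n = len(symbols)
    for k in range(n + 1):
        for positions in combinations(range(n), k):
            for replacement in product(ALPHABET, repeat=k):
                candidate = list(symbols)
                for pos, sym in zip(positions, replacement):
                    candidate[pos] = sym
                if is_consistent(candidate):
                    return candidate
    return None


# The last symbol was perceived as a slash; only that position gets revised.
print(revise(["1", "+", "0", "=", "/"]))
```

Exhaustive search is only workable for very short sequences; it is used here because it makes the "fewest revisions" idea explicit.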

### Key Observations

* **Hybrid Architecture:** The system explicitly combines sub-symbolic (neural networks for perception and decision) and symbolic (Prolog for logic) AI paradigms.

* **Iterative Refinement:** The presence of multiple `revise` arrows indicates that the system is designed to handle uncertainty and ambiguity by looping back, rather than making a single, feed-forward decision.

* **Symbolic Labeling:** The perception layers output discrete `symbol` labels (A, B, C, D), suggesting an intermediate step where continuous neural features are mapped to discrete symbolic tokens before logical processing.

* **Error as an Output:** The explicit `error` output path suggests the system can formally declare when it cannot resolve an interpretation, even after revision attempts.
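
The mapping from continuous outputs to discrete tokens mentioned under Symbolic Labeling can be sketched as an argmax over per-symbol scores. The diagram does not show how this step is done, so the function `to_symbols`, the score values, and the vocabulary order below are assumptions for illustration only.

```python
def to_symbols(score_rows, vocabulary):
    """Pick, for each input, the vocabulary entry with the highest score.

    score_rows: one list of scores per perceived input (hypothetical values).
    vocabulary: the symbol name for each score index.
    """
    symbols = []
    for row in score_rows:
        best_index = max(range(len(row)), key=row.__getitem__)
        symbols.append(vocabulary[best_index])
    return symbols


vocabulary = ["A", "B", "C", "D"]
scores = [
    [0.1, 0.7, 0.1, 0.1],  # highest score at index 1
    [0.2, 0.2, 0.5, 0.1],  # highest score at index 2
]
print(to_symbols(scores, vocabulary))  # prints ['B', 'C']
```

Keeping the full score rows around, rather than only the winning token, is what would let a later revision step fall back to the second-best symbol.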

### Interpretation

This diagram represents a **neuro-symbolic AI system designed for robust, explainable reasoning on structured tasks**, such as interpreting mathematical expressions or logical puzzles from handwritten input.

* **What it demonstrates:** The core idea is to overcome the limitations of pure neural networks (black-box reasoning, poor symbolic manipulation) and pure symbolic AI (brittle perception, manual feature engineering). The neural components handle the messy, real-world perception, while the symbolic component provides structured reasoning, constraint checking, and explainability.

* **How elements relate:** The `neural-logical tunnel` is the critical bridge. It translates between the distributed, vector-based representations of the neural nets and the discrete, rule-based world of Prolog. The feedback loop (`revise`) is the mechanism for **grounding**—ensuring the symbolic interpretation aligns with the perceptual data.

* **Notable implications:** The system's ability to revise its perception based on logical constraints mimics a form of **cognitive plausibility**. For example, if the logic module expects a certain pattern (e.g., `0 + 1 = 1`) but the perception of a symbol is ambiguous, it can request a re-examination of that specific input. This makes the system more robust to noise and capable of self-correction. The Prolog rules shown (`eq([0,+,1,1])`) hint that the specific task may involve validating simple arithmetic equations.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Neural-Symbolic Processing Architecture

### Overview

The diagram illustrates a multi-stage neural-symbolic processing system with three primary components:

1. **Perception Neural Layers (A)**: Processes raw symbolic inputs (e.g., "1", "+", "0", "≡", "✒").

2. **Neural-Logical Tunnel (B)**: Integrates symbolic representations with logical operations via a Prolog module.

3. **Decision Neural Layers (C)**: Outputs probabilistic decisions (Positive/Negative) with error correction.

### Components/Axes

#### Section A: Perception Neural Layers

- **Symbols**:

    - "1" (digit), "+" (operator), "0" (digit), "≡" (equivalence), "✒" (pen).

- **Structure**:

    - Each symbol is processed by stacked neural layers (blue shaded regions).

    - Arrows indicate forward propagation of features.

#### Section B: Neural-Logical Tunnel

- **Symbols**:

    - "B", "C", "D" (abstract symbols).

- **Key Elements**:

    - **Prolog Module**: Contains logical rules (e.g., `eq(A,B,C) :- dig(A), op(B), eq(C)`) and operations like `abduce`, `revise`.

    - **Revisions**: Red arrows indicate iterative refinement of symbols (e.g., "revise A", "revise B").

- **Flow**:

    - Inputs from perception layers are mapped to symbolic representations (e.g., "symbol B", "symbol C").

#### Section C: Decision Neural Layers

- **Output**:

    - Binary decisions labeled "Positive" (blue arrow) and "Negative" (red arrow).

    - Error signals (red) propagate backward to refine earlier layers.

- **Features**:

    - "Relational features" (yellow) extracted for decision-making.

### Detailed Analysis

#### Perception Layers (A)

- Each symbol is processed independently, with hierarchical feature extraction (e.g., edges, shapes).

- Example: The "≡" symbol’s neural layers likely detect symmetry and equivalence properties.

#### Neural-Logical Tunnel (B)

- **Prolog Module**:

    - Logical rules (e.g., `rules(op(0,1,1))`) define symbolic operations.

    - Equations like `eq([A,B,C]) :- dig(A), op(B), eq(C)` map neural activations to symbolic logic.

- **Revisions**:

    - Iterative corrections (e.g., "revise B") suggest error feedback loops to refine symbolic representations.

#### Decision Layers (C)

- **Probabilistic Output**:

    - Positive/Negative decisions are weighted by relational features.

    - Error signals (red) indicate misclassifications, triggering revisions in earlier layers.
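
A decision that is "weighted by relational features" can be illustrated with a single logistic unit over a feature vector. This is a minimal sketch under stated assumptions: the diagram does not specify the decision layers, and the function `decide`, the weights, the bias, and the 0.5 threshold are all hypothetical.

```python
import math


def decide(relational_features, weights, bias=0.0):
    """Score a feature vector with a weighted sum and squash it to (0, 1).

    Returns the label and the probability assigned to "Positive".
    """
    score = bias + sum(w * x for w, x in zip(weights, relational_features))
    probability = 1.0 / (1.0 + math.exp(-score))
    label = "Positive" if probability >= 0.5 else "Negative"
    return label, probability


# Hypothetical weights: the first feature argues for Positive, the second against.
weights = [2.0, -1.0, 0.5]
print(decide([1, 0, 1], weights, bias=-1.0))
print(decide([0, 1, 0], weights, bias=-1.0))
```

In a trained system the weights would be adjusted from the error signal rather than set by hand as they are here.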

### Key Observations

1. **Hierarchical Processing**:

    - Raw symbols → symbolic abstraction → logical integration → probabilistic decisions.

2. **Error Correction**:

    - Red arrows in C and revisions in B highlight a feedback mechanism for robustness.

3. **Symbolic-Neural Integration**:

    - The Prolog module bridges neural activations (e.g., `dig(A)`) with formal logic (e.g., `op(B)`).

### Interpretation

This architecture demonstrates a hybrid AI system where:

- **Perception layers** extract low-level features from raw inputs.

- **Neural-logical integration** uses symbolic reasoning (via Prolog) to refine intermediate representations.

- **Decision layers** combine learned features with logical constraints to produce interpretable outcomes.

The "revise" annotations and error signals suggest a dynamic system capable of self-correction, likely inspired by neuro-symbolic AI frameworks. The use of Prolog implies formal logic is critical for tasks requiring rule-based reasoning (e.g., mathematical operations, equivalence checks). The error-driven revisions in C emphasize the importance of feedback in achieving reliable decisions.
