## Diagram: Neural-Symbolic Processing Architecture

### Overview

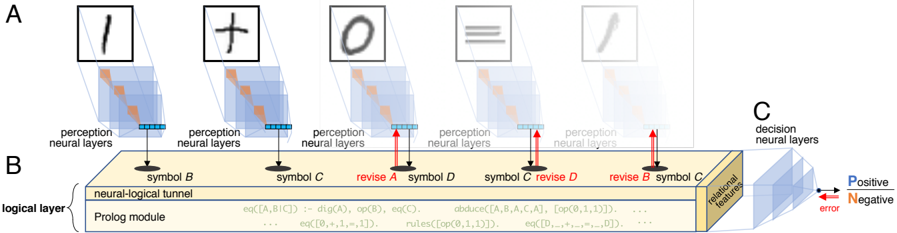

The diagram illustrates a multi-stage neural-symbolic processing system with three primary components:

1. **Perception Neural Layers (A)**: Processes raw symbolic inputs (e.g., "1", "+", "0", "≡", "✒").

2. **Neural-Logical Tunnel (B)**: Integrates symbolic representations with logical operations via a Prolog module.

3. **Decision Neural Layers (C)**: Outputs probabilistic decisions (Positive/Negative) with error correction.

### Components/Axes

#### Section A: Perception Neural Layers

- **Symbols**:

  - "1" (digit), "+" (operator), "0" (digit), "≡" (equivalence), "✒" (pen).

- **Structure**:

  - Each symbol is processed by stacked neural layers (blue shaded regions).

  - Arrows indicate forward propagation of features.

#### Section B: Neural-Logical Tunnel

- **Symbols**:

  - "B", "C", "D" (abstract symbols).

- **Key Elements**:

  - **Prolog Module**: Contains logical rules (e.g., `eq(A,B,C) :- dig(A), op(B), eq(C)`) and operations such as `abduce` and `revise`.

  - **Revisions**: Red arrows indicate iterative refinement of symbols (e.g., "revise A", "revise B").

- **Flow**:

  - Inputs from perception layers are mapped to symbolic representations (e.g., "symbol B", "symbol C").
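The mapping from perception outputs to symbols can be sketched in a few lines. This is a minimal illustration, not the diagram's actual implementation: the vocabulary `SYMBOLS`, the helper names `to_symbols` and `is_well_formed`, and the "digits, operator, digits, equivalence sign, digits" pattern are all assumptions made for the example.

```python
import re

# Hypothetical symbol vocabulary; the diagram does not list the full set.
SYMBOLS = ["0", "1", "+", "≡"]


def to_symbols(scores):
    """Map each per-image score vector to its highest-scoring symbol."""
    return [SYMBOLS[max(range(len(row)), key=row.__getitem__)] for row in scores]


def is_well_formed(symbols):
    """Check an assumed structure: digits, '+', digits, '≡', digits."""
    return re.fullmatch(r"[01]+\+[01]+≡[01]+", "".join(symbols)) is not None


# One score vector per perceived image (made-up values for illustration).
scores = [
    [0.10, 0.80, 0.05, 0.05],
    [0.00, 0.10, 0.90, 0.00],
    [0.70, 0.10, 0.10, 0.10],
    [0.00, 0.00, 0.10, 0.90],
    [0.20, 0.70, 0.05, 0.05],
]
symbols = to_symbols(scores)
```

Here `is_well_formed(symbols)` plays the role that a structural rule such as the `eq` clause would play inside the Prolog module: it accepts or rejects a candidate symbol sequence before any further reasoning happens.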

#### Section C: Decision Neural Layers

- **Output**:

  - Binary decisions labeled "Positive" (blue arrow) and "Negative" (red arrow).

  - Error signals (red) propagate backward to refine earlier layers.

- **Features**:

  - "Relational features" (yellow) extracted for decision-making.

### Detailed Analysis

#### Perception Layers (A)

- Each symbol is processed independently, with hierarchical feature extraction (e.g., edges, shapes).

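- Example: The layers for the "≡" symbol presumably respond to visual features such as its parallel horizontal strokes. The meaning of the symbol (equivalence) is assigned later, in the symbolic stage, not by the perception layers.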

#### Neural-Logical Tunnel (B)

- **Prolog Module**:

  - Logical rules (e.g., `rules(op(0,1,1))`) define symbolic operations.

  - Clauses like `eq([A,B,C]) :- dig(A), op(B), eq(C)` constrain which symbol sequences, as read off the neural outputs, count as valid equations.

- **Revisions**:

  - Iterative corrections (e.g., "revise B") suggest error feedback loops that refine symbolic representations.
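The revision loop described above can be sketched as a search for the smallest set of symbol changes that makes a perceived sequence consistent with the rules. This is a minimal sketch under stated assumptions: the five-symbol layout `a + b ≡ c`, the rule table `RULES` (written in the spirit of `rules(op(0,1,1))`), and the function names `consistent` and `abduce` are all hypothetical, and the brute-force search stands in for whatever the Prolog module actually does.

```python
from itertools import combinations, product

# Hypothetical vocabulary and rule table: (a, b) -> c for single-digit results.
VOCAB = ("0", "1", "+", "≡")
RULES = {("0", "0"): "0", ("0", "1"): "1", ("1", "0"): "1"}


def consistent(symbols):
    """True if the sequence has the form a + b ≡ c and satisfies RULES."""
    if len(symbols) != 5 or symbols[1] != "+" or symbols[3] != "≡":
        return False
    a, _, b, _, c = symbols
    return RULES.get((a, b)) == c


def abduce(symbols):
    """Return (revised_symbols, revised_positions) with the fewest revisions.

    Tries 0 revisions first, then 1, then 2, and so on, so the first
    consistent candidate found changes as few symbols as possible.
    """
    n = len(symbols)
    for k in range(n + 1):
        for positions in combinations(range(n), k):
            for replacement in product(VOCAB, repeat=k):
                candidate = list(symbols)
                for pos, sym in zip(positions, replacement):
                    candidate[pos] = sym
                if consistent(candidate):
                    return candidate, positions
    return None, ()


revised, positions = abduce(["1", "+", "0", "≡", "0"])
```

The returned `positions` correspond to the "revise A" or "revise B" annotations in the diagram: they identify which perceived symbols would need to be relabeled. The exhaustive search is only practical for very short sequences.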

#### Decision Layers (C)

- **Probabilistic Output**:

  - Positive/Negative decisions are weighted by relational features.

  - Error signals (red) indicate misclassifications, triggering revisions in earlier layers.
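One simple way to read "decisions weighted by relational features" is a weighted sum passed through a sigmoid. The diagram does not specify the functional form, so the logistic model, the `decide` function, and the example weights below are assumptions chosen purely for illustration.

```python
import math


def decide(features, weights, bias=0.0):
    """Return (label, probability) from a weighted sum of relational features.

    Assumed model: a logistic unit. The label is "Positive" when the
    probability is at least 0.5 and "Negative" otherwise.
    """
    z = sum(w * x for w, x in zip(weights, features)) + bias
    p = 1.0 / (1.0 + math.exp(-z))
    return ("Positive" if p >= 0.5 else "Negative"), p


# Made-up feature vectors and weights.
label_a, p_a = decide([1.0, 0.0], [2.0, -1.0])
label_b, p_b = decide([0.0, 1.0], [2.0, -1.0])
```

In a trained system, the gap between `p` and the true label would supply the error signal that the red arrows carry back toward the earlier stages.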

### Key Observations

1. **Hierarchical Processing**:

   - Raw symbols → symbolic abstraction → logical integration → probabilistic decisions.

2. **Error Correction**:

   - Red arrows in C and revisions in B highlight a feedback mechanism for robustness.

3. **Symbolic-Neural Integration**:

   - The Prolog module bridges neural outputs and formal logic: predicates such as `dig(A)` and `op(B)` classify the perceived symbols as digits or operators so that rules can be applied to them.

### Interpretation

This architecture demonstrates a hybrid AI system where:

- **Perception layers** extract low-level features from raw inputs.

- **Neural-logical integration** uses symbolic reasoning (via Prolog) to refine intermediate representations.

- **Decision layers** combine learned features with logical constraints to produce interpretable outcomes.

The "revise" annotations and error signals suggest a system capable of self-correction, in the style of abduction-based neuro-symbolic frameworks (note the `abduce` operation in the Prolog module). The use of Prolog implies that formal logic is central to tasks requiring rule-based reasoning (e.g., mathematical operations, equivalence checks). Together, the error signals in C and the symbol revisions in B indicate that feedback is the mechanism by which the system reaches reliable decisions.