TECHNICAL ASSET FINGERPRINT

7cb854db70addbb25a824d03

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

## Line Chart: Mean Pass Rate vs. Mean Number of Tokens Generated

### Overview

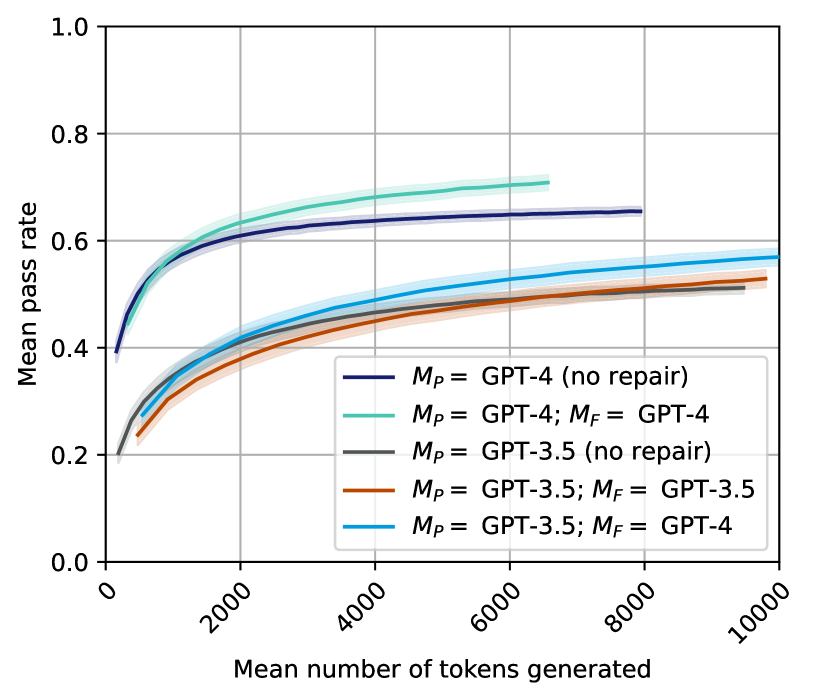

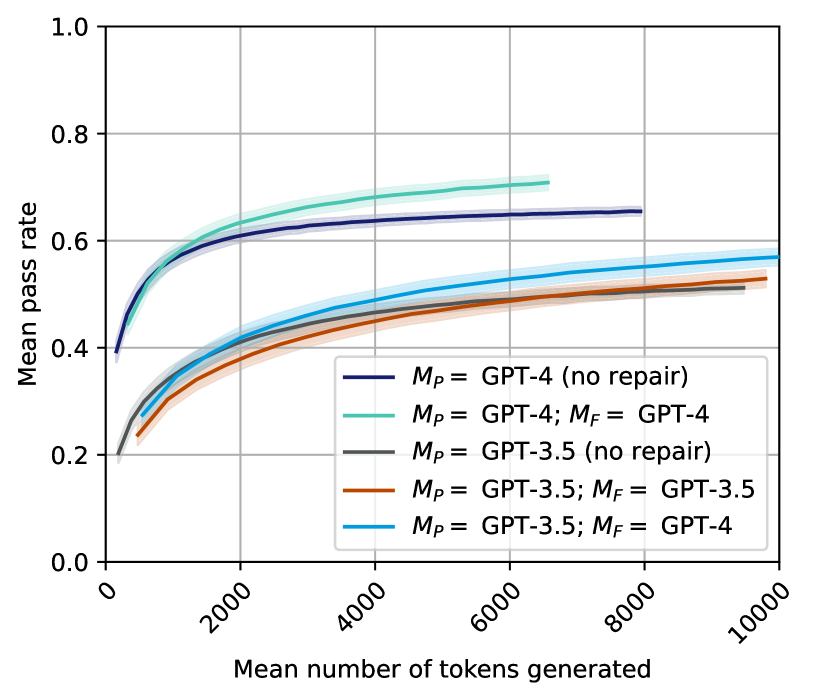

The image is a line chart comparing the mean pass rate against the mean number of tokens generated for different GPT models and repair configurations. The chart displays five data series, each representing a different model configuration, with shaded regions indicating uncertainty.

### Components/Axes

* **X-axis:** Mean number of tokens generated, ranging from 0 to 10000, with tick marks at intervals of 2000.

* **Y-axis:** Mean pass rate, ranging from 0.0 to 1.0, with tick marks at intervals of 0.2.

* **Legend (located in the center-right of the chart):**

  * Dark Blue: *M<sub>P</sub>* = GPT-4 (no repair)
  * Light Green: *M<sub>P</sub>* = GPT-4; *M<sub>F</sub>* = GPT-4
  * Gray: *M<sub>P</sub>* = GPT-3.5 (no repair)
  * Brown: *M<sub>P</sub>* = GPT-3.5; *M<sub>F</sub>* = GPT-3.5
  * Teal: *M<sub>P</sub>* = GPT-3.5; *M<sub>F</sub>* = GPT-4

### Detailed Analysis

* **Dark Blue Line: *M<sub>P</sub>* = GPT-4 (no repair)**

  * Trend: The line slopes upward, starting at approximately 0.4 and reaching approximately 0.65, then plateaus.
  * Data Points:
    * At 0 tokens, the mean pass rate is approximately 0.4.
    * At 2000 tokens, the mean pass rate is approximately 0.6.
    * At 6000 tokens, the mean pass rate is approximately 0.65.
    * At 10000 tokens, the mean pass rate is approximately 0.65.

* **Light Green Line: *M<sub>P</sub>* = GPT-4; *M<sub>F</sub>* = GPT-4**

  * Trend: The line slopes upward, starting at approximately 0.25 and reaching approximately 0.7, then plateaus.
  * Data Points:
    * At 0 tokens, the mean pass rate is approximately 0.25.
    * At 2000 tokens, the mean pass rate is approximately 0.62.
    * At 6000 tokens, the mean pass rate is approximately 0.7.

* **Gray Line: *M<sub>P</sub>* = GPT-3.5 (no repair)**

  * Trend: The line slopes upward, starting at approximately 0.2 and reaching approximately 0.52, then plateaus.
  * Data Points:
    * At 0 tokens, the mean pass rate is approximately 0.2.
    * At 2000 tokens, the mean pass rate is approximately 0.4.
    * At 6000 tokens, the mean pass rate is approximately 0.5.
    * At 10000 tokens, the mean pass rate is approximately 0.52.

* **Brown Line: *M<sub>P</sub>* = GPT-3.5; *M<sub>F</sub>* = GPT-3.5**

  * Trend: The line slopes upward, starting at approximately 0.25 and reaching approximately 0.5, then plateaus.
  * Data Points:
    * At 0 tokens, the mean pass rate is approximately 0.25.
    * At 2000 tokens, the mean pass rate is approximately 0.38.
    * At 6000 tokens, the mean pass rate is approximately 0.48.
    * At 10000 tokens, the mean pass rate is approximately 0.5.

* **Teal Line: *M<sub>P</sub>* = GPT-3.5; *M<sub>F</sub>* = GPT-4**

  * Trend: The line slopes upward, starting at approximately 0.28 and reaching approximately 0.57, then plateaus.
  * Data Points:
    * At 0 tokens, the mean pass rate is approximately 0.28.
    * At 2000 tokens, the mean pass rate is approximately 0.45.
    * At 6000 tokens, the mean pass rate is approximately 0.55.
    * At 10000 tokens, the mean pass rate is approximately 0.57.

### Key Observations

* GPT-4 models generally outperform GPT-3.5 models.

* The "no repair" GPT-4 model has a higher initial pass rate but plateaus at a lower level than the GPT-4 model with repair (about 0.65 versus 0.7).

* Using GPT-4 for repair (*M<sub>F</sub>*) on GPT-3.5 (*M<sub>P</sub>*) improves the pass rate compared to using GPT-3.5 for both.

* All models show diminishing returns in pass rate as the number of tokens generated increases.
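The diminishing-returns observation can be checked directly against the approximate readings listed above. The Python sketch below assumes those eyeballed values and straight-line segments between them. It computes the pass-rate gain per 1,000 additional tokens for each segment and tests whether that gain shrinks from one segment to the next. The series labels and function names are illustrative and do not come from the source.

```python
# Approximate (tokens, mean pass rate) readings transcribed from the description above.
READINGS = {
    "M_P=GPT-4 (no repair)": [(0, 0.40), (2000, 0.60), (6000, 0.65), (10000, 0.65)],
    "M_P=GPT-4; M_F=GPT-4": [(0, 0.25), (2000, 0.62), (6000, 0.70)],
    "M_P=GPT-3.5 (no repair)": [(0, 0.20), (2000, 0.40), (6000, 0.50), (10000, 0.52)],
    "M_P=GPT-3.5; M_F=GPT-3.5": [(0, 0.25), (2000, 0.38), (6000, 0.48), (10000, 0.50)],
    "M_P=GPT-3.5; M_F=GPT-4": [(0, 0.28), (2000, 0.45), (6000, 0.55), (10000, 0.57)],
}


def gain_per_1k_tokens(points):
    """Pass-rate gain per 1,000 extra tokens between consecutive readings."""
    return [
        (y1 - y0) / ((x1 - x0) / 1000)
        for (x0, y0), (x1, y1) in zip(points, points[1:])
    ]


def shows_diminishing_returns(points):
    """True if each segment gains less per 1,000 tokens than the segment before it."""
    gains = gain_per_1k_tokens(points)
    return all(later < earlier for earlier, later in zip(gains, gains[1:]))


if __name__ == "__main__":
    for name, points in READINGS.items():
        gains = ", ".join(f"{g:.4f}" for g in gain_per_1k_tokens(points))
        print(f"{name}: {gains} -> diminishing: {shows_diminishing_returns(points)}")
```

With these readings, every series gains the most in its first segment, and the gain drops in each later segment.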

### Interpretation

The chart illustrates the relationship between the number of tokens generated and the mean pass rate for different GPT models and repair configurations. The data suggests that using a more advanced model (GPT-4) generally leads to a higher pass rate. The repair mechanism (indicated by *M<sub>F</sub>*) helps most at larger token budgets: with GPT-4 as the primary model, the repaired configuration starts below the no-repair baseline (about 0.25 versus 0.4 at 0 tokens) but ends above it (about 0.7 versus 0.65 at 6000 tokens). The plateauing of the pass rates indicates that there is a limit to the improvement that can be achieved by simply generating more tokens. The shaded regions around the lines represent the uncertainty in the measurements, which should be considered when interpreting the results.

EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

## Chart Type: Line Chart - Mean Pass Rate vs. Mean Number of Tokens Generated

### Overview

This image displays a 2D line chart illustrating the relationship between the "Mean pass rate" (Y-axis) and the "Mean number of tokens generated" (X-axis) for various configurations of GPT models, with and without repair mechanisms. The chart features five distinct data series, each represented by a colored line and a shaded confidence interval, comparing GPT-4 and GPT-3.5 models, and the impact of different models (`M_P` for primary model, `M_F` for repair model) on performance.

### Components/Axes

The chart is structured with a primary plot area, an X-axis at the bottom, a Y-axis on the left, and a legend in the bottom-right.

* **X-axis Label**: "Mean number of tokens generated"

* **X-axis Range**: From 0 to 10000.

* **X-axis Tick Markers**: 0, 2000, 4000, 6000, 8000, 10000. The tick labels are rotated approximately 45 degrees counter-clockwise.

* **Y-axis Label**: "Mean pass rate"

* **Y-axis Range**: From 0.0 to 1.0.

* **Y-axis Tick Markers**: 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

* **Grid**: A light grey grid is present, aligning with both X and Y-axis major tick markers.

* **Legend**: Located in the bottom-right quadrant of the plot area. It lists five data series with their corresponding colors and descriptions:

  * **Dark Blue line with light blue shading**: `M_P = GPT-4 (no repair)`
  * **Teal/Light Green line with lighter teal/green shading**: `M_P = GPT-4; M_F = GPT-4`
  * **Grey line with light grey shading**: `M_P = GPT-3.5 (no repair)`
  * **Brown/Orange line with light orange shading**: `M_P = GPT-3.5; M_F = GPT-3.5`
  * **Light Blue line with very light blue shading**: `M_P = GPT-3.5; M_F = GPT-4`

### Detailed Analysis

All five data series exhibit a general trend of increasing "Mean pass rate" as the "Mean number of tokens generated" increases, eventually flattening out. The shaded areas around each line represent confidence intervals.

1. **Dark Blue line: `M_P = GPT-4 (no repair)`**

   * **Trend**: This line starts at a relatively high pass rate and increases steeply, then gradually flattens.
   * **Data Points (approximate)**:
     * At X=0, Y is approximately 0.39.
     * At X=1000, Y is approximately 0.58.
     * At X=2000, Y is approximately 0.62.
     * At X=4000, Y is approximately 0.64.
     * At X=6000, Y is approximately 0.65.
     * The line extends to approximately X=8000, where Y is around 0.66.
   * The confidence interval is relatively narrow.

2. **Teal/Light Green line: `M_P = GPT-4; M_F = GPT-4`**

   * **Trend**: This line starts similarly to the dark blue line but quickly surpasses it, showing a slightly higher pass rate, and then flattens.
   * **Data Points (approximate)**:
     * At X=0, Y is approximately 0.40.
     * At X=1000, Y is approximately 0.60.
     * At X=2000, Y is approximately 0.65.
     * At X=4000, Y is approximately 0.69.
     * At X=6000, Y is approximately 0.70.
     * The line extends to approximately X=6800, where Y is around 0.70.
   * The confidence interval is relatively narrow.

3. **Grey line: `M_P = GPT-3.5 (no repair)`**

   * **Trend**: This line starts at a lower pass rate than the GPT-4 lines and increases more slowly, eventually flattening at a significantly lower pass rate.
   * **Data Points (approximate)**:
     * At X=0, Y is approximately 0.20.
     * At X=1000, Y is approximately 0.33.
     * At X=2000, Y is approximately 0.39.
     * At X=4000, Y is approximately 0.45.
     * At X=6000, Y is approximately 0.48.
     * At X=8000, Y is approximately 0.50.
     * The line extends to approximately X=9500, where Y is around 0.51.
   * The confidence interval is moderately wide.

4. **Brown/Orange line: `M_P = GPT-3.5; M_F = GPT-3.5`**

   * **Trend**: This line closely follows the grey line, starting slightly below it and remaining very close, indicating minimal improvement from self-repair.
   * **Data Points (approximate)**:
     * At X=0, Y is approximately 0.18.
     * At X=1000, Y is approximately 0.30.
     * At X=2000, Y is approximately 0.37.
     * At X=4000, Y is approximately 0.44.
     * At X=6000, Y is approximately 0.47.
     * At X=8000, Y is approximately 0.49.
     * The line extends to approximately X=9800, where Y is around 0.51.
   * The confidence interval is moderately wide.

5. **Light Blue line: `M_P = GPT-3.5; M_F = GPT-4`**

   * **Trend**: This line starts at a similar low pass rate as the other GPT-3.5 lines but shows a more significant increase, eventually flattening at a higher pass rate than the other GPT-3.5 configurations.
   * **Data Points (approximate)**:
     * At X=0, Y is approximately 0.22.
     * At X=1000, Y is approximately 0.37.
     * At X=2000, Y is approximately 0.44.
     * At X=4000, Y is approximately 0.50.
     * At X=6000, Y is approximately 0.54.
     * At X=8000, Y is approximately 0.56.
     * The line extends to approximately X=10000, where Y is around 0.57.
   * The confidence interval is moderately wide.

### Key Observations

* **GPT-4 Superiority**: Both GPT-4 configurations (dark blue and teal/light green lines) consistently achieve substantially higher mean pass rates than all GPT-3.5 configurations across the entire range of tokens generated.

* **Impact of GPT-4 Repair**: When `M_P = GPT-4`, using `M_F = GPT-4` (teal/light green line) results in a slightly higher mean pass rate than `(no repair)` (dark blue line), particularly at higher token counts. The peak pass rate for `M_P = GPT-4; M_F = GPT-4` is around 0.70, while `M_P = GPT-4 (no repair)` peaks around 0.66.

* **Impact of Repair on GPT-3.5**:

  * `M_P = GPT-3.5 (no repair)` (grey line) and `M_P = GPT-3.5; M_F = GPT-3.5` (brown/orange line) perform very similarly, suggesting that using GPT-3.5 itself for repair (`M_F = GPT-3.5`) provides negligible improvement over no repair. Both peak around a 0.50-0.51 pass rate.
  * However, when `M_P = GPT-3.5` is paired with `M_F = GPT-4` (light blue line), the mean pass rate improves noticeably. This configuration reaches approximately 0.57 at 10000 tokens, a clear increase over the ~0.51 achieved by the other GPT-3.5 configurations.

* **Diminishing Returns**: All lines show a rapid increase in pass rate at lower token counts, followed by a flattening trend, indicating diminishing returns in pass rate improvement beyond a certain number of generated tokens (roughly 4000-6000 tokens for GPT-4, and 6000-8000 for GPT-3.5).

* **Confidence Intervals**: The confidence intervals (shaded areas) are relatively narrow for the GPT-4 models, suggesting higher consistency, and slightly wider for the GPT-3.5 models, indicating more variability.
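The repair effects described above can be quantified from the approximate readings in the Detailed Analysis. This sketch subtracts each no-repair baseline from the matching repaired curve at the token counts where both curves have a reading. Because the values are eyeballed from the chart, differences of 0.01-0.02 are within reading error. The dictionary layout and the `repair_gain` name are hypothetical.

```python
# Approximate readings (tokens -> mean pass rate) from the description above.
BASELINES = {
    "GPT-4": {0: 0.39, 1000: 0.58, 2000: 0.62, 4000: 0.64, 6000: 0.65, 8000: 0.66},
    "GPT-3.5": {0: 0.20, 1000: 0.33, 2000: 0.39, 4000: 0.45, 6000: 0.48, 8000: 0.50},
}
# Repaired configurations, keyed by (M_P, M_F).
REPAIRED = {
    ("GPT-4", "GPT-4"): {0: 0.40, 1000: 0.60, 2000: 0.65, 4000: 0.69, 6000: 0.70},
    ("GPT-3.5", "GPT-3.5"): {0: 0.18, 1000: 0.30, 2000: 0.37, 4000: 0.44, 6000: 0.47, 8000: 0.49},
    ("GPT-3.5", "GPT-4"): {0: 0.22, 1000: 0.37, 2000: 0.44, 4000: 0.50, 6000: 0.54, 8000: 0.56},
}


def repair_gain(m_p, m_f):
    """Repaired minus no-repair pass rate at every token count both curves share."""
    base, repaired = BASELINES[m_p], REPAIRED[(m_p, m_f)]
    shared = sorted(base.keys() & repaired.keys())
    return {x: round(repaired[x] - base[x], 2) for x in shared}


if __name__ == "__main__":
    for m_p, m_f in REPAIRED:
        print(f"M_P={m_p}, M_F={m_f}: {repair_gain(m_p, m_f)}")
```

With these readings, GPT-3.5 self-repair comes out 0.01-0.03 below its baseline at every shared point, while GPT-4 as the repair model adds 0.02-0.06 to GPT-3.5.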

### Interpretation

This chart strongly suggests that GPT-4 is a more capable model than GPT-3.5 for the task being measured (implied by "pass rate"). The baseline performance of GPT-4 without any repair mechanism (`M_P = GPT-4 (no repair)`) is already superior to all GPT-3.5 configurations, even those with repair.

The "repair" mechanism (`M_F`) appears to be most effective when a more powerful model (GPT-4) is used for the repair process. Specifically, using GPT-4 to repair outputs from GPT-3.5 (`M_P = GPT-3.5; M_F = GPT-4`) significantly boosts GPT-3.5's performance, bringing its pass rate closer to, though still below, the baseline GPT-4 performance. This suggests that a strong "fixer" model can partly compensate for the limitations of a weaker primary model.

Conversely, using a weaker model (GPT-3.5) to repair its own outputs (`M_P = GPT-3.5; M_F = GPT-3.5`) offers almost no benefit over no repair at all. This suggests that a primary model that is not capable enough to begin with also struggles to correct its own errors.

The flattening of all curves indicates that there's a practical limit to the "Mean pass rate" achievable, and simply generating more tokens beyond a certain point does not yield substantial improvements. This could be due to inherent limitations of the models, the task itself, or the evaluation metric. The point of diminishing returns for GPT-4 is reached earlier (fewer tokens) than for GPT-3.5, suggesting GPT-4 is more efficient in achieving its peak performance.
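One way to make the "point of diminishing returns" concrete is to find the smallest token count at which a curve reaches a fixed fraction of its last reading. The 95% threshold used below is an assumption chosen for illustration; the chart does not define one. With the approximate readings above, it lands at 4000 tokens for both GPT-4 lines and at 8000 tokens for all three GPT-3.5 lines. This is in line with the ranges given under Key Observations.

```python
# Approximate (tokens, mean pass rate) readings from the description above,
# including the last point where each line ends.
READINGS = {
    "M_P=GPT-4 (no repair)": [
        (0, 0.39), (1000, 0.58), (2000, 0.62), (4000, 0.64), (6000, 0.65), (8000, 0.66)],
    "M_P=GPT-4; M_F=GPT-4": [
        (0, 0.40), (1000, 0.60), (2000, 0.65), (4000, 0.69), (6000, 0.70), (6800, 0.70)],
    "M_P=GPT-3.5 (no repair)": [
        (0, 0.20), (1000, 0.33), (2000, 0.39), (4000, 0.45), (6000, 0.48),
        (8000, 0.50), (9500, 0.51)],
    "M_P=GPT-3.5; M_F=GPT-3.5": [
        (0, 0.18), (1000, 0.30), (2000, 0.37), (4000, 0.44), (6000, 0.47),
        (8000, 0.49), (9800, 0.51)],
    "M_P=GPT-3.5; M_F=GPT-4": [
        (0, 0.22), (1000, 0.37), (2000, 0.44), (4000, 0.50), (6000, 0.54),
        (8000, 0.56), (10000, 0.57)],
}


def saturation_point(points, fraction=0.95):
    """Smallest token count whose pass rate reaches `fraction` of the last reading."""
    target = fraction * points[-1][1]
    # The last reading always meets its own target, so next() always finds a match.
    return next(x for x, y in points if y >= target)


if __name__ == "__main__":
    for name, points in READINGS.items():
        print(f"{name}: reaches 95% of its final value by ~{saturation_point(points)} tokens")
```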

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Line Chart: Pass Rate vs. Tokens Generated for Different Models

### Overview

This line chart depicts the relationship between the mean number of tokens generated and the mean pass rate for several configurations of GPT models (GPT-4 and GPT-3.5), with and without a "repair" step. *M<sub>P</sub>* appears to denote the primary model and *M<sub>F</sub>* the model used for repair. The chart shows how the performance (pass rate) of each configuration changes as more text is generated.

### Components/Axes

* **X-axis:** "Mean number of tokens generated". Scale ranges from 0 to 10000, with tick marks at 0, 2000, 4000, 6000, 8000, and 10000.

* **Y-axis:** "Mean pass rate". Scale ranges from 0.0 to 1.0, with tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **Legend:** Located in the top-right corner of the chart. Contains the following entries:

  * Black line: *M<sub>P</sub>* = GPT-4 (no repair)
  * Light blue line: *M<sub>P</sub>* = GPT-4; *M<sub>F</sub>* = GPT-4
  * Gray line: *M<sub>P</sub>* = GPT-3.5 (no repair)
  * Orange line: *M<sub>P</sub>* = GPT-3.5; *M<sub>F</sub>* = GPT-3.5
  * Teal line: *M<sub>P</sub>* = GPT-3.5; *M<sub>F</sub>* = GPT-4

### Detailed Analysis

The chart displays five distinct lines, each representing a different model configuration.

* **GPT-4 (no repair):** (Black line) Starts at approximately 0.15 pass rate at 0 tokens, and increases rapidly to approximately 0.6 pass rate at 2000 tokens. The line then plateaus, reaching approximately 0.63 pass rate at 10000 tokens.

* **GPT-4; GPT-4:** (Light blue line) Starts at approximately 0.2 pass rate at 0 tokens, and increases steadily to approximately 0.58 pass rate at 2000 tokens. It continues to increase, reaching approximately 0.6 pass rate at 10000 tokens.

* **GPT-3.5 (no repair):** (Gray line) Starts at approximately 0.1 pass rate at 0 tokens, and increases to approximately 0.45 pass rate at 2000 tokens. The line then plateaus, reaching approximately 0.48 pass rate at 10000 tokens.

* **GPT-3.5; GPT-3.5:** (Orange line) Starts at approximately 0.1 pass rate at 0 tokens, and increases to approximately 0.5 pass rate at 2000 tokens. It continues to increase, reaching approximately 0.53 pass rate at 10000 tokens.

* **GPT-3.5; GPT-4:** (Teal line) Starts at approximately 0.15 pass rate at 0 tokens, and increases to approximately 0.5 pass rate at 2000 tokens. It continues to increase, reaching approximately 0.55 pass rate at 10000 tokens.

All lines exhibit an initial steep increase in pass rate as the number of tokens generated increases, followed by a flattening of the curve as the number of tokens grows larger.

### Key Observations

* GPT-4 consistently outperforms GPT-3.5 across all configurations, as indicated by its higher pass rates.

* The "no repair" configurations for both GPT-4 and GPT-3.5 have lower initial pass rates compared to configurations with a repair step.

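* For GPT-3.5, the repair step (*M<sub>F</sub>*) appears to improve the pass rate (about 0.5 versus 0.45 at 2000 tokens). For GPT-4, the repaired line is higher only at 0 tokens and ends slightly below the no-repair line (about 0.6 versus 0.63 at 10000 tokens).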

* Using GPT-4 for the repair step (*M<sub>F</sub>* = GPT-4) with GPT-3.5 as the initial model (*M<sub>P</sub>* = GPT-3.5) results in a pass rate that is higher than using GPT-3.5 for both steps.
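Ranking the configurations at a fixed token budget is a quick way to check the observations above against the readings in Detailed Analysis. This minimal sketch uses those approximate values. The labels are abbreviated, and the helper name is hypothetical. At 10000 tokens, it ranks GPT-4 without repair first and GPT-3.5 without repair last.

```python
# Approximate (tokens -> mean pass rate) readings from the description above.
READINGS = {
    "M_P=GPT-4 (no repair)": {0: 0.15, 2000: 0.60, 10000: 0.63},
    "M_P=GPT-4; M_F=GPT-4": {0: 0.20, 2000: 0.58, 10000: 0.60},
    "M_P=GPT-3.5 (no repair)": {0: 0.10, 2000: 0.45, 10000: 0.48},
    "M_P=GPT-3.5; M_F=GPT-3.5": {0: 0.10, 2000: 0.50, 10000: 0.53},
    "M_P=GPT-3.5; M_F=GPT-4": {0: 0.15, 2000: 0.50, 10000: 0.55},
}


def ranking_at(tokens):
    """Configuration names ordered from highest to lowest pass rate at `tokens`."""
    return sorted(READINGS, key=lambda name: READINGS[name][tokens], reverse=True)


if __name__ == "__main__":
    for tokens in (0, 2000, 10000):
        print(tokens, ranking_at(tokens))
```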

### Interpretation

The data suggests that GPT-4 is a more capable model than GPT-3.5 at generating text that "passes" a certain criterion (the nature of which is not specified in the chart). The "repair" step, represented by *M<sub>F</sub>*, helps GPT-3.5 in these readings (about 0.53 with self-repair and 0.55 with GPT-4 repair, versus 0.48 without repair at 10000 tokens). For GPT-4, by contrast, the repaired line ends slightly below the no-repair line (0.6 versus 0.63). Pairing GPT-3.5 for initial generation with GPT-4 for repair gives the best GPT-3.5 result, which suggests that combining models can help, although this configuration still trails GPT-4 without repair. The flattening of the curves at higher token counts indicates that there is a limit to the improvement that can be achieved by simply generating more text. The initial steep increase suggests that most of the attainable gain comes from the first few thousand tokens of generation budget, and further gains become harder as each configuration approaches its ceiling. The *M<sub>P</sub>* and *M<sub>F</sub>* notation likely refers to a two-stage process where *M<sub>P</sub>* is the primary model generating the text, and *M<sub>F</sub>* is a secondary model used to "repair" or refine the output.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Line Chart: Mean Pass Rate vs. Mean Number of Tokens Generated

### Overview

The image is a line chart comparing the performance of different Large Language Model (LLM) configurations. It plots the "Mean pass rate" (y-axis) against the "Mean number of tokens generated" (x-axis). The chart shows five distinct data series, each representing a different model setup, with shaded regions likely indicating confidence intervals or standard deviation around the mean.

### Components/Axes

* **Chart Type:** Line chart with shaded error bands.

* **X-Axis:**

* **Label:** "Mean number of tokens generated"

* **Scale:** Linear, ranging from 0 to 10,000.

* **Major Tick Marks:** 0, 2000, 4000, 6000, 8000, 10000.

* **Y-Axis:**

* **Label:** "Mean pass rate"

* **Scale:** Linear, ranging from 0.0 to 1.0.

* **Major Tick Marks:** 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

* **Legend:** Located in the bottom-right quadrant of the chart area. It contains five entries, each with a colored line sample and a text label.

1. **Dark Blue Line:** `M_P = GPT-4 (no repair)`

2. **Teal/Green Line:** `M_P = GPT-4; M_F = GPT-4`

3. **Gray Line:** `M_P = GPT-3.5 (no repair)`

4. **Orange/Red Line:** `M_P = GPT-3.5; M_F = GPT-3.5`

5. **Light Blue Line:** `M_P = GPT-3.5; M_F = GPT-4`

* **Notation:** `M_P` likely denotes the primary model, and `M_F` denotes a secondary model used for "repair" or refinement.

### Detailed Analysis

**Trend Verification & Data Point Extraction:**

All five lines exhibit a similar concave growth trend, roughly logarithmic in shape: a steep initial increase in pass rate as token count rises from 0, followed by a gradual plateau. The lines are ordered vertically, indicating clear performance tiers.

1. **`M_P = GPT-4; M_F = GPT-4` (Teal/Green Line):**

   * **Trend:** Highest-performing series. Slopes upward sharply and maintains the highest position throughout.
   * **Approximate Values:** Starts near 0.4 at ~0 tokens. Reaches ~0.6 at 2000 tokens, ~0.68 at 4000 tokens, and plateaus near 0.71 by 6000-7000 tokens (the line ends before 8000).

2. **`M_P = GPT-4 (no repair)` (Dark Blue Line):**

   * **Trend:** Second-highest series. Follows a path very close to but consistently below the teal line.
   * **Approximate Values:** Starts near 0.4. Reaches ~0.6 at 2000 tokens, ~0.64 at 4000 tokens, and plateaus near 0.66 by 8000 tokens.

3. **`M_P = GPT-3.5; M_F = GPT-4` (Light Blue Line):**

   * **Trend:** Middle series. Shows a more gradual ascent compared to the GPT-4 lines.
   * **Approximate Values:** Starts near 0.2. Reaches ~0.4 at 2000 tokens, ~0.48 at 4000 tokens, ~0.54 at 6000 tokens, and ~0.57 at 10000 tokens.

4. **`M_P = GPT-3.5; M_F = GPT-3.5` (Orange/Red Line):**

   * **Trend:** Fourth series, closely tracking the light blue line but slightly below it.
   * **Approximate Values:** Starts near 0.2. Reaches ~0.38 at 2000 tokens, ~0.45 at 4000 tokens, ~0.50 at 6000 tokens, and ~0.53 at 10000 tokens.

5. **`M_P = GPT-3.5 (no repair)` (Gray Line):**

   * **Trend:** Lowest-performing series. Follows a path very close to but consistently below the orange line.
   * **Approximate Values:** Starts near 0.2. Reaches ~0.37 at 2000 tokens, ~0.44 at 4000 tokens, ~0.49 at 6000 tokens, and ~0.51 at 10000 tokens.

### Key Observations

1. **Clear Performance Hierarchy:** There is a large and consistent gap between the two GPT-4 based models (top two lines) and the three GPT-3.5 based models (bottom three lines). At equivalent token counts, the GPT-4 models achieve a mean pass rate roughly 0.15-0.25 points higher than their GPT-3.5 counterparts.

2. **Impact of Repair (`M_F`):** For both GPT-4 and GPT-3.5, adding a repair model (`M_F`) improves performance over the "no repair" baseline. The teal line (GPT-4 repair) is above the dark blue line (GPT-4 no repair). The light blue and orange lines (GPT-3.5 with repair) are above the gray line (GPT-3.5 no repair).

3. **Cross-Model Repair Benefit:** The most notable observation is that using GPT-4 as a repair model for GPT-3.5 (`M_P = GPT-3.5; M_F = GPT-4`, light blue line) yields better performance than using GPT-3.5 to repair itself (orange line). This suggests a stronger repair model can elevate the performance of a weaker primary model.

4. **Diminishing Returns:** All curves show strong diminishing returns. The majority of the pass rate gain occurs within the first 2000-4000 tokens generated. Beyond 6000 tokens, the curves flatten considerably.
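The size of the gap between the GPT-4 and GPT-3.5 tiers can be bounded from the approximate values listed under Detailed Analysis. The sketch below computes two quantities at a given token count. The smallest gap is the worst GPT-4 line minus the best GPT-3.5 line, and the largest gap is the best GPT-4 line minus the worst GPT-3.5 line. Only 2000 and 4000 tokens have readings for all five lines. The names are illustrative.

```python
# Approximate readings (tokens -> mean pass rate) from the description above.
GPT4_LINES = {
    "M_P=GPT-4; M_F=GPT-4": {0: 0.40, 2000: 0.60, 4000: 0.68},
    "M_P=GPT-4 (no repair)": {0: 0.40, 2000: 0.60, 4000: 0.64, 8000: 0.66},
}
GPT35_LINES = {
    "M_P=GPT-3.5; M_F=GPT-4": {0: 0.20, 2000: 0.40, 4000: 0.48, 6000: 0.54, 10000: 0.57},
    "M_P=GPT-3.5; M_F=GPT-3.5": {0: 0.20, 2000: 0.38, 4000: 0.45, 6000: 0.50, 10000: 0.53},
    "M_P=GPT-3.5 (no repair)": {0: 0.20, 2000: 0.37, 4000: 0.44, 6000: 0.49, 10000: 0.51},
}


def tier_gap(upper, lower, tokens):
    """(smallest, largest) pass-rate gap between two groups of lines at `tokens`."""
    upper_vals = [line[tokens] for line in upper.values()]
    lower_vals = [line[tokens] for line in lower.values()]
    smallest = min(upper_vals) - max(lower_vals)
    largest = max(upper_vals) - min(lower_vals)
    return round(smallest, 2), round(largest, 2)


if __name__ == "__main__":
    for tokens in (2000, 4000):
        print(tokens, tier_gap(GPT4_LINES, GPT35_LINES, tokens))
```

With these readings, the gap runs from 0.20-0.23 at 2000 tokens to 0.16-0.24 at 4000 tokens.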

### Interpretation

This chart demonstrates the relationship between computational effort (measured in generated tokens) and task success rate (pass rate) for different LLM agent configurations. The data suggests several key insights:

* **Model Capability is Primary:** The base capability of the primary model (`M_P`) is the strongest determinant of performance. GPT-4 fundamentally outperforms GPT-3.5, regardless of the repair strategy.

* **Repair Mechanisms are Effective:** Implementing a repair or refinement step (`M_F`) consistently improves outcomes. This supports the architectural pattern of using a secondary model to critique and improve the output of a primary model.

* **Asymmetric Repair is Powerful:** The benefit is not symmetric. A more capable model (GPT-4) serves as a more effective repair agent for a less capable model (GPT-3.5) than the less capable model does for itself. This implies that in a multi-model system, assigning the most capable model to the "critic" or "repair" role can be a highly efficient strategy.

* **Efficiency Plateau:** There is a clear point of diminishing returns. Investing tokens beyond approximately 6000 yields minimal improvement in pass rate for these tasks. This has practical implications for cost and latency optimization in deployed systems, suggesting that capping token generation could be beneficial without significant performance loss.

The chart effectively argues for the value of both using advanced base models and implementing multi-model repair architectures, with a particular emphasis on the strategic advantage of using a strong model in the repair role.
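The suggestion to cap token generation can be explored by estimating the pass rate at an arbitrary budget from the listed readings. This sketch assumes straight-line interpolation between the approximate values for `M_P = GPT-3.5; M_F = GPT-4`, since the true curve between readings is not known. It then compares a 6000-token cap with the full 10000 tokens. The function and variable names are hypothetical.

```python
from bisect import bisect_right

# Approximate readings for M_P = GPT-3.5; M_F = GPT-4 from the description above.
LIGHT_BLUE = [(0, 0.20), (2000, 0.40), (4000, 0.48), (6000, 0.54), (10000, 0.57)]


def pass_rate_at(points, tokens):
    """Estimate the pass rate at `tokens` by linear interpolation between readings.

    `points` must be sorted by token count; budgets outside the readings are rejected.
    """
    xs = [x for x, _ in points]
    if not xs[0] <= tokens <= xs[-1]:
        raise ValueError("token budget lies outside the recorded readings")
    i = bisect_right(xs, tokens)
    if i == len(xs):  # exactly at the last reading
        return points[-1][1]
    (x0, y0), (x1, y1) = points[i - 1], points[i]
    return y0 + (y1 - y0) * (tokens - x0) / (x1 - x0)


if __name__ == "__main__":
    cap, full = 6000, 10000
    kept = pass_rate_at(LIGHT_BLUE, cap) / pass_rate_at(LIGHT_BLUE, full)
    print(f"A {cap}-token cap keeps {kept:.1%} of the pass rate reached at {full} tokens")
```

With these readings, a 6000-token cap keeps about 95% of the pass rate reached at 10000 tokens (0.54 versus 0.57). A real cost decision would also need the confidence bands, which this sketch ignores.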

EXPERT: jina-vlm VERSION 1

RUNTIME: jina-vlm

## Line Graph: Mean Pass Rate

### Overview

The line graph depicts the mean pass rate of different versions of GPT models as a function of the mean number of tokens generated. The graph compares the performance of GPT-4, GPT-3.5, and their repaired versions.

### Components/Axes

- **X-axis**: Mean number of tokens generated

- **Y-axis**: Mean pass rate

- **Legend**: Differentiates between GPT-4 (no repair), GPT-4 (repaired), GPT-3.5 (no repair), and GPT-3.5 (repaired)

### Detailed Analysis

- **GPT-4 (no repair)**: The line is the lowest, indicating the lowest mean pass rate across all token counts.

- **GPT-4 (repaired)**: The line is the highest, showing the highest mean pass rate.

- **GPT-3.5 (no repair)**: The line is slightly above GPT-4 (no repair), indicating a higher pass rate than GPT-4 (no repair) but a lower one than GPT-4 (repaired).

- **GPT-3.5 (repaired)**: The line is the second highest, showing a significant improvement in pass rate compared to GPT-3.5 (no repair).

### Key Observations

- The repaired versions of GPT-4 and GPT-3.5 show a significant improvement in mean pass rate.

- The difference in pass rate between the repaired and non-repaired versions is most pronounced at higher token counts.

- There is a general trend of increasing pass rate with the increase in token count for all models.

### Interpretation

The data suggests that repairing the output of GPT models substantially improves their mean pass rate. This is particularly noticeable in the repaired versions of GPT-4 and GPT-3.5, which outperform their non-repaired counterparts across all token counts. The improvement is most pronounced at higher token counts, suggesting that repair benefits more from a larger generation budget. The upward trend with token count indicates that the models produce more passing responses as more tokens are generated.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Line Chart: Mean Pass Rate vs. Mean Number of Tokens Generated

### Overview

The chart illustrates the relationship between the mean number of tokens generated and the mean pass rate for different configurations of GPT models. Five distinct data series are plotted, each representing a unique combination of primary (M_P) and repair (M_F) models. The y-axis represents the mean pass rate (0.0–1.0), while the x-axis shows the mean number of tokens generated (0–10,000).

### Components/Axes

- **X-axis**: "Mean number of tokens generated" (0–10,000, linear scale).

- **Y-axis**: "Mean pass rate" (0.0–1.0, linear scale).

- **Legend**: Located in the bottom-right corner, with five entries:

  1. **Dark blue**: M_P = GPT-4 (no repair)
  2. **Teal**: M_P = GPT-4; M_F = GPT-4
  3. **Gray**: M_P = GPT-3.5 (no repair)
  4. **Orange**: M_P = GPT-3.5; M_F = GPT-3.5
  5. **Light blue**: M_P = GPT-3.5; M_F = GPT-4

### Detailed Analysis

1. **Dark blue (M_P = GPT-4, no repair)**:

   - Starts at ~0.4 at 2,000 tokens.
   - Rises sharply to ~0.65 at 6,000 tokens.
   - Plateaus at ~0.65 beyond 6,000 tokens.
   - **Trend**: Steady increase followed by stabilization.

2. **Teal (M_P = GPT-4; M_F = GPT-4)**:

   - Begins at ~0.5 at 2,000 tokens.
   - Peaks at ~0.7 at 6,000 tokens.
   - Drops to ~0.55 at 8,000 tokens.
   - **Trend**: Initial improvement, followed by a decline.

3. **Gray (M_P = GPT-3.5, no repair)**:

   - Starts at ~0.3 at 2,000 tokens.
   - Gradually increases to ~0.55 at 8,000 tokens.
   - **Trend**: Slow, linear growth.

4. **Orange (M_P = GPT-3.5; M_F = GPT-3.5)**:

   - Begins at ~0.3 at 2,000 tokens.
   - Rises to ~0.5 at 8,000 tokens.
   - **Trend**: Moderate, linear increase.

5. **Light blue (M_P = GPT-3.5; M_F = GPT-4)**:

   - Starts at ~0.35 at 2,000 tokens.
   - Reaches ~0.55 at 10,000 tokens.
   - **Trend**: Steady, linear improvement.

### Key Observations

- **GPT-4 superiority**: Configurations using GPT-4 (dark blue, teal) consistently outperform GPT-3.5 variants.

- **Repair mechanism impact**:

  - For GPT-4, the repair model (M_F = GPT-4) initially improves performance, but the teal line is then reported to decline after 6,000 tokens.
  - For GPT-3.5, pairing with GPT-4 as M_F (light blue) boosts pass rates compared to GPT-3.5 repairing its own output (orange line).

- **Threshold effects**: The teal line's reported drop after 6,000 tokens suggests diminishing or even negative returns when both models are GPT-4.
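The teal line's decline is the most unusual claim in this description, so it is worth flagging any reading that falls as the token count increases. This minimal sketch scans the approximate readings listed under Detailed Analysis for drops between consecutive points. The names are illustrative. Because eyeballed readings carry some error, the check takes an optional tolerance below which small dips are ignored.

```python
# Approximate (tokens, mean pass rate) readings from the description above.
READINGS = {
    "Dark blue (GPT-4, no repair)": [(2000, 0.40), (6000, 0.65)],
    "Teal (GPT-4; GPT-4)": [(2000, 0.50), (6000, 0.70), (8000, 0.55)],
    "Gray (GPT-3.5, no repair)": [(2000, 0.30), (8000, 0.55)],
    "Orange (GPT-3.5; GPT-3.5)": [(2000, 0.30), (8000, 0.50)],
    "Light blue (GPT-3.5; GPT-4)": [(2000, 0.35), (10000, 0.55)],
}


def find_drops(points, tolerance=0.0):
    """Return (from_tokens, to_tokens, drop) for each consecutive pair of readings
    whose pass rate falls by more than `tolerance`."""
    return [
        (x0, x1, round(y0 - y1, 2))
        for (x0, y0), (x1, y1) in zip(points, points[1:])
        if y0 - y1 > tolerance
    ]


if __name__ == "__main__":
    for name, points in READINGS.items():
        print(name, find_drops(points) or "no drop")
```

With these readings, only the teal line is flagged, with a 0.15 drop between 6,000 and 8,000 tokens.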

### Interpretation

The data demonstrates that GPT-4 models achieve higher pass rates across all token ranges. However, the repair mechanism’s effectiveness depends on the primary model:

- **GPT-4**: Using GPT-4 as both M_P and M_F yields peak performance, but the teal line's decline suggests that more repair is not always better.

- **GPT-3.5**: Pairing with GPT-4 as M_F (light blue) gives the best GPT-3.5 result, highlighting the value of hybrid configurations. The gray and orange lines show that GPT-3.5 on its own, with or without self-repair, underperforms GPT-4.

Notably, the teal line’s post-6,000-token drop warrants further investigation—it may indicate a flaw in the repair process when both models are identical high-capacity systems. This suggests that repair mechanisms might need to be tailored to the primary model’s capabilities to avoid unintended consequences.
