## Diagram: LLM Response Consistency vs. Factual Accuracy Flowchart

### Overview

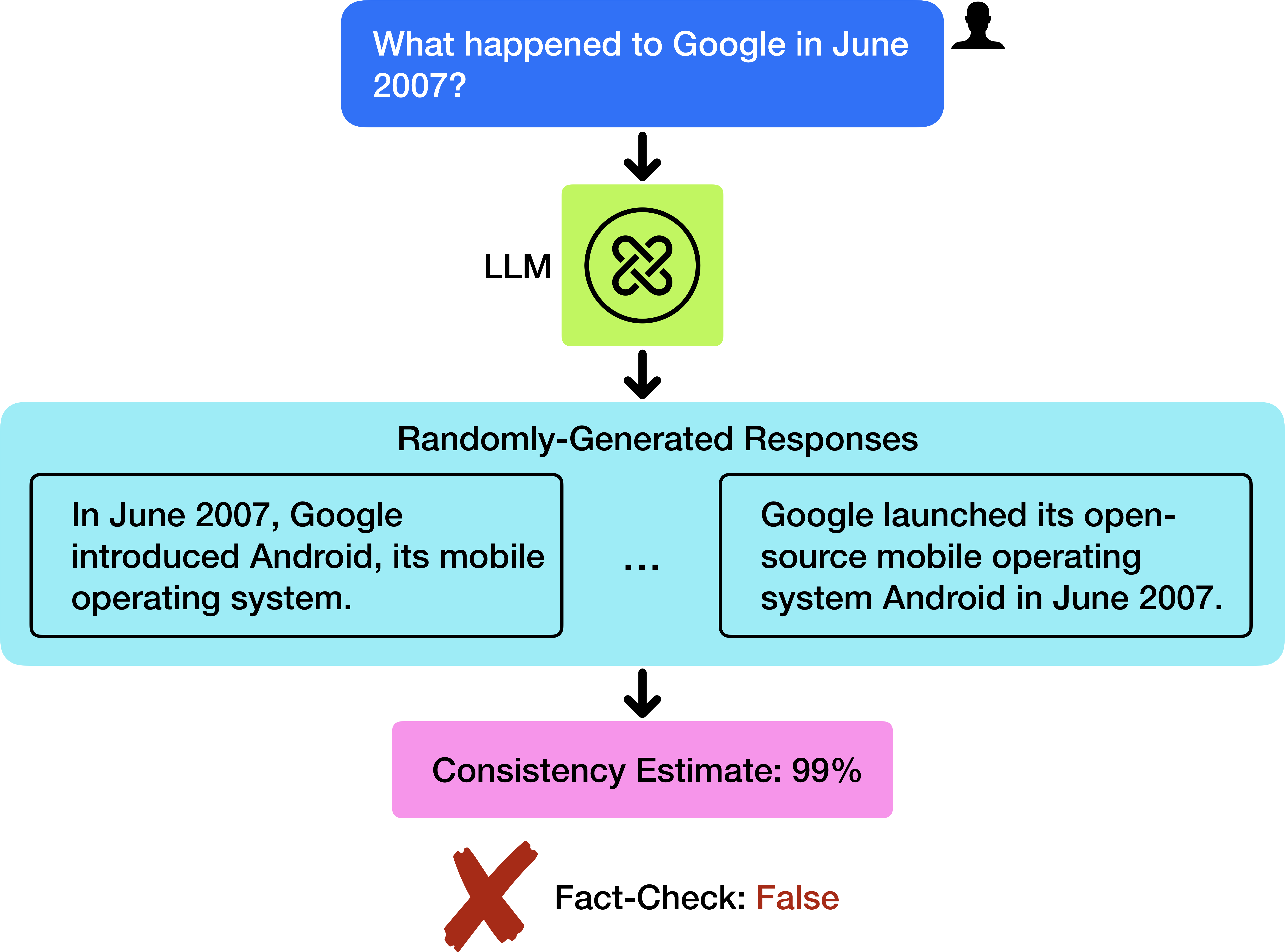

The image is a vertical flowchart illustrating a process where a Large Language Model (LLM) generates multiple responses to a factual query. The diagram demonstrates that while the LLM's responses can be highly consistent with each other, they may still be factually incorrect. The flow moves from top to bottom.

### Components/Axes

The diagram consists of five primary components connected by downward-pointing black arrows, indicating the flow of the process.

1. **User Query (Top, Center):** A blue, rounded rectangle containing the text: "What happened to Google in June 2007?". A black silhouette icon of a person is positioned to its top-right.

2. **LLM Processing (Upper Middle, Center):** A light green square containing a black circular icon with an infinity-like symbol. The text "LLM" is placed to the left of this square.

3. **Randomly-Generated Responses (Middle, Center):** A large, light blue rectangle with the title "Randomly-Generated Responses" at its top. Inside this container are two example response boxes and an ellipsis:

    * **Left Response Box:** A black-bordered rectangle containing the text: "In June 2007, Google introduced Android, its mobile operating system."

    * **Ellipsis:** Three black dots ("...") centered between the two response boxes, indicating additional generated responses.

    * **Right Response Box:** A black-bordered rectangle containing the text: "Google launched its open-source mobile operating system Android in June 2007."

4. **Consistency Estimate (Lower Middle, Center):** A pink, rounded rectangle containing the text: "Consistency Estimate: 99%".

5. **Fact-Check Result (Bottom, Center):** A large, red "X" mark (✗) followed by the text: "Fact-Check: False". The word "False" is in red font.

### Detailed Analysis

* **Flow Direction:** The process is strictly linear and top-down: User Query → LLM → Randomly-Generated Responses → Consistency Estimate → Fact-Check Result.

* **Textual Content Transcription:**

    * User Query: "What happened to Google in June 2007?"

    * LLM Label: "LLM"

    * Response Container Title: "Randomly-Generated Responses"

    * Example Response 1: "In June 2007, Google introduced Android, its mobile operating system."

    * Example Response 2: "Google launched its open-source mobile operating system Android in June 2007."

    * Consistency Metric: "Consistency Estimate: 99%"

    * Final Verdict: "Fact-Check: False"

* **Visual Relationships:** The two example responses are semantically very similar: both state that Google launched or introduced Android in June 2007. This semantic similarity is what the subsequent "99%" consistency estimate reflects. The final "False" verdict directly contradicts the claim made in both responses.
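The diagram does not say how the consistency estimate is computed. As a minimal sketch, assuming a simple token-level Jaccard overlap averaged over all response pairs (the function names `tokens`, `jaccard`, and `consistency_estimate` are illustrative, not taken from the diagram), agreement between sampled responses could be scored like this:

```python
from __future__ import annotations

import re
from itertools import combinations


def tokens(text: str) -> set[str]:
    """Lowercase alphanumeric tokens; a crude stand-in for a semantic representation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Jaccard overlap of the two token sets, in [0, 1]."""
    ta, tb = tokens(a), tokens(b)
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)


def consistency_estimate(responses: list[str]) -> float:
    """Mean pairwise overlap across all sampled responses."""
    pairs = list(combinations(responses, 2))
    if not pairs:
        return 1.0  # a single response trivially agrees with itself
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# The two example responses transcribed from the diagram.
responses = [
    "In June 2007, Google introduced Android, its mobile operating system.",
    "Google launched its open-source mobile operating system Android in June 2007.",
]
score = consistency_estimate(responses)
print(f"lexical consistency: {score:.2f}")
```

Lexical overlap understates agreement between paraphrases, so this sketch scores the two examples well below the 99% shown in the diagram; a measure based on meaning rather than shared words would presumably be needed to reach that figure. Either way, the score only measures agreement between responses and says nothing about whether the shared claim is true.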

### Key Observations

1. **High Consistency, Low Accuracy:** The core observation is the stark contrast between the very high internal consistency of the generated responses (99%) and their collective factual inaccuracy (False).

2. **Example Response Specificity:** Both example responses provide a specific, confident, and nearly identical answer to the user's question.

3. **Process Outcome:** The diagram's endpoint is a definitive factual judgment ("False"), which overrides the high consistency score.

### Interpretation

This diagram serves as a critical illustration of a key limitation in current LLM technology: the potential for **confident hallucination**. It demonstrates that an LLM can produce multiple outputs that are highly consistent with one another (suggesting internal agreement or reliability) yet be fundamentally wrong about the underlying facts.

The process flow shows that self-consistency checking (generating multiple samples and measuring how well they agree) is not a reliable proxy for factual accuracy. A high consistency estimate can create a false sense of security. The final "Fact-Check: False" step implies that an external verification mechanism, separate from the LLM's own generative process, is needed to validate the truthfulness of its outputs. The specific example (Google/Android in June 2007) may have been chosen because the Android timeline is easy to misremember: Google acquired Android Inc. in 2005, and Android was first publicly announced in November 2007, so the "June 2007" claim is incorrect.
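The separation between agreement and truth can be made concrete with a small sketch. It assumes a hypothetical `Assessment` record, a hypothetical `REFERENCE` lookup standing in for the external verifier, and an arbitrary 0.9 threshold; none of these appear in the diagram.

```python
from dataclasses import dataclass


@dataclass
class Assessment:
    consistency: float  # agreement among sampled responses, in [0, 1]
    fact_check: bool    # outcome of an external check, independent of the LLM

    def verdict(self, threshold: float = 0.9) -> str:
        """Combine both signals; the threshold is an arbitrary illustrative choice."""
        consistent = self.consistency >= threshold
        if not self.fact_check:
            # High agreement does not rescue a claim that fails verification.
            return "confident hallucination" if consistent else "inconsistent and false"
        return "consistent and verified" if consistent else "verified but unstable"


# Hypothetical reference record: Android's public announcement, November 2007.
REFERENCE = {"android_announced": (2007, 11)}
claimed = (2007, 6)  # the date asserted by both example responses

result = Assessment(
    consistency=0.99,
    fact_check=(claimed == REFERENCE["android_announced"]),
)
print(result.verdict())
```

The point of the design is that `fact_check` is computed from a source outside the model, so no value of `consistency` can change a failed check into a pass.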