## System Architecture Diagram: Autonomous Agent with LLM-Based Decision-Making

### Overview

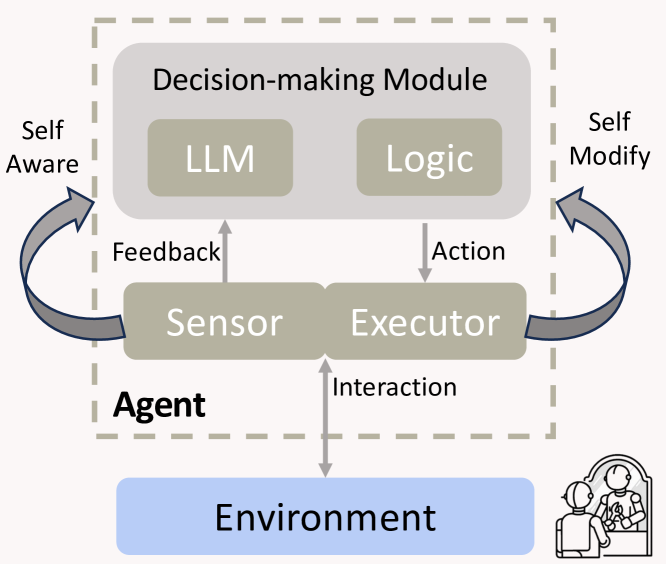

The image is a technical diagram illustrating the architecture of an autonomous "Agent" system. It depicts the agent's internal components, its decision-making process, and its interaction with an external "Environment." The diagram uses labeled boxes and directional arrows, with a dashed boundary marking the scope of the agent.

### Components

The diagram is structured into two primary regions:

1. **Agent (Dashed Box):** The core system, enclosed by a dashed gray rectangle.

2. **Environment (External):** A separate entity outside the agent's boundary.

**Agent Internal Components:**

* **Decision-making Module:** A large, light-gray rounded rectangle at the top center of the agent box.

  * Contains two sub-components: **LLM** (left) and **Logic** (right), both in smaller, darker gray boxes.

* **Sensor & Executor:** A combined, darker gray rounded rectangle positioned below the Decision-making Module.

  * The left half is labeled **Sensor**.

  * The right half is labeled **Executor**.

**External Component:**

* **Environment:** A light blue rounded rectangle at the bottom of the diagram, outside the agent's dashed boundary. It contains a small line-art icon depicting two human figures, one possibly a child, suggesting a social or human context.

**Arrows and Flow Labels:**

* **Feedback:** A gray arrow pointing upward from the **Sensor** to the **Decision-making Module**.

* **Action:** A gray arrow pointing downward from the **Decision-making Module** to the **Executor**.

* **Interaction:** A double-headed, blue-gray arrow connecting the **Executor** and the **Environment**, indicating bidirectional communication.

* **Self Aware:** A curved, gray arrow originating from the **Sensor** area, looping outside the left side of the agent box, and pointing back into the **Decision-making Module**.

* **Self Modify:** A curved, gray arrow originating from the **Executor** area, looping outside the right side of the agent box, and pointing back into the **Decision-making Module**.
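To make the wiring explicit, the arrows listed above can be written down as a labeled edge list. This is a minimal Python sketch; the `(source, label, target)` encoding and the helper names `incoming` and `outgoing` are illustrative assumptions, not part of the diagram. The double-headed **Interaction** arrow is represented as two directed edges.

```python
# Arrows from the diagram, encoded as (source, label, target) triples.
# Node names mirror the diagram labels; the encoding itself is illustrative.
ARROWS = [
    ("Sensor", "Feedback", "Decision-making Module"),
    ("Decision-making Module", "Action", "Executor"),
    ("Executor", "Interaction", "Environment"),
    ("Environment", "Interaction", "Executor"),  # Interaction is double-headed
    ("Sensor", "Self Aware", "Decision-making Module"),
    ("Executor", "Self Modify", "Decision-making Module"),
]


def incoming(node: str) -> list[str]:
    """Return the labels of all arrows that point into `node`."""
    return [label for src, label, dst in ARROWS if dst == node]


def outgoing(node: str) -> list[str]:
    """Return the labels of all arrows that leave `node`."""
    return [label for src, label, dst in ARROWS if src == node]


if __name__ == "__main__":
    print(incoming("Decision-making Module"))
    # ['Feedback', 'Self Aware', 'Self Modify']
```

Querying the edges shows that three arrows converge on the Decision-making Module, which is why it acts as the hub of both the primary loop and the two meta-cognitive loops.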

### Detailed Analysis

The diagram defines a closed-loop control system for an intelligent agent.

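1. **Perception:** The **Sensor** gathers information from the **Environment**. The diagram attaches the **Interaction** arrow to the Executor side of the fused Sensor & Executor block, so perception presumably shares this bidirectional channel.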

2. **Processing:** Sensor data is sent as **Feedback** to the **Decision-making Module**. This module processes the input using a combination of an **LLM** (Large Language Model, for generative or reasoning tasks) and **Logic** (for structured, rule-based processing).

3. **Action:** The Decision-making Module outputs an **Action** command to the **Executor**.

4. **Execution:** The **Executor** carries out the action, which affects the **Environment** through the **Interaction** channel, completing the primary loop.

5. **Meta-Cognition:** Two secondary feedback loops exist:

   * The **Self Aware** loop suggests the agent monitors its own sensor state or internal processes.

   * The **Self Modify** loop indicates the agent can adjust its own decision-making parameters or logic based on the outcomes of its actions.
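The five steps above can be sketched as a toy control loop. This is a minimal illustration under stated assumptions, not the system's actual implementation: `Environment`, `Agent`, and every method name are hypothetical, the LLM is replaced by a deterministic stub (`stub_llm`), and the Logic component is assumed to be a single clamping rule whose limit the Self Modify step can relax. Mapping each arrow to a method call is one interpretation of the diagram.

```python
from dataclasses import dataclass, field


@dataclass
class Environment:
    """Toy environment (hypothetical): one integer the agent tries to steer."""
    state: int = 0

    def interact(self, action: int) -> int:
        # Interaction: the action changes the environment, and the new
        # state is returned as the next observation (Feedback).
        self.state += action
        return self.state


def stub_llm(observation: int, target: int) -> int:
    """Stand-in for the LLM: proposes the full step needed to reach the target."""
    return target - observation


@dataclass
class Agent:
    target: int
    max_step: int = 2  # parameter of the Logic rule; Self Modify may raise it
    history: list = field(default_factory=list)  # Self Aware record

    def decide(self, observation: int) -> int:
        proposal = stub_llm(observation, self.target)             # LLM
        return max(-self.max_step, min(self.max_step, proposal))  # Logic: clamp

    def self_aware(self, observation: int, action: int) -> None:
        # Record the agent's own internal state alongside what it sensed.
        self.history.append((observation, action, self.max_step))

    def self_modify(self, observation: int) -> None:
        # If the last action left the agent far from its target, relax the rule.
        if abs(self.target - observation) > self.max_step:
            self.max_step += 1

    def run(self, env: Environment, steps: int) -> int:
        observation = env.state                   # 1. Perception
        for _ in range(steps):
            action = self.decide(observation)     # 2-3. Processing -> Action
            self.self_aware(observation, action)  # 5a. Self Aware
            observation = env.interact(action)    # 4. Execution, new Feedback
            self.self_modify(observation)         # 5b. Self Modify
        return observation


if __name__ == "__main__":
    agent, env = Agent(target=10), Environment()
    print(agent.run(env, steps=5))  # 10
    print(agent.history)
    # [(0, 2, 2), (2, 3, 3), (5, 4, 4), (9, 1, 4), (10, 0, 4)]
```

With `target=10`, the agent starts with steps capped at 2, raises the cap while it remains far from the target, and settles at 10. The `history` list plays the role of Self Aware, recording each observation together with the action taken and the rule limit in force at that moment.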

### Key Observations

* The **LLM** and **Logic** are presented as parallel, co-equal components within the decision module, suggesting a hybrid AI architecture that combines neural and symbolic approaches.

* The **Sensor** and **Executor** are fused into a single visual block, emphasizing their tight coupling as the agent's interface to the world.

* The **Environment** is explicitly shown to contain human elements (via the icon), framing this as a human-in-the-loop or socially situated agent.

* The **Self Aware** and **Self Modify** loops are architecturally significant, elevating the system from a simple reactive agent to one capable of introspection and adaptation.
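The diagram does not say how **LLM** and **Logic** divide the work. One common neural-symbolic pattern, assumed here purely for illustration, is propose-and-verify: the LLM suggests ranked candidate actions and explicit rules veto any that violate constraints. In this Python sketch, `stub_llm_candidates`, `RULES`, `decide`, and the action names are hypothetical placeholders for a real model call and a real rule set.

```python
from typing import Callable

Rule = Callable[[str], bool]


def stub_llm_candidates(request: str) -> list[tuple[str, float]]:
    """Hypothetical stand-in for the LLM: candidate actions with confidence scores.

    A real system would query a model with `request`; this stub ignores it.
    """
    return [
        ("delete_all_files", 0.9),
        ("summarize_files", 0.7),
        ("ask_user_for_clarification", 0.4),
    ]


# Logic: explicit, inspectable rules that every chosen action must satisfy.
RULES: list[Rule] = [
    lambda action: not action.startswith("delete"),  # forbid destructive actions
]


def decide(request: str, rules: list[Rule] = RULES) -> str:
    """LLM proposes, Logic verifies: return the best-scoring action passing all rules."""
    candidates = sorted(stub_llm_candidates(request), key=lambda c: c[1], reverse=True)
    for action, _score in candidates:
        if all(rule(action) for rule in rules):
            return action
    return "no_op"  # fall back when every candidate is rejected


if __name__ == "__main__":
    print(decide("tidy my folder"))  # summarize_files
```

Here the top-ranked candidate fails the no-delete rule, so the second one is chosen. Keeping the rules outside the model makes each constraint inspectable and testable independently of the LLM, which is one way to read the "co-equal" placement of the two boxes.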

### Interpretation

This diagram outlines a blueprint for a general-purpose autonomous agent. Its central idea is to use an LLM not as a standalone chatbot but as the reasoning engine within a larger cybernetic (closed-loop) system. Placing "Logic" alongside the LLM appears intended to offset a known limitation of purely neural models by adding structured, rule-based reasoning.

The architecture emphasizes **closed-loop control** and **adaptation**. The agent does not just act: it perceives the results of its actions (via Sensor and Feedback), monitors its own state (Self Aware), and can modify its decision-making rules (Self Modify). This suggests a system designed for long-term operation in dynamic, unpredictable environments.

The presence of humans in the "Environment" block is significant. It implies that the agent's purpose is to interact with, assist, or operate alongside people. Because the "Interaction" arrow is bidirectional, the exchange runs both ways: the agent affects its human environment, and human behavior in turn supplies much of the sensory input and context for the agent's decisions. This points toward human-AI collaboration or assistive robotics as the intended domain.