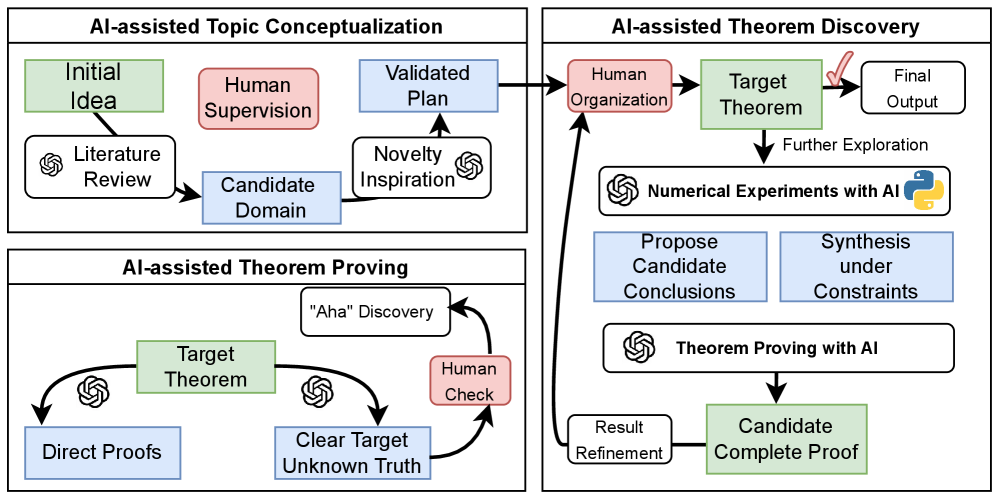

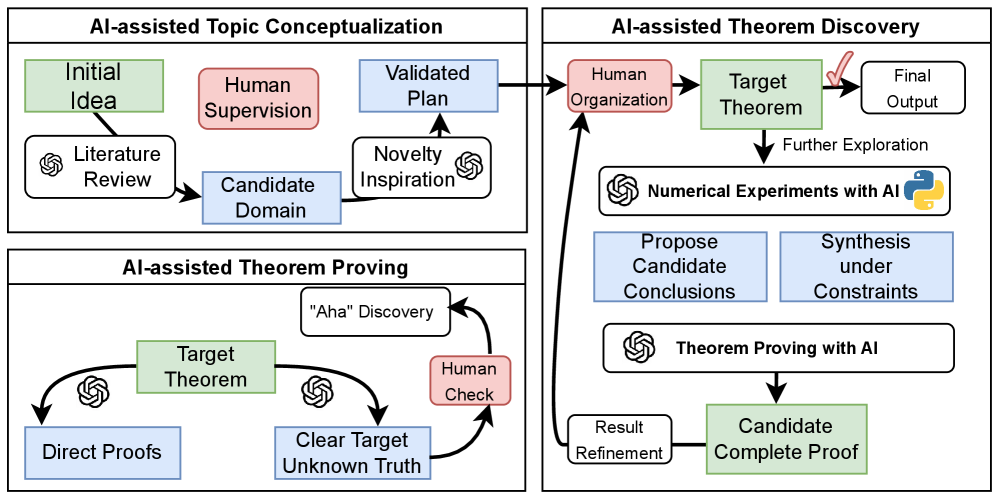

## Diagram: AI-Assisted Research Workflow

### Overview

The image is a technical flowchart illustrating a three-stage, human-in-the-loop workflow for AI-assisted mathematical or scientific research. The process is divided into three interconnected modules: **AI-assisted Topic Conceptualization**, **AI-assisted Theorem Proving**, and **AI-assisted Theorem Discovery**. The diagram uses color-coded boxes and directional arrows to show the flow of ideas, tasks, and feedback between human researchers and AI systems.

### Components

The diagram is structured into three main rectangular containers, each with a title.

**1. AI-assisted Topic Conceptualization (Top-Left Container)**

* **Components (in approximate flow order):**

    * `Initial Idea` (Green box, top-left)

    * `Literature Review` (White box with OpenAI logo, below Initial Idea)

    * `Candidate Domain` (Blue box, center)

    * `Human Supervision` (Red box, above Candidate Domain)

    * `Novelty Inspiration` (White box with OpenAI logo, right of Candidate Domain)

    * `Validated Plan` (Blue box, top-right)

* **Flow:** Arrows connect `Initial Idea` -> `Literature Review` -> `Candidate Domain`. `Human Supervision` and `Novelty Inspiration` both feed into `Candidate Domain`. An arrow leads from `Candidate Domain` to `Validated Plan`.
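The Conceptualization flow can be written down as a small directed graph. The sketch below is only an illustration: the edge list is transcribed from the description above, and the names `CONCEPTUALIZATION_EDGES` and `flow_order` are assumptions introduced here, not labels from the diagram. It uses Python's standard `graphlib` module to confirm that this module has no internal loop and to list the boxes in an order consistent with the arrows.

```python
from graphlib import TopologicalSorter

# Edges transcribed from the flow description (source box -> target box).
CONCEPTUALIZATION_EDGES = [
    ("Initial Idea", "Literature Review"),
    ("Literature Review", "Candidate Domain"),
    ("Human Supervision", "Candidate Domain"),
    ("Novelty Inspiration", "Candidate Domain"),
    ("Candidate Domain", "Validated Plan"),
]


def flow_order(edges):
    """Return the boxes in an order that respects every arrow.

    Raises graphlib.CycleError if the arrows form a loop.
    """
    sorter = TopologicalSorter()
    for source, target in edges:
        # add(node, *predecessors): the target depends on the source.
        sorter.add(target, source)
    return list(sorter.static_order())


order = flow_order(CONCEPTUALIZATION_EDGES)
print(order)
```

Because every other box feeds `Candidate Domain`, directly or indirectly, any valid ordering ends with `Candidate Domain` followed by `Validated Plan`.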

**2. AI-assisted Theorem Proving (Bottom-Left Container)**

* **Components:**

    * `Target Theorem` (Green box, center)

    * `Direct Proofs` (Blue box, bottom-left)

    * `Clear Target Unknown Truth` (Blue box, bottom-right)

    * `"Aha" Discovery` (White box, top-right)

    * `Human Check` (Red box, right side)

* **Flow:** Arrows flow from `Target Theorem` to both `Direct Proofs` and `Clear Target Unknown Truth`. Both of these boxes have OpenAI logos next to their incoming arrows. An arrow leads from `Clear Target Unknown Truth` to `Human Check`, which then points to `"Aha" Discovery`. Finally, an arrow loops from `"Aha" Discovery` back to `Target Theorem`.
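The feedback loop in this module can be recovered mechanically from the arrows. The following is a minimal sketch, assuming the arrows are exactly those listed in the flow description; `PROVING_EDGES` and `find_cycle` are hypothetical names used only for this illustration. It runs a depth-first search from `Target Theorem` and returns the first loop it meets.

```python
# Arrows of the Theorem Proving module as described above.
PROVING_EDGES = {
    "Target Theorem": ["Direct Proofs", "Clear Target Unknown Truth"],
    "Direct Proofs": [],
    "Clear Target Unknown Truth": ["Human Check"],
    "Human Check": ['"Aha" Discovery'],
    '"Aha" Discovery': ["Target Theorem"],
}


def find_cycle(graph, start):
    """Depth-first search; return the first loop reachable from start, or None."""
    path = []          # boxes on the current search path, in order
    on_path = set()    # same boxes, for fast membership tests
    finished = set()   # boxes whose successors were fully explored

    def visit(node):
        path.append(node)
        on_path.add(node)
        for nxt in graph.get(node, []):
            if nxt in on_path:
                # Arrow points back onto the current path: a loop.
                return path[path.index(nxt):] + [nxt]
            if nxt not in finished:
                found = visit(nxt)
                if found:
                    return found
        path.pop()
        on_path.discard(node)
        finished.add(node)
        return None

    return visit(start)


loop = find_cycle(PROVING_EDGES, "Target Theorem")
print(" -> ".join(loop))
```

The `Direct Proofs` branch is a dead end in this encoding, so only the path through `Human Check` and `"Aha" Discovery` closes the loop.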

**3. AI-assisted Theorem Discovery (Right Container)**

* **Components:**

    * `Human Organization` (Red box, top-left)

    * `Target Theorem` (Green box, top-center)

    * `Final Output` (White box, top-right)

    * `Numerical Experiments with AI` (White box with OpenAI and Python logos, center)

    * `Propose Candidate Conclusions` (Blue box, below experiments)

    * `Synthesis under Constraints` (Blue box, right of propose conclusions)

    * `Theorem Proving with AI` (White box with OpenAI logo, below synthesis)

    * `Result Refinement` (White box, bottom-left)

    * `Candidate Complete Proof` (Green box, bottom-right)

* **Flow:** The main flow starts from the `Validated Plan` (from the Conceptualization module) pointing to `Human Organization`. From there: `Human Organization` -> `Target Theorem` -> `Final Output`. A secondary, exploratory path branches from `Target Theorem` downwards: `Numerical Experiments with AI` -> `Propose Candidate Conclusions` & `Synthesis under Constraints` -> `Theorem Proving with AI` -> `Result Refinement` -> `Candidate Complete Proof`. An arrow from `Candidate Complete Proof` loops back up to `Human Organization`.

**Inter-Module Connections:**

* An arrow connects `Validated Plan` (Conceptualization) to `Human Organization` (Discovery).

* An arrow connects `Human Organization` (Discovery) to `Target Theorem` (Proving).
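To see how the two inter-module arrows order the modules, they can be collapsed into module-level handoffs. This is a rough sketch under the assumption that these two arrows are the only connections between containers; the module short names and the helpers `module_handoffs` and `module_chain` are hypothetical. Each box is qualified by its module because `Target Theorem` appears in two of them.

```python
# Inter-module arrows as (module, box) pairs: source -> target.
INTER_MODULE_EDGES = [
    (("Conceptualization", "Validated Plan"), ("Discovery", "Human Organization")),
    (("Discovery", "Human Organization"), ("Proving", "Target Theorem")),
]


def module_handoffs(edges):
    """Collapse box-level arrows into module-level handoffs."""
    handoffs = {}
    for (src_module, src_box), (dst_module, dst_box) in edges:
        label = f"{src_box} -> {dst_box}"
        handoffs.setdefault(src_module, []).append((dst_module, label))
    return handoffs


def module_chain(handoffs, start):
    """Follow the first handoff out of each module, starting at `start`."""
    chain = [start]
    while chain[-1] in handoffs:
        next_module = handoffs[chain[-1]][0][0]
        if next_module in chain:  # stop instead of looping forever
            break
        chain.append(next_module)
    return chain


handoffs = module_handoffs(INTER_MODULE_EDGES)
chain = module_chain(handoffs, "Conceptualization")
print(" -> ".join(chain))
```

Read this way, the arrows pass work from Conceptualization to Discovery and from Discovery to Proving.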

### Detailed Analysis

The diagram meticulously maps the roles of human and AI agents:

* **Human Roles (Red Boxes):** `Human Supervision`, `Human Check`, `Human Organization`. These represent oversight, validation, and high-level structuring.

* **AI Roles (White Boxes with Logos):** `Literature Review`, `Novelty Inspiration`, `Numerical Experiments with AI`, and `Theorem Proving with AI` are explicitly marked with the OpenAI logo, indicating AI execution. The `Numerical Experiments with AI` box also includes a Python logo, suggesting the use of Python-based computational tools.

* **Process States (Green Boxes):** Represent key milestones or objects of focus: `Initial Idea`, `Target Theorem` (appears in two modules), `Candidate Complete Proof`.

* **Process Outputs/Actions (Blue Boxes):** Represent derived domains, plans, proof types, or synthesis actions: `Candidate Domain`, `Validated Plan`, `Direct Proofs`, `Clear Target Unknown Truth`, `Propose Candidate Conclusions`, `Synthesis under Constraints`.
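The legend in the list above can be applied mechanically to every box. The sketch below is illustrative only: the `(color, has_logo)` pairs are transcribed from the component lists, while `BOXES`, `classify`, and the `"unmarked"` label (for white boxes that carry no logo) are assumptions introduced here. Logos drawn next to arrows rather than on a box are not counted as belonging to the box.

```python
from collections import defaultdict

# (color, has_logo) per box, transcribed from the component lists.
# `Target Theorem` appears in two modules with the same color, so one entry suffices.
BOXES = {
    "Initial Idea": ("green", False),
    "Literature Review": ("white", True),
    "Candidate Domain": ("blue", False),
    "Human Supervision": ("red", False),
    "Novelty Inspiration": ("white", True),
    "Validated Plan": ("blue", False),
    "Target Theorem": ("green", False),
    "Direct Proofs": ("blue", False),
    "Clear Target Unknown Truth": ("blue", False),
    '"Aha" Discovery': ("white", False),
    "Human Check": ("red", False),
    "Human Organization": ("red", False),
    "Final Output": ("white", False),
    "Numerical Experiments with AI": ("white", True),
    "Propose Candidate Conclusions": ("blue", False),
    "Synthesis under Constraints": ("blue", False),
    "Theorem Proving with AI": ("white", True),
    "Result Refinement": ("white", False),
    "Candidate Complete Proof": ("green", False),
}


def classify(color, has_logo):
    """Map a box's color and logo flag to the role named in the legend."""
    if color == "red":
        return "human role"
    if color == "white" and has_logo:
        return "AI role"
    if color == "green":
        return "process state"
    if color == "blue":
        return "process output/action"
    return "unmarked"  # hypothetical label: white box without a logo


groups = defaultdict(list)
for box, (color, has_logo) in BOXES.items():
    groups[classify(color, has_logo)].append(box)

for role, boxes in groups.items():
    print(f"{role}: {', '.join(boxes)}")
```

Under this encoding, three white boxes fall outside the four legend categories because they have no logo.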

The workflow is highly iterative, especially in the Theorem Proving module, which features a feedback loop from `"Aha" Discovery` back to the `Target Theorem`. The Theorem Discovery module also shows a refinement loop from `Candidate Complete Proof` back to `Human Organization`.

### Key Observations

1. **Central Role of the "Target Theorem":** This concept is the focal point of both the Proving and Discovery modules, indicating it is the core research object.

2. **Human as Organizer and Validator:** Humans are not shown doing the computational heavy lifting (literature review, proving, experimenting) but are crucial for supervision, checks, and organization.

3. **AI as Executor and Inspirer:** AI is tasked with concrete, often computational, sub-tasks and is also credited with providing "Novelty Inspiration" and facilitating `"Aha" Discovery` moments.

4. **Non-Linear Pathways:** The process is not a simple linear sequence. It includes parallel paths (e.g., `Direct Proofs` vs. `Clear Target Unknown Truth`), branching exploration (`Numerical Experiments`), and multiple feedback loops.

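5. **Color-Coded Semantics:** The use of color (Green=State, Blue=Action/Output, Red=Human, White with logo=AI Task) provides an immediate visual grammar for the type of each component. Three white boxes carry no logo (`"Aha" Discovery`, `Final Output`, `Result Refinement`), so white alone does not identify an AI task.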

### Interpretation

This diagram presents a conceptual model for a collaborative, AI-augmented research pipeline, likely in mathematics or formal sciences. It argues that breakthrough discovery (`"Aha" Discovery`, `Candidate Complete Proof`) emerges from a structured interplay where:

* **AI handles scalable, data-intensive, or formal tasks:** Sifting through literature, running numerical experiments, executing proof steps.

* **Humans provide direction, judgment, and synthesis:** Setting goals (`Human Organization`), validating intermediate results (`Human Check`), and supervising the overall domain (`Human Supervision`).

The separation into three modules suggests a phased approach: defining a promising research area (Conceptualization), working on specific proof techniques (Proving), and integrating everything into a novel discovery (Discovery). The inter-module arrows, however, run from Conceptualization into Discovery and from Discovery into Proving, so Proving reads as a sub-process that Discovery hands targets to rather than a stage that precedes it. The presence of loops underscores that research is an iterative, self-correcting process. The model emphasizes that AI does not replace the human researcher but acts as a tool that extends their capabilities, particularly in exploration and formal verification, while the human retains control over the creative and evaluative aspects.
