## Diagram: AI Critic Evaluation Process

### Overview

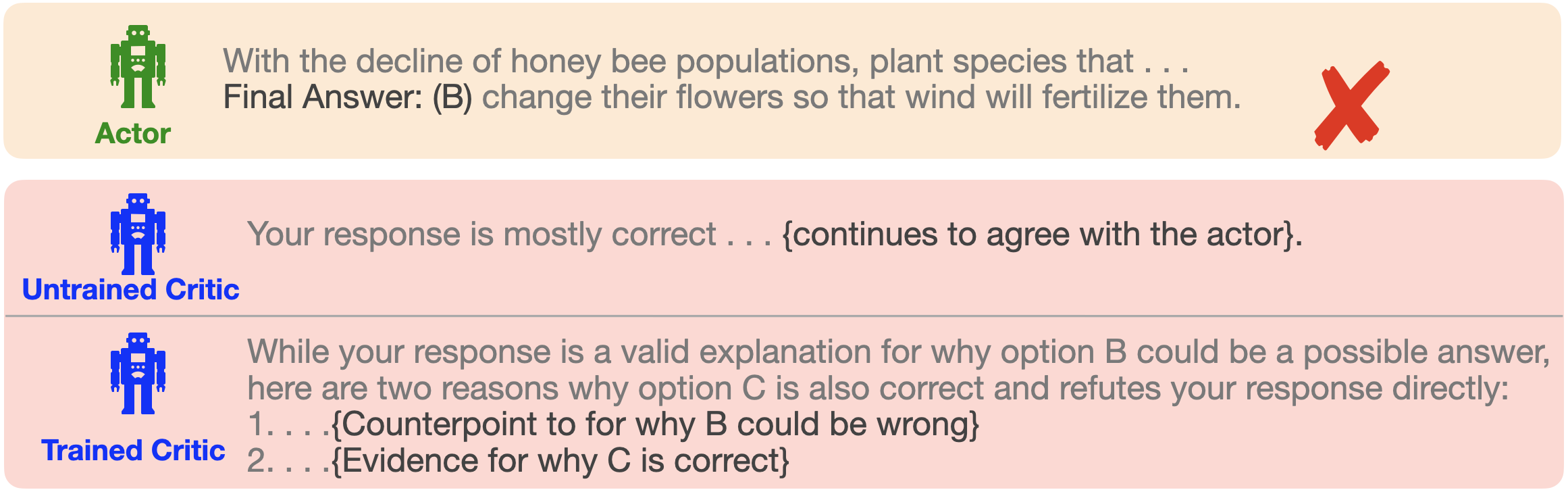

The image is a conceptual diagram with three panels. It shows an initial (incorrect) response from an "Actor" agent, followed by the feedback that response receives from two "Critic" agents: one "Untrained" and one "Trained." Color-coded robot icons and text panels contrast the quality of the two critiques.

### Components

The diagram is structured into three horizontal panels, stacked vertically.

1. **Top Panel (Actor):**

* **Icon:** A green, pixelated robot icon on the left.

* **Label:** The word "Actor" in green text below the icon.

* **Text Content:** A statement and a final answer.

* **Visual Marker:** A large red "X" on the far right, indicating the answer is incorrect.

2. **Middle Panel (Untrained Critic):**

* **Icon:** A blue, pixelated robot icon on the left.

* **Label:** The words "Untrained Critic" in blue text below the icon.

* **Text Content:** A feedback statement that agrees with the Actor.

3. **Bottom Panel (Trained Critic):**

* **Icon:** A blue, pixelated robot icon on the left (identical to the Untrained Critic's icon).

* **Label:** The words "Trained Critic" in blue text below the icon.

* **Text Content:** A structured feedback statement that refutes the Actor's answer with numbered counterpoints.

### Detailed Analysis / Content Details

**Panel 1: Actor**

* **Text Transcription:** "With the decline of honey bee populations, plant species that . . . Final Answer: (B) change their flowers so that wind will fertilize them."

* **Observation:** The elided text appears to be the stem of a multiple-choice question. The Actor selects option (B), and the red "X" marks that answer as wrong.

**Panel 2: Untrained Critic**

* **Text Transcription:** "Your response is mostly correct . . . {continues to agree with the actor}."

* **Observation:** The feedback is vague and supportive, failing to identify the error in the Actor's answer. The text in curly braces `{}` appears to be a placeholder or summary of the critic's continued agreement.

**Panel 3: Trained Critic**

* **Text Transcription:** "While your response is a valid explanation for why option B could be a possible answer, here are two reasons why option C is also correct and refutes your response directly: 1. . . .{Counterpoint to for why B could be wrong} 2. . . .{Evidence for why C is correct}"

* **Observation:** This feedback is specific and constructive. It acknowledges the Actor's reasoning but provides a direct refutation by advocating for a different answer (option C). It uses a numbered list to present counter-evidence. The text in curly braces `{}` contains placeholders for the specific logical points.

### Key Observations

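1. **Visual Hierarchy:** The panels read top to bottom: the Actor's answer comes first, followed by the Untrained Critic's feedback and then the Trained Critic's feedback. Both critiques address the Actor's response directly ("your response"), so the two lower panels read as alternative reactions to the same answer, placed one above the other for comparison.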

2. **Color Coding:** Green is associated with the Actor (the source of the initial, incorrect answer). Blue is associated with both Critic roles, suggesting they belong to the same category of "evaluator" agents, despite their differing capabilities.

3. **Icon Consistency:** The Untrained and Trained Critics share the same robot icon, emphasizing that the difference is in their training/knowledge, not their fundamental identity or role.

4. **Feedback Quality Contrast:** The core contrast is between the vague, agreeable feedback of the Untrained Critic and the specific, refuting feedback of the Trained Critic. The Trained Critic's response is longer and more structured.

5. **Placeholder Text:** The use of `{...}` indicates that the diagram is a template or schematic, illustrating the *form* of the interaction rather than a specific, complete example.

### Interpretation

This diagram is a conceptual illustration of why trained evaluation matters in AI systems. It shows a failure mode in which an initial agent (Actor) makes an error and a naive evaluator (Untrained Critic) fails to catch it, potentially reinforcing the mistake. The Trained Critic represents the intended behavior: an evaluator that identifies the error, supplies counter-evidence, and steers the Actor toward the answer it argues for (option C in this case).

The underlying message is that not all feedback is equal. Effective critique requires enough training or domain knowledge to move beyond surface-level agreement and engage in substantive refutation. The diagram could support an argument for investing in robust critic models, human-in-the-loop verification, or better training techniques for AI evaluators. The honey bee question is only a vehicle for this broader point about evaluation quality.
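The failure mode described above can be made concrete with a minimal Python sketch. Everything in it is an illustrative assumption rather than something the diagram specifies: the `ActorResponse` and `Critique` classes, their fields, and the `error_caught` helper are hypothetical names, and the critic "outputs" are hand-written stand-ins that mirror the three panels. The bracketed strings are the diagram's own placeholders, copied as transcribed.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ActorResponse:
    """The Actor's answer (hypothetical structure, not from the diagram)."""
    final_answer: str
    marked_correct: bool  # False corresponds to the red "X" in the top panel


@dataclass
class Critique:
    """One critic's feedback on the Actor's answer (hypothetical structure)."""
    critic: str
    agrees_with_actor: bool
    points: List[str] = field(default_factory=list)


def error_caught(response: ActorResponse, critique: Critique) -> bool:
    """Return True when the Actor was wrong and the critic pushed back."""
    return (not response.marked_correct) and (not critique.agrees_with_actor)


# Top panel: the Actor picks option (B), which is marked wrong.
actor = ActorResponse(final_answer="B", marked_correct=False)

# Middle panel: the Untrained Critic agrees with the Actor.
untrained = Critique(critic="Untrained Critic", agrees_with_actor=True)

# Bottom panel: the Trained Critic disagrees and lists two points.
# The strings are the diagram's placeholders, kept verbatim.
trained = Critique(
    critic="Trained Critic",
    agrees_with_actor=False,
    points=[
        "{Counterpoint to for why B could be wrong}",
        "{Evidence for why C is correct}",
    ],
)

for critique in (untrained, trained):
    print(f"{critique.critic}: error caught = {error_caught(actor, critique)}")
```

In this sketch only the Trained Critic's feedback counts as catching the error, which is the contrast the diagram draws. A real system would have to infer agreement or disagreement from the critic's free-text output, which this sketch does not attempt.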