## Diagram: Tic-Tac-Toe Game State Processing via SymDQN

### Overview

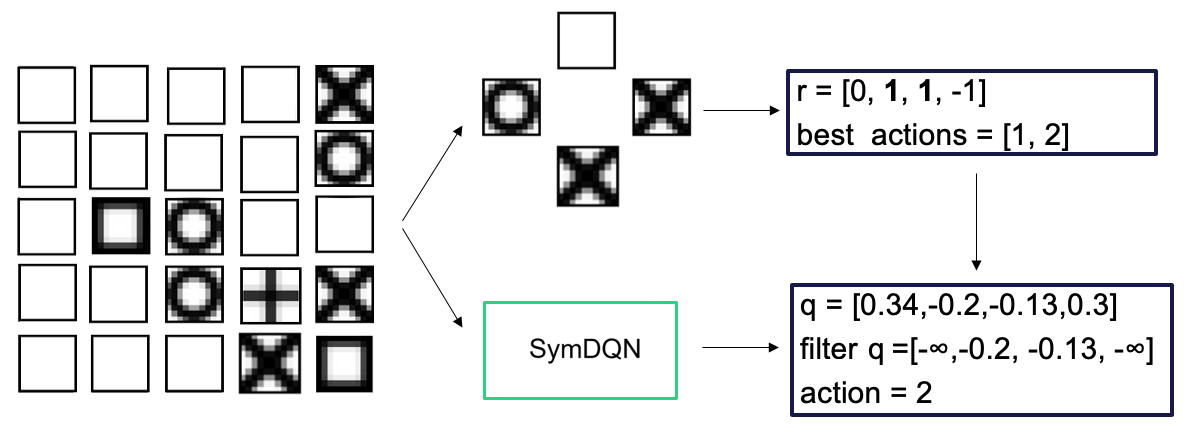

The image is a technical diagram illustrating a decision-making process for an AI agent (SymDQN) in a Tic-Tac-Toe game. It shows a game board state, a filtered or processed view of that state, and the subsequent numerical evaluation and action selection performed by the agent. The flow moves from left to right.

### Components/Axes

The diagram is composed of four components:

1. **Left Region (Game State):** A 5x5 grid representing a Tic-Tac-Toe board. The grid contains empty squares, squares marked with 'X', squares marked with 'O', and one square with a '+' symbol.

2. **Middle Region (Processed State):** A smaller 3x3 grid, which appears to be a cropped or focused view derived from the left grid. Arrows point from the left grid to this middle grid and to a green box labeled "SymDQN".

3. **Right Region (Evaluation & Output):** Two rectangular text boxes with dark blue borders, connected by a downward arrow.

    * **Top Box:** Contains the text `r = [0, 1, 1, -1]` and `best actions = [1, 2]`.

    * **Bottom Box:** Contains the text `q = [0.34,-0.2,-0.13,0.3]`, `filter q =[-∞,-0.2, -0.13, -∞]`, and `action = 2`.

4. **Agent Component:** A green-bordered box labeled "SymDQN" positioned below the middle grid. An arrow points from this box to the bottom evaluation box.

### Detailed Analysis

**1. Left Grid (5x5 Game State):**

* **Structure:** 5 rows by 5 columns.

* **Content (Row-wise from top):**

    * Row 1: Empty, Empty, Empty, Empty, **X**

    * Row 2: Empty, Empty, Empty, Empty, **O**

    * Row 3: Empty, **O** (with a thick border), **O**, Empty, Empty

    * Row 4: Empty, Empty, **O**, **+**, **X**

    * Row 5: Empty, Empty, Empty, **X**, **O** (with a thick border)

* **Symbols:** 'X' and 'O' are standard player marks. The '+' symbol in Row 4, Column 4 is unique and may indicate a special cell, a cursor, or a proposed move. Two 'O' symbols (R3C2, R5C5) have noticeably thicker borders.

**2. Middle Grid (3x3 Processed State):**

* **Structure:** 3 rows by 3 columns.

* **Content (Row-wise from top):**

    * Row 1: Empty, Empty, Empty

    * Row 2: **O**, Empty, **X**

    * Row 3: Empty, **X**, Empty

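* **Relationship to Left Grid:** No contiguous 3x3 block of the left grid matches this grid exactly. The closest is the block centered on the '+' cell (R4C4): its four orthogonal neighbors (above: empty, left: O, right: X, below: X) match the middle grid, but two of its corner cells (the O at R3C3 and the thick-bordered O at R5C5) appear empty in the middle grid. The middle grid is therefore most plausibly a local view of the cells orthogonally adjacent to '+', rather than a plain crop of the board.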
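
The relationship between the two grids can be checked mechanically. The sketch below transcribes both grids from the row-wise descriptions above (using `.` for an empty cell, a notation chosen here) and tests two things: whether any contiguous 3x3 block of the left grid equals the middle grid, and whether the four cells orthogonally adjacent to '+' agree with the middle grid. The helper names are illustrative and do not come from the diagram.

```python
# Grids transcribed from the row-wise descriptions; "." marks an empty cell.
LEFT = [
    "....X",
    "....O",
    ".OO..",
    "..O+X",
    "...XO",
]
MIDDLE = [
    "...",
    "O.X",
    ".X.",
]


def window(board, row, col):
    """Return the 3x3 block of `board` whose top-left corner is (row, col)."""
    return [line[col:col + 3] for line in board[row:row + 3]]


def contiguous_matches(board, target):
    """List the top-left corners of every 3x3 block equal to `target`."""
    size = len(board)
    return [
        (r, c)
        for r in range(size - 2)
        for c in range(size - 2)
        if window(board, r, c) == target
    ]


def orthogonal_neighbors(grid3):
    """Return the (up, left, right, down) cells around the center of a 3x3 grid."""
    return grid3[0][1], grid3[1][0], grid3[1][2], grid3[2][1]


def find_symbol(board, symbol):
    """Return the (row, col) of the first occurrence of `symbol`."""
    for r, line in enumerate(board):
        c = line.find(symbol)
        if c != -1:
            return r, c
    raise ValueError(f"{symbol!r} not found")


plus_row, plus_col = find_symbol(LEFT, "+")
around_plus = window(LEFT, plus_row - 1, plus_col - 1)

exact_matches = contiguous_matches(LEFT, MIDDLE)
neighbors_agree = orthogonal_neighbors(around_plus) == orthogonal_neighbors(MIDDLE)
```

With these transcriptions, no contiguous block matches, but the orthogonal neighbors of '+' do. That hints that the middle grid is a local view around '+' with the corner cells left out.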

**3. Right Text Boxes (Evaluation Data):**

* **Top Box (Reward/Best Actions):**

    * `r = [0, 1, 1, -1]`: A list of four numerical values, likely representing rewards or immediate outcomes for four possible actions.

    * `best actions = [1, 2]`: Identifies actions 1 and 2 (0-based indexing) as the best according to `r`. These are the positions of the two `1`s, the maximum value in the list.

* **Bottom Box (Q-Values & Final Action):**

    * `q = [0.34,-0.2,-0.13,0.3]`: A list of four Q-values, representing the estimated long-term utility of each of the four possible actions.

    * `filter q =[-∞,-0.2, -0.13, -∞]`: A filtered version of the Q-value list. Actions 0 and 3 are assigned `-∞` (negative infinity), which removes them from consideration. The surviving indices (1 and 2) are exactly the `best actions` from the top box, so the filter most plausibly keeps only the actions with the highest `r`. The diagram does not rule out other constraints, such as move legality.

    * `action = 2`: The final selected action, the index of the highest value in `filter q` (`-0.13` is greater than `-0.2` and `-∞`).
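
The arithmetic in the two boxes can be reproduced in a few lines. This is a minimal sketch, assuming the mask is derived from `best actions` (the diagram does not state how the mask is built, and a legality mask would be applied the same way). The function names are hypothetical and are not SymDQN's API.

```python
NEG_INF = float("-inf")


def best_actions(r):
    """Indices of the actions whose reward equals the maximum reward."""
    top = max(r)
    return [i for i, value in enumerate(r) if value == top]


def mask_q(q, keep):
    """Copy of `q` with every action outside `keep` set to negative infinity."""
    keep = set(keep)
    return [value if i in keep else NEG_INF for i, value in enumerate(q)]


def argmax(values):
    """Index of the largest value (the first one if there are ties)."""
    return max(range(len(values)), key=values.__getitem__)


r = [0, 1, 1, -1]
q = [0.34, -0.2, -0.13, 0.3]

candidates = best_actions(r)      # [1, 2]
filtered = mask_q(q, candidates)  # [-inf, -0.2, -0.13, -inf]
action = argmax(filtered)         # 2
```

Masking with negative infinity, rather than deleting entries, keeps the action indices aligned with the original `q` list, so the argmax result can be used directly as the action.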

**4. Flow and Logic:**

The diagram depicts a sequential process:

1. The agent observes the game state (Left Grid).

2. This state is processed into a relevant feature representation (Middle Grid).

3. Rewards (`r`) are estimated for the four actions, and the highest-reward actions are identified (`best actions`).

4. The SymDQN agent computes Q-values (`q`) for each action.

5. A filter is applied to the Q-values (`filter q`), masking every action outside `best actions` (or, on another reading, illegal moves).

6. The action with the highest filtered Q-value (`action = 2`) is selected as the final decision.
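
The six steps can be sketched end to end under some loudly stated assumptions. The action order (up, right, down, left) and the reward table (`X` = +1, `O` = -1, empty = 0) are hypothetical: they were chosen only because they reproduce the diagram's `r = [0, 1, 1, -1]` from the middle grid, and the diagram confirms neither. The Q-values are passed in as given, since the network itself is not described.

```python
NEG_INF = float("-inf")

# Hypothetical conventions, chosen only because they reproduce the diagram's numbers.
ACTION_OFFSETS = [(-1, 0), (0, 1), (1, 0), (0, -1)]  # up, right, down, left
REWARD_OF = {".": 0, "X": 1, "O": -1}

# Middle grid from the diagram; "." marks an empty cell.
LOCAL_VIEW = ["...", "O.X", ".X."]


def estimate_rewards(view):
    """Step 3: look up the assumed reward of the cell each action would reach."""
    return [REWARD_OF[view[1 + dr][1 + dc]] for dr, dc in ACTION_OFFSETS]


def select_action(view, q):
    """Steps 3 to 6: rewards, best actions, filtered Q-values, final action."""
    r = estimate_rewards(view)
    top = max(r)
    best = [i for i, value in enumerate(r) if value == top]
    filtered = [value if i in best else NEG_INF for i, value in enumerate(q)]
    action = max(range(len(filtered)), key=filtered.__getitem__)
    return {"r": r, "best_actions": best, "filter_q": filtered, "action": action}


trace = select_action(LOCAL_VIEW, q=[0.34, -0.2, -0.13, 0.3])
```

Under these assumptions `trace` holds the same `r`, `best actions`, `filter q`, and `action` values that appear in the two text boxes. This shows the reading is consistent with the diagram, not that it is the one the authors intended.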

### Key Observations

* The `best actions` from the reward list (`[1, 2]`) narrow the choice but do not settle it. The final choice (`action = 2`) comes from the filtered Q-values, where action 2 (`-0.13`) beats action 1 (`-0.2`).

* The filtering step is decisive: it masks actions 0 and 3, which had the highest (`0.34`) and second-highest (`0.3`) raw Q-values. Because the masked actions are exactly those outside `best actions` (their `r` values are `0` and `-1`), the mask appears to come from the reward signal. It could also reflect game rules, but the diagram gives no direct evidence of that.

* The diagram visually separates the immediate reward signal (`r`) from the learned value function (`q`), showing how both inform the final decision.
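
To make the effect of the filter concrete, the short sketch below compares the greedy choice on the raw Q-values with the choice on the filtered ones, using only the numbers printed in the diagram. The `ranking` helper is illustrative.

```python
NEG_INF = float("-inf")

q = [0.34, -0.2, -0.13, 0.3]
filter_q = [NEG_INF, -0.2, -0.13, NEG_INF]


def ranking(values):
    """Action indices sorted from highest to lowest value."""
    return sorted(range(len(values)), key=lambda i: values[i], reverse=True)


raw_order = ranking(q)               # action 0 ranks first on raw Q-values
filtered_order = ranking(filter_q)   # action 2 ranks first after filtering

# Actions removed by the filter.
masked = [i for i, value in enumerate(filter_q) if value == NEG_INF]

raw_choice = raw_order[0]
filtered_choice = filtered_order[0]
```

Here the two masked actions are also the two top-ranked actions by raw Q-value, so an unfiltered greedy policy would have picked action 0 instead of action 2.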

### Interpretation

This diagram illustrates the internal decision-making pipeline of a SymDQN agent playing Tic-Tac-Toe (the diagram shows only the name "SymDQN" and does not expand it). It demonstrates how the agent:

1. **Perceives** a complex board state.

2. **Processes** it into a compact 3x3 view. This may be a local or canonical form of the state; the diagram does not say whether it exploits the game's symmetries.

3. **Evaluates** moves using two parallel signals: immediate rewards (`r`) and long-term value estimates (`q`).

4. **Applies Constraints** via the `filter q` step, which restricts the choice to a subset of actions. Here that subset coincides with `best actions`; the same mechanism could also enforce game rules (e.g., you cannot place a mark on an occupied cell).

5. **Selects** the action with the highest filtered Q-value.

The key takeaway is the separation between raw evaluation and constrained decision-making. The action with the highest raw value (action 0, Q = 0.34) is masked out, so the agent selects the best remaining action (action 2). Since the surviving actions coincide with `best actions`, the constraint seems to come from the reward signal `r`, although a rule-based legality check would produce the same kind of mask. Either way, the diagram shows a learned value function being combined with an explicit constraint before the action is chosen. The processed state (middle grid) suggests that SymDQN acts on a compact view of the board rather than on the raw 5x5 grid; whether that view exploits spatial symmetries is not shown.