TECHNICAL ASSET FINGERPRINT

7de3f7560831613a49194e6f



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


## Composite Image: Attention Mechanisms, Rule Discovery, and Text Summarization

### Overview

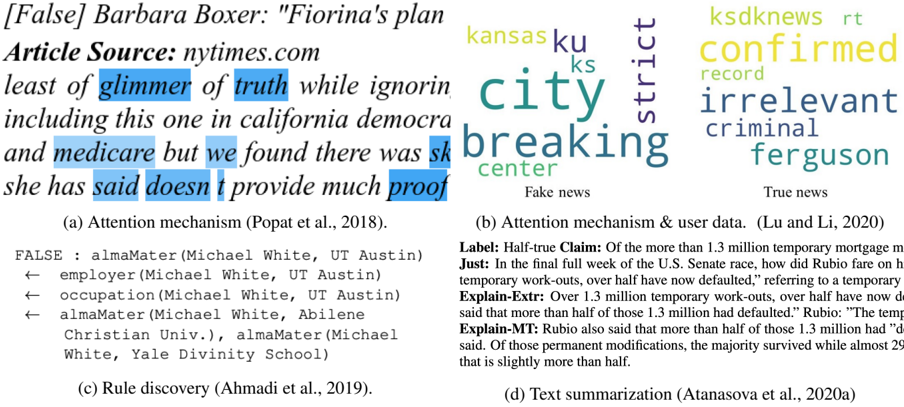

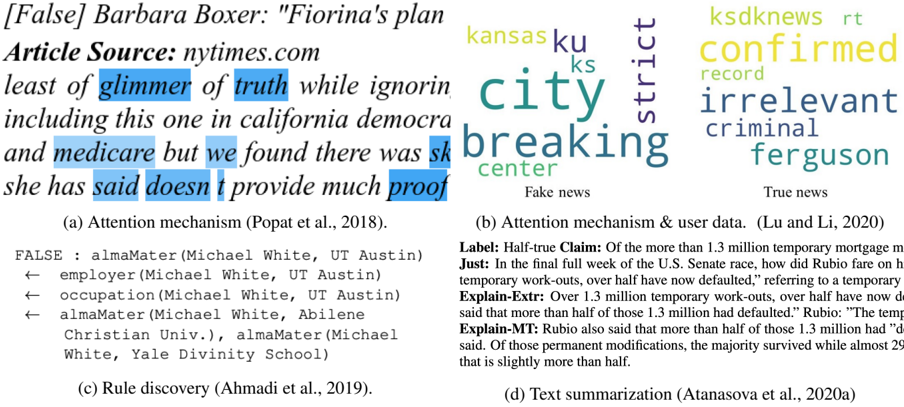

The image is a composite of four sub-images, labeled (a) through (d), each with a citation. Two sub-images illustrate attention mechanisms, one illustrates rule discovery, and one illustrates text summarization.

### Components/Axes

* **(a) Attention mechanism (Popat et al., 2018):** Displays the sentence "[False] Barbara Boxer: 'Fiorina's plan' Article Source: nytimes.com least of glimmer of truth while ignoring including this one in california democra and medicare but we found there was sk she has said doesn't provide much proof". Certain words are highlighted in blue, indicating the attention mechanism's focus.

* **(b) Attention mechanism & user data. (Lu and Li, 2020):** Two word clouds, one labeled "Fake news" and one labeled "True news". The "Fake news" words include: "kansas", "ku", "ks", "city", "district", "breaking", "center". The "True news" words include: "ksdknews", "rt", "confirmed", "record", "irrelevant", "criminal", "ferguson".

* **(c) Rule discovery (Ahmadi et al., 2019):** Lists a series of rules about Michael White and three institutions: UT Austin, Abilene Christian Univ., and Yale Divinity School. The rules are:

    * FALSE : almaMater (Michael White, UT Austin)

    * employer (Michael White, UT Austin)

    * occupation (Michael White, UT Austin)

    * almaMater (Michael White, Abilene Christian Univ.), almaMater (Michael White, Yale Divinity School)

* **(d) Text summarization (Atanasova et al., 2020a):** Presents text summarization examples related to a "Half-true Claim" about temporary mortgage modifications. The summaries are labeled as:

    * Label: Half-true Claim: Of the more than 1.3 million temporary mortgage modifications...

    * Just: In the final full week of the U.S. Senate race, how did Rubio fare on how many temporary work-outs, over half have now defaulted," referring to a temporary...

    * Explain-Extr: Over 1.3 million temporary work-outs, over half have now defaulted. Rubio said that more than half of those 1.3 million had defaulted." Rubio: "The temporary...

    * Explain-MT: Rubio also said that more than half of those 1.3 million had "defaulted," he said. Of those permanent modifications, the majority survived while almost 29% of those that is slightly more than half.

### Detailed Analysis or Content Details

* **Attention Mechanism (a):** The highlighted words in the sentence suggest that the attention mechanism is focusing on words related to truthfulness, political figures, and the source of the information.

* **Word Clouds (b):** The word clouds visually represent prominent terms associated with "Fake news" and "True news". The size of each word likely reflects its frequency or its importance to the model; the image does not state which.

* **Rule Discovery (c):** The rules extracted relate to the alma mater, employer, and occupation of Michael White, indicating a focus on biographical information.

* **Text Summarization (d):** The text summarization examples demonstrate different approaches to summarizing a claim about mortgage modifications, including extractive and abstractive methods.

### Key Observations

* The image showcases a variety of NLP techniques used for different tasks, including fact-checking, information extraction, and text summarization.

* The attention mechanism highlights the importance of specific words in a sentence for understanding its meaning.

* The word clouds provide a quick overview of the key terms associated with different categories of news.

* The rule discovery example demonstrates how structured knowledge can be extracted from text.

* The text summarization examples illustrate the challenges of condensing information while preserving its meaning.

### Interpretation

The composite image provides a glimpse into the diverse applications of natural language processing. It demonstrates how NLP techniques can be used to analyze text, extract information, and generate summaries. The examples highlight the importance of attention mechanisms, rule discovery, and text summarization in various NLP tasks. The image suggests that NLP is a powerful tool for understanding and processing large amounts of text data. The juxtaposition of "Fake news" and "True news" word clouds underscores the role of NLP in combating misinformation. The text summarization examples demonstrate the ongoing research in developing more effective and accurate summarization techniques.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Word Cloud Compilation: Fake vs. True News Analysis

### Overview

The image presents a compilation of four panels, each showing the output of a different natural language processing (NLP) technique applied to news articles or claims. Two panels are word clouds and two are text blocks. Each panel is accompanied by a brief description of the method used and a citation. The last text block details a "Label: Half-true Claim" and associated justifications.

### Components/Axes

The image is divided into four quadrants: two word clouds on top and two text blocks below.

* **Top-Left:** Word cloud labeled "(a) Attention mechanism (Popat et al., 2018)." Words are colored in shades of yellow and orange.

* **Top-Right:** Word cloud labeled "(b) Attention mechanism & user data. (Lu and Li, 2020)." Words are colored in shades of blue and purple.

* **Bottom-Left:** Text block labeled "(c) Rule discovery (Ahmadi et al., 2019)." This is not a word cloud, but a list of relationships.

* **Bottom-Right:** Text block labeled "(d) Text summarization (Atanasova et al., 2020a)." This contains a "Label: Half-true Claim" and supporting text.

Additionally, there is a header text: "[False] Barbara Boxer: “Fiorina’s plan” Article Source: nytimes.com".

### Detailed Analysis or Content Details

**Word Cloud (a): Attention mechanism (Popat et al., 2018)**

The most prominent words appear to be "least", "glimmer", "truth", "ignoring", "including", "california", "democrat", "medicare", "found", "doesn't", "proof". The size of the words suggests their frequency in the analyzed text.

**Word Cloud (b): Attention mechanism & user data. (Lu and Li, 2020)**

The most prominent words are "kansas", "ku", "ks", "city", "breaking", "center", "confirmed", "record", "irrelevant", "criminal", "ferguson".

**Text Block (c): Rule discovery (Ahmadi et al., 2019)**

This block presents a series of relationships, formatted as:

FALSE : almaMater (Michael White, UT Austin)

← employer (Michael White, UT Austin)

← occupation (Michael White, UT Austin)

← almaMater (Michael White, Abilene Christian Univ.), almaMater (Michael White, Yale Divinity School)

**Text Block (d): Text summarization (Atanasova et al., 2020a)**

* **Label:** Half-true Claim: Of the more than 1.3 million temporary mortgage

* **Just:** In the final full week of the U.S. Senate race, how did Rubio fare on h temporary work-outs, over half have now defaulted, referring to a temporary

* **Explain-Extr:** Over 1.3 million temporary work-outs, over half have now d said that more than half of 1.3 million had defaulted.” Rubio: “The temp

* **Explain-MT:** Rubio also said that more than half of those 1.3 million had “d said. Of those permanent modifications, the majority survived while almost 29 that is slightly more than half.

### Key Observations

* The word clouds visually represent the differing vocabulary used in articles categorized as "Fake news" versus "True news".

* The "Rule discovery" block (c) demonstrates an attempt to identify relationships between entities (Michael White, UT Austin, etc.) and classify them as "FALSE".

* The "Text summarization" block (d) provides a detailed breakdown of a claim labeled as "Half-true", including supporting context and explanations.

* The header text indicates the analysis is focused on a statement made by Barbara Boxer regarding Fiorina's plan, sourced from nytimes.com.

### Interpretation

The image illustrates a multi-faceted approach to identifying and analyzing potentially misleading information. The word clouds highlight the distinct linguistic characteristics of fake and true news, suggesting that NLP techniques can be used to differentiate between them. The rule discovery method attempts to establish factual inconsistencies, while the text summarization provides a nuanced assessment of a specific claim. The combination of these techniques suggests a comprehensive strategy for combating misinformation. The presence of citations indicates a research-oriented context. The focus on a specific political statement (Boxer vs. Fiorina) suggests a potential application of these techniques to political discourse analysis. The "Half-true" label in (d) is particularly interesting, as it demonstrates the complexity of truth assessment and the need for nuanced evaluation beyond simple binary classifications.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Composite Research Figure: Text Analysis & Fact-Checking Methods

### Overview

The image is a composite figure containing four distinct panels, labeled (a) through (d), each illustrating a different computational method or output related to text analysis, fact-checking, or information extraction. The panels are arranged in a 2x2 grid. The overall theme appears to be techniques for analyzing textual claims, news, or data.

### Components/Axes

The figure is divided into four rectangular panels:

* **Top-Left (a):** Labeled "(a) Attention mechanism (Popat et al., 2018)." Contains a text snippet with highlighted words.

* **Top-Right (b):** Labeled "(b) Attention mechanism & user data. (Lu and Li, 2020)." Contains a word cloud divided into "Fake news" and "True news."

* **Bottom-Left (c):** Labeled "(c) Rule discovery (Ahmadi et al., 2019)." Contains a structured list showing extracted relationships.

* **Bottom-Right (d):** Labeled "(d) Text summarization (Atanasova et al., 2020a)." Contains a structured text block with a label, claim, justification, and explanations.

### Detailed Analysis

#### Panel (a): Attention Mechanism

* **Content:** A text excerpt with specific words highlighted in blue.

* **Header Text:** `[False] Barbara Boxer: "Fiorina's plan`

* **Source Line:** `Article Source: nytimes.com`

* **Main Text Snippet:** `least of glimmer of truth while ignorin including this one in california democrac and medicare but we found there was sh she has said doesn't provide much proof`

* **Highlighted Words (in order of appearance):** `glimmer`, `truth`, `said`, `doesn't`, `proof`.

* **Visual Structure:** The highlights suggest an attention mechanism is focusing on these specific words within the larger, partially visible sentence. The text is cut off on the right and left margins.
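
The highlighting described above can be sketched in a few lines. This is a minimal illustration, not the method of Popat et al. (2018): the function name `highlight_tokens`, the `**` marker, and the attention weights are all hypothetical and chosen only so that the words reported as highlighted come out on top.

```python
def highlight_tokens(tokens, weights, top_k=5, marker="**"):
    """Wrap the top_k highest-weighted tokens in a marker string."""
    if len(tokens) != len(weights):
        raise ValueError("tokens and weights must have the same length")
    # Rank token positions by weight, largest first (ties keep text order).
    ranked = sorted(range(len(tokens)), key=lambda i: weights[i], reverse=True)
    chosen = set(ranked[:top_k])
    return " ".join(
        f"{marker}{tok}{marker}" if i in chosen else tok
        for i, tok in enumerate(tokens)
    )


tokens = "she has said doesn't provide much proof".split()
# Made-up attention weights, for illustration only.
weights = [0.05, 0.05, 0.25, 0.25, 0.05, 0.05, 0.30]
highlighted = highlight_tokens(tokens, weights, top_k=3)
print(highlighted)
```

In a real system the weights would come from the model's attention layer; here they are placeholders, so the output only demonstrates the mapping from weights to highlighted words.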

#### Panel (b): Attention Mechanism & User Data (Word Cloud)

* **Content:** A word cloud visualization split into two thematic clusters.

* **Left Cluster Label:** `Fake news` (text below the cluster).

* **Right Cluster Label:** `True news` (text below the cluster).

* **"Fake news" Cluster Words (approximate size from largest to smaller):**

* `city` (largest, teal)

* `breaking` (large, teal)

* `ku` (medium, olive green)

* `kansas` (medium, olive green)

* `ks` (small, olive green)

* `strict` (medium, dark purple, oriented vertically)

* `center` (small, teal)

* **"True news" Cluster Words (approximate size from largest to smaller):**

* `confirmed` (largest, yellow-green)

* `irrelevant` (large, dark blue)

* `criminal` (medium, dark blue)

* `ferguson` (medium, dark blue)

* `ksdknews` (small, yellow-green)

* `rt` (small, yellow-green)

* `record` (small, yellow-green)

* **Visual Structure:** Word size likely corresponds to frequency or importance in the respective corpus. The spatial separation and distinct color palettes (teal/olive/purple vs. yellow-green/dark blue) visually differentiate the two news categories.
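
If word size does track frequency, the scaling could look like the sketch below. It is an assumption-laden illustration: the helper `font_sizes`, the size range, and the word counts are invented, and Lu and Li (2020) may size words by attention weight rather than raw counts.

```python
from collections import Counter


def font_sizes(words, min_size=10, max_size=48):
    """Map each distinct word to a font size by linear scaling of its count."""
    counts = Counter(words)
    if not counts:
        return {}
    lo, hi = min(counts.values()), max(counts.values())
    span = hi - lo
    sizes = {}
    for word, n in counts.items():
        # If every word has the same count, give them all the maximum size.
        frac = (n - lo) / span if span else 1.0
        sizes[word] = round(min_size + frac * (max_size - min_size))
    return sizes


# Hypothetical counts, picked only to mirror the reported size ordering.
sample = ["city"] * 5 + ["breaking"] * 4 + ["kansas"] * 2 + ["ks"]
sizes = font_sizes(sample)
print(sizes)
```

Linear scaling is the simplest choice; word cloud tools often use logarithmic or rank-based scaling so that a few very frequent words do not dwarf the rest.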

#### Panel (c): Rule Discovery

* **Content:** A structured list demonstrating the extraction of factual relationships from a text about "Michael White."

* **First Line:** `FALSE : almaMater (Michael White, UT Austin)`

* **Subsequent Lines (each prefixed with a left arrow `←`):**

* `← employer (Michael White, UT Austin)`

* `← occupation (Michael White, UT Austin)`

* `← almaMater (Michael White, Abilene Christian Univ.), almaMater (Michael White, Yale Divinity School)`

* **Interpretation:** The structure suggests a rule or model has identified that the claim "Michael White's alma mater is UT Austin" is FALSE, and has discovered alternative or conflicting relations: his employer is UT Austin, his occupation is associated with UT Austin, and his actual alma maters are Abilene Christian University and Yale Divinity School.
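
The reading above can be made concrete with a toy knowledge base of triples. This is a sketch of that interpretation only, not the rule-mining algorithm of Ahmadi et al. (2019): the function `explain_claim` and its heuristic (a claim is marked FALSE when the knowledge base holds other values for the same predicate) are assumptions introduced for illustration.

```python
# Triples transcribed from the panel: (predicate, subject, object).
KB = {
    ("employer", "Michael White", "UT Austin"),
    ("occupation", "Michael White", "UT Austin"),
    ("almaMater", "Michael White", "Abilene Christian Univ."),
    ("almaMater", "Michael White", "Yale Divinity School"),
}


def explain_claim(claim, kb):
    """Return a verdict for the claim plus the triples that explain it."""
    pred, subj, obj = claim
    if claim in kb:
        return {"verdict": "TRUE", "evidence": [claim]}
    # Triples linking the same subject and object through a different predicate.
    other_relations = sorted(
        t for t in kb if t[1] == subj and t[2] == obj and t[0] != pred
    )
    # Triples giving the same predicate a different object.
    alternatives = sorted(
        t for t in kb if t[0] == pred and t[1] == subj and t[2] != obj
    )
    if alternatives:
        return {"verdict": "FALSE", "evidence": other_relations + alternatives}
    return {"verdict": "UNKNOWN", "evidence": other_relations}


result = explain_claim(("almaMater", "Michael White", "UT Austin"), KB)
print(result["verdict"])
for triple in result["evidence"]:
    print("  <-", triple)
```

The evidence list reproduces the body of the rule shown in the panel: the employer and occupation triples explain why UT Austin is associated with the person, and the two `almaMater` triples supply the conflicting values.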

#### Panel (d): Text Summarization

* **Content:** A structured text block summarizing and analyzing a claim about U.S. Senate candidate Marco Rubio and mortgage modifications.

* **Label:** `Label: Half-true`

* **Claim:** `Claim: Of the more than 1.3 million temporary mortgage modifications, over half have now defaulted.`

* **Justification (Just):** `Just: In the final full week of the U.S. Senate race, how did Rubio fare on his temporary work-outs, over half have now defaulted," referring to a temporary mortgage modification program.`

* **Explanation - Extractive (Explain-Extr):** `Explain-Extr: Over 1.3 million temporary work-outs, over half have now defaulted. Rubio: "The temporary work-outs said that more than half of those 1.3 million had defaulted."`

* **Explanation - Multi-Task (Explain-MT):** `Explain-MT: Rubio also said that more than half of those 1.3 million had "d... said. Of those permanent modifications, the majority survived while almost 29%... that is slightly more than half.` (Note: the ellipses `...` mark text that is cut off in the image.)
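
An extractive explanation selects existing sentences instead of writing new ones. The sketch below shows the idea with a simple word-overlap score. It is not the model of Atanasova et al. (2020a), which is learned. The function names, the scoring rule, and the candidate sentences (simplified from fragments visible in the panel) are assumptions.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"\w+", text.lower()))


def extract_explanation(claim, sentences, k=2):
    """Pick the k sentences sharing the largest fraction of words with the claim."""
    claim_words = tokenize(claim)

    def score(sentence):
        words = tokenize(sentence)
        return len(words & claim_words) / len(words) if words else 0.0

    ranked = sorted(
        range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True
    )
    # Return the selected sentences in their original document order.
    return [sentences[i] for i in sorted(ranked[:k])]


claim = (
    "Of the more than 1.3 million temporary mortgage modifications, "
    "over half have now defaulted."
)
# Simplified candidate sentences, for illustration only.
sentences = [
    "In the final full week of the U.S. Senate race, how did Rubio fare?",
    "Rubio said that more than half of those 1.3 million had defaulted.",
    "Of those permanent modifications, the majority survived.",
]
explanation = extract_explanation(claim, sentences, k=2)
print(explanation)
```

Word overlap is a crude relevance signal, but it makes the extractive constraint visible: every sentence in the output appears verbatim in the input.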

### Key Observations

1. **Methodological Diversity:** The four panels cover three families of NLP approaches: attention visualization (a, b), symbolic rule discovery (c), and summarization-based explanation (d).

2. **Focus on Veracity:** Panels (a), (c), and (d) explicitly deal with assessing the truthfulness or accuracy of claims (`[False]`, `FALSE`, `Half-true`).

3. **Data Representation:** Information is presented as highlighted text, a word cloud, a logical rule list, and a structured summary, respectively.

4. **Citations:** Each panel is attributed to a specific research paper (Popat et al., 2018; Lu and Li, 2020; Ahmadi et al., 2019; Atanasova et al., 2020a).

### Interpretation

This composite figure serves as a visual taxonomy of techniques used in computational fact-checking and text analysis. It moves from low-level model interpretability (showing which words an attention mechanism focuses on in a false claim in **a**) to corpus-level patterns (differentiating lexical choices in fake vs. true news in **b**). It then demonstrates a symbolic approach that extracts and verifies relational facts to contradict a claim (**c**), and finally shows a system that generates structured, human-readable explanations for a claim's veracity label (**d**).

The progression suggests a pipeline or a set of complementary tools: first, identifying suspicious language patterns; second, understanding broader contextual cues; third, performing precise fact verification against a knowledge base; and fourth, synthesizing the findings into a justified verdict. The inclusion of specific, real-world political claims (about Carly Fiorina's plan and Marco Rubio's statements) grounds these technical methods in a practical application domain: political discourse analysis and automated fact-checking. The truncation in panels (a) and (d) indicates these are excerpts from larger outputs or interfaces.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Text Analysis Examples: Attention Mechanisms, Rule Discovery, and Summarization

### Overview

The image presents four annotated examples of text analysis techniques applied to news articles and claims. Each example demonstrates a different method: attention mechanisms, rule discovery, and text summarization, with visualizations of key textual elements and relationships.

### Components/Axes

#### (a) Attention Mechanism (Popat et al., 2018)

- **Text Source**: nytimes.com article titled "[False] Barbara Boxer: 'Fiorina's plan least of glimmer of truth while ignorin..."

- **Highlighted Phrases**:

- "least of glimmer of truth" (blue)

- "including this one in california democra" (blue)

- "medicare but we found there was sk" (blue)

- **Key Text**:

- "she has said doesn’t provide much proof"

#### (b) Attention Mechanism & User Data (Lu and Li, 2020)

- **Labels**:

- **Fake News**: "kansas city breaking center" (purple)

- **True News**: "ksdknews confirmed record irrelevant criminal ferguson" (yellow)

- **Key Text**:

- "confirmed record irrelevant criminal ferguson"

#### (c) Rule Discovery (Ahmadi et al., 2019)

- **Knowledge Graph**:

- **Nodes**:

- `FALSE`

- `almaMater(Michael White, UT Austin)`

- `employer(Michael White, UT Austin)`

- `occupation(Michael White, UT Austin)`

- `almaMater(Michael White, Abilene Christian Univ.)`

- `almaMater(Michael White, Yale Divinity School)`

- **Edges**:

- `FALSE → almaMater(Michael White, UT Austin)`

- `FALSE → employer(Michael White, UT Austin)`

- `FALSE → occupation(Michael White, UT Austin)`

- `FALSE → almaMater(Michael White, Abilene Christian Univ.)`

- `FALSE → almaMater(Michael White, Yale Divinity School)`

#### (d) Text Summarization (Atanasova et al., 2020a)

- **Claim**:

- **Label**: "Half-true Claim"

- **Justification**:

- "In the final full week of the U.S. Senate race, how did Rubio fare on temporary work-outs, over half have now defaulted," referring to temporary work-outs.

- **Explanations**:

- **Explain-Extr**: "Over 1.3 million temporary work-outs, over half have now defaulted."

- **Explain-MT**: "Rubio also said that more than half of those 1.3 million had defaulted."

- **Discrepancy**:

- "The temp said that more than half of those 1.3 million had defaulted."

- "Of those permanent modifications, the majority survived while almost 29% defaulted."

### Detailed Analysis

#### (a) Attention Mechanism

- Highlights phrases suggesting skepticism about Fiorina’s plan, including references to California Democrats and Medicare. The incomplete text ("sk") may indicate truncation or redaction.

#### (b) Attention Mechanism & User Data

- Contrasts fake and true news labels with color-coded keywords. "City breaking center" (purple) and "confirmed record irrelevant criminal ferguson" (yellow) reflect user focus on specific entities.

#### (c) Rule Discovery

- Reveals relationships between entities (e.g., `Michael White` linked to two alma maters, an employer, and an occupation). The structure implies a focus on provenance and credibility.

#### (d) Text Summarization

- Demonstrates claim verification with conflicting data:

- **Claim**: Over half of 1.3M temporary work-outs defaulted.

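- **Evidence**: The truncated explanation says the majority of permanent modifications survived and mentions both "almost 29%" and "slightly more than half"; the cut-off text does not make clear which quantity each figure describes.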

### Key Observations

1. **Attention Mechanisms** prioritize phrases indicating skepticism or factual gaps.

2. **Rule Discovery** maps entities to assess credibility (e.g., `Michael White`’s affiliations).

3. **Text Summarization** highlights discrepancies between claims and evidence (e.g., 29% default vs. "more than half").

### Interpretation

- **Provenance and Credibility**: The examples emphasize verifying sources (e.g., `almaMater` nodes) and contextualizing claims (e.g., Rubio’s statement vs. data).

- **Bias in Attention**: The fake news cluster features attention-grabbing terms such as "breaking," while the true news cluster emphasizes confirmation ("confirmed," "record").

- **Ambiguity in Claims**: The summarization example underscores the need for precise language in fact-checking (e.g., "slightly more than half" vs. "more than half").

This analysis reveals how NLP techniques dissect textual data to uncover biases, relationships, and factual accuracy, critical for investigative journalism and automated fact-checking systems.
