## Text Analysis Examples: Attention Mechanisms, Rule Discovery, and Summarization

### Overview

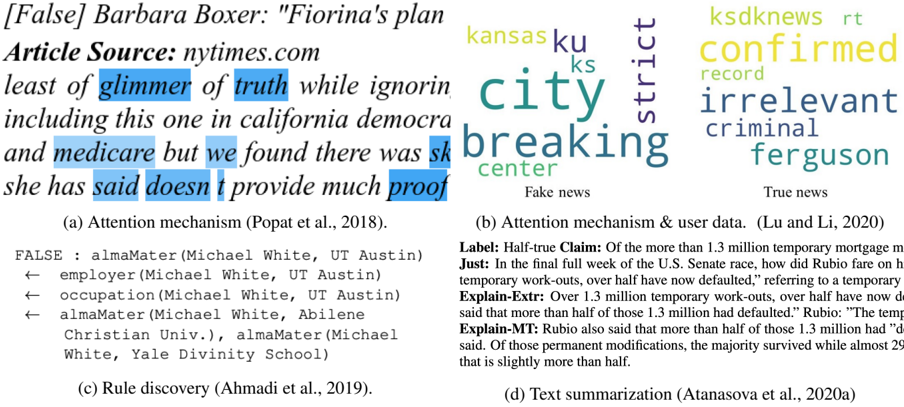

The image presents four annotated examples, (a) through (d), of text analysis techniques applied to news articles and claims. Together they cover three families of methods: attention mechanisms (two examples, one of which also uses user data), rule discovery, and text summarization. Each panel visualizes the key textual elements and relationships the method relies on.

### Components

#### (a) Attention Mechanism (Popat et al., 2018)

- **Text Source**: nytimes.com article titled "[False] Barbara Boxer: 'Fiorina's plan least of glimmer of truth while ignorin..."

- **Highlighted Phrases**:

  - "least of glimmer of truth" (blue)

  - "including this one in california democra" (blue)

  - "medicare but we found there was sk" (blue)

- **Key Text**:

  - "she has said doesn’t provide much proof"

#### (b) Attention Mechanism & User Data (Lu and Li, 2020)

- **Labels**:

  - **Fake News**: "kansas city breaking center" (purple)

  - **True News**: "ksdknews confirmed record irrelevant criminal ferguson" (yellow)

- **Key Text**:

  - "confirmed record irrelevant criminal ferguson"

#### (c) Rule Discovery (Ahmadi et al., 2019)

- **Knowledge Graph**:

  - **Nodes**:

    - `FALSE`

    - `almaMater(Michael White, UT Austin)`

    - `employer(Michael White, UT Austin)`

    - `occupation(Michael White, UT Austin)`

    - `almaMater(Michael White, Abilene Christian Univ.)`

    - `almaMater(Michael White, Yale Divinity School)`

  - **Edges**:

    - `FALSE → almaMater(Michael White, UT Austin)`

    - `FALSE → employer(Michael White, UT Austin)`

    - `FALSE → occupation(Michael White, UT Austin)`

    - `FALSE → almaMater(Michael White, Abilene Christian Univ.)`

    - `FALSE → almaMater(Michael White, Yale Divinity School)`

#### (d) Text Summarization (Atanasova et al., 2020a)

- **Claim**:

  - **Label**: "Half-true Claim"

- **Justification**:

  - "In the final full week of the U.S. Senate race, how did Rubio fare on temporary work-outs, over half have now defaulted," referring to temporary work-outs.

- **Explanations**:

  - **Explain-Extr**: "Over 1.3 million temporary work-outs, over half have now defaulted."

  - **Explain-MT**: "Rubio also said that more than half of those 1.3 million had defaulted."

- **Discrepancy**:

  - "The temp said that more than half of those 1.3 million had defaulted."

  - "Of those permanent modifications, the majority survived while almost 29% defaulted."

### Detailed Analysis

#### (a) Attention Mechanism

- Highlights phrases suggesting skepticism about Fiorina’s plan, including references to California Democrats and Medicare. The incomplete text ("sk") may indicate truncation or redaction.
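
As a rough illustration of how attention-based highlighting can pick out phrases like those in (a), the sketch below turns per-token scores into softmax weights and keeps the highest-weighted tokens. The scores are hypothetical values chosen for this example, not outputs of the model in Popat et al. (2018), and `top_attended` is an illustrative helper name.

```python
import math


def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def top_attended(tokens, scores, k=3):
    """Return the k (token, weight) pairs with the largest attention weight."""
    weights = softmax(scores)
    ranked = sorted(zip(tokens, weights), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


# Tokens from the first highlighted phrase in (a); scores are hypothetical.
tokens = ["least", "of", "glimmer", "of", "truth"]
scores = [2.0, 0.1, 1.5, 0.1, 1.8]

highlighted = top_attended(tokens, scores, k=3)
for token, weight in highlighted:
    print(f"{token}: {weight:.3f}")
```

A visualization like the one in the figure would then shade each token in proportion to its weight rather than printing it.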

#### (b) Attention Mechanism & User Data

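- Contrasts fake and true news labels with color-coded keywords. "kansas city breaking center" (purple) is labeled fake news, and "ksdknews confirmed record irrelevant criminal ferguson" (yellow) is labeled true news; the highlighted words show which terms and entities the model weights most under each label.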

#### (c) Rule Discovery

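- Reveals relationships between entities: `Michael White` appears in three `almaMater` facts (UT Austin, Abilene Christian Univ., Yale Divinity School), one `employer` fact, and one `occupation` fact, each connected to the `FALSE` node. The graph structure suggests that the method explains a verdict by pointing to related facts in the knowledge graph.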
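
To make the graph in (c) easier to inspect, the facts can be parsed into (predicate, subject, object) triples and grouped. This is a minimal sketch that only reorganizes the node labels listed above; it does not reproduce the rule-discovery procedure of Ahmadi et al. (2019), and `parse_fact` and `group_by_predicate` are hypothetical helper names.

```python
import re
from collections import defaultdict

# Node labels as listed for panel (c).
FACTS = [
    "almaMater(Michael White, UT Austin)",
    "employer(Michael White, UT Austin)",
    "occupation(Michael White, UT Austin)",
    "almaMater(Michael White, Abilene Christian Univ.)",
    "almaMater(Michael White, Yale Divinity School)",
]

# predicate(subject, object), where the subject contains no comma.
FACT_PATTERN = re.compile(r"^(\w+)\(([^,]+),\s*(.+)\)$")


def parse_fact(text):
    """Split 'predicate(subject, object)' into a 3-tuple."""
    match = FACT_PATTERN.match(text)
    if match is None:
        raise ValueError(f"not a fact: {text!r}")
    predicate, subject, obj = match.groups()
    return predicate, subject.strip(), obj.strip()


def group_by_predicate(facts):
    """Map (subject, predicate) to the list of objects asserted for it."""
    grouped = defaultdict(list)
    for fact in facts:
        predicate, subject, obj = parse_fact(fact)
        grouped[(subject, predicate)].append(obj)
    return dict(grouped)


grouped = group_by_predicate(FACTS)
for (subject, predicate), objects in grouped.items():
    print(f"{subject} / {predicate}: {objects}")
```

Grouping this way makes it plain that the panel lists three `almaMater` objects but only one `employer` and one `occupation` object for the same subject.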

#### (d) Text Summarization

- Demonstrates claim verification against evidence drawn from a different population:

  - **Claim**: Over half of 1.3M temporary work-outs defaulted.

  - **Evidence**: Of the permanent modifications, the majority survived, with almost 29% defaulting (well under half).
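
The numbers in (d) can be checked with simple arithmetic. The sketch below assumes the figures quoted in the panel (1.3 million temporary work-outs, "almost 29%" of permanent modifications defaulting) and treats 29% as 0.29; the function and constant names are illustrative only.

```python
TEMPORARY_WORKOUTS = 1_300_000   # "1.3 million temporary work-outs"
PERMANENT_DEFAULT_RATE = 0.29    # "almost 29% defaulted" (permanent modifications)


def is_more_than_half(rate):
    """True when a rate, expressed as a fraction, exceeds one half."""
    return rate > 0.5


# Number of defaults needed for "over half" of the temporary work-outs.
half_of_temporary = TEMPORARY_WORKOUTS // 2

print(f"'Over half' of temporary work-outs means more than {half_of_temporary:,} defaults")
print(f"Permanent default rate exceeds half: {is_more_than_half(PERMANENT_DEFAULT_RATE)}")
```

Because the 29% figure describes permanent modifications while the claim concerns temporary work-outs, the two numbers are not directly comparable, which is consistent with the "Half-true" label.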

### Key Observations

1. **Attention Mechanisms** prioritize phrases indicating skepticism or factual gaps.

2. **Rule Discovery** maps entities to assess credibility (e.g., `Michael White`’s affiliations).

3. **Text Summarization** highlights discrepancies between claims and evidence (e.g., 29% default vs. "more than half").

### Interpretation

- **Provenance and Credibility**: The examples emphasize checking facts about entities (e.g., the `almaMater` nodes) and contextualizing claims against evidence (e.g., Rubio’s statement vs. the default data).

- **Bias in Attention**: In example (b), the phrase labeled fake news includes the attention-grabbing term "breaking," while the phrase labeled true news includes confirmation language ("confirmed record") alongside "criminal" and "ferguson."

- **Ambiguity in Claims**: The summarization example underscores the need for precise language in fact-checking: "more than half" refers to temporary work-outs, whereas the "almost 29%" default rate refers to permanent modifications.

Taken together, the examples show how NLP techniques can surface salient phrases, entity relationships, and mismatches between claims and evidence, all of which matter for investigative journalism and automated fact-checking systems.