## Violin Plot Comparison: Token Count Distributions

### Overview

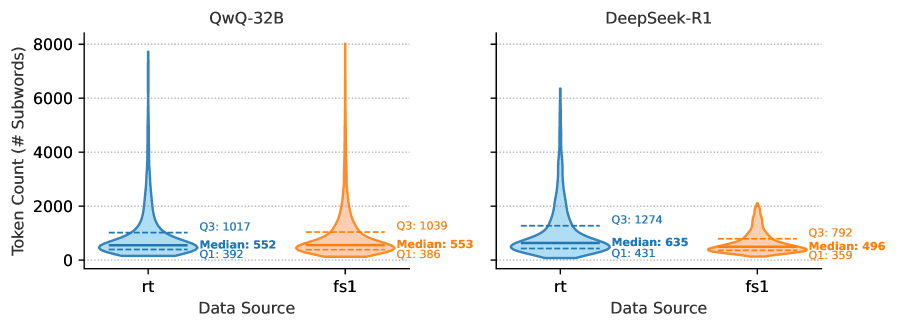

The image displays two side-by-side violin plots comparing the distribution of token counts (measured in number of subwords) for two different models, **QwQ-32B** (left) and **DeepSeek-R1** (right). Each model's plot compares two data sources labeled **rt** and **fs1**. The plots show the probability density of the data at different values, with embedded box plot elements indicating key statistics.
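As a minimal sketch of where such annotated statistics could come from, the snippet below computes Q1, the median, and Q3 from a list of token counts using Python's standard library. The `token_counts` values are made-up placeholders, not data from the figure, and the tool that produced the plot may use a different quantile interpolation rule.

```python
from statistics import quantiles

# Hypothetical token counts for one (model, data source) pair.
# These are illustrative placeholders, not values read from the figure.
token_counts = [300, 400, 500, 900, 2500]

# n=4 returns the three quartile cut points (Q1, median, Q3).
# method="inclusive" treats the list as the full population (an assumption).
q1, median, q3 = quantiles(token_counts, n=4, method="inclusive")

print(f"Q1={q1}, Median={median}, Q3={q3}")
```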

### Components/Axes

* **Chart Title (Top Center):** "QwQ-32B" (left plot), "DeepSeek-R1" (right plot).

* **Y-Axis (Vertical):** Label: "Token Count (# Subwords)". Scale ranges from 0 to 8000, with major tick marks at 0, 2000, 4000, 6000, and 8000.

* **X-Axis (Horizontal):** Label: "Data Source". Two categories are present for each model: "rt" and "fs1".

* **Legend/Statistical Annotations:** For each violin, three key statistics are annotated directly on the plot:

    * **QwQ-32B, rt (Blue):** Q3: 1017, Median: 552, Q1: 392.

    * **QwQ-32B, fs1 (Orange):** Q3: 1039, Median: 553, Q1: 386.

    * **DeepSeek-R1, rt (Blue):** Q3: 1274, Median: 635, Q1: 431.

    * **DeepSeek-R1, fs1 (Orange):** Q3: 792, Median: 496, Q1: 359.

* **Color Coding:** The "rt" data source is consistently represented by a blue violin. The "fs1" data source is consistently represented by an orange violin.
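The annotated quartiles are enough to derive each violin's interquartile range. The sketch below does that arithmetic; the quartile values are the ones annotated on the plot, while the dictionary layout and the `iqr_width` helper are illustrative names introduced here.

```python
# (Q1, median, Q3) as annotated on the plot for each model and data source.
QUARTILES = {
    ("QwQ-32B", "rt"): (392, 552, 1017),
    ("QwQ-32B", "fs1"): (386, 553, 1039),
    ("DeepSeek-R1", "rt"): (431, 635, 1274),
    ("DeepSeek-R1", "fs1"): (359, 496, 792),
}


def iqr_width(q1, q3):
    """Width of the interquartile range (hypothetical helper)."""
    return q3 - q1


iqr_widths = {key: iqr_width(q1, q3) for key, (q1, _, q3) in QUARTILES.items()}

for (model, source), width in iqr_widths.items():
    print(f"{model:12s} {source:4s} IQR width = {width}")
```

Under these values the widths come out to 625 and 653 subwords for QwQ-32B ("rt" and "fs1") and 843 and 433 for DeepSeek-R1.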

### Detailed Analysis

**QwQ-32B Plot (Left):**

* **Data Source "rt" (Blue):** The distribution is strongly right-skewed. The bulk of the data (the widest part of the violin) is concentrated between approximately 400 and 700 tokens, with peak density near the median of 552. The interquartile range (IQR) runs from 392 (Q1) to 1017 (Q3). The median sits much closer to Q1 than to Q3, which is consistent with a long tail extending to higher token counts.

* **Data Source "fs1" (Orange):** The distribution shape is very similar to "rt" for this model. It is also right-skewed with a dense region between ~400-700 tokens. The median (553) and IQR (Q1: 386, Q3: 1039) are nearly identical to the "rt" source, suggesting comparable token count characteristics between the two data sources for QwQ-32B.

**DeepSeek-R1 Plot (Right):**

* **Data Source "rt" (Blue):** This distribution is also right-skewed but appears more spread out than the QwQ-32B distributions. The dense region is broader, spanning roughly 500 to 900 tokens. The median, 635, is higher than either QwQ-32B median. The IQR is wider (Q1: 431, Q3: 1274; a width of 843 versus 625 for QwQ-32B "rt"), indicating greater variability in token counts, with a more pronounced tail towards higher values.

* **Data Source "fs1" (Orange):** This distribution is notably different from the "rt" source for the same model. It is more compact and less skewed. The dense region is concentrated between approximately 400 and 600 tokens. The median is lower at 496. The IQR is much narrower (Q1: 359, Q3: 792; a width of 433 versus 843 for "rt"), indicating that token counts from the "fs1" source are more consistent and generally lower than those from the "rt" source for DeepSeek-R1.
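To put the per-model comparison above on a common scale, the following sketch computes the relative change in the median when moving from "rt" to "fs1". The medians are the annotated values; `relative_shift` is an illustrative helper, and a median shift is only one of several reasonable ways to summarize the difference.

```python
# Annotated medians per model and data source.
MEDIANS = {
    "QwQ-32B": {"rt": 552, "fs1": 553},
    "DeepSeek-R1": {"rt": 635, "fs1": 496},
}


def relative_shift(rt_median, fs1_median):
    """Relative change of the fs1 median with respect to the rt median."""
    return (fs1_median - rt_median) / rt_median


shifts = {
    model: relative_shift(m["rt"], m["fs1"]) for model, m in MEDIANS.items()
}

for model, shift in shifts.items():
    print(f"{model:12s} median shift rt -> fs1: {shift:+.1%}")
```

With these inputs the QwQ-32B median moves by roughly +0.2%, while the DeepSeek-R1 median drops by roughly 21.9%.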

### Key Observations

1. **Model Comparison:** For the "rt" data source, DeepSeek-R1 shows a higher median token count (635 vs. 552) and greater variability (IQR width of 843 vs. 625) compared to QwQ-32B.

2. **Data Source Consistency:** QwQ-32B shows close agreement between the "rt" and "fs1" data sources, with nearly identical medians (552 vs. 553), similar quartiles, and similar distribution shapes.

3. **Data Source Divergence:** DeepSeek-R1 shows a marked divergence between data sources. The "rt" source produces higher and more variable token counts (median 635, IQR width 843), while the "fs1" source yields lower and more tightly clustered counts (median 496, IQR width 433).

4. **Distribution Shape:** All four distributions are right-skewed, meaning there is a concentration of data points at lower token counts with a tail of less frequent, higher token count examples. This is typical for length distributions in language data.
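The right skew can also be checked from the annotated quartiles alone. The sketch below uses Bowley's quartile skewness, (Q3 + Q1 - 2·Median) / (Q3 - Q1), which is positive when the median sits closer to Q1 than to Q3. This measure is a choice made here for illustration; it is not something the figure reports, and it ignores the tails beyond the quartiles.

```python
# (Q1, median, Q3) as annotated on the plot for each model and data source.
QUARTILES = {
    ("QwQ-32B", "rt"): (392, 552, 1017),
    ("QwQ-32B", "fs1"): (386, 553, 1039),
    ("DeepSeek-R1", "rt"): (431, 635, 1274),
    ("DeepSeek-R1", "fs1"): (359, 496, 792),
}


def bowley_skewness(q1, median, q3):
    """Quartile-based skewness in [-1, 1]; positive means right-skewed."""
    return (q3 + q1 - 2 * median) / (q3 - q1)


skewness = {key: bowley_skewness(*qs) for key, qs in QUARTILES.items()}

for (model, source), value in skewness.items():
    print(f"{model:12s} {source:4s} Bowley skewness = {value:.2f}")
```

All four values are positive under this measure, and DeepSeek-R1 "fs1" has the smallest one, which matches the description of it as the least skewed distribution.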

### Interpretation

The data suggest that the two models respond differently to the "rt" and "fs1" data sources.

* **QwQ-32B's** near-identical token count distributions across sources suggest that the length of its outputs is largely insensitive to the data source ("rt" vs. "fs1"). This could indicate robustness or a specific design that normalizes input characteristics.

* **DeepSeek-R1's** divergent token counts are the more striking finding. The "rt" source appears to elicit longer, more variable responses from the model. In contrast, the "fs1" source is associated with shorter, more uniform outputs. This could mean:

    * The "fs1" data source contains prompts that are inherently simpler or more specific, leading to concise answers.

    * The "rt" data source contains more open-ended, complex, or verbose prompts.

    * The model itself has different behavior modes triggered by the characteristics of each data source.

* The right skew in all plots indicates that while most interactions result in moderate-length outputs (a few hundred subwords), there is a non-trivial subset of interactions that generate very long sequences (extending toward the 8000-subword upper end of the axis), which could be important for understanding computational cost and performance outliers.

In summary, this visualization highlights that token count depends not only on the model (QwQ-32B vs. DeepSeek-R1) but also on the data source ("rt" vs. "fs1"), with the data source effect being much more pronounced for DeepSeek-R1.