
## Diagram: Comparison of Three AI Reasoning Approaches for a Medical Query

### Overview

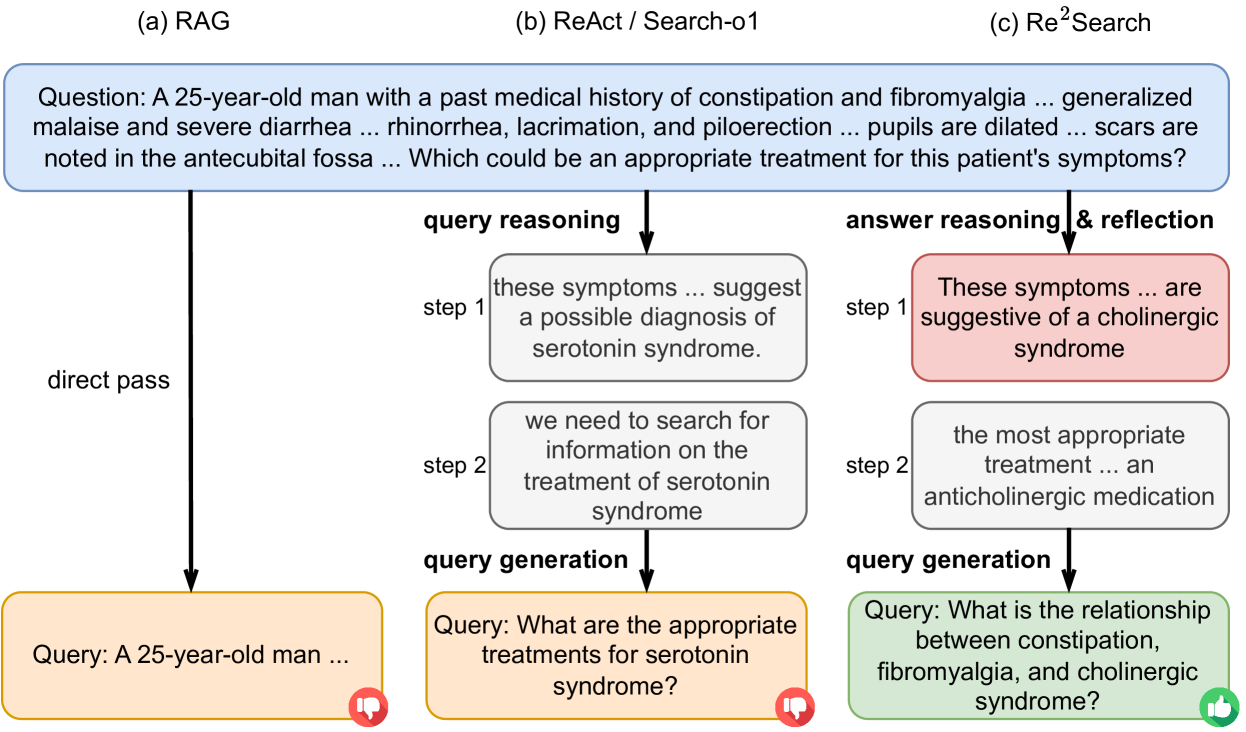

The image is a flow diagram comparing three different methods for processing a complex medical question: (a) RAG, (b) ReAct / Search-o1, and (c) Re²Search. It illustrates the step-by-step reasoning and query generation process for each approach, culminating in a final query and an effectiveness indicator (thumbs-up/down).

### Components/Axes

The diagram is organized into three vertical columns, each representing a distinct method:

1. **Column (a) RAG**: Labeled "(a) RAG" at the top.

2. **Column (b) ReAct / Search-o1**: Labeled "(b) ReAct / Search-o1" at the top.

3. **Column (c) Re²Search**: Labeled "(c) Re²Search" at the top.

A common starting point is a blue box at the top spanning all columns, containing the initial medical question.

Each column contains a sequence of process boxes connected by arrows, indicating the flow of reasoning. The final output for each method is a colored query box at the bottom, accompanied by a circular icon (red thumbs-down or green thumbs-up).

### Detailed Analysis

**1. Initial Question (Top Blue Box):**

* **Text:** "Question: A 25-year-old man with a past medical history of constipation and fibromyalgia ... generalized malaise and severe diarrhea ... rhinorrhea, lacrimation, and piloerection ... pupils are dilated ... scars are noted in the antecubital fossa ... Which could be an appropriate treatment for this patient's symptoms?"

* **Content:** This presents a clinical vignette with a past medical history (constipation, fibromyalgia), presenting symptoms and signs (malaise, diarrhea, rhinorrhea, lacrimation, piloerection, dilated pupils), and a physical finding (scars in the antecubital fossa). It asks for an appropriate treatment.

**2. Column (a) RAG Process:**

* **Flow:** A single arrow labeled "direct pass" leads from the question box directly to the final query box.

* **Final Query Box (Orange):**

    * **Text:** "Query: A 25-year-old man ..."

    * **Icon:** Red circle with a white thumbs-down symbol (👎) in the bottom-right corner.

* **Analysis:** This method performs no intermediate reasoning. It directly passes the original, unprocessed question as the search query.

**3. Column (b) ReAct / Search-o1 Process:**

* **Flow:** The process involves two reasoning steps labeled "query reasoning," followed by "query generation."

* **Step 1 (Grey Box):**

    * **Text:** "these symptoms ... suggest a possible diagnosis of serotonin syndrome."

* **Step 2 (Grey Box):**

    * **Text:** "we need to search for information on the treatment of serotonin syndrome"

* **Final Query Box (Yellow):**

    * **Text:** "Query: What are the appropriate treatments for serotonin syndrome?"

    * **Icon:** Red circle with a white thumbs-down symbol (👎) in the bottom-right corner.

* **Analysis:** This method performs explicit, multi-step reasoning. It first hypothesizes a diagnosis (serotonin syndrome) based on the symptoms and then formulates a search query focused on treating that specific condition.

**4. Column (c) Re²Search Process:**

* **Flow:** The process involves two reasoning steps labeled "answer reasoning & reflection," followed by "query generation."

* **Step 1 (Pink Box):**

    * **Text:** "These symptoms ... are suggestive of a cholinergic syndrome"

* **Step 2 (Grey Box):**

    * **Text:** "the most appropriate treatment ... an anticholinergic medication"

* **Final Query Box (Green):**

    * **Text:** "Query: What is the relationship between constipation, fibromyalgia, and cholinergic syndrome?"

    * **Icon:** Green circle with a white thumbs-up symbol (👍) in the bottom-right corner.

* **Analysis:** This method also performs multi-step reasoning but with a different focus. It first identifies a different syndrome (cholinergic syndrome) and then reflects on the treatment. Crucially, its final query is not about the treatment directly, but about the *relationship* between the patient's pre-existing conditions (constipation, fibromyalgia) and the hypothesized syndrome, indicating a deeper, more investigative approach.
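
The three flows described above can be sketched as three query-generation functions. This is a minimal illustration, not the implementation behind the diagram: the `LLM` callable, the function names, and all prompt wording are hypothetical, and the reflection prompt in particular is an assumption based only on the "answer reasoning & reflection" label.

```python
from typing import Callable

# Hypothetical interface: any function that maps a prompt string to model text.
LLM = Callable[[str], str]


def rag_query(question: str) -> str:
    """(a) RAG: direct pass. The question itself is used as the search query."""
    return question


def react_query(question: str, llm: LLM) -> str:
    """(b) ReAct / Search-o1: reason about what to search for, then write the query."""
    reasoning = llm(f"What information is needed to answer this?\n{question}")
    return llm(
        f"Question: {question}\nReasoning: {reasoning}\n"
        "Write one search query for the missing information."
    )


def re2search_query(question: str, llm: LLM) -> str:
    """(c) Re²Search: draft an answer, reflect on it, then write the query."""
    draft = llm(f"Answer step by step:\n{question}")
    # Assumed reflection prompt: ask which claim in the draft lacks support.
    return llm(
        f"Question: {question}\nDraft answer: {draft}\n"
        "Reflect on the draft, pick the claim that most needs verification, "
        "and write one search query to check it."
    )
```

The structural difference is what the second model call sees: in (b) it sees reasoning about what to search for, while in (c) it sees a drafted answer whose weakest claim becomes the search target.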

### Key Observations

1. **Divergent Diagnostic Hypotheses:** The core difference between methods (b) and (c) is the initial diagnosis: "serotonin syndrome" vs. "cholinergic syndrome." This leads to completely different reasoning paths.

2. **Query Sophistication:** The final queries vary significantly in complexity and focus:

    * (a) is the raw, broad question.

    * (b) is a focused, treatment-oriented question based on its diagnosis.

    * (c) is a relational, mechanistic question that seeks to understand underlying connections.

3. **Effectiveness Indication:** The diagram uses thumbs-down (👎) icons for methods (a) and (b), and a thumbs-up (👍) for method (c). This visually asserts that the Re²Search approach is considered more effective or appropriate for this type of complex medical reasoning task.

4. **Process Complexity:** Both (b) and (c) involve explicit reasoning steps, unlike the direct pass of (a). However, (c)'s reasoning includes a "reflection" component implied by its label and the nature of its final query.

### Interpretation

This diagram is a conceptual comparison of AI agent architectures for complex question answering, specifically in a high-stakes domain like medicine. It argues that a simple retrieval-augmented generation (RAG) approach (a) is insufficient, because it passes the original question through as the search query without any intermediate reasoning. A reasoning-acting (ReAct) approach (b) improves on this by forming a diagnostic hypothesis before searching, but its query is only as good as that hypothesis: if the hypothesis is wrong, the search is misdirected (here, methods (b) and (c) diverge on serotonin vs. cholinergic syndrome).

The proposed **Re²Search** method (c) is presented as superior. Its key innovation appears to be integrating **answer reasoning with reflection**. Instead of stopping at a treatment answer, it reflects on the relationship between the patient's history and the acute symptoms. This leads to a query that probes the *etiology* of the condition rather than just its management. The green thumbs-up marks this relational query as the one more likely to retrieve accurate and useful information for the vignette. In short, the diagram favors systems that draft and scrutinize an answer before searching over systems that search on the raw question or on a first hypothesis alone.