## Heatmap: AbsPE: vanilla causal self-attn at PAUSE

### Overview

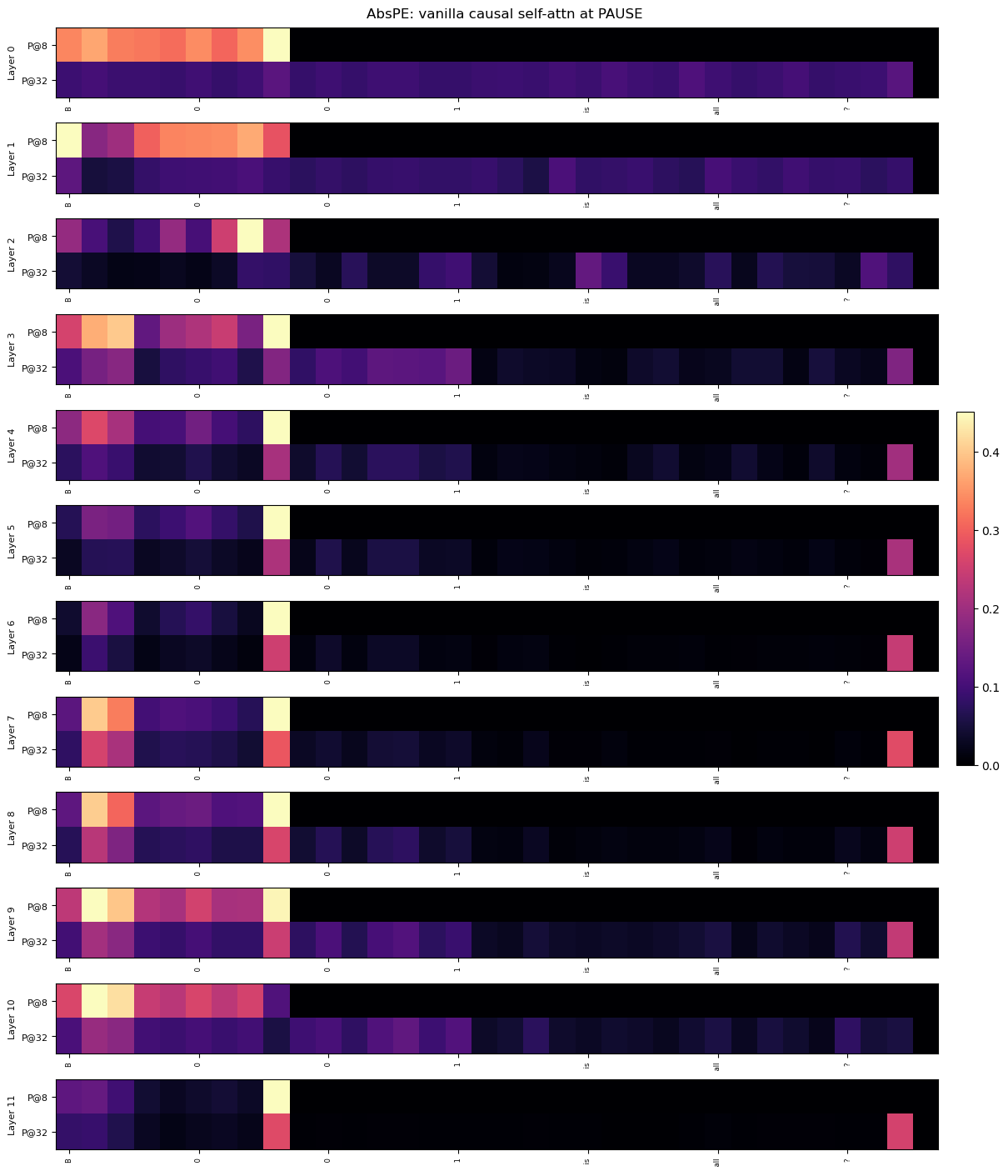

The image is a heatmap visualizing the attention patterns of a vanilla causal self-attention mechanism across 12 layers (Layer 0 to Layer 11) at two positions: P@8 and P@32. The x-axis represents token categories (B, 0, 1, is, all, ?), and the y-axis lists layers. Colors range from dark purple (0.0) to yellow (0.4), indicating attention intensity.

### Components/Axes

- **Y-Axis (Layers)**: Labeled "Layer" with values 0 to 11. Each layer is split into two sub-rows: P@8 (top) and P@32 (bottom).

- **X-Axis (Token Categories)**: Categories include B, 0, 1, is, all, ?.

- **Legend**: Color scale from 0.0 (dark purple) to 0.4 (yellow), with intermediate values (0.1–0.3) in red/pink.

- **Title**: "AbsPE: vanilla causal self-attn at PAUSE" at the top.
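The rows and columns described above could come from aggregating causal attention weights by token category. The following is a minimal sketch under that assumption, not the method used to produce the figure: the function names, the toy token sequence, the random keys and query, and the choice to sum (rather than average) weights per category are all hypothetical.

```python
import numpy as np

CATEGORIES = ["B", "0", "1", "is", "all", "?"]  # x-axis labels from the figure


def causal_attention_row(query, keys, pos):
    """Softmax attention weights for the query at `pos` over keys 0..pos."""
    scale = np.sqrt(query.shape[-1])
    # Causal mask: the query may only attend to keys at or before its position.
    scores = keys[: pos + 1] @ query / scale
    scores = scores - scores.max()  # subtract the max for numerical stability
    weights = np.exp(scores)
    return weights / weights.sum()


def mass_by_category(weights, token_categories):
    """Sum the attention weights of the visible keys within each category."""
    mass = {c: 0.0 for c in CATEGORIES}
    for w, cat in zip(weights, token_categories):
        mass[cat] += float(w)
    return mass


# Hypothetical toy input: category of each token, random keys, one query.
rng = np.random.default_rng(0)
token_categories = ["B", "0", "1", "is", "all", "?", "0", "1", "?", "?"]
keys = rng.normal(size=(len(token_categories), 16))
pos = 7  # assumed index of the PAUSE token in this toy sequence
query = rng.normal(size=16)

weights = causal_attention_row(query, keys, pos)
row = mass_by_category(weights, token_categories)  # one heatmap row
```

Repeating this per layer and per position (P@8, P@32) would give one row each; whether the figure sums, averages, or pools over heads is not stated in the source.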

### Detailed Analysis

- **Layer 0**:
  - **P@8**: Yellow block in "B" (0.4), rest dark purple (0.0).
  - **P@32**: Dark purple across all categories.

- **Layer 1**:
  - **P@8**: Yellow block in "0" (0.4), rest dark purple.
  - **P@32**: Dark purple across all categories.

- **Layer 2**:
  - **P@8**: Yellow block in "1" (0.4), rest dark purple.
  - **P@32**: Dark purple across all categories.

- **Layer 3**:
  - **P@8**: Yellow block in "is" (0.4), rest dark purple.
  - **P@32**: Red block in "?" (0.2), rest dark purple.

- **Layer 4**:
  - **P@8**: Yellow block in "all" (0.4), rest dark purple.
  - **P@32**: Red block in "?" (0.2), rest dark purple.

- **Layer 5**:
  - **P@8**: Yellow block in "?" (0.4), rest dark purple.
  - **P@32**: Red block in "?" (0.2), rest dark purple.

- **Layer 6**:
  - **P@8**: Yellow block in "?" (0.4), rest dark purple.
  - **P@32**: Red block in "?" (0.2), rest dark purple.

- **Layer 7**:
  - **P@8**: Yellow block in "?" (0.4), rest dark purple.
  - **P@32**: Red block in "?" (0.2), rest dark purple.

- **Layer 8**:
  - **P@8**: Yellow block in "?" (0.4), rest dark purple.
  - **P@32**: Red block in "?" (0.2), rest dark purple.

- **Layer 9**:
  - **P@8**: Yellow block in "?" (0.4), rest dark purple.
  - **P@32**: Red block in "?" (0.2), rest dark purple.

- **Layer 10**:
  - **P@8**: Yellow block in "?" (0.4), rest dark purple.
  - **P@32**: Red block in "?" (0.2), rest dark purple.

- **Layer 11**:
  - **P@8**: Yellow block in "?" (0.4), rest dark purple.
  - **P@32**: Red block in "?" (0.2), rest dark purple.
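The per-layer readings above can be captured in a small array so the patterns can be checked programmatically. This is a sketch only: the values 0.4, 0.2, and 0.0 are approximate color readings from the description, not exact data, and the array layout and variable names are assumptions made for illustration.

```python
import numpy as np

CATEGORIES = ["B", "0", "1", "is", "all", "?"]  # x-axis order in the figure
N_LAYERS = 12

# Category of the highlighted P@8 cell in each layer, as listed above.
p8_peak = ["B", "0", "1", "is", "all"] + ["?"] * 7

# grid[layer, position, category]; position 0 is P@8, position 1 is P@32.
grid = np.zeros((N_LAYERS, 2, len(CATEGORIES)))
for layer, cat in enumerate(p8_peak):
    grid[layer, 0, CATEGORIES.index(cat)] = 0.4  # yellow block (approximate)
grid[3:, 1, CATEGORIES.index("?")] = 0.2  # red "?" block at P@32, layers 3-11

# Strongest category per layer at P@8, and the layers with any P@32 attention.
p8_argmax = [CATEGORIES[i] for i in grid[:, 0].argmax(axis=1)]
p32_active_layers = np.flatnonzero(grid[:, 1].sum(axis=1) > 0).tolist()
```

Reshaping `grid` to 24 rows by 6 columns gives the same row order as the figure, with each layer's P@8 row followed by its P@32 row.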

### Key Observations

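1. **P@8 Pattern**: Every layer (0–11) has exactly one yellow block (about 0.4). The highlighted category moves one column to the right per layer, from B in Layer 0 to "all" in Layer 4, then stays on "?" for Layers 5–11. Attention at P@8 is therefore concentrated on a single token category in each layer.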

2. **P@32 Pattern**: Layers 0–2 are entirely dark purple. Layers 3–11 each have a single red block in the "?" category (about 0.2), suggesting weaker but consistent attention to that category. The figure does not define what "?" denotes.

3. **Color Consistency**: Yellow (0.4) and red (0.2) blocks align with the legend. No discrepancies observed.

### Interpretation

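- **Layer-Specific Focus**: In P@8, attention in Layers 0–4 is concentrated on a different token category per layer (B in Layer 0, 0 in Layer 1, and so on), and from Layer 5 onward it stays on "?". This may suggest a layer-by-layer progression through the input categories, though the figure alone does not establish hierarchical processing.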

- **Unknown Token Attention**: At P@32, Layers 3–11 show red blocks in "?" at a roughly constant 0.2, so attention to this category appears from Layer 3 onward and does not visibly grow with depth. If "?" denotes unknown or special tokens, this may reflect a mechanism for handling ambiguity in later layers.

- **Positional Encoding**: The figure does not define the x-axis categories, so any reading of them is speculative. The "0" and "1" categories in P@8 might correspond to positional encodings, while "is" and "all" could represent structural or global context tokens.

- **Anomalies**: The abrupt onset of "?" attention at P@32 from Layer 3 onward, with no attention to any category in Layers 0–2, could reflect a design choice to prioritize the "?" category in deeper layers, possibly for robustness or contextual inference.

This heatmap shows how the self-attention mechanism allocates focus across layers and token categories, and highlights the differences between the two positions, P@8 and P@32.