TECHNICAL ASSET FINGERPRINT

7e88e3c620236a061fe0b310

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

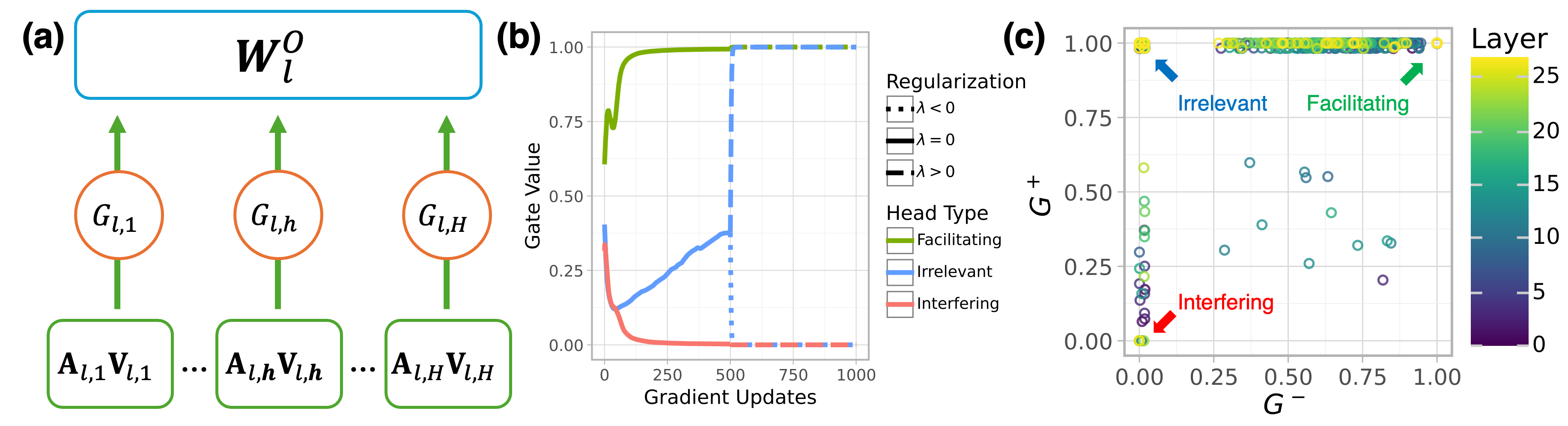

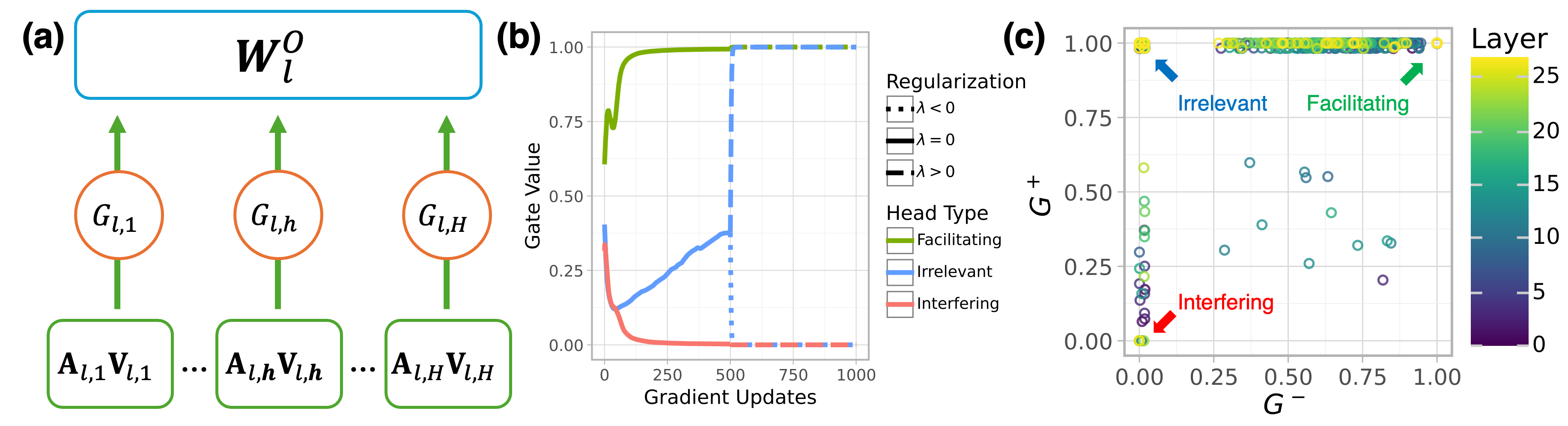

## Multi-Panel Figure: Regularization and Head Type Analysis

### Overview

The image presents a multi-panel figure (a, b, c) analyzing the effects of regularization and head type on a model. Panel (a) is a diagram illustrating the model architecture. Panel (b) is a line graph showing the gate value over gradient updates for different head types. Panel (c) is a scatter plot showing the relationship between G+ and G- values, colored by layer.

### Components/Axes

**Panel (a): Model Architecture Diagram**

* **Nodes:**

* Rectangular nodes labeled "A<sub>l,1</sub>V<sub>l,1</sub>", "A<sub>l,h</sub>V<sub>l,h</sub>", ..., "A<sub>l,H</sub>V<sub>l,H</sub>"

* Circular nodes labeled "G<sub>l,1</sub>", "G<sub>l,h</sub>", "G<sub>l,H</sub>"

* A rounded rectangular node at the top labeled "W<sub>l</sub><sup>o</sup>"

* **Connections:** Green arrows indicate the flow of information from the A<sub>l,x</sub>V<sub>l,x</sub> nodes to the G<sub>l,x</sub> nodes, and from the G<sub>l,x</sub> nodes to the W<sub>l</sub><sup>o</sup> node.

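The gating shown in panel (a) can be illustrated with a minimal plain-Python sketch. It rests on assumptions the figure does not confirm: each gate G<sub>l,h</sub> is a scalar in [0, 1] that multiplies its head's output A<sub>l,h</sub>V<sub>l,h</sub>, and the gated head outputs are concatenated before the output projection W<sub>l</sub><sup>o</sup>, as in standard multi-head attention. The function and argument names are hypothetical. In the usage line, closing the second gate removes that head's contribution entirely.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product a @ b (rows of a times columns of b)."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def gated_attention_output(
    head_outputs: List[Matrix],  # H matrices A_{l,h} V_{l,h}, each seq_len x d_head
    gates: List[float],          # H scalar gates G_{l,h}, assumed to lie in [0, 1]
    w_o: Matrix,                 # output projection W_l^o, (H * d_head) x d_model
) -> Matrix:
    """Hypothetical sketch: scale each head by its gate, concatenate, project."""
    if len(head_outputs) != len(gates):
        raise ValueError("expected exactly one gate per head")
    seq_len = len(head_outputs[0])
    # For every token position t, lay the gated head outputs side by side
    # (head 1 features first, then head 2, ...), matching the row order of w_o.
    concat = [
        [g * x for out, g in zip(head_outputs, gates) for x in out[t]]
        for t in range(seq_len)
    ]
    return matmul(concat, w_o)


# Two heads with d_head = 1 and a single token; the second gate is closed.
print(gated_attention_output([[[2.0]], [[3.0]]], [1.0, 0.0], [[1.0], [1.0]]))  # [[2.0]]
```
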
**Panel (b): Gate Value vs. Gradient Updates**

* **X-axis:** "Gradient Updates", ranging from 0 to 1000 in increments of 250.

* **Y-axis:** "Gate Value", ranging from 0.00 to 1.00 in increments of 0.25.

* **Legend (Regularization):** Located on the right side of the plot.

* Dashed line: "λ < 0"

* Solid line: "λ = 0"

* Solid line: "λ > 0"

* **Legend (Head Type):** Located below the Regularization legend.

* Green line: "Facilitating"

* Blue line: "Irrelevant"

* Red line: "Interfering"

**Panel (c): G+ vs. G- Scatter Plot**

* **X-axis:** "G-", ranging from 0.00 to 1.00 in increments of 0.25.

* **Y-axis:** "G+", ranging from 0.00 to 1.00 in increments of 0.25.

* **Color Bar (Layer):** Located on the right side of the plot, ranging from 0 (dark purple) to 25 (yellow).

* **Annotations:**

* "Irrelevant" (blue arrow pointing to the top-left cluster)

* "Facilitating" (green arrow pointing to the top-right cluster)

* "Interfering" (red arrow pointing to the bottom-left cluster)

### Detailed Analysis

**Panel (b): Gate Value vs. Gradient Updates**

* **Facilitating (Green):** The gate value starts around 0.75, rapidly increases to approximately 1.00 within the first 250 gradient updates, and remains at 1.00 for the rest of the updates.

* **Irrelevant (Blue):** The gate value starts around 0.35, dips to approximately 0.15 within the first 250 gradient updates, recovers gradually to approximately 0.35 by 500 gradient updates, and then jumps to 1.00 at 500 updates, where it remains for the rest of training.

* **Interfering (Red):** The gate value starts around 0.35, rapidly decreases to approximately 0.00 within the first 250 gradient updates, and remains at 0.00 for the rest of the updates.

* **Regularization (Dashed Blue):** The dashed blue line, representing λ < 0, jumps to 1.00 at 500 gradient updates.

**Panel (c): G+ vs. G- Scatter Plot**

* The scatter plot shows the relationship between G+ and G- values, with each point representing a head.

* The color of each point indicates the layer, with darker colors representing lower layers and lighter colors representing higher layers.

* **Irrelevant Heads:** Cluster in the top-left corner (G+ ≈ 1.00, G- ≈ 0.00). These points are mostly yellow, indicating higher layers.

* **Facilitating Heads:** Cluster in the top-right corner (G+ ≈ 1.00, G- ≈ 1.00). These points are mostly yellow, indicating higher layers.

* **Interfering Heads:** Cluster in the bottom-left corner (G+ ≈ 0.00, G- ≈ 0.00). These points are mostly dark purple, indicating lower layers.

* There are some scattered points in the middle of the plot, representing heads with intermediate G+ and G- values.

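The cluster positions described above suggest a simple way to label a head from its (G+, G-) coordinates. This is a hypothetical helper: the 0.5 threshold and the optional `margin` band, which flags points like the scattered ones in the middle of the plot as intermediate, are illustrative choices rather than values taken from the figure. The bottom-right corner has no annotated cluster, so the sketch returns "Unlabeled" there.

```python
def classify_head(g_plus: float, g_minus: float,
                  threshold: float = 0.5, margin: float = 0.0) -> str:
    """Label a head by the corner of the G+ vs. G- plot it falls in.

    Hypothetical helper: threshold and margin are illustrative, not from the figure.
    """
    # Points close to the threshold on either axis are treated as intermediate,
    # like the scattered points in the middle of panel (c).
    if abs(g_plus - threshold) < margin or abs(g_minus - threshold) < margin:
        return "Intermediate"
    high_plus = g_plus >= threshold
    high_minus = g_minus >= threshold
    if high_plus and high_minus:
        return "Facilitating"  # top-right cluster: G+ ≈ 1.00, G- ≈ 1.00
    if high_plus:
        return "Irrelevant"    # top-left cluster: G+ ≈ 1.00, G- ≈ 0.00
    if not high_minus:
        return "Interfering"   # bottom-left cluster: G+ ≈ 0.00, G- ≈ 0.00
    return "Unlabeled"         # bottom-right: no annotated cluster


print(classify_head(0.5, 0.5, margin=0.2))  # Intermediate
```
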
### Key Observations

* Facilitating heads quickly reach a gate value of 1.00 and maintain it throughout training.

* Interfering heads quickly reach a gate value of 0.00 and maintain it throughout training.

* Irrelevant heads start with a lower gate value but reach 1.00 after about 500 gradient updates.

* Irrelevant and Facilitating heads are primarily located in the higher layers, while Interfering heads are primarily located in the lower layers.

### Interpretation

The data suggests that the model quickly learns to keep facilitating heads open while suppressing interfering heads. Irrelevant heads start partially gated but open fully later in training. Across layers, interfering heads appear mainly in lower layers, while facilitating and irrelevant heads appear mainly in higher layers. The regularization setting λ < 0 seems to drive the behavior of irrelevant heads, opening their gates fully (gate value = 1.00) after about 500 gradient updates. The G+ and G- values may measure how much a head contributes to the positive and negative gradients, respectively. The distinct clusters in the G+ vs. G- plot indicate that each head type plays a distinct role in the learning process.

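To illustrate how the sign of λ could steer the gates of irrelevant heads, here is a toy simulation under loudly stated assumptions: each gate is G = sigmoid(z) for a trainable logit z, the regularizer is a linear penalty λ·G added to the task loss, and each head type's task gradient with respect to its gate is a constant (negative for facilitating, zero for irrelevant, positive for interfering). None of these choices come from the figure, and `simulate_gate` is a hypothetical name. Under this assumed penalty, λ < 0 pushes a gate with no task signal toward 1.00, λ > 0 pushes it toward 0.00, and λ = 0 leaves it exactly where it started.

```python
import math
from typing import List


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def simulate_gate(task_grad: float, lam: float, steps: int = 1000,
                  lr: float = 0.5, z0: float = 0.0) -> List[float]:
    """Toy gradient descent on one gate logit z, with gate G = sigmoid(z).

    Assumed objective (not from the figure): loss = task_loss(G) + lam * G,
    where task_grad is a constant stand-in for d(task_loss)/dG:
    negative if opening the gate helps (facilitating), zero if the head
    does not matter (irrelevant), positive if it hurts (interfering).
    Returns the gate value after each update.
    """
    z = z0
    trajectory = []
    for _ in range(steps):
        g = sigmoid(z)
        # Chain rule: dloss/dz = (dtask/dG + lam) * dG/dz, and dG/dz = G * (1 - G).
        grad_z = (task_grad + lam) * g * (1.0 - g)
        z -= lr * grad_z
        trajectory.append(sigmoid(z))
    return trajectory


for name, task_grad, lam in [("facilitating", -1.0, 0.0),
                             ("interfering", 1.0, 0.0),
                             ("irrelevant, lam < 0", 0.0, -0.5),
                             ("irrelevant, lam = 0", 0.0, 0.0),
                             ("irrelevant, lam > 0", 0.0, 0.5)]:
    print(f"{name}: final gate = {simulate_gate(task_grad, lam)[-1]:.3f}")
```
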
EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: Neural Network Gate Dynamics & Regularization Effects

### Overview

The image presents a composite diagram illustrating aspects of a neural network, specifically focusing on gate dynamics during training and the impact of regularization. It consists of three sub-figures: (a) a schematic of a neural network layer with gate mechanisms, (b) a plot showing gate value changes during gradient updates, and (c) a scatter plot visualizing the relationship between gate values (G-) and layer depth, colored by head type and regularization strength.

### Components/Axes

**(a) Neural Network Layer Schematic:**

* **W<sub>l</sub><sup>O</sup>**: Represents the output weight matrix for layer *l*. Located at the top center.

* **G<sub>l,1</sub>, G<sub>l,h</sub>, G<sub>l,H</sub>**: Gate units for layer *l*. Arranged horizontally.

* **A<sub>l,1</sub>V<sub>l,1</sub>, A<sub>l,h</sub>V<sub>l,h</sub>, A<sub>l,H</sub>V<sub>l,H</sub>**: Input activations for layer *l*. Arranged horizontally below the gate units.

* Arrows indicate the flow of information.

**(b) Gate Value vs. Gradient Updates:**

* **X-axis**: Gradient Updates (0 to 1000, approximately).

* **Y-axis**: Gate Value (0 to 1.00, approximately).

* A single blue line represents the gate value change over gradient updates.

**(c) Gate Value (G-) vs. Layer Depth:**

* **X-axis**: G- (0.00 to 1.00, approximately).

* **Y-axis**: Layer (0.00 to 0.80, approximately).

* **Colorbar**: Represents Layer depth, ranging from 0 to 25.

* **Legend (Top-Right)**:

* **Regularization**:

* λ < 0 (Black Square)

* λ = 0 (Gray Square)

* λ > 0 (Blue Square)

* **Head Type**:

* Facilitating (Green Circle)

* Irrelevant (Orange Circle)

* Interfering (Red Circle)

* Each scatter point's color indicates its layer depth.

### Detailed Analysis or Content Details

**(a) Neural Network Layer Schematic:**

The diagram shows a network layer in which several gate units control the flow of information from the input activations to the weight matrix. Each gate unit (G<sub>l,i</sub>) appears to modulate its activation (A<sub>l,i</sub>V<sub>l,i</sub>) before the weight matrix (W<sub>l</sub><sup>O</sup>) processes it.

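Assuming the schematic follows the standard multi-head attention layout, the gated layer output can be written as below. Here W<sub>l</sub><sup>O</sup> is taken to be the output projection at the top of the schematic and W<sub>l,h</sub><sup>O</sup> its block of rows for head h; both the concatenation layout and the block notation are assumptions, not labels from the figure. Setting G<sub>l,h</sub> = 0 removes head h's term from the sum, while G<sub>l,h</sub> = 1 leaves it unchanged.

$$
\mathrm{Out}_l = \left[\, G_{l,1} A_{l,1} V_{l,1} \;\; \cdots \;\; G_{l,h} A_{l,h} V_{l,h} \;\; \cdots \;\; G_{l,H} A_{l,H} V_{l,H} \,\right] W_l^{O} = \sum_{h=1}^{H} G_{l,h}\, A_{l,h} V_{l,h}\, W_{l,h}^{O}
$$
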
**(b) Gate Value vs. Gradient Updates:**

The blue line starts at approximately 0.05 at 0 gradient updates, rapidly increases to around 0.95 by 250 gradient updates, and then plateaus around 0.98 for the remainder of the gradient updates. The curve shows a steep initial increase followed by saturation.

**(c) Gate Value (G-) vs. Layer Depth:**

* **Facilitating (Green)**: Points are scattered across the plot, generally concentrated in the upper-right quadrant (high G-, high layer).

* **Irrelevant (Orange)**: Points are clustered in the lower-left quadrant (low G-, low layer) and some in the middle.

* **Interfering (Red)**: Points are concentrated in the lower-left quadrant (low G-, low layer).

* **Regularization λ < 0 (Black)**: A few points are scattered.

* **Regularization λ = 0 (Gray)**: A moderate number of points are scattered.

* **Regularization λ > 0 (Blue)**: The majority of points are scattered.

* The color gradient indicates that points in the upper-right tend to have higher layer values (darker purple/blue).

### Key Observations

* Gate values in (b) quickly saturate during training.

* In (c), Facilitating heads tend to have higher G- values and are found in deeper layers.

* Interfering heads are generally associated with lower G- values and shallower layers.

* The regularization parameter (λ) appears to influence the distribution of points, with λ > 0 having the most points.

* The color gradient in (c) suggests a correlation between G- and layer depth.

### Interpretation

Taken together, the panels show how gate units in a neural network evolve during training and how regularization affects them. The rapid saturation of gate values in (b) indicates that gates quickly learn to either pass or block information. The scatter plot in (c) shows that the head types (Facilitating, Irrelevant, Interfering) occupy distinct regions of gate value and layer depth. Facilitating heads, which presumably help the network perform its task, are more common in deeper layers and have higher gate values. Interfering heads, conversely, sit in shallower layers and have lower gate values. The regularization strength λ appears to shift how these head types are distributed, possibly favoring more beneficial gate configurations. The color gradient in (c) also suggests that gate values correlate with layer depth, which may mean that deeper layers use more selective gating.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## [Multi-Panel Technical Figure]: Gating Mechanism in Multi-Head Attention

### Overview

This image is a three-panel technical figure (labeled a, b, c) illustrating a gating mechanism for multi-head attention layers in a neural network. Panel (a) is a schematic diagram of the architecture. Panel (b) is a line chart showing the evolution of gate values during training. Panel (c) is a scatter plot analyzing the relationship between two gate metrics across different layers and head types.

### Components/Axes

**Panel (a): Schematic Diagram**

* **Top Component:** A blue rounded rectangle labeled **\( W_l^O \)** (Output projection weight matrix for layer \( l \)).

* **Middle Components:** Three orange circles representing gates, labeled **\( G_{l,1} \)**, **\( G_{l,h} \)**, and **\( G_{l,H} \)**. Each has an upward-pointing green arrow connecting it to the \( W_l^O \) block.

* **Bottom Components:** Three green rounded rectangles representing attention head outputs, labeled **\( A_{l,1}V_{l,1} \)**, **\( A_{l,h}V_{l,h} \)**, and **\( A_{l,H}V_{l,H} \)**. Each has an upward-pointing green arrow connecting it to the corresponding gate above.

* **Ellipsis:** The "..." notation in the rows of heads and gates indicates that the layer contains \( H \) heads in total.

**Panel (b): Line Chart - Gate Value vs. Gradient Updates**

* **X-axis:** **Gradient Updates**. Scale: 0 to 1000, with major ticks at 0, 250, 500, 750, 1000.

* **Y-axis:** **Gate Value**. Scale: 0.00 to 1.00, with major ticks at 0.00, 0.25, 0.50, 0.75, 1.00.

* **Legend (Top-Right):**

* **Regularization:** Three line styles.

* Dotted line: **\( \lambda < 0 \)**

* Solid line: **\( \lambda = 0 \)**

* Dash-dot line: **\( \lambda > 0 \)**

* **Head Type:** Three colors.

* Green line: **Facilitating**

* Blue line: **Irrelevant**

* Red/Salmon line: **Interfering**

**Panel (c): Scatter Plot - \( G^+ \) vs. \( G^- \)**

* **X-axis:** **\( G^- \)**. Scale: 0.00 to 1.00, with major ticks at 0.00, 0.25, 0.50, 0.75, 1.00.

* **Y-axis:** **\( G^+ \)**. Scale: 0.00 to 1.00, with major ticks at 0.00, 0.25, 0.50, 0.75, 1.00.

* **Color Bar (Far Right):** Labeled **Layer**. Scale from 0 (dark purple) to 25 (bright yellow), with ticks at 0, 5, 10, 15, 20, 25.

* **Annotations:**

* **Facilitating:** Green text and arrow pointing to a dense cluster of points in the top-right corner (high \( G^- \), high \( G^+ \)).

* **Irrelevant:** Blue text and arrow pointing to a cluster of points along the top-left edge (low \( G^- \), high \( G^+ \)).

* **Interfering:** Red text and arrow pointing to a cluster of points in the bottom-left corner (low \( G^- \), low \( G^+ \)).

### Detailed Analysis

**Panel (b) - Trend Verification:**

1. **Facilitating Head (Green Line):** Starts at a gate value of ~0.6. Shows a sharp, near-vertical increase within the first ~50 gradient updates to a value of ~0.98. It then plateaus, maintaining a value very close to 1.00 for the remainder of training (up to 1000 updates). The line is solid (\( \lambda = 0 \)).

2. **Irrelevant Head (Blue Line):** Starts at a gate value of ~0.4. It initially dips to ~0.15 within the first ~50 updates. It then begins a steady, roughly linear increase, reaching ~0.4 by 500 updates. At exactly 500 updates, it jumps vertically to 1.00 and plateaus. The line is dotted (\( \lambda < 0 \)) before 500 updates and becomes dash-dot (\( \lambda > 0 \)) after.

3. **Interfering Head (Red/Salmon Line):** Starts at a gate value of ~0.35. It shows a sharp, exponential decay, dropping to near 0.00 by ~150 updates. It remains flat at ~0.00 for the rest of training. The line is solid (\( \lambda = 0 \)).

**Panel (c) - Data Point Distribution:**

* **Facilitating Cluster (Top-Right):** A very dense horizontal band of points is located at \( G^+ \approx 1.00 \), spanning \( G^- \) values from ~0.25 to 1.00. The points are predominantly yellow and light green, indicating they belong to higher layers (approximately layers 15-25).

* **Irrelevant Cluster (Top-Left):** A vertical band of points is located at \( G^- \approx 0.00 \), spanning \( G^+ \) values from ~0.00 to 1.00. The colors are mixed, but many points in the upper part of this band (\( G^+ > 0.5 \)) are blue/teal, indicating mid-range layers (approximately layers 5-15).

* **Interfering Cluster (Bottom-Left):** A small, tight cluster of points is located near the origin (\( G^- \approx 0.00, G^+ \approx 0.00 \)). These points are dark purple, indicating they belong to the earliest layers (layers 0-5).

* **Scattered Points:** There are approximately 15-20 scattered points in the central region of the plot (\( G^- \) between 0.25-0.75, \( G^+ \) between 0.25-0.60). These points are mostly teal and green (layers 10-20).

### Key Observations

1. **Clear Behavioral Dichotomy:** The gating mechanism successfully learns to assign drastically different values to different head types: near 1.0 for Facilitating, near 0.0 for Interfering, and a delayed jump to 1.0 for Irrelevant.

2. **Layer-Dependent Specialization:** Panel (c) strongly suggests that head type is correlated with layer depth. Early layers (0-5) contain "Interfering" heads. Mid-layers (5-15) contain "Irrelevant" heads. Later layers (15-25) are dominated by "Facilitating" heads.

3. **Training Dynamics:** The "Irrelevant" head's gate value is sensitive to a change in regularization (from \( \lambda < 0 \) to \( \lambda > 0 \)) at 500 updates, where the gate value jumps to 1.0 and the head becomes fully open.

4. **Metric Relationship:** For "Facilitating" heads, high \( G^+ \) is associated with a wide range of \( G^- \) values. For "Irrelevant" heads, high \( G^+ \) is strictly associated with very low \( G^- \).

### Interpretation

This figure demonstrates a method for dynamically gating (enabling or disabling) attention heads in a Transformer based on their functional role ("Facilitating," "Irrelevant," or "Interfering").

* **What the data suggests:** The system learns to identify and suppress harmful ("Interfering") heads early in training and in early network layers. It identifies "Irrelevant" heads (which may contribute neither positively nor negatively) and eventually opens their gates fully, but only after a specific training event (a change in regularization). "Facilitating" heads, which are beneficial, are consistently activated (gate value ~1) and are found mainly in the deeper layers of the network.

* **How elements relate:** Panel (a) defines the mechanism. Panel (b) shows the training-time behavior of the gates for each head type. Panel (c) analyzes the final gate states by layer and suggests a layer-wise pattern: interfering heads concentrate in early layers, irrelevant heads in middle layers, and facilitating heads in deep layers. This may mean that early layers filter out interference, middle layers carry neutral information, and deep layers do most of the facilitative processing.

* **Notable Anomalies:** The sharp, discontinuous jump of the "Irrelevant" head's gate at 500 updates is a notable event, indicating a potential phase change in training or the effect of a scheduled hyperparameter. The scattered points in the middle of panel (c) may represent heads in transition or with ambiguous roles.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Diagram: Neural Network Layer Analysis with Training Dynamics

### Overview

The image presents a three-part technical visualization analyzing neural network layer dynamics. Part (a) shows a schematic of a layer's weight matrix and gate interactions, part (b) displays training dynamics of different head types, and part (c) illustrates the relationship between two gate metrics across layers.

### Components/Axes

**(a) Neural Network Layer Diagram**

- **Top Block**: Weight matrix $ W_l^O $ (blue rectangle)

- **Middle Layer**: Three orange circles labeled:

- $ G_{l,1} $ (left)

- $ G_{l,h} $ (middle)

- $ G_{l,H} $ (right)

- **Bottom Layer**: Green rectangles with $ A_{l,i}V_{l,i} $ (i=1 to H)

- **Arrows**: Vertical connections between weight matrix → gates → activations

**(b) Training Dynamics Graph**

- **X-axis**: Gradient Updates (0-1000)

- **Y-axis**: Gate Value (0.00-1.00)

- **Lines**:

- Green (Facilitating): Starts at ~0.75, plateaus at 1.00

- Blue (Irrelevant): Starts at 0.00, rises to ~0.50, plateaus

- Red (Interfering): Starts at ~0.75, drops to ~0.25, plateaus

- **Legend**:

- Dotted black: $ \lambda < 0 $

- Solid black: $ \lambda = 0 $

- Dashed black: $ \lambda > 0 $

**(c) Gate Metric Scatter Plot**

- **X-axis**: $ G^- $ (0.00-1.00)

- **Y-axis**: $ G^+ $ (0.00-1.00)

- **Color Coding**:

- Green: Facilitating

- Blue: Irrelevant

- Red: Interfering

- **Layer Scale**: 0-25 (purple to yellow gradient)

- **Legend**:

- Yellow circles: Layer 25

- Purple circles: Layer 0

### Detailed Analysis

**(a) Component Flow**

- Weight matrix $ W_l^O $ sits at the top, connected to the three per-head gates

- Gates $ G_{l,1} $, $ G_{l,h} $, $ G_{l,H} $ belong to heads 1, h, and H of the layer (H heads in total), and each modulates the corresponding activation

- Activations $ A_{l,i}V_{l,i} $ represent processed information flow

**(b) Training Dynamics**

- **Facilitating Heads** (green):

- Rapid convergence to maximum gate value (1.00)

- Stabilizes by ~250 updates

- **Irrelevant Heads** (blue):

- Gradual increase to 0.50 gate value

- Plateaus after ~500 updates

- **Interfering Heads** (red):

- Initial high gate value (~0.75)

- Sharp decline to 0.25 by ~250 updates

**(c) Gate Metric Relationships**

- **Facilitating Heads** (green):

- Clustered at high $ G^+ $ (0.75-1.00) and moderate $ G^- $ (0.25-0.75)

- Layer 25 (yellow) shows highest $ G^+ $

- **Irrelevant Heads** (blue):

- Distributed across mid-range $ G^+ $ (0.25-0.50) and $ G^- $ (0.25-0.75)

- **Interfering Heads** (red):

- Located at low $ G^+ $ (0.00-0.25) and low $ G^- $ (0.00-0.25)

### Key Observations

1. **Training Stability**: Facilitating heads stabilize earliest (250 updates), while irrelevant heads take longest (500+ updates)

2. **Gate Value Correlation**: Higher $ G^+ $ values are associated with the Facilitating heads

3. **Layer Depth**: Deeper layers (yellow) show more extreme $ G^+ $ values

4. **Interfering Head Pattern**: Consistent low performance across all layers

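The stabilization times in the first observation depend on what counts as "stable". One hypothetical way to make that precise from logged gate values is to find the first update after which the trajectory stays within a tolerance of its final value. The function name and the 0.02 default tolerance are illustrative assumptions; for the trajectory in the usage line, every value from index 2 onward is within 0.02 of the final 1.0.

```python
from typing import Optional, Sequence


def updates_to_stabilize(trajectory: Sequence[float],
                         tol: float = 0.02) -> Optional[int]:
    """Return the first update index from which every later gate value stays
    within tol of the final value, or None for an empty trajectory.

    Hypothetical definition of "stable"; the tolerance is an illustrative choice.
    """
    if not trajectory:
        return None
    final = trajectory[-1]
    stable_from = len(trajectory) - 1
    # Walk backwards from the end until a value leaves the tolerance band.
    for i in range(len(trajectory) - 1, -1, -1):
        if abs(trajectory[i] - final) > tol:
            break
        stable_from = i
    return stable_from


print(updates_to_stabilize([0.75, 0.9, 0.99, 1.0, 1.0]))  # 2
```
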
### Interpretation

The visualization demonstrates how different attention head types behave during training:

- **Facilitating Heads** (green) show optimal training dynamics, quickly reaching maximum gate values and maintaining stability

- **Irrelevant Heads** (blue) have gate values that rise gradually but plateau around 0.50, well below the maximum

- **Interfering Heads** (red) start with a high gate value (~0.75) that drops rapidly to ~0.25 and then plateaus

The scatter plot in (c) shows that effective heads (Facilitating) occupy the upper part of the $ G^+ $-$ G^- $ space (high $ G^+ $, moderate $ G^- $), suggesting that high $ G^+ $ marks beneficial heads. The layer depth gradient indicates that deeper layers tend to develop more extreme gate values, potentially reflecting increased representational power or overfitting risks.

The training dynamics in (b) point to regularization ($ \lambda $) as a lever for controlling head behavior, with different $ \lambda $ values affecting convergence patterns. This analysis could help identify and manage different head types during training when optimizing neural network architectures.
