TECHNICAL ASSET FINGERPRINT

7e9f3ad3321ba26dc064c09e



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

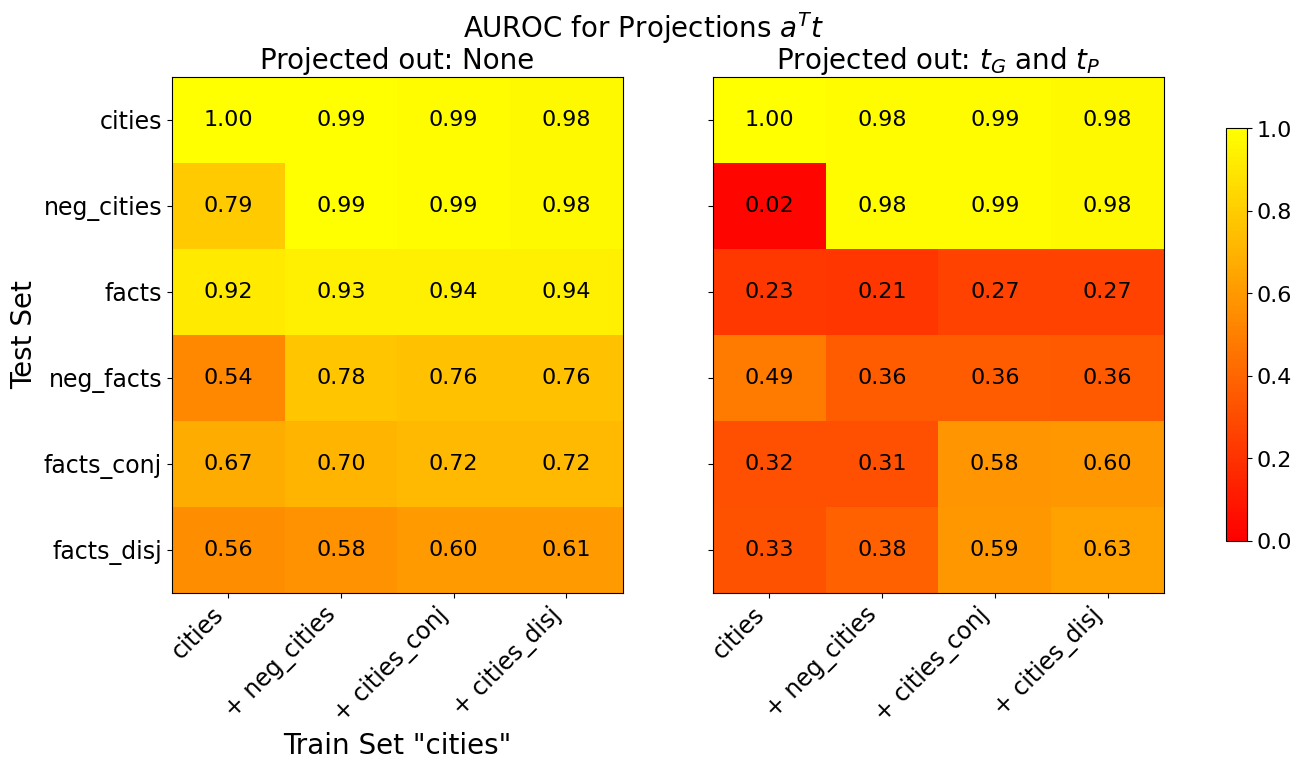

## Heatmap: AUROC for Projections a^Tt

### Overview

The image presents two heatmaps comparing the Area Under the Receiver Operating Characteristic Curve (AUROC) for projections a^Tt. The left heatmap shows results when nothing is projected out ("Projected out: None"), while the right heatmap shows results after tG and tP are projected out ("Projected out: tG and tP"). Rows correspond to test sets (cities, neg_cities, facts, neg_facts, facts_conj, facts_disj) and columns to train sets (cities, + neg_cities, + cities_conj, + cities_disj); the "+" prefix suggests that each column adds a dataset to the previous training set. Cell color encodes the AUROC score, from red (0.0) to yellow (1.0), as indicated by the colorbar on the right.
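As a rough illustration of the metric, the sketch below computes AUROC from scalar projection scores. It assumes that a is an activation vector, t is a direction, and the score is their dot product; this reading of a^Tt, the toy data, and the helper names `project` and `auroc` are assumptions rather than details given by the figure.

```python
def project(a, t):
    """Scalar score a^T t (assumed meaning: activation a, direction t)."""
    return sum(ai * ti for ai, ti in zip(a, t))


def auroc(scores, labels):
    """Probability that a random positive outscores a random negative.

    Ties count as half a win. Labels are 1 (positive) or 0 (negative).
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy data (hypothetical): two positives, two negatives, one direction.
t = [1.0, 0.0]
activations = [[2.0, 0.3], [1.5, -0.2], [-1.0, 0.1], [-0.5, 0.4]]
labels = [1, 1, 0, 0]

scores = [project(a, t) for a in activations]
toy_auroc = auroc(scores, labels)
print(toy_auroc)
```

An AUROC of 0.5 corresponds to chance-level ranking, so values well below 0.5 indicate a ranking that is systematically reversed rather than merely uninformative.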

### Components/Axes

* **Title:** AUROC for Projections a^Tt

* **X-axis (Train Set "cities"):** cities, + neg\_cities, + cities\_conj, + cities\_disj

* **Y-axis (Test Set):** cities, neg\_cities, facts, neg\_facts, facts\_conj, facts\_disj

* **Heatmap 1 Title:** Projected out: None

* **Heatmap 2 Title:** Projected out: tG and tP

* **Colorbar:** Ranges from 0.0 (red) to 1.0 (yellow), with increments of 0.2.

### Detailed Analysis

**Heatmap 1: Projected out: None**

| Test Set (rows) vs. Train Set (columns) | cities | + neg\_cities | + cities\_conj | + cities\_disj |
| :---------- | :----- | :------------ | :------------- | :------------- |
| cities | 1.00 | 0.99 | 0.99 | 0.98 |
| neg\_cities | 0.79 | 0.99 | 0.99 | 0.98 |
| facts | 0.92 | 0.93 | 0.94 | 0.94 |
| neg\_facts | 0.54 | 0.78 | 0.76 | 0.76 |
| facts\_conj | 0.67 | 0.70 | 0.72 | 0.72 |
| facts\_disj | 0.56 | 0.58 | 0.60 | 0.61 |

* **cities:** Consistently high AUROC scores (0.98-1.00) across all train sets.

* **neg\_cities:** High AUROC scores (0.98-0.99) except when trained on "cities" alone (0.79).

* **facts:** High AUROC scores (0.92-0.94) across all train sets.

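* **neg\_facts:** Lowest score in the grid when trained on "cities" alone (0.54, close to chance), rising to 0.76-0.78 once "neg\_cities" is added.

* **facts\_conj:** Moderate AUROC scores (0.67-0.72).

* **facts\_disj:** Low AUROC scores (0.56-0.61), only slightly above chance and the weakest row once "neg\_cities" is in the training set.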

**Heatmap 2: Projected out: tG and tP**

| Test Set (rows) vs. Train Set (columns) | cities | + neg\_cities | + cities\_conj | + cities\_disj |
| :---------- | :----- | :------------ | :------------- | :------------- |
| cities | 1.00 | 0.98 | 0.99 | 0.98 |
| neg\_cities | 0.02 | 0.98 | 0.99 | 0.98 |
| facts | 0.23 | 0.21 | 0.27 | 0.27 |
| neg\_facts | 0.49 | 0.36 | 0.36 | 0.36 |
| facts\_conj | 0.32 | 0.31 | 0.58 | 0.60 |
| facts\_disj | 0.33 | 0.38 | 0.59 | 0.63 |

* **cities:** Consistently high AUROC scores (0.98-1.00) across all train sets.

* **neg\_cities:** Very low AUROC score when trained on "cities" alone (0.02), but high scores (0.98-0.99) when trained on other sets.

* **facts:** Below-chance AUROC scores (0.21-0.27) across all train sets.

* **neg\_facts:** At or below chance: 0.49 when trained on "cities" alone and 0.36 for the other train sets.

* **facts\_conj:** Below chance (0.31-0.32) when trained on "cities" and "+ neg\_cities", but slightly above chance (0.58-0.60) once "cities\_conj" and "cities\_disj" are added.

* **facts\_disj:** Below chance (0.33-0.38) when trained on "cities" and "+ neg\_cities", but slightly above chance (0.59-0.63) once "cities\_conj" and "cities\_disj" are added.

### Key Observations

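* Projecting out tG and tP sharply lowers AUROC on the "facts"-based test sets, while the "cities" test set is essentially unchanged (0.98-1.00 in both heatmaps).

* The "neg\_cities" test set drops from 0.79 to 0.02 when trained only on "cities", but stays at 0.98-0.99 for every training set that includes "neg\_cities".

* The "facts" and "neg\_facts" test sets fall below chance (0.5) for every training set. "facts\_conj" and "facts\_disj" fall below chance for the first two training sets and land near 0.6 for the last two, where "facts\_disj" is roughly unchanged from the left heatmap (0.59 vs. 0.60 and 0.63 vs. 0.61).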
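The per-cell change between the two heatmaps can be tabulated directly from the values transcribed above. The following is a minimal sketch; the variable names are illustrative only.

```python
TRAIN = ["cities", "+ neg_cities", "+ cities_conj", "+ cities_disj"]

# AUROC per test set (rows) and train set (columns), as read from the heatmaps.
NONE = {
    "cities":     [1.00, 0.99, 0.99, 0.98],
    "neg_cities": [0.79, 0.99, 0.99, 0.98],
    "facts":      [0.92, 0.93, 0.94, 0.94],
    "neg_facts":  [0.54, 0.78, 0.76, 0.76],
    "facts_conj": [0.67, 0.70, 0.72, 0.72],
    "facts_disj": [0.56, 0.58, 0.60, 0.61],
}
PROJECTED = {
    "cities":     [1.00, 0.98, 0.99, 0.98],
    "neg_cities": [0.02, 0.98, 0.99, 0.98],
    "facts":      [0.23, 0.21, 0.27, 0.27],
    "neg_facts":  [0.49, 0.36, 0.36, 0.36],
    "facts_conj": [0.32, 0.31, 0.58, 0.60],
    "facts_disj": [0.33, 0.38, 0.59, 0.63],
}

# Change in AUROC after projecting out tG and tP (negative means a drop).
delta = {
    test: [round(p - n, 2) for n, p in zip(NONE[test], PROJECTED[test])]
    for test in NONE
}

# Cell with the largest drop, as (change, test set, train set).
worst = min(
    (d, test, TRAIN[i]) for test, row in delta.items() for i, d in enumerate(row)
)

for test, row in delta.items():
    print(f"{test:<11}", "  ".join(f"{d:+.2f}" for d in row))
print("largest drop:", worst)
```

Laid out this way, the "facts" row loses roughly 0.7 in every column, while the single largest drop is the "neg\_cities" test set with "cities"-only training.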

### Interpretation

The heatmaps show how removing the tG and tP components affects a classifier trained and tested on different datasets. The large drop on the "facts"-based test sets suggests that tG and tP carry most of the information that generalizes from the "cities" training data to those datasets. The "cities" test set is insensitive to the removal, which indicates that in-distribution performance can rely on other directions. For "neg\_cities", training on "cities" alone yields 0.02 after the removal, meaning the remaining signal ranks the examples almost perfectly in reverse; adding "neg\_cities" to the training data restores 0.98. Overall, the results suggest that what remains after projecting out tG and tP fits the training distribution but transfers poorly to unseen topics and statement types.
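One common way to "project out" directions, sketched here under the assumption that tG and tP are vectors in the same space as the activations, is to subtract the activation's component in their span. The figure does not state how the projection was implemented, so the function names and toy vectors below are hypothetical.

```python
def dot(u, v):
    return sum(x * y for x, y in zip(u, v))


def orthonormalize(directions, tol=1e-12):
    """Gram-Schmidt: orthonormal basis for the span of the given directions."""
    basis = []
    for d in directions:
        r = list(d)
        for b in basis:
            c = dot(r, b)
            r = [ri - c * bi for ri, bi in zip(r, b)]
        norm = dot(r, r) ** 0.5
        if norm > tol:  # skip directions already contained in the span
            basis.append([ri / norm for ri in r])
    return basis


def project_out(a, directions):
    """Remove from a its component in the span of the directions."""
    r = list(a)
    for b in orthonormalize(directions):
        c = dot(r, b)
        r = [ri - c * bi for ri, bi in zip(r, b)]
    return r


# Toy 3-D example (hypothetical vectors, not taken from the figure).
t_g = [1.0, 1.0, 0.0]
t_p = [1.0, 0.0, 0.0]
a = [3.0, 2.0, 5.0]

residual = project_out(a, [t_g, t_p])
print(residual)
```

After this step the residual has zero dot product with both directions, so any classifier applied to it can only use information outside their span.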


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

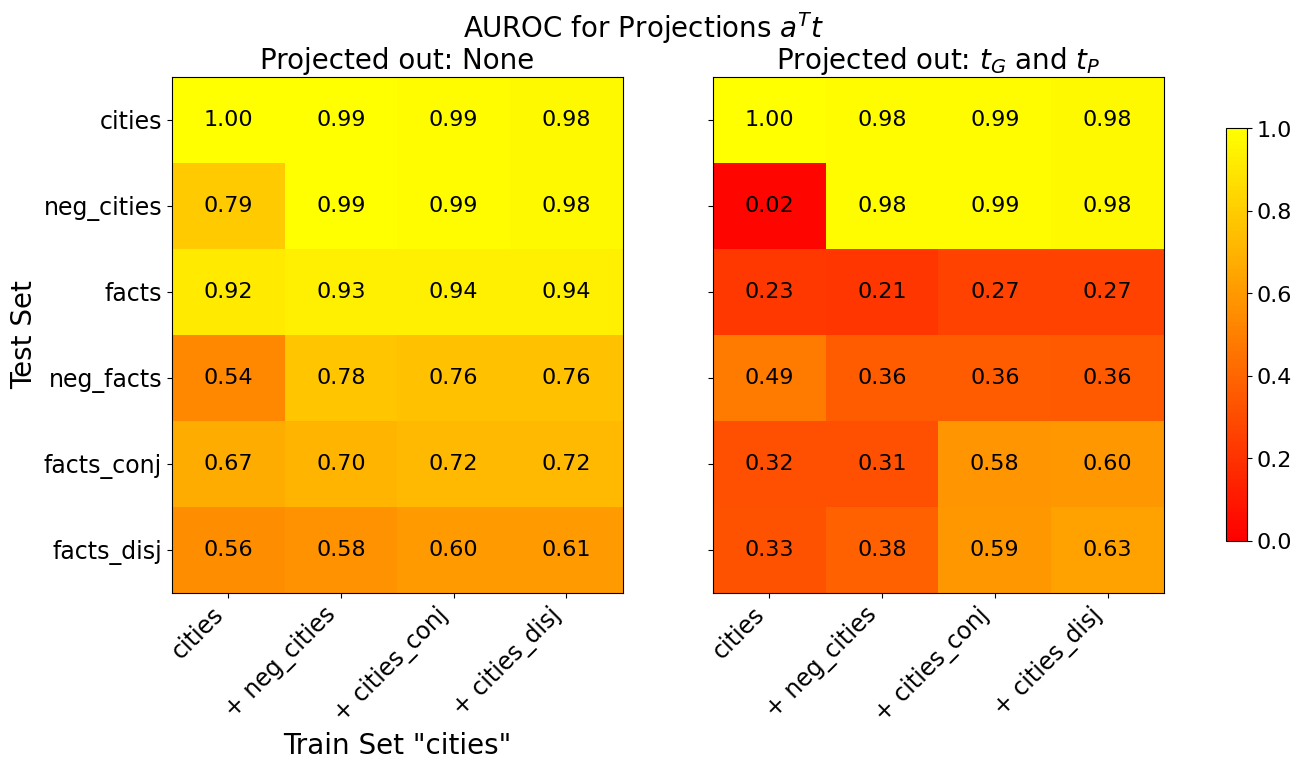

## Heatmap: AUROC for Projections aᵀt

### Overview

This image presents two heatmaps comparing Area Under the Receiver Operating Characteristic curve (AUROC) scores for different training and testing sets. The left heatmap shows results when nothing is projected out ("Projected out: None") and the right heatmap shows results after *t<sub>G</sub>* and *t<sub>P</sub>* are projected out, that is, removed ("Projected out: t<sub>G</sub> and t<sub>P</sub>"). Both heatmaps use the same training and testing set categories.

### Components/Axes

* **Title:** "AUROC for Projections aᵀt" (centered at the top)

* **Subtitles:** "Projected out: None" (top-left) and "Projected out: t<sub>G</sub> and t<sub>P</sub>" (top-right)

* **X-axis Label:** "Train Set 'cities'" (bottom-center)

* **Y-axis Label:** "Test Set" (left-center)

* **X-axis Categories:** "cities", "+ neg\_cities", "+ cities\_conj", "+ cities\_disj"

* **Y-axis Categories:** "cities", "neg\_cities", "facts", "neg\_facts", "facts\_conj", "facts\_disj"

* **Color Scale/Legend:** A vertical color bar on the right side, ranging from dark red (approximately 0.0) to yellow (approximately 1.0). The scale indicates AUROC scores.

### Detailed Analysis

**Left Heatmap ("Projected out: None")**

The left heatmap shows generally high AUROC scores. Scores decrease moving down the rows, from the "cities"-based test sets to the "facts"-based ones. Rows are test sets and columns are train sets.

| Test Set | cities | + neg\_cities | + cities\_conj | + cities\_disj |
| :---------- | :----- | :------------ | :------------- | :------------- |
| cities | 1.00 | 0.99 | 0.99 | 0.98 |
| neg\_cities | 0.79 | 0.99 | 0.99 | 0.98 |
| facts | 0.92 | 0.93 | 0.94 | 0.94 |
| neg\_facts | 0.54 | 0.78 | 0.76 | 0.76 |
| facts\_conj | 0.67 | 0.70 | 0.72 | 0.72 |
| facts\_disj | 0.56 | 0.58 | 0.60 | 0.61 |

**Right Heatmap ("Projected out: t<sub>G</sub> and t<sub>P</sub>")**

The right heatmap shows a more varied range of AUROC scores, with some significant drops in performance compared to the left heatmap. Rows are test sets and columns are train sets.

| Test Set | cities | + neg\_cities | + cities\_conj | + cities\_disj |
| :---------- | :----- | :------------ | :------------- | :------------- |
| cities | 1.00 | 0.98 | 0.99 | 0.98 |
| neg\_cities | 0.02 | 0.98 | 0.99 | 0.98 |
| facts | 0.23 | 0.21 | 0.27 | 0.27 |
| neg\_facts | 0.49 | 0.36 | 0.36 | 0.36 |
| facts\_conj | 0.32 | 0.31 | 0.58 | 0.60 |
| facts\_disj | 0.33 | 0.38 | 0.59 | 0.63 |

### Key Observations

* Projecting out *t<sub>G</sub>* and *t<sub>P</sub>* sharply reduces the AUROC scores on the "facts", "neg\_facts", "facts\_conj", and "facts\_disj" test sets, and on "neg\_cities" when training on "cities" alone (0.79 to 0.02).

* The highest scores occur when the test set's data type is part of the training data: "cities" scores 0.98-1.00 everywhere, and "neg\_cities" scores 0.98-0.99 once it is added to the training set. The grid is 6x4 with different row and column categories, so it has no true diagonal.

* Training on "cities" alone generalizes unevenly in the "Projected out: None" heatmap: 0.92 on "facts", but only 0.54-0.79 on the other test sets not seen in training.

* The "neg\_cities" test set reaches 0.98-0.99 for every training set that includes it, but only 0.79 when training on "cities" alone, in the "Projected out: None" heatmap.

* The "facts" category shows high performance (0.92-0.94) and "facts\_conj" moderate performance (0.67-0.72) in the "Projected out: None" heatmap.

* The "facts\_disj" category shows among the lowest performance (0.56-0.61) in the "Projected out: None" heatmap.

### Interpretation

The data suggests that the directions *t<sub>G</sub>* and *t<sub>P</sub>* carry most of the information that generalizes beyond the training distribution, especially from the "cities" training set to other categories. "Projected out" means these directions are removed from the representation, so the substantial drop in AUROC scores indicates that what remains is much less useful for classification on unseen test sets; several scores fall below the chance level of 0.5.

The high performance when the test category is part of the training data indicates that the classifier fits the training distribution well under both conditions. The drop on the "facts"-based test sets, which is moderate in the left heatmap and severe in the right one, shows that generalization to new categories depends heavily on the removed directions.

The difference between the two heatmaps highlights how much of the useful signal is concentrated in these two directions. The "Projected out: None" heatmap suggests that the original feature space is relatively well-suited for the task, while the "Projected out: t<sub>G</sub> and t<sub>P</sub>" heatmap indicates that the representation loses most of its transferable signal once they are removed.

The consistently low performance on "facts\_disj" suggests that this category is inherently more difficult to classify, potentially due to its complexity or ambiguity. Further investigation into the characteristics of this category may be warranted.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED


## Heatmap Pair: AUROC for Projections a^T t

### Overview

The image displays two side-by-side heatmaps visualizing the Area Under the Receiver Operating Characteristic curve (AUROC) scores for a machine learning model's performance. The overall title is "AUROC for Projections a^T t". The heatmaps compare model performance under two different conditions: one with no components projected out (left) and one with components t_G and t_P projected out (right). The data represents performance when training on a base "cities" dataset and testing on various related and unrelated test sets.

### Components/Axes

* **Main Title:** "AUROC for Projections a^T t"

* **Left Heatmap Subtitle:** "Projected out: None"

* **Right Heatmap Subtitle:** "Projected out: t_G and t_P"

* **Y-Axis Label (Shared):** "Test Set"

* **Y-Axis Categories (Top to Bottom):** `cities`, `neg_cities`, `facts`, `neg_facts`, `facts_conj`, `facts_disj`

* **X-Axis Label (Shared):** "Train Set 'cities'"

* **X-Axis Categories (Left to Right):** `cities`, `+ neg_cities`, `+ cities_conj`, `+ cities_disj`

* **Color Bar/Legend (Far Right):** A vertical gradient bar labeled from 0.0 (red) to 1.0 (yellow), indicating the AUROC score scale. Yellow represents perfect classification (1.0), 0.5 corresponds to chance-level ranking, and red (0.0) represents perfectly inverted ranking.

### Detailed Analysis

The heatmaps are 6x4 grids. Each cell contains a numerical AUROC value. The color of each cell corresponds to its value based on the color bar.

**Left Heatmap (Projected out: None):**

* **Row `cities`:** Values are consistently high: 1.00, 0.99, 0.99, 0.98. The row is uniformly bright yellow.

* **Row `neg_cities`:** Starts lower at 0.79 (orange-yellow), then jumps to high values: 0.99, 0.99, 0.98 (yellow).

* **Row `facts`:** Shows moderately high, stable values: 0.92, 0.93, 0.94, 0.94 (yellow).

* **Row `neg_facts`:** Starts near chance and rises once `neg_cities` is added to the training set: 0.54, 0.78, 0.76, 0.76 (orange to yellow-orange).

* **Row `facts_conj`:** Values are in the mid-range: 0.67, 0.70, 0.72, 0.72 (orange).

* **Row `facts_disj`:** Values are in the mid-range: 0.56, 0.58, 0.60, 0.61 (orange).

**Right Heatmap (Projected out: t_G and t_P):**

* **Row `cities`:** Remains high: 1.00, 0.98, 0.99, 0.98 (yellow).

* **Row `neg_cities`:** Shows a dramatic drop in the first column to 0.02 (deep red), then recovers to high values: 0.98, 0.99, 0.98 (yellow).

* **Row `facts`:** Shows a severe, uniform drop across all columns: 0.23, 0.21, 0.27, 0.27 (red-orange).

* **Row `neg_facts`:** Shows a significant drop: 0.49, 0.36, 0.36, 0.36 (orange-red).

* **Row `facts_conj`:** Drops below chance in the first two columns and partially recovers in the last two: 0.32, 0.31, 0.58, 0.60 (red-orange to orange).

* **Row `facts_disj`:** Drops below chance in the first two columns and returns to roughly its left-heatmap level in the last two: 0.33, 0.38, 0.59, 0.63 (red-orange to orange).

### Key Observations

1. **Performance Collapse for `facts` Test Set:** The most striking observation is the collapse on the `facts` test set in the right heatmap (0.21-0.27, well below the chance level of 0.5) compared to the left (0.92-0.94). This indicates that the ability to classify `facts` depends almost entirely on the information in the projected-out components t_G and t_P, and that the remaining signal is anti-correlated with the labels on this test set.

2. **Selective Impact on `neg_cities`:** Projecting out t_G and t_P catastrophically affects performance on `neg_cities` test set when trained only on `cities`. Performance recovers when the training set is augmented with other data (`+ neg_cities`, etc.).

3. **General Performance Degradation:** For the `facts`, `neg_facts`, and `facts_conj` test sets, AUROC scores are lower in every column of the right heatmap; `facts_disj` is lower in the first two columns and roughly unchanged in the last two (0.59 vs. 0.60 and 0.63 vs. 0.61). Projecting out t_G and t_P therefore removes information useful for a broad range of test sets.

4. **Stability of `cities` Test Set:** Performance on the `cities` test set itself remains perfect or near-perfect (0.98-1.00) in both conditions, suggesting the core information for this task is not contained in t_G or t_P.

### Interpretation

This analysis investigates the role of specific model components (t_G and t_P) in performing various classification tasks. The "Projected out" condition acts as an ablation study.

* **What the data suggests:** The components t_G and t_P appear to encode information that is **critical for reasoning about "facts"** (both positive and negative) and their logical conjunctions/disjunctions. Their removal devastates performance on these tasks. Conversely, these components seem **largely irrelevant for the basic "cities" task**, as performance on that test set is unaffected.

* **How elements relate:** The heatmaps demonstrate a clear dichotomy. The left map shows the model's baseline capability across tasks when using all its components. The right map reveals a functional specialization: t_G and t_P are a "knowledge bottleneck" for fact-based reasoning. The recovery of performance on `neg_cities` when the training set is augmented suggests alternative pathways for that specific task exist outside of t_G and t_P.

* **Notable anomalies:** The value **0.02** for `neg_cities` in the right heatmap is a critical outlier. It indicates that when trained only on `cities` and deprived of t_G/t_P, the model's predictions on the `neg_cities` test set are worse than random guessing (AUROC < 0.5). This could imply the model is making systematically incorrect predictions, perhaps due to a strong, now-removed, confounding bias.

* **Underlying implication:** The argument is abductive: since removing t_G and t_P specifically and severely impairs performance on the fact-related test sets, the most plausible explanation is that those components carry the representational structure those test sets rely on. This helps map the internal "geography" of the model's knowledge.
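The remark about AUROC below 0.5 can be checked numerically: negating the scores turns an AUROC of x into 1 - x, so 0.02 corresponds to 0.98 for the sign-flipped score. A small sketch with made-up scores follows; the data and the `auroc` helper are illustrative, not taken from the figure.

```python
def auroc(scores, labels):
    """Fraction of (positive, negative) pairs ranked correctly; ties count 0.5."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical scores that rank most positives below the negatives.
scores = [0.9, 0.8, 0.3, 0.7, 0.2, 0.1]
labels = [0, 0, 0, 1, 1, 1]

original = auroc(scores, labels)
flipped = auroc([-s for s in scores], labels)

print(f"original: {original:.3f}, sign-flipped: {flipped:.3f}")
```

A score far below 0.5 therefore still separates the two classes; it simply points in the opposite direction from the one learned on the training set.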


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmap: AUROC for Projections a^Tt

### Overview

The image presents two side-by-side heatmaps comparing Area Under the Receiver Operating Characteristic (AUROC) values for different combinations of training and test sets under two scenarios: (1) nothing projected out, and (2) `t_G` and `t_P` projected out (removed). The heatmaps use a color gradient from red (low AUROC) to yellow (high AUROC), with numerical values explicitly labeled in each cell.

---

### Components/Axes

- **X-axis (Train Set "cities")**, subcategories:
  - `cities`
  - `+ neg_cities`
  - `+ cities_conj`
  - `+ cities_disj`
- **Y-axis (Test Set)**, subcategories:
  - `cities`
  - `neg_cities`
  - `facts`
  - `neg_facts`
  - `facts_conj`
  - `facts_disj`
- **Legend**: A color bar on the right maps AUROC values from 0.0 (red) to 1.0 (yellow).
- **Key Titles**:
  - Main title: "AUROC for Projections a^Tt"
  - Subtitles:
    - Left heatmap: "Projected out: None"
    - Right heatmap: "Projected out: t_G and t_P"

---

### Detailed Analysis

#### Left Heatmap (Projected out: None)

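- **Trend**: AUROC values are high (≥0.92) for the `cities`, `neg_cities` (except 0.79 when trained on `cities` alone), and `facts` test sets, and lower (0.54–0.78) for `neg_facts`, `facts_conj`, and `facts_disj`. The grid is 6×4 with different row and column categories, so there is no true diagonal; the highest values (0.98–1.00) occur where the test set is part of the training data.
- **Notable Values** (test set vs. train set):
  - `cities` vs. `cities`: 1.00
  - `neg_cities` vs. `cities`: 0.79
  - `facts` vs. `cities`: 0.92
  - `facts_disj` vs. `cities`: 0.56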

#### Right Heatmap (Projected out: t_G and t_P)

- **Trend**: AUROC values drop sharply on the `facts`-based test sets compared to the left heatmap, with those cells in red/orange (0.21–0.63). The `cities` test set, and `neg_cities` once it is part of the training data, stay high (0.98–1.00).
- **Notable Values** (test set vs. train set):
  - `neg_cities` vs. `cities`: 0.02 (lowest value)
  - `facts` vs. `cities`: 0.23
  - `facts_disj` vs. `cities`: 0.33
  - `facts_disj` vs. `+ cities_disj`: 0.63

---

### Key Observations

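1. **In-Distribution Dominance**: Both heatmaps show high AUROC values (0.98–1.00) where the test set is part of the training data, indicating strong performance when train and test distributions match.

2. **Projection Impact**: Projecting out `t_G` and `t_P` sharply reduces AUROC on test sets not covered by the training data. For example, `neg_cities` vs. `cities` drops from 0.79 to 0.02 (about 97% in relative terms) and `facts` vs. `cities` drops from 0.92 to 0.23 (75%).

3. **Category Sensitivity**:

- `facts` shows high and `neg_facts` moderate performance in the left heatmap, but both fall below chance (0.5) under projection (e.g., `facts` vs. `cities` drops from 0.92 to 0.23). `facts` shows the largest row-wide drop, about 0.7 in every column.

- `facts_conj` and `facts_disj` also fall below chance for the first two training sets, but recover to about 0.6 once `cities_conj` and `cities_disj` are added to the training data.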
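The relative size of these drops follows from simple arithmetic on the quoted cell values. A short sketch (the helper name `relative_drop` is illustrative):

```python
def relative_drop(before, after):
    """Fraction of the original AUROC lost after projection."""
    return (before - after) / before


# (test set, train set) -> (AUROC with nothing projected out, AUROC after projection)
cells = {
    ("neg_cities", "cities"): (0.79, 0.02),
    ("facts", "cities"): (0.92, 0.23),
    ("facts_disj", "cities"): (0.56, 0.33),
}

drops = {key: relative_drop(b, a) for key, (b, a) in cells.items()}

for (test, train), d in drops.items():
    print(f"{test} vs. {train}: {d:.0%} relative drop")
```

Because AUROC has a chance level of 0.5 rather than 0, relative drops of this kind are only a rough summary; the absolute values and their position relative to 0.5 are more informative.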

---

### Interpretation

The data demonstrates that projecting out `t_G` and `t_P` severely limits the model's ability to generalize across different categories. While the unprojected scenario maintains a high AUROC even for a dissimilar category (e.g., `facts` vs. `cities` at 0.92), the projected scenario collapses performance for this case (0.23). This suggests that `t_G` and `t_P` contain critical information for generalizing across categories, and that their removal disrupts the model's discriminative power on unseen data. The high values wherever the test set is part of the training data, in both heatmaps, highlight the importance of matching train and test distributions.

**Notable Anomaly**: The extreme drop in AUROC for `neg_cities` vs. `cities` under projection (0.02) is far below the chance level of 0.5. Rather than a failure to distinguish the classes, it indicates that the remaining signal ranks `neg_cities` examples almost perfectly in reverse when `t_G` and `t_P` are removed and only `cities` is used for training.
