TECHNICAL ASSET FINGERPRINT

7ea18beb86cc84e702062a0e

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Task-Oriented Robot Navigation and Manipulation

### Overview

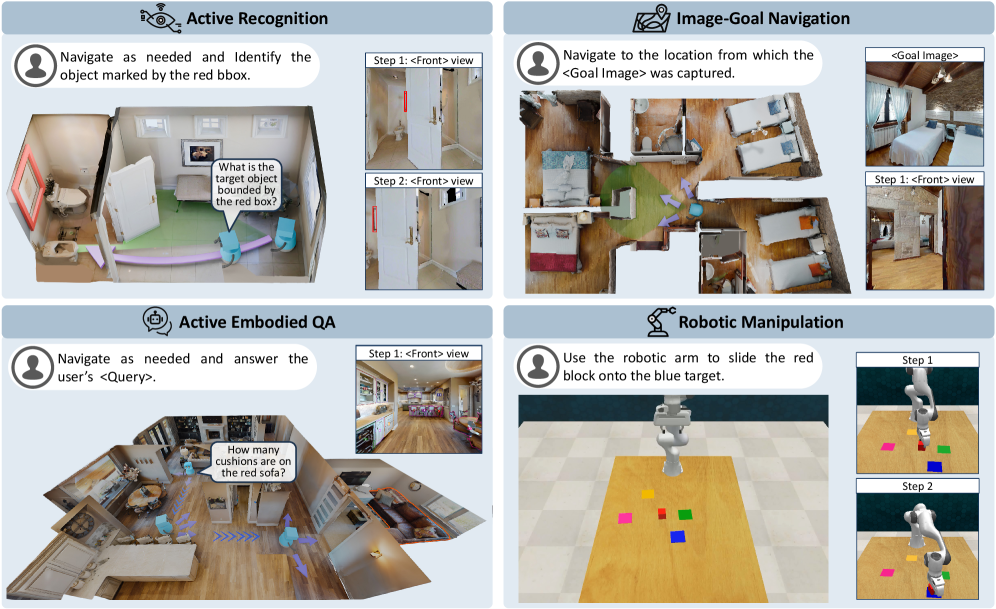

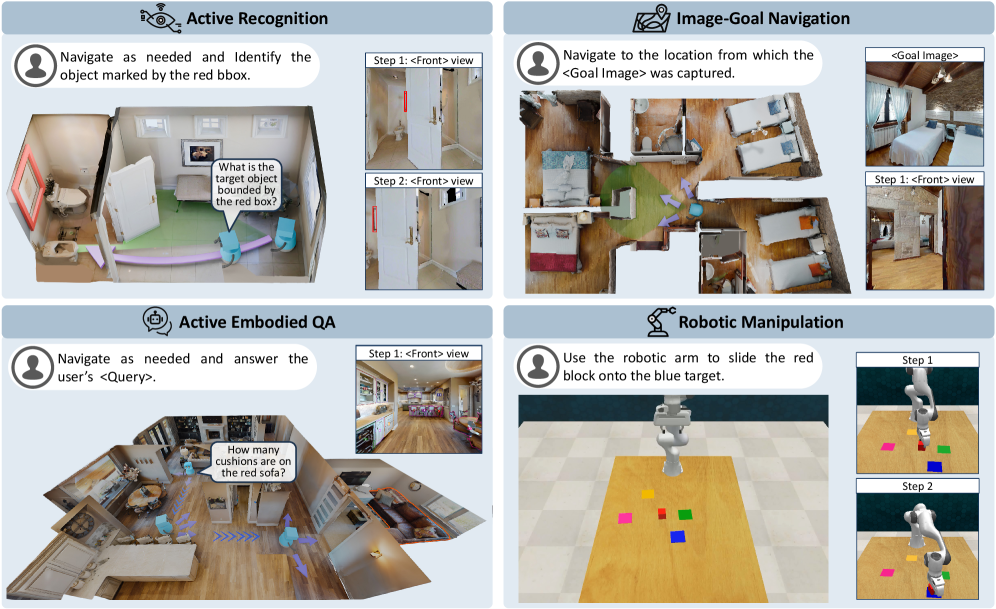

The image presents four distinct tasks for robot navigation and manipulation within simulated environments. Each task is visually depicted with a top-down view of the environment, robot agent(s), and target objects or goals. The tasks are: Active Recognition, Image-Goal Navigation, Active Embodied QA, and Robotic Manipulation. Each task includes a brief description of the objective and visual examples of the environment and robot actions.

### Components/Axes

**1. Active Recognition:**

* **Title:** Active Recognition

* **Objective:** Navigate as needed and identify the object marked by the red bounding box (bbox).

* **Environment:** A bathroom scene with a toilet, sink, door, and other furniture.

* **Agent:** Represented by a humanoid icon.

* **Visual Cues:**

    * Red bounding box highlighting a specific object (e.g., a door frame).

    * Green shaded area indicating the navigable space.

    * Purple arrows indicating the agent's movement.

* **Steps:**

    * Step 1: <Front> view - A front view of a door with a red bounding box around the door frame.

    * Step 2: <Front> view - A front view of a door with a red bounding box around the door frame.

* **Question:** "What is the target object bounded by the red box?"

**2. Image-Goal Navigation:**

* **Title:** Image-Goal Navigation

* **Objective:** Navigate to the location from which the <Goal Image> was captured.

* **Environment:** A bedroom scene with beds, furniture, and windows.

* **Agent:** Represented by a humanoid icon.

* **Visual Cues:**

    * <Goal Image>: A photograph of the bedroom from a specific viewpoint.

    * Green shaded area indicating the navigable space.

    * Purple arrows indicating the agent's movement.

* **Steps:**

    * Step 1: <Front> view - A front view of the bedroom matching the <Goal Image>.

**3. Active Embodied QA:**

* **Title:** Active Embodied QA

* **Objective:** Navigate as needed and answer the user's <Query>.

* **Environment:** A living room/kitchen scene with sofas, tables, chairs, and kitchen appliances.

* **Agent:** Represented by a humanoid icon.

* **Visual Cues:**

    * Purple arrows indicating the agent's movement.

* **Steps:**

    * Step 1: <Front> view - A front view of the kitchen area.

* **Question:** "How many cushions are on the red sofa?"

**4. Robotic Manipulation:**

* **Title:** Robotic Manipulation

* **Objective:** Use the robotic arm to slide the red block onto the blue target.

* **Environment:** A table with colored blocks (red, blue, yellow, green).

* **Agent:** Represented by a robotic arm.

* **Visual Cues:**

    * Colored blocks (red, blue, yellow, green).

* **Steps:**

    * Step 1: The robotic arm is positioned above the table with the colored blocks.

    * Step 2: The robotic arm has moved the red block onto the blue target.

### Detailed Analysis

* **Active Recognition:** The agent needs to identify the object within the red bounding box by navigating the environment. The example shows the agent identifying a door frame.

* **Image-Goal Navigation:** The agent needs to navigate to the location where the goal image was taken. The example shows the agent navigating to a specific viewpoint in a bedroom.

* **Active Embodied QA:** The agent needs to answer a question about the environment by navigating and observing. The example shows the agent answering a question about the number of cushions on a red sofa.

* **Robotic Manipulation:** The robotic arm needs to manipulate objects in the environment to achieve a specific goal. The example shows the arm sliding a red block onto a blue target.

### Key Observations

* The tasks involve different levels of complexity, ranging from object recognition to question answering and robotic manipulation.

* The environments are simulated and visually realistic.

* The agents are represented by humanoid icons or robotic arms.

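* Taken together, the tasks require both navigation and interaction with the environment: the first three involve navigation, and the fourth involves manipulation with a robotic arm.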

### Interpretation

The image illustrates a range of tasks that robots can perform in simulated environments. These tasks demonstrate capabilities in object recognition, navigation, question answering, and manipulation, and each requires the robot to reason about its environment and act within it. The image suggests that robots are becoming increasingly capable of performing complex tasks, although the examples shown are simulated rather than real-world environments.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Robotic Task Demonstrations

### Overview

The image presents a 2x2 grid of diagrams illustrating four different robotic tasks: Active Recognition, Image-Goal Navigation, Active Embodied QA, and Robotic Manipulation. Each task is visually demonstrated through a sequence of images showing the robot's perspective and actions within a room environment. Each task has a description and a query/instruction.

### Components/Axes

Each quadrant of the image contains:

* **Task Title:** Located at the top-left corner, indicating the type of robotic task.

* **Icon:** A small icon representing the task (e.g., a magnifying glass for Active Recognition).

* **Human Icon:** A small icon of a person, representing the user or the task initiator.

* **Description:** A brief textual description of the task.

* **Query/Instruction:** A text box containing the specific question or instruction given to the robot.

* **Image Sequence:** A series of images showing the robot's view and actions during the task. Each image is labeled with a "Step" number and a view direction (e.g., "Step 1: <Front> view").

* **Goal Image (Image-Goal Navigation only):** A small image in the top-right corner showing the target view.

* **Target (Robotic Manipulation only):** A blue square indicating the target location.
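The per-quadrant elements listed above map naturally onto a small record type. The following is a minimal sketch, not a format defined by the figure: the `TaskPanel` class and its field names are hypothetical, and the strings are copied from the panel text quoted in this description.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class TaskPanel:
    """Hypothetical record for one quadrant of the figure."""
    title: str
    description: str
    query: Optional[str] = None          # only some panels pose a question
    steps: List[str] = field(default_factory=list)
    has_goal_image: bool = False         # Image-Goal Navigation only
    has_target: bool = False             # Robotic Manipulation only


FRONT_STEPS = ["Step 1: <Front> view", "Step 2: <Front> view"]

PANELS = [
    TaskPanel(
        title="Active Recognition",
        description="Navigate as needed and Identify the object marked by the red bbox.",
        query="What is the target object bounded by the red box?",
        steps=list(FRONT_STEPS),
    ),
    TaskPanel(
        title="Image-Goal Navigation",
        description="Navigate to the location from which the <Goal Image> was captured.",
        steps=list(FRONT_STEPS),
        has_goal_image=True,
    ),
    TaskPanel(
        title="Active Embodied QA",
        description="Navigate as needed and answer the user's <Query>.",
        query="How many cushions are on the red sofa?",
        steps=list(FRONT_STEPS),
    ),
    TaskPanel(
        title="Robotic Manipulation",
        description="Use the robotic arm to slide the red block onto the blue target.",
        steps=["Step 1", "Step 2"],
        has_target=True,
    ),
]

if __name__ == "__main__":
    for panel in PANELS:
        print(f"{panel.title}: {len(panel.steps)} steps, query={panel.query!r}")
```

Keeping `query`, `has_goal_image`, and `has_target` optional mirrors the fact that those elements appear only in some quadrants.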

### Detailed Analysis

**1. Active Recognition:**

* **Description:** "Navigate as needed and Identify the object marked by the red bbox."

* **Query:** "What is the target object bounded by the red box?"

* **Step 1: <Front> view:** Shows a room with a red bounding box around a chair.

* **Step 2: <Front> view:** Shows a closer view of the chair, presumably from the robot's perspective.

**2. Image-Goal Navigation:**

* **Description:** "Navigate to the location from which the <Goal Image> was captured."

* **Goal Image:** Shows a view of the room with a bed and a window.

* **Step 1: <Front> view:** Shows a room with a bed and a window.

* **Step 2: <Front> view:** Shows a different perspective of the room, presumably from the robot's initial location.

**3. Active Embodied QA:**

* **Description:** "Navigate as needed and answer the user's <Query>."

* **Query:** "How many cushions are on the red sofa?"

* **Step 1: <Front> view:** Shows a room with a red sofa and several cushions.

* **Step 2: <Front> view:** Shows a closer view of the red sofa and cushions.

**4. Robotic Manipulation:**

* **Description:** "Use the robotic arm to slide the red block onto the blue target."

* **Step 1:** Shows a robotic arm positioned near a red block and a blue target.

* **Step 2:** Shows the robotic arm sliding the red block onto the blue target.

### Key Observations

* Each task demonstrates a different aspect of robotic intelligence: object recognition, navigation, question answering, and manipulation.

* The image sequences show the robot's perspective, allowing us to understand how it perceives and interacts with the environment.

* The tasks are presented in a visually clear and intuitive manner.

* The use of bounding boxes and targets helps to focus the robot's attention on specific objects or locations.

### Interpretation

The image illustrates a set of capabilities for a robotic system operating in a domestic environment. The tasks are designed to test the robot's ability to understand natural language instructions, navigate complex spaces, recognize objects, and perform physical manipulations. The sequence of images for each task suggests a process of perception, planning, and action. The tasks are representative of the challenges involved in creating robots that can assist humans in everyday life. The image does not provide quantitative data, but rather serves as a qualitative demonstration of the robot's potential. The tasks are designed to be increasingly complex, starting with simple object recognition and progressing to more sophisticated manipulation tasks. The use of a "front view" perspective suggests that the robot is equipped with a camera and is able to process visual information. The image highlights the importance of integrating perception, navigation, and manipulation capabilities in order to create truly intelligent robots.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Four Embodied AI Task Scenarios

### Overview

The image is a composite diagram divided into four distinct panels, each illustrating a different task for an embodied AI agent (e.g., a robot). The panels are arranged in a 2x2 grid. Each panel contains a title, a task instruction, a primary 3D scene visualization, and, in most cases, a secondary panel showing a first-person ("Front") view or a step-by-step sequence. The overall theme is demonstrating capabilities in navigation, visual understanding, question answering, and physical manipulation within simulated indoor environments.

### Components/Axes

The diagram is segmented into four quadrants:

1. **Top-Left:** "Active Recognition"

2. **Top-Right:** "Image-Goal Navigation"

3. **Bottom-Left:** "Active Embodied QA"

4. **Bottom-Right:** "Robotic Manipulation"

Each quadrant contains:

* A title with an associated icon.

* A text bubble with a task instruction directed at an agent (represented by a person icon).

* A main visual scene (a top-down or isometric view of a room or workspace).

* A secondary visual element (a "Front" view panel or a step-by-step sequence).

### Detailed Analysis

#### Panel 1: Active Recognition (Top-Left)

* **Instruction Text:** "Navigate as needed and Identify the object marked by the red bbox."

* **Main Scene:** A top-down view of a living room. A light blue agent figure is shown with a purple path indicating movement. A red bounding box highlights a picture frame on the wall. A speech bubble from the agent asks: "What is the target object bounded by the red box?"

* **Secondary Panel (Right):** Two stacked images labeled "Step 1: <Front> view" and "Step 2: <Front> view". Both show a first-person perspective looking at a white door with a red vertical handle. The view in Step 2 appears slightly closer or adjusted compared to Step 1.

#### Panel 2: Image-Goal Navigation (Top-Right)

* **Instruction Text:** "Navigate to the location from which the <Goal Image> was captured."

* **Main Scene:** A top-down view of a multi-room apartment (bedroom, bathroom, living area). A light blue agent figure is shown with a purple path leading from a starting point to a goal location in the bedroom. A green cone emanates from the agent, indicating its field of view.

* **Secondary Panel (Right):** Two images. The top is labeled "<Goal Image>" and shows a bedroom with a bed, wooden ceiling, and a window. The bottom is labeled "Step 1: <Front> view" and shows a first-person perspective from within the bedroom, looking towards a doorway.
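One way to picture the image-goal objective is as a matching problem: the agent compares its current front view against the goal image and prefers the viewpoint that looks most similar. The sketch below is illustrative only. The feature vectors, viewpoint names, and the choice of cosine similarity are assumptions made for this example, not details taken from the figure.

```python
import math
from typing import Dict, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat an all-zero vector as matching nothing
    return dot / (norm_a * norm_b)


def best_viewpoint(goal: Sequence[float],
                   views: Dict[str, Sequence[float]]) -> str:
    """Return the name of the candidate view most similar to the goal image."""
    return max(views, key=lambda name: cosine(goal, views[name]))


if __name__ == "__main__":
    # Made-up 3-dimensional "image features" for the goal and two candidate views.
    goal_features = [1.0, 0.0, 1.0]
    candidate_views = {
        "hallway": [0.0, 1.0, 0.0],
        "bedroom": [0.9, 0.1, 1.0],
    }
    print(best_viewpoint(goal_features, candidate_views))
```

In a real system the vectors would come from an image encoder, and the agent would move between comparisons rather than choose from a fixed set.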

#### Panel 3: Active Embodied QA (Bottom-Left)

* **Instruction Text:** "Navigate as needed and answer the user's <Query>."

* **Main Scene:** An isometric view of a large, open-plan living and kitchen area. A light blue agent figure is shown with a purple path. A speech bubble from a user (person icon) asks: "How many cushions are on the red sofa?" The red sofa is visible in the scene.

* **Secondary Panel (Right):** A single image labeled "Step 1: <Front> view". It shows a first-person perspective from the kitchen area, looking towards the living room where the red sofa is partially visible.

#### Panel 4: Robotic Manipulation (Bottom-Right)

* **Instruction Text:** "Use the robotic arm to slide the red block onto the blue target."

* **Main Scene:** A top-down view of a wooden table. A white robotic arm is positioned over the table. On the table are several colored blocks: pink, yellow, green, red, and blue. The blue block is square and appears to be the target.

* **Secondary Panel (Right):** Two images showing a sequence. "Step 1" shows the robotic arm's gripper approaching the red block. "Step 2" shows the gripper having pushed the red block so that it is now on top of the blue target block.
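The instruction "slide the red block onto the blue target" implies a success condition: the block ends up within the target region. A minimal sketch of such a check follows, assuming a flat 2-D table, a square target, and a straight-line push. The coordinates, sizes, and function names are hypothetical and do not come from the figure.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Point = Tuple[float, float]


@dataclass(frozen=True)
class Target:
    """Axis-aligned square target on the table (hypothetical geometry)."""
    cx: float
    cy: float
    half_side: float


def on_target(block: Point, target: Target) -> bool:
    """True when the block's centre lies inside the target square."""
    return (abs(block[0] - target.cx) <= target.half_side
            and abs(block[1] - target.cy) <= target.half_side)


def slide_step(block: Point, target: Target, max_move: float) -> Point:
    """Slide the block at most max_move along a straight line to the target centre."""
    dx, dy = target.cx - block[0], target.cy - block[1]
    dist = math.hypot(dx, dy)
    if dist <= max_move:
        return (target.cx, target.cy)
    scale = max_move / dist
    return (block[0] + dx * scale, block[1] + dy * scale)


def slide_until_done(block: Point, target: Target,
                     max_move: float = 0.1, max_steps: int = 50) -> Tuple[Point, int]:
    """Repeat slide steps until the block is on the target or the budget runs out."""
    steps = 0
    while not on_target(block, target) and steps < max_steps:
        block = slide_step(block, target, max_move)
        steps += 1
    return block, steps


if __name__ == "__main__":
    blue_target = Target(cx=0.3, cy=0.4, half_side=0.05)
    final_pos, n_steps = slide_until_done((0.0, 0.0), blue_target)
    print(final_pos, n_steps, on_target(final_pos, blue_target))
```

The step budget keeps the loop bounded even if the block can never reach the target.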

### Key Observations

* **Consistent Agent Representation:** The AI agent is consistently visualized as a light blue, simplified humanoid figure in the navigation tasks (Panels 1-3).

* **Path Visualization:** Movement is indicated by a semi-transparent purple path with directional arrows.

* **First-Person Verification:** Three of the four tasks include a "<Front> view" panel, emphasizing the importance of the agent's egocentric visual perspective for completing the task.

* **Task Progression:** The "Robotic Manipulation" panel explicitly shows a two-step action sequence, while the others imply navigation steps.

* **Environment Complexity:** The environments range from a single room (Active Recognition) to a multi-room apartment (Image-Goal Navigation) and a complex open-plan space (Active Embodied QA).

### Interpretation

This diagram serves as a visual taxonomy or set of examples for core challenges in embodied AI. It demonstrates how an intelligent agent must integrate several capabilities:

1. **Perception & Grounding:** Identifying objects (Active Recognition) and understanding spatial relationships from images (Image-Goal Navigation).

2. **Action & Planning:** Generating navigation paths (purple lines) and physical manipulation sequences (sliding the block).

3. **Interactive Reasoning:** Combining navigation with visual question answering (Active Embodied QA), where the agent must move to gather visual information to answer a query.

4. **Multi-Modal Instruction Following:** Each task begins with a natural language instruction that the agent must interpret and execute.

The inclusion of both third-person (top-down) and first-person ("<Front> view") perspectives highlights a key research challenge: bridging the gap between an external observer's understanding of a scene and the agent's limited, on-board sensory input. The tasks progress from pure perception (Recognition) to goal-directed navigation, then to interactive QA, and finally to direct physical interaction, showcasing a hierarchy of complexity in agent capabilities. The clean, simulated environments suggest these are likely benchmark tasks for training and evaluating AI agents in controlled settings before deployment in the real world.
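The interactive-reasoning pattern described above (move to gather visual information, then answer) can be sketched as a simple observe-and-move loop. This is a toy under stated assumptions: the one-dimensional world, the visibility threshold, and the cushion count are all invented for illustration and are not read from the figure.

```python
from typing import List, Optional, Tuple

# Hypothetical toy world: the agent stands at an integer position on a line.
# The sofa's cushions can only be counted from positions >= SOFA_VISIBLE_FROM.
SOFA_VISIBLE_FROM = 3
CUSHION_COUNT = 4  # made-up ground truth for the toy scene


def front_view(position: int) -> Optional[int]:
    """Return the cushion count if the sofa is in view, else None."""
    return CUSHION_COUNT if position >= SOFA_VISIBLE_FROM else None


def answer_query(start: int, max_steps: int = 10) -> Tuple[Optional[int], List[str]]:
    """Move forward until the front view is sufficient, then answer."""
    position = start
    log: List[str] = []
    for step in range(1, max_steps + 1):
        view = front_view(position)
        log.append(f"Step {step}: <Front> view at position {position}")
        if view is not None:
            return view, log  # enough information gathered: answer now
        position += 1  # "navigate as needed"
    return None, log  # step budget exhausted without a sufficient view


if __name__ == "__main__":
    answer, trace = answer_query(start=0)
    print("\n".join(trace))
    print("Answer:", answer)
```

The key design point is that answering is gated on the observation, so the number of navigation steps depends on where the agent starts.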


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Multi-Task Robotics System Architecture

### Overview

The image depicts a 2x2 grid of diagrams illustrating four distinct robotics tasks: **Active Recognition**, **Image-Goal Navigation**, **Active Embodied QA**, and **Robotic Manipulation**. Each quadrant includes textual instructions, visual steps, and spatial reasoning elements.

### Components/Axes

#### Active Recognition (Top-Left)

- **Task Label**: "Active Recognition" with a speech bubble icon.

- **Instruction**: "Navigate as needed and Identify the object marked by the red bbox."

- **Speech Bubble**: "What is the target object bounded by the red box?"

- **Steps**:

    - Step 1: `<Front> view` (image of a door with a red bounding box).

    - Step 2: `<Front> view` (image of a room with a red bounding box).

- **Visuals**: 3D room model with a red bounding box highlighting a target object.

#### Image-Goal Navigation (Top-Right)

- **Task Label**: "Image-Goal Navigation" with a map icon.

- **Instruction**: "Navigate to the location from which the <Goal Image> was captured."

- **Steps**:

    - Step 1: `<Front> view` (image of a room with a green arrow pointing to a target location).

    - Goal Image: Bedroom scene with two beds.

- **Visuals**: 3D room model with a green arrow indicating the goal location.

#### Active Embodied QA (Bottom-Left)

- **Task Label**: "Active Embodied QA" with a speech bubble icon.

- **Instruction**: "Navigate as needed and answer the user’s <Query>."

- **Speech Bubble**: "How many cushions are on the red sofa?"

- **Steps**:

    - Step 1: `<Front> view` (image of a kitchen with a red sofa).

- **Visuals**: 3D room model with a red sofa and blue arrows indicating movement.

#### Robotic Manipulation (Bottom-Right)

- **Task Label**: "Robotic Manipulation" with a robotic arm icon.

- **Instruction**: "Use the robotic arm to slide the red block onto the blue target."

- **Steps**:

    - Step 1: Robot arm approaching red block.

    - Step 2: Robot arm placing red block on blue target.

- **Visuals**: Grid with colored blocks (red, yellow, green, blue) and a robotic arm.

### Detailed Analysis

- **Textual Elements**:

    - All tasks include explicit instructions and step-by-step visuals.

    - Speech bubbles and step labels (e.g., `<Front> view`) are consistently used across quadrants.

    - Color-coded elements (red bounding boxes, green arrows, blue arrows) denote targets or actions.

- **Spatial Grounding**:

    - Red bounding boxes in Active Recognition and Active Embodied QA highlight objects of interest.

    - Green arrows in Image-Goal Navigation indicate goal locations.

    - Blue arrows in Active Embodied QA suggest movement paths.

    - Robotic Manipulation uses colored blocks (red, blue) to denote objects and targets.

### Key Observations

1. **Task Consistency**: Each quadrant follows a structured format: task label, instruction, steps, and visuals.

2. **Color Coding**: Red is used for target objects (bounding boxes, blocks), green for navigation goals, and blue for movement paths.

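3. **Step Progression**: The numbered steps in each quadrant show incremental actions (e.g., identifying objects, navigating, manipulating); Active Recognition and Robotic Manipulation show two steps, while the other two quadrants list only Step 1.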

### Interpretation

The diagram illustrates a modular robotics system capable of:

1. **Object Recognition**: Identifying targets via bounding boxes.

2. **Goal Navigation**: Using visual cues (arrows, images) to reach locations.

3. **Question Answering**: Answering spatial queries (e.g., counting objects).

4. **Robotic Action**: Executing precise manipulations (e.g., sliding blocks).

The system emphasizes **active perception** (navigation, recognition) and **embodied interaction** (QA, manipulation), suggesting integration of computer vision, spatial reasoning, and robotic control. The use of color-coded visuals and step-by-step instructions implies a focus on human-robot collaboration, where users guide robots through tasks using intuitive cues.

No numerical data or charts are present; the image focuses on task descriptions and spatial relationships.
