## Line Charts: Average maj@K Accuracy vs. K for Different Models and Sequence Lengths

### Overview

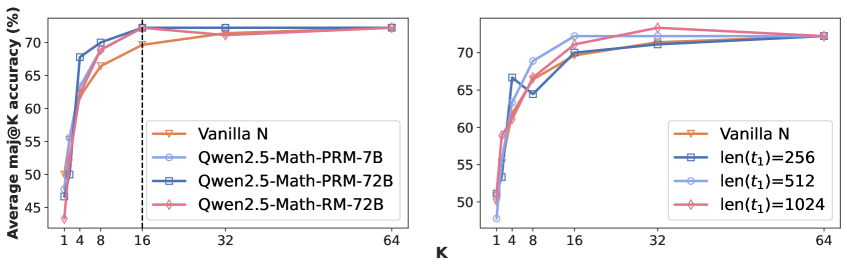

The image contains two side-by-side line charts. Both charts plot "Average maj@K accuracy (%)" on the y-axis against "K" on the x-axis. The left chart compares the performance of different model architectures and sizes. The right chart compares performance for different input sequence lengths (`len(t1)`). Both charts show accuracy increasing with K and then plateauing.

### Components/Axes

**Common Elements:**

* **X-axis:** Label: `K`. Ticks and values: `1`, `4`, `8`, `16`, `32`, `64`. The scale is logarithmic.

* **Y-axis:** Label: `Average maj@K accuracy (%)`. Ticks and values: `50`, `55`, `60`, `65`, `70`.

* **Chart Type:** Line chart with markers at data points.

* **Grid:** Light horizontal grid lines are present at the major y-axis ticks.

**Left Chart - Model Comparison:**

* **Legend:** Located in the bottom-right quadrant of the chart area.

  * `Vanilla N` - Orange line with downward-pointing triangle markers.

  * `Qwen2.5-Math-PRM-7B` - Light blue line with circle markers.

  * `Qwen2.5-Math-PRM-72B` - Dark blue line with square markers.

  * `Qwen2.5-Math-RM-72B` - Pink line with diamond markers.

* **Annotation:** A vertical dashed black line is drawn at `K=16`.

**Right Chart - Sequence Length Comparison:**

* **Legend:** Located in the bottom-right quadrant of the chart area.

  * `Vanilla N` - Orange line with downward-pointing triangle markers.

  * `len(t1)=256` - Dark blue line with square markers.

  * `len(t1)=512` - Light blue line with circle markers.

  * `len(t1)=1024` - Pink line with diamond markers.
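
The layout described above could be recreated roughly as follows. This is a minimal sketch using matplotlib, not the code that produced the original figure: the color names are guesses at "orange", "light blue", "dark blue", and "pink", the base-2 log axis is an assumption based on the tick values, and `build_figure` is a hypothetical helper name. No data is plotted; only axes, legend entries, and the K=16 annotation are set up.

```python
K_TICKS = [1, 4, 8, 16, 32, 64]
Y_TICKS = [50, 55, 60, 65, 70]

# Legend entries as described: label -> (color, marker).
# Color names are approximations of the described colors (assumption).
LEFT_STYLES = {
    "Vanilla N": ("orange", "v"),
    "Qwen2.5-Math-PRM-7B": ("lightskyblue", "o"),
    "Qwen2.5-Math-PRM-72B": ("navy", "s"),
    "Qwen2.5-Math-RM-72B": ("hotpink", "D"),
}
RIGHT_STYLES = {
    "Vanilla N": ("orange", "v"),
    "len(t1)=256": ("navy", "s"),
    "len(t1)=512": ("lightskyblue", "o"),
    "len(t1)=1024": ("hotpink", "D"),
}


def build_figure():
    """Build an empty two-panel figure matching the described layout."""
    # Imported lazily so the style tables above can be used without matplotlib.
    import matplotlib
    matplotlib.use("Agg")  # headless backend, so no display is required
    import matplotlib.pyplot as plt
    from matplotlib.ticker import ScalarFormatter

    fig, axes = plt.subplots(1, 2, figsize=(10, 4))
    for ax, styles in zip(axes, (LEFT_STYLES, RIGHT_STYLES)):
        ax.set_xscale("log", base=2)  # assumed base; ticks are mostly powers of 2
        ax.set_xticks(K_TICKS)
        ax.xaxis.set_major_formatter(ScalarFormatter())  # show 1, 4, 8 instead of 2^n
        ax.set_yticks(Y_TICKS)
        ax.set_xlabel("K")
        ax.set_ylabel("Average maj@K accuracy (%)")
        ax.grid(axis="y", alpha=0.3)  # light horizontal grid lines only
        for label, (color, marker) in styles.items():
            ax.plot([], [], color=color, marker=marker, label=label)
        ax.legend(loc="lower right")
    # The vertical dashed line appears only in the left chart.
    axes[0].axvline(16, color="black", linestyle="--")
    return fig
```

Calling `build_figure().savefig("layout.png")` would write the empty layout to disk; actual curves would be added with further `ax.plot` calls.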

### Detailed Analysis

**Left Chart (Model Comparison):**

* **Trend Verification:** All four lines show a steep upward slope from K=1 to K=8, a gentler slope from K=8 to K=16, and then plateau with minimal change from K=16 to K=64.

* **Data Points (Approximate Values):**

  * **Vanilla N (Orange, Triangle):** K=1: ~50%, K=4: ~62%, K=8: ~67%, K=16: ~70%, K=32: ~71%, K=64: ~72%.

  * **Qwen2.5-Math-PRM-7B (Light Blue, Circle):** K=1: ~47%, K=4: ~68%, K=8: ~70%, K=16: ~72%, K=32: ~72%, K=64: ~72%.

  * **Qwen2.5-Math-PRM-72B (Dark Blue, Square):** K=1: ~46%, K=4: ~65%, K=8: ~70%, K=16: ~72%, K=32: ~72%, K=64: ~72%.

  * **Qwen2.5-Math-RM-72B (Pink, Diamond):** K=1: ~44%, K=4: ~63%, K=8: ~69%, K=16: ~72%, K=32: ~72%, K=64: ~72%.

* **Key Observations:** At K=1, the `Vanilla N` baseline has the highest accuracy (~50%, versus ~44-47% for the Qwen models). By K=4, all three Qwen models overtake it, led by `Qwen2.5-Math-PRM-7B` (~68% vs. ~62%). From K=16 onward, the three Qwen models converge to nearly identical accuracy (~72%); `Vanilla N` trails by about 1-2 points at K=16 and K=32 (~70-71%) and catches up by K=64 (~72%). The vertical line at K=16 marks the point where performance largely stabilizes.
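
The left-chart observations can be checked against the approximate values with a short script. This is a sketch for illustration only: the numbers are the rounded readings listed above, not exact measurements, and the helper names `step_gains` and `leaders_at` are hypothetical.

```python
# Approximate readings from the left chart, in percent.
K_VALUES = [1, 4, 8, 16, 32, 64]
LEFT = {
    "Vanilla N":            [50, 62, 67, 70, 71, 72],
    "Qwen2.5-Math-PRM-7B":  [47, 68, 70, 72, 72, 72],
    "Qwen2.5-Math-PRM-72B": [46, 65, 70, 72, 72, 72],
    "Qwen2.5-Math-RM-72B":  [44, 63, 69, 72, 72, 72],
}


def step_gains(values):
    """Accuracy change between each pair of consecutive K values."""
    return [later - earlier for earlier, later in zip(values, values[1:])]


def leaders_at(series, k):
    """Names of the series with the highest accuracy at a given K."""
    i = K_VALUES.index(k)
    best = max(values[i] for values in series.values())
    return [name for name, values in series.items() if values[i] == best]


for name, values in LEFT.items():
    print(f"{name:22s} gains per step: {step_gains(values)}")

print("Leader at K=1:", leaders_at(LEFT, 1))  # ['Vanilla N']
print("Leader at K=4:", leaders_at(LEFT, 4))  # ['Qwen2.5-Math-PRM-7B']
```

The per-step gains make the plateau explicit: for the three Qwen series the last two steps (K=16 to 32 and K=32 to 64) add 0 points, while `Vanilla N` still adds about 1 point per step.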

**Right Chart (Sequence Length Comparison):**

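* **Trend Verification:** All four lines follow the same general trend: a sharp rise at small K, followed by a plateau from about K=16. Two small deviations appear in the approximate values: the `len(t1)=256` line dips slightly at K=8 (~66% to ~65%), and the `len(t1)=1024` line shows a slight peak at K=32 before settling. Both are about 1 point, which is within the reading error of these values.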

* **Data Points (Approximate Values):**

  * **Vanilla N (Orange, Triangle):** K=1: ~51%, K=4: ~62%, K=8: ~67%, K=16: ~70%, K=32: ~71%, K=64: ~72%.

  * **len(t1)=256 (Dark Blue, Square):** K=1: ~51%, K=4: ~66%, K=8: ~65%, K=16: ~70%, K=32: ~71%, K=64: ~72%.

  * **len(t1)=512 (Light Blue, Circle):** K=1: ~48%, K=4: ~66%, K=8: ~69%, K=16: ~72%, K=32: ~72%, K=64: ~72%.

  * **len(t1)=1024 (Pink, Diamond):** K=1: ~51%, K=4: ~63%, K=8: ~69%, K=16: ~72%, K=32: ~73%, K=64: ~72%.

* **Key Observations:** At K=1, performance is similar across all conditions (~48-51%). At K=4, the `len(t1)=256` and `len(t1)=512` conditions lead (~66% vs. ~62-63%). At K=16 and K=32, the longer sequence lengths (`512` and `1024`) reach marginally higher accuracy (~72-73%) than the shorter `256` length and the `Vanilla N` baseline (~70-71%). By K=64 all four conditions sit at about 72%. The `len(t1)=1024` condition shows the highest single point, at K=32 (~73%).
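
To see where each `len(t1)` condition is ahead of or behind the baseline, the gap to `Vanilla N` can be computed at every K. This sketch uses the rounded right-chart readings from above, so differences of 1 point are not meaningful; `gap_vs_baseline` and `dips` are hypothetical helper names.

```python
# Approximate readings from the right chart, in percent.
K_VALUES = [1, 4, 8, 16, 32, 64]
RIGHT = {
    "Vanilla N":    [51, 62, 67, 70, 71, 72],
    "len(t1)=256":  [51, 66, 65, 70, 71, 72],
    "len(t1)=512":  [48, 66, 69, 72, 72, 72],
    "len(t1)=1024": [51, 63, 69, 72, 73, 72],
}


def gap_vs_baseline(series, baseline="Vanilla N"):
    """Point difference between each series and the baseline, per K."""
    base = series[baseline]
    return {
        name: [value - ref for value, ref in zip(values, base)]
        for name, values in series.items()
        if name != baseline
    }


def dips(values):
    """Pairs (K_from, K_to) where accuracy falls between consecutive K values."""
    return [
        (K_VALUES[i], K_VALUES[i + 1])
        for i in range(len(values) - 1)
        if values[i + 1] < values[i]
    ]


for name, gaps in gap_vs_baseline(RIGHT).items():
    print(f"{name:13s} gap vs. Vanilla N: {gaps}  dips: {dips(RIGHT[name])}")
```

With these readings, `len(t1)=512` and `len(t1)=1024` are ahead of the baseline from K=8 through K=32 and level with it at K=64, while `len(t1)=256` is level with the baseline from K=16 onward.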

### Interpretation

The charts suggest two findings related to the "maj@K" (majority vote over K samples) evaluation metric:

1. **Model Architecture and Scale:** The left chart suggests that the Qwen2.5-Math variants surpass the `Vanilla N` baseline under majority voting, but only once several samples are available (K≥4); at K=1 the baseline is ahead. The 7B model matches both 72B models to within about a point from K=8 onward, which points to diminishing returns from scaling from 7B to 72B parameters for this specific task and metric. The chart includes no model larger than 72B, so it says nothing about scaling beyond that size. The convergence of all Qwen models at K≥16 implies that, with enough samples, model size and training type (PRM vs. RM) matter little for majority-vote accuracy.

2. **Input Sequence Length:** The right chart indicates that longer input contexts (`len(t1)=512` or `1024`) yield a small accuracy benefit of about 1-2 points over the shorter context (`256`) at K=16 and K=32; by K=64 all conditions sit at about 72%. One possible explanation is that a richer initial context helps the model generate a set of solutions in which the correct answer appears more often, making the majority vote more reliable at moderate K. The ~1-point peak at K=32 for the longest sequence is within the reading error of these approximate values and would need exact data to confirm.

**Overall Implication:** For high maj@K accuracy at moderate cost, the charts favor a specialized model (such as the Qwen2.5-Math series) with `len(t1)` of at least 512 and K of at least 16. Beyond K=16 those curves gain at most about 1 point, so a larger K mostly adds computational cost. Only the `Vanilla N` and `len(t1)=256` curves keep improving, by roughly 1 point per doubling of K, until they reach the same ~72% at K=64.
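
For readers unfamiliar with the metric, the following is a minimal sketch of how an average maj@K accuracy is commonly computed: for each problem, take the most frequent final answer among the first K samples and compare it with the reference. The function names, the string answer format, and the tie-breaking rule (first answer encountered wins) are assumptions for illustration; the source does not specify how the charted numbers were produced.

```python
from collections import Counter


def majority_vote(answers):
    """Return the most frequent answer; ties go to the answer seen first."""
    return Counter(answers).most_common(1)[0][0]


def maj_at_k_accuracy(samples_per_problem, references, k):
    """Average maj@K accuracy in percent over a set of problems.

    samples_per_problem: one list of sampled final answers per problem.
    references: the correct final answer for each problem.
    k: number of samples per problem that take part in the vote.
    """
    correct = 0
    for samples, reference in zip(samples_per_problem, references):
        if majority_vote(samples[:k]) == reference:
            correct += 1
    return 100.0 * correct / len(references)


# Toy data (hypothetical): two problems, three sampled answers each.
samples = [["4", "5", "4"], ["7", "8", "8"]]
refs = ["4", "7"]

print(maj_at_k_accuracy(samples, refs, k=1))  # 100.0
print(maj_at_k_accuracy(samples, refs, k=3))  # 50.0
```

The toy data also shows that a larger K does not guarantee a better result on a given problem: in the second problem the first sample is correct, but the majority of three samples is not.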