TECHNICAL ASSET FINGERPRINT

7ee6573927436e281089e357

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

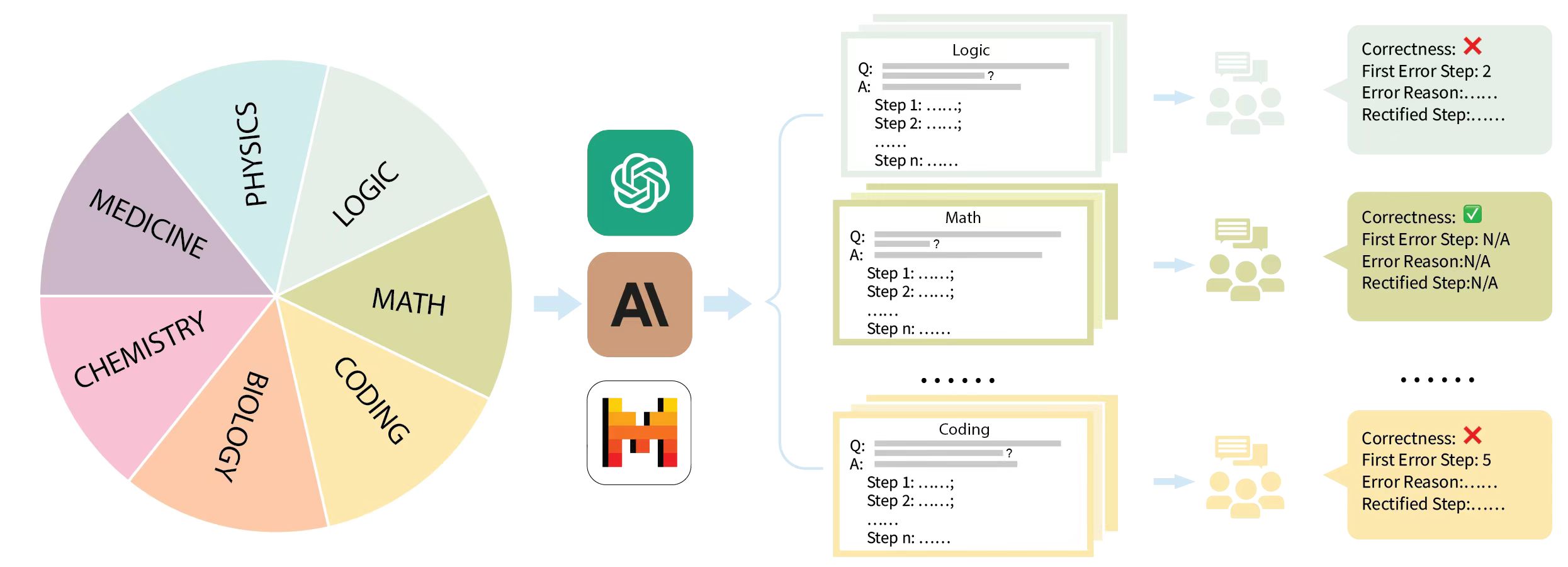

## Diagram: AI Tutoring System

### Overview

The image illustrates a conceptual diagram of an AI tutoring system. It shows a pie chart representing different subjects, which are then processed by AI models, resulting in feedback on the correctness of solutions.

### Components/Axes

* **Pie Chart:** Divided into seven segments, each representing a subject: Medicine, Chemistry, Biology, Coding, Math, Logic, and Physics.

* **AI Processing:** Three AI icons are shown: a green icon resembling the ChatGPT logo, a brown "AI" icon, and a red/orange icon resembling the Wolfram Alpha logo.

* **Problem/Solution Cards:** Represented as stacks of cards for Logic, Math, and Coding. Each card contains a "Q:" (Question) and "A:" (Answer) section, followed by multiple "Step" lines.

* **Feedback:** Each subject's solution is followed by an icon of people discussing, and a feedback card indicating "Correctness," "First Error Step," "Error Reason," and "Rectified Step."

### Detailed Analysis

* **Pie Chart Subjects:**
    * Medicine (purple)
    * Chemistry (pink)
    * Biology (orange)
    * Coding (yellow)
    * Math (light green)
    * Logic (light green)
    * Physics (light blue)
* **AI Processing Icons:**
    * ChatGPT-like icon (green)
    * "AI" icon (brown)
    * Wolfram Alpha-like icon (red/orange)
* **Problem/Solution Card Details:**
    * Each card has a "Q:" and "A:" section, followed by "Step 1:", "Step 2:", and "Step n:".
    * The content of the "Q:" and "A:" sections is obscured, represented by gray lines.
    * The "Step" lines are followed by ellipses.
* **Feedback Card Details:**
    * Logic: "Correctness: X", "First Error Step: 2", "Error Reason: ...", "Rectified Step: ..."
    * Math: "Correctness: ✓", "First Error Step: N/A", "Error Reason: N/A", "Rectified Step: N/A"
    * Coding: "Correctness: X", "First Error Step: 5", "Error Reason: ...", "Rectified Step: ..."
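
The four feedback fields map naturally onto a small record type. The Python sketch below is illustrative only: the class name `Feedback`, its field names, and the use of `None` to stand for "N/A" are assumptions, not something the diagram specifies, and the elided "..." values are kept as placeholders rather than filled in.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Feedback:
    """One feedback card; field names mirror the labels shown in the diagram."""
    domain: str
    correct: bool
    first_error_step: Optional[int] = None  # None stands in for "N/A"
    error_reason: Optional[str] = None      # elided ("...") in the diagram
    rectified_step: Optional[str] = None    # elided ("...") in the diagram

    def render(self) -> str:
        """Format the card the way it is transcribed above."""
        def show(value: object) -> str:
            return "N/A" if value is None else str(value)

        return "\n".join([
            f"Correctness: {'✓' if self.correct else 'X'}",
            f"First Error Step: {show(self.first_error_step)}",
            f"Error Reason: {show(self.error_reason)}",
            f"Rectified Step: {show(self.rectified_step)}",
        ])


# The three cards visible in the diagram.
CARDS = [
    Feedback("Logic", False, 2, "...", "..."),
    Feedback("Math", True),
    Feedback("Coding", False, 5, "...", "..."),
]

for card in CARDS:
    print(card.domain)
    print(card.render())
```

Using `None` for the inapplicable fields keeps the Math card distinguishable from a card whose reason was simply left blank.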

### Key Observations

* The diagram illustrates a flow from subject selection to AI processing to feedback on solutions.

* The feedback indicates that the Math problem was solved correctly, while Logic and Coding problems had errors.

* The "First Error Step" is specified for incorrect solutions, suggesting a step-by-step analysis.

### Interpretation

The diagram represents a conceptual AI tutoring system that covers multiple subjects. The system takes a problem, processes it through AI models, and provides feedback on the correctness of the solution, including the first error step and potential rectification. The use of different AI icons suggests that the system may leverage multiple AI models for different tasks or subjects. The diagram highlights the potential of AI to provide personalized and detailed feedback in education. The "N/A" values for the Math problem suggest that the system can also identify correct solutions.

EXPERT: gemini-2.5-flash-lite-free VERSION 2

RUNTIME: google-free/gemini-2.5-flash-lite

INTEL_VERIFIED

## Diagram: AI Model Evaluation Workflow

### Overview

This diagram illustrates a workflow where different subject areas are processed by AI models, leading to an evaluation of their correctness and error details. The workflow starts with a categorization of knowledge domains, followed by AI processing, and concludes with a review and feedback mechanism.

### Components/Axes

The diagram is structured into several distinct sections, flowing from left to right:

1. **Knowledge Domains (Leftmost Section):**
    * A pie chart visually represents various academic and technical disciplines.
    * **Labels on Pie Chart Slices:**
        * PHYSICS (light blue slice)
        * LOGIC (light green slice)
        * MATH (pale yellow slice)
        * CODING (light orange slice)
        * BIOLOGY (peach slice)
        * CHEMISTRY (pink slice)
        * MEDICINE (purple slice)
2. **AI Models (Middle-Left Section):**
    * Three distinct AI model representations are shown, connected by an arrow from the Knowledge Domains section.
    * **AI Model 1:** A green square with a stylized circular logo (resembling a swirling pattern). This is likely representing a general-purpose AI model, possibly a large language model.
    * **AI Model 2:** A brown square with the text "AI" and a vertical bar. This could represent a specialized AI model or a different AI architecture.
    * **AI Model 3 (Bottom):** A white square with a pixelated, retro-style logo resembling the letter "H" or a stylized building. This might represent a specific AI tool or platform.
3. **Problem/Solution Representation (Middle Section):**
    * This section shows structured templates for problems and their step-by-step solutions, categorized by subject. Arrows from the AI Models section point to this section, indicating processing.
    * **Logic Problem Template:**
        * **Title:** Logic
        * **Q:** (Question placeholder)
        * **A:** (Answer placeholder)
        * **Step 1:** ......;
        * **Step 2:** ......;
        * **......** (ellipsis indicating more steps)
        * **Step n:** ......
    * **Math Problem Template:**
        * **Title:** Math
        * **Q:** ?
        * **A:** (Answer placeholder)
        * **Step 1:** ......;
        * **Step 2:** ......;
        * **......**
        * **Step n:** ......
    * **Coding Problem Template:**
        * **Title:** Coding
        * **Q:** (Question placeholder)
        * **A:** (Answer placeholder)
        * **Step 1:** ......;
        * **Step 2:** ......;
        * **......**
        * **Step n:** ......
    * Ellipses (...) between the templates indicate that other subject areas from the pie chart are also processed in a similar manner.
4. **Evaluation/Feedback (Rightmost Section):**
    * This section depicts a group of stylized figures (representing reviewers or evaluators) receiving the processed information and providing feedback. Arrows from the Problem/Solution Representation section point to this section.
    * **Feedback Block 1 (Associated with Logic/General AI):**
        * A speech bubble icon above a group of figures.
        * A colored text box (light green) with the following information:
            * **Correctness:** X (Red cross icon)
            * **First Error Step:** 2
            * **Error Reason:** ......
            * **Rectified Step:** ......
    * **Feedback Block 2 (Associated with Math):**
        * A speech bubble icon above a group of figures.
        * A colored text box (pale yellow) with the following information:
            * **Correctness:** ✓ (Green checkmark icon)
            * **First Error Step:** N/A
            * **Error Reason:** N/A
            * **Rectified Step:** N/A
    * Ellipses (...) indicate that feedback is generated for all processed problems.
    * **Feedback Block 3 (Associated with Coding):**
        * A speech bubble icon above a group of figures.
        * A colored text box (light orange) with the following information:
            * **Correctness:** X (Red cross icon)
            * **First Error Step:** 5
            * **Error Reason:** ......
            * **Rectified Step:** ......

### Detailed Analysis or Content Details

The diagram outlines a process that begins with the selection of a knowledge domain from a pie chart. This domain is then fed into one or more AI models. The AI models process a question (Q) and attempt to provide a step-by-step answer (A), from Step 1 to Step n. Following this AI processing, a group of evaluators reviews the generated solution. The evaluation results in a feedback block indicating the correctness (either correct ✓ or incorrect X), the step where the first error occurred (or N/A if correct), the reason for the error (or N/A), and the rectified step (or N/A).

Specific examples shown are for Logic (incorrect, error at step 2), Math (correct), and Coding (incorrect, error at step 5). The placeholders "......" indicate that detailed information would be present in a real-world scenario.
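
The feedback rules described above (N/A when correct, a step number otherwise) can be expressed as a consistency check. This is a minimal sketch under stated assumptions: the function name `check_feedback` is hypothetical, `None` stands for "N/A", and the step count `n = 6` used in the calls is made up, since the diagram only shows "Step n".

```python
from typing import List, Optional


def check_feedback(num_steps: int, correct: bool,
                   first_error_step: Optional[int]) -> List[str]:
    """Return the consistency problems found in one feedback block.

    An empty list means the block agrees with the rules in the text:
    a correct solution reports N/A (None here), and an incorrect one
    names a first error step between 1 and n.
    """
    problems: List[str] = []
    if correct:
        if first_error_step is not None:
            problems.append("a correct solution should report N/A as its first error step")
    elif first_error_step is None:
        problems.append("an incorrect solution should name its first error step")
    elif not 1 <= first_error_step <= num_steps:
        problems.append("first error step must lie between 1 and n")
    return problems


# n = 6 is a made-up step count; the diagram only shows "Step n".
print(check_feedback(6, False, 2))    # Logic example
print(check_feedback(6, True, None))  # Math example
print(check_feedback(6, False, 5))    # Coding example
```

All three examples from the diagram pass this check for any `n` of at least 5.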

### Key Observations

* The workflow demonstrates a cycle of AI generation and human (or AI-assisted human) evaluation.

* The AI models are capable of processing problems across diverse domains like Logic, Math, and Coding.

* The evaluation system provides granular feedback, pinpointing the exact step of the first error.

* Not all AI-generated solutions are correct; the diagram explicitly shows instances of both correct and incorrect outputs.

* The "N/A" entries for correct solutions suggest a standardized feedback format: every block carries the same four fields, and fields that do not apply are marked "N/A" rather than omitted.

### Interpretation

This diagram illustrates a conceptual framework for evaluating the performance of AI models in solving problems across various disciplines. The pie chart signifies the breadth of knowledge AI can be applied to. The AI models represent the processing engine, taking a problem and generating a solution. The subsequent evaluation stage, depicted by the figures and feedback boxes, is crucial for understanding the AI's accuracy, identifying its weaknesses (specific error steps and reasons), and potentially guiding its improvement (rectified steps).

The contrast between the correct Math solution and the incorrect Logic and Coding solutions suggests that AI performance can vary significantly depending on the domain and the specific problem. The detailed error reporting (First Error Step, Error Reason) is a key aspect of this workflow, enabling targeted debugging and refinement of AI algorithms. This process is fundamental to the development and deployment of reliable AI systems, especially in critical fields where accuracy is paramount. The inclusion of multiple AI model icons suggests that the system might be designed to compare or utilize different AI approaches.
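
A per-domain comparison of this kind amounts to tallying correctness by domain. The sketch below is an assumption about how such a tally might look; the function name `accuracy_by_domain` is hypothetical, and the only data used are the three examples drawn in the diagram, which is far too few to support any conclusion about real domain differences.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# (domain, is_correct) pairs exactly as drawn in the three feedback blocks.
DIAGRAM_EXAMPLES = [("Logic", False), ("Math", True), ("Coding", False)]


def accuracy_by_domain(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Fraction of solutions marked correct, grouped by domain."""
    totals: Dict[str, int] = defaultdict(int)
    correct: Dict[str, int] = defaultdict(int)
    for domain, is_correct in records:
        totals[domain] += 1
        correct[domain] += int(is_correct)
    return {domain: correct[domain] / totals[domain] for domain in totals}


print(accuracy_by_domain(DIAGRAM_EXAMPLES))
```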

EXPERT: gemini-3.1-pro-preview VERSION 2

RUNTIME: nugit/gemini/gemini-3.1-pro-preview

INTEL_VERIFIED

## Diagram: Multi-Domain AI Reasoning Evaluation Pipeline

### Overview

This image is a process flow diagram illustrating a methodology for evaluating Large Language Models (LLMs) on complex, multi-step reasoning tasks across various scientific and technical domains. The flow moves from left to right, starting with a diverse dataset of domain-specific questions, passing them through specific AI models to generate step-by-step answers, and concluding with human evaluation to identify and rectify specific logical errors within the generated steps.

### Components Isolation & Spatial Grounding

The diagram is divided into four distinct vertical sections, flowing from left to right, connected by light blue arrows:

1. **Far Left (Input Domain):** A segmented pie chart representing the subject matter dataset.

2. **Center-Left (Processing):** A vertical stack of three prominent AI model logos.

3. **Center-Right (Output Generation):** Stacks of generated text cards, color-coded to match the input domains, showing step-by-step reasoning.

4. **Far Right (Evaluation):** Human annotator icons leading to color-coded evaluation result boxes detailing correctness and error localization.

---

### Content Details & Transcription

#### 1. Input Domains (Far Left)

A 7-slice pie chart. The slices appear roughly equal in size, suggesting a balanced dataset.

* **Top Right:** LOGIC (Light Green)

* **Right:** MATH (Olive Green)

* **Bottom Right:** CODING (Light Orange/Yellow)

* **Bottom:** BIOLOGY (Orange)

* **Bottom Left:** CHEMISTRY (Pink)

* **Left:** MEDICINE (Purple)

* **Top Left:** PHYSICS (Light Blue)

*Flow:* A light blue arrow points from the right edge of the pie chart to the AI models.

#### 2. AI Models (Center-Left)

Three vertically stacked, rounded-square icons representing the LLMs being evaluated:

* **Top:** OpenAI logo (White swirling geometric shape on a teal/green background).

* **Middle:** Anthropic / Claude logo (Stylized black "A" and backslash on a tan/brown background).

* **Bottom:** Mistral AI logo (Stylized "M" made of orange, red, and black blocks on a white background).

*Flow:* A light blue arrow points from these models, splitting into a large bracket `{` that encompasses the output cards to the right.

#### 3. Generated Outputs (Center-Right)

Three visible stacks of cards representing the models' outputs. The borders of these cards are color-coded to match the pie chart slices.

* **Top Stack (Light Green border - matches LOGIC):**
    * Header: `Logic`
    * Body Text:

      ```text
      Q: [grey line representing text] ?
      A: [grey line representing text]
      Step 1: ......;
      Step 2: ......;
      ......
      Step n: ......
      ```
* **Middle Stack (Olive Green border - matches MATH):**
    * Header: `Math`
    * Body Text: Identical structure to the Logic card (Q, A, Step 1 to Step n).
* **Separator:** Six black dots (`......`) indicating omitted categories (likely Biology, Chemistry, Medicine, Physics).
* **Bottom Stack (Light Orange/Yellow border - matches CODING):**
    * Header: `Coding`
    * Body Text: Identical structure to the Logic card.

*Flow:* A light blue arrow points from each card stack to an icon of three human figures with a chat bubble (representing human annotators/evaluators).

#### 4. Human Evaluation Results (Far Right)

Arrows point from the human annotator icons to evaluation boxes. These boxes share the background color of their corresponding domain.

* **Top Box (Light Green background - Logic Evaluation):**

  ```text
  Correctness: ❌
  First Error Step: 2
  Error Reason:......
  Rectified Step:......
  ```
* **Middle Box (Olive Green background - Math Evaluation):**

  ```text
  Correctness: ✅
  First Error Step: N/A
  Error Reason:N/A
  Rectified Step:N/A
  ```
* **Separator:** Six black dots (`......`) aligning with the omitted categories.
* **Bottom Box (Light Orange/Yellow background - Coding Evaluation):**

  ```text
  Correctness: ❌
  First Error Step: 5
  Error Reason:......
  Rectified Step:......
  ```

---

### Key Observations

* **Color-Coding Consistency:** There is a strict visual mapping using color. The "Logic" slice in the pie chart is light green, the generated output cards for Logic have a light green border, and the final evaluation box for Logic has a light green background. This pattern holds true for Math (olive green) and Coding (yellow/orange).

* **Chain-of-Thought Structure:** The generated outputs explicitly use a "Step 1, Step 2... Step n" format. This indicates the models are prompted to use Chain-of-Thought (CoT) reasoning rather than providing direct answers.

* **Granular Evaluation:** The evaluation metrics go beyond simple binary pass/fail (`Correctness: ✅ / ❌`). When an answer is incorrect, the evaluators identify the exact point of failure (`First Error Step`), explain why it failed (`Error Reason`), and provide the correct logical step (`Rectified Step`).

---

### Interpretation

This diagram outlines a sophisticated framework for benchmarking the reasoning capabilities of state-of-the-art Large Language Models (specifically OpenAI, Anthropic, and Mistral models).

**Reading between the lines (Peircean investigative analysis):**

1. **Process-Based vs. Outcome-Based Evaluation:** The inclusion of "First Error Step" and "Rectified Step" strongly suggests this methodology is designed to create or utilize a **Process Reward Model (PRM)** dataset. Instead of just grading the final answer (Outcome Reward Model), this approach evaluates the *trajectory* of the model's logic. This is crucial for complex STEM fields (Physics, Math, Coding) where a single arithmetic mistake in Step 2 can ruin a perfectly logical 10-step deduction.

2. **Human-in-the-Loop (HITL):** The explicit inclusion of human annotator icons indicates that automated evaluation (using another LLM as a judge) is deemed insufficient for this level of logical scrutiny. Human experts are required to trace the logic and pinpoint the exact moment the AI hallucinates or makes a logical leap.

3. **Dataset Creation:** Because the evaluation includes a "Rectified Step," this pipeline is not just testing models; it is actively generating a high-quality, human-corrected dataset. This corrected data can subsequently be used to fine-tune future models, teaching them not just what the right answer is, but how to correct their specific logical missteps in various scientific domains.
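
To make the process-based reading concrete, a "First Error Step" annotation can be expanded into per-step labels. The convention below is one possible choice and an assumption of this sketch, not something the diagram states: steps before the first error are labeled correct, the first error step incorrect, and later steps are left unlabeled because the annotation says nothing about them. The function name `step_labels` and the step count `n = 6` are hypothetical.

```python
from typing import List, Optional


def step_labels(num_steps: int,
                first_error_step: Optional[int]) -> List[Optional[bool]]:
    """Expand a first-error annotation into one label per step.

    True  = step judged correct
    False = the first erroneous step
    None  = not labeled (everything after the first error)
    """
    if first_error_step is None:  # "N/A": the whole solution is correct
        return [True] * num_steps
    if not 1 <= first_error_step <= num_steps:
        raise ValueError("first_error_step must lie between 1 and num_steps")
    before = [True] * (first_error_step - 1)
    after = [None] * (num_steps - first_error_step)
    return before + [False] + after


# n = 6 is a made-up step count; the diagram only shows "Step n".
print(step_labels(6, 2))     # Logic:  [True, False, None, None, None, None]
print(step_labels(6, None))  # Math:   [True, True, True, True, True, True]
print(step_labels(6, 5))     # Coding: [True, True, True, True, False, None]
```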

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: AI Foundation - Disciplinary Inputs & Correctness Assessment

### Overview

The image depicts a diagram illustrating the foundational disciplines contributing to Artificial Intelligence (AI), and a subsequent assessment of AI's correctness in applying Logic, Math, and Coding. The diagram shows a circular arrangement of disciplines feeding into an "AI" central node, followed by three separate correctness assessment blocks for each discipline.

### Components/Axes

The diagram consists of the following components:

* **Disciplinary Wheel:** A circular arrangement of six disciplines: Physics (blue), Medicine (green), Logic (teal), Math (yellow), Coding (orange), and Chemistry (red).

* **AI Node:** A central node labeled "AI" with an icon representing a brain-like structure.

* **Arrow:** An arrow indicating the flow of information from the disciplines to the AI node.

* **Correctness Assessment Blocks (x3):** Three blocks, one each for Logic, Math, and Coding, each containing a question/answer structure and a correctness indicator.

* **Correctness Indicators:** Each assessment block has a visual indicator of correctness: a red "X" for Logic and Coding, and a green checkmark for Math.

* **Step-by-Step Breakdown:** Each assessment block includes a "Q:?" (Question) and "A:" (Answer) section, followed by a series of "Step 1: ...", "Step 2: ...", and "Step n: ..." placeholders.

* **Iconography:** Each assessment block also features a series of icons representing people or processes.

### Detailed Analysis or Content Details

**Disciplinary Wheel:**

* The wheel is divided into six equal segments, each labeled with a discipline.

* The disciplines are arranged clockwise as follows: Physics, Medicine, Logic, Math, Coding, Chemistry.

**AI Node:**

* The AI node is positioned to the right of the disciplinary wheel, receiving input from the arrow.

* The AI node's icon is a swirling, abstract representation of a brain.

**Correctness Assessment Blocks:**

* **Logic:**
    * Correctness: Red "X"
    * First Error Step: 2
    * Error Reason: "......"
    * Rectified Step: "......"
    * Q: ?
    * A: ?
    * Steps: Step 1: "......", Step 2: "......", Step n: "......"
    * Icons: Four icons representing people/processes.
* **Math:**
    * Correctness: Green Checkmark
    * First Error Step: N/A
    * Error Reason: N/A
    * Rectified Step: N/A
    * Q: ?
    * A: ?
    * Steps: Step 1: "......", Step 2: "......", Step n: "......"
    * Icons: Four icons representing people/processes.
* **Coding:**
    * Correctness: Red "X"
    * First Error Step: 5
    * Error Reason: "......"
    * Rectified Step: "......"
    * Q: ?
    * A: ?
    * Steps: Step 1: "......", Step 2: "......", Step n: "......"
    * Icons: Four icons representing people/processes.

### Key Observations

* AI relies on a diverse set of disciplines as its foundation.

* The assessment indicates that AI currently performs correctly in Math but struggles with Logic and Coding, as indicated by the red "X" markers.

* The "Error Reason" and "Rectified Step" fields are placeholders ("......"), suggesting that the specific errors and corrections are not detailed in this diagram.

* The step-by-step breakdown suggests an iterative process of problem-solving.

* The icons in the assessment blocks likely represent the individuals or processes involved in the AI's reasoning or execution.

### Interpretation

The diagram illustrates a conceptual model of AI's dependence on various foundational disciplines and its current performance in applying those disciplines to solve problems. The wheel represents the breadth of knowledge required for AI, while the correctness assessment blocks highlight areas where AI currently faces challenges. The fact that Math is correct, while Logic and Coding are not, suggests that AI excels at tasks requiring precise calculation but struggles with tasks requiring abstract reasoning or complex algorithmic implementation. The placeholders for error reasons and rectified steps indicate that the diagram is a high-level overview and does not delve into the specifics of AI's failures or improvements. The diagram implies a need for further development in Logic and Coding to enhance AI's overall capabilities. The use of icons suggests a collaborative or multi-stage process in AI problem-solving.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: AI Model Evaluation Across Academic Domains

### Overview

The image is a conceptual diagram illustrating a workflow for evaluating and improving AI models across multiple academic disciplines. It depicts a process where domain knowledge is fed into AI models, which generate step-by-step solutions to problems, followed by human evaluation and feedback for error correction.

### Components/Axes

The diagram is organized into three main vertical sections, flowing from left to right:

1. **Left Section (Input Domains):** A large pie chart divided into seven colored segments, each representing an academic field.
    * **Labels (clockwise from top-left):** PHYSICS (light blue), LOGIC (pale green), MATH (olive green), CODING (light orange), BIOLOGY (peach), CHEMISTRY (pink), MEDICINE (lavender).
    * **Spatial Grounding:** The pie chart occupies the left third of the image. The labels are placed within their respective colored segments.
2. **Center Section (AI Models):** Three distinct AI model icons arranged vertically, connected by light blue arrows from the pie chart and pointing towards the right section.
    * **Top Icon:** A green square with a white, intricate, knot-like symbol (resembling the OpenAI logo).
    * **Middle Icon:** A brown square with the letters "AI" in black.
    * **Bottom Icon:** A white square with a stylized, pixelated "H" in orange, yellow, and red (resembling the Hugging Face logo).
3. **Right Section (Evaluation & Feedback):** This section shows a parallel process for three example domains (Logic, Math, Coding), with ellipses (`......`) indicating the process repeats for others.
    * **For each domain, there are two components:**
        * **A. Problem/Solution Card:** A rectangular card with a colored header and border.
            * **Header:** The domain name (e.g., "Logic").
            * **Content:** A structured Q&A format:
                * `Q:` followed by a gray placeholder bar and a question mark `?`.
                * `A:` followed by a gray placeholder bar.
                * A list of steps: `Step 1: ......;`, `Step 2: ......;`, `......`, `Step n: ......`.
        * **B. Feedback Box:** A colored box connected to the card by an arrow, containing evaluation results.
            * **Fields:**
                * `Correctness:` followed by a symbol (❌ for incorrect, ✅ for correct).
                * `First Error Step:` (e.g., "2", "N/A", "5").
                * `Error Reason:` (e.g., "......", "N/A").
                * `Rectified Step:` (e.g., "......", "N/A").
    * **Spatial Grounding & Color Matching:**
        * The **Logic** card (top) has a pale green header/border. Its feedback box is also pale green and shows `Correctness: ❌`.
        * The **Math** card (middle) has an olive green header/border. Its feedback box is olive green and shows `Correctness: ✅`.
        * The **Coding** card (bottom) has a light orange header/border. Its feedback box is light orange and shows `Correctness: ❌`.
        * Between the cards and feedback boxes, there are small, faint icons of people with speech bubbles, symbolizing human evaluators.

### Detailed Analysis

The diagram outlines a clear, multi-stage pipeline:

1. **Domain Input:** Knowledge or problems from seven academic domains (Medicine, Physics, Logic, Math, Coding, Biology, Chemistry) serve as the input source.

2. **AI Processing:** This input is processed by one or more AI models (represented by the three central icons).

3. **Solution Generation:** For a given domain (e.g., Logic), the AI generates a structured, step-by-step solution to a question (`Q:`).

4. **Human Evaluation:** Human evaluators (implied by the people icons) assess the AI's solution.

5. **Feedback & Correction:** The evaluation produces a structured feedback report detailing correctness, the step where the first error occurred (if any), the reason for the error, and a rectified version of that step. This feedback loop is designed for iterative model improvement.

### Key Observations

* **Selective Correctness:** In the examples shown, only the Math solution is marked as fully correct (`✅`, `First Error Step: N/A`). The Logic and Coding solutions contain errors, identified at Step 2 and Step 5, respectively.

* **Structured Error Analysis:** The feedback format is consistent and granular, focusing on identifying the *first* error step, which is crucial for efficient debugging and training.

* **Visual Coding:** Colors are used systematically to link each domain's problem card to its corresponding feedback box, ensuring clarity in the parallel workflows.

* **Scalability:** The use of ellipses (`......`) in both the step lists and between the domain examples indicates this is a scalable framework applicable to many problems and domains beyond the three shown.

### Interpretation

This diagram represents a **human-in-the-loop framework for benchmarking and improving AI reasoning capabilities**. It moves beyond simple right/wrong assessment to a diagnostic approach.

* **What it demonstrates:** The process is designed to create high-quality training data. By pinpointing the exact step where an AI's reasoning fails and providing a correction, the system generates targeted examples for fine-tuning models. This is more effective than training on just final answers.

* **Relationship between elements:** The flow is linear but implies a cycle: Domains → AI Generation → Human Evaluation → Feedback. This feedback is presumably used to retrain the AI models (the central icons), creating a closed loop for continuous improvement. The variety of domains suggests the goal is to develop a generalist AI with robust reasoning skills across STEM and professional fields.

* **Notable implication:** The inclusion of "Medicine" and "Coding" alongside pure sciences like "Physics" and "Math" indicates an ambition to apply this rigorous, step-wise evaluation to practical, high-stakes fields where explainable and correct reasoning is critical. The framework treats all domains with the same analytical rigor.
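
One way the feedback could become a targeted training example is to keep the steps before the first error and substitute the rectified step. The sketch below is an assumption about such a use, not a process the diagram documents; the function name `corrected_prefix` and the placeholder step strings are hypothetical.

```python
from typing import List, Optional, Sequence


def corrected_prefix(steps: Sequence[str],
                     first_error_step: Optional[int],
                     rectified_step: Optional[str]) -> List[str]:
    """Return the solution up to and including the corrected step.

    Steps after the first error are dropped because they were built on
    the faulty step and the feedback does not say whether they still hold.
    """
    if first_error_step is None:  # "N/A": nothing to correct
        return list(steps)
    if not 1 <= first_error_step <= len(steps):
        raise ValueError("first_error_step must lie between 1 and len(steps)")
    if rectified_step is None:
        raise ValueError("an incorrect solution needs a rectified step")
    return list(steps[: first_error_step - 1]) + [rectified_step]


# Placeholder step texts; the diagram elides the real content.
solution = ["step 1 text", "step 2 text", "step 3 text"]
print(corrected_prefix(solution, 2, "rectified step 2 text"))
# ['step 1 text', 'rectified step 2 text']
```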

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Pie Chart: Academic Disciplines Distribution

### Overview

A circular pie chart divided into seven equal segments, each representing a different academic discipline. The chart uses distinct colors for each category and is positioned on the left side of the image.

### Components/Axes

- **Labels**:
    - Medicine (purple)
    - Physics (light blue)
    - Logic (light green)
    - Math (olive green)
    - Coding (yellow)
    - Biology (peach)
    - Chemistry (pink)
- **Structure**:
    - Seven equal-sized segments (about 14.3% each), one per label listed above
    - No numerical values or percentages explicitly labeled
    - Central white background with black text labels

### Detailed Analysis

- **Color Coding**:
    - Medicine: #8A2BE2 (purple)
    - Physics: #ADD8E6 (light blue)
    - Logic: #90EE90 (light green)
    - Math: #556B2F (olive green)
    - Coding: #FFFF00 (yellow)
    - Biology: #FFDAB9 (peach)
    - Chemistry: #FFC0CB (pink)
- **Spatial Positioning**:
    - Pie chart occupies the left third of the image
    - Segments arranged clockwise starting from top-left (Physics)

### Key Observations

- All seven disciplines are represented equally (about 14.3% each)

- No overlapping or hierarchical relationships shown

- Color choices distinguish categories effectively

### Interpretation

The pie chart visually represents equal emphasis on seven academic disciplines, Logic among them. The equal segmentation suggests a balanced curriculum or research focus across these fields. The color selection uses high-contrast hues for clear differentiation, though the absence of numerical labels limits quantitative interpretation.
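
The per-segment share of an equal split follows directly from the number of labels listed under Components/Axes. A short sketch, assuming the slices really are equal as described; the function name `equal_share` is hypothetical.

```python
from typing import Sequence

# Labels exactly as listed for the pie chart.
LABELS = ["Medicine", "Physics", "Logic", "Math", "Coding", "Biology", "Chemistry"]


def equal_share(labels: Sequence[str]) -> float:
    """Percentage of the pie taken by each slice when all slices are equal."""
    return 100.0 / len(labels)


print(f"{len(LABELS)} segments, {equal_share(LABELS):.1f}% each")
# 7 segments, 14.3% each
```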

## Flowchart: AI-Human Collaboration Process

### Overview

A horizontal flowchart on the right side of the image depicting an iterative process between AI systems and human collaborators. The process involves three academic disciplines (Logic, Math, Coding) with feedback loops for error correction.

### Components/Axes

- **Central Node**:
    - Brown square labeled "AI" with black "A" and "I" text
- **Input Sources**:
    - Green square (ResearchGate logo)
    - Orange square (Hacker News logo)
    - Red square (H logo)
- **Output Sections**:
    - Logic (light green)
    - Math (olive green)
    - Coding (yellow)
- **Feedback Elements**:
    - Correctness indicators (red X or green check)
    - Error step identification
    - Rectified step suggestions

### Detailed Analysis

- **Process Flow**:
    1. Input sources → AI processing
    2. AI outputs → Three discipline-specific sections
    3. Human feedback → Correctness evaluation
    4. Error correction → Rectified steps
- **Color Coding**:
    - Logic: #98FB98 (light green)
    - Math: #556B2F (olive green)
    - Coding: #FFFFE0 (yellow)
- **Spatial Positioning**:
    - AI node centered in flowchart
    - Input sources arranged above AI node
    - Discipline sections arranged horizontally below AI node
    - Feedback bubbles positioned to the right of each section

### Key Observations

- Logic section shows first error at step 2

- Math section has no errors (N/A)

- Coding section has first error at step 5

- Feedback bubbles use speech bubble icons with text

- Process emphasizes iterative improvement through human-AI collaboration

### Interpretation

The flowchart illustrates a quality assurance process where AI-generated academic content undergoes human review. The varying error patterns across disciplines suggest:

1. Math problems may be more algorithmically solvable

2. Logic requires careful step-by-step verification

3. Coding involves complex error detection at later stages

The feedback loop mechanism demonstrates the value of human-AI collaboration in academic problem-solving, with the system designed to identify and correct errors through iterative refinement.
