## Dual-Axis Line Chart: RL Training Steps vs. Token Length and Reproduced Rate

### Overview

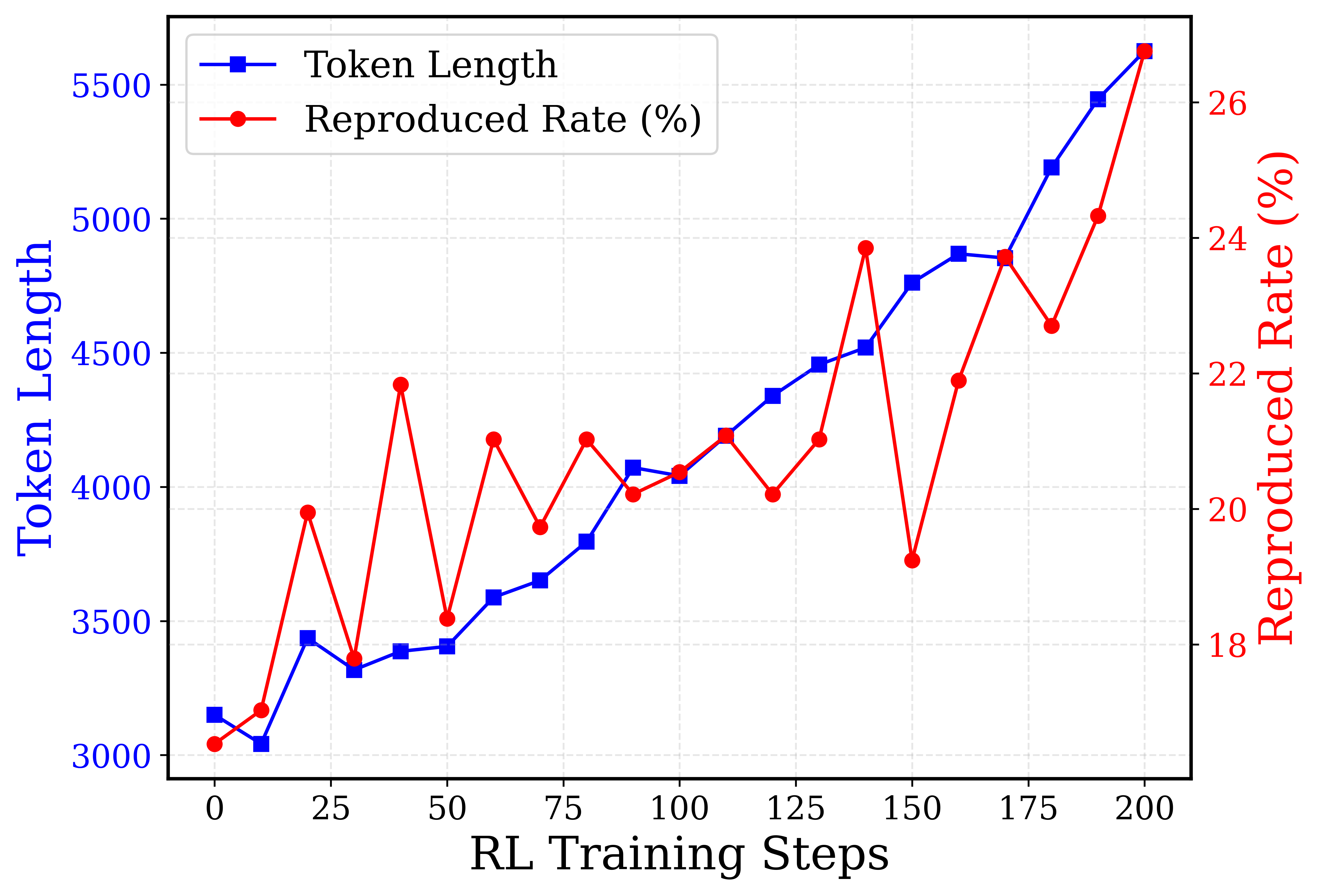

This dual-axis line chart plots two metrics against "RL Training Steps" on the x-axis: "Token Length" (left y-axis) and "Reproduced Rate (%)" (right y-axis), tracked over 200 training steps. Both metrics trend upward overall, but the Reproduced Rate is far more volatile than the comparatively steady Token Length.

### Components/Axes

* **Chart Type:** Dual-axis line chart.

* **X-Axis:**

* **Label:** `RL Training Steps`

* **Scale:** Linear, from 0 to 200.

* **Major Tick Marks:** 0, 25, 50, 75, 100, 125, 150, 175, 200.

* **Left Y-Axis (Primary):**

* **Label:** `Token Length` (displayed vertically in blue).

* **Scale:** Linear, with labeled ticks from 3000 to 5500. The final data point (~5600) sits slightly above the top labeled tick.

* **Major Tick Marks:** 3000, 3500, 4000, 4500, 5000, 5500.

* **Right Y-Axis (Secondary):**

* **Label:** `Reproduced Rate (%)` (displayed vertically in red).

* **Scale:** Linear, with labeled ticks from 18 to 26. The final data point (~26.5%) sits slightly above the top labeled tick.

* **Major Tick Marks:** 18, 20, 22, 24, 26.

* **Legend:**

* **Position:** Top-left corner of the plot area.

* **Series 1:** `Token Length` - Represented by a blue line with square markers.

* **Series 2:** `Reproduced Rate (%)` - Represented by a red line with circular markers.

* **Grid:** A light gray, dashed grid is present for both axes.

### Detailed Analysis

**Data Series 1: Token Length (Blue Line, Square Markers)**

* **Trend:** Shows a fairly steady upward trend with minor dips at steps 10, 30, and 170. The rise steepens after step 100: the listed points gain roughly 900 tokens over steps 0 to 100 and roughly 1,550 over steps 100 to 200.

* **Approximate Data Points (RL Step, Token Length):**

* (0, ~3150)

* (10, ~3050)

* (20, ~3450)

* (30, ~3300)

* (40, ~3400)

* (50, ~3400)

* (60, ~3600)

* (70, ~3650)

* (80, ~3800)

* (90, ~4050)

* (100, ~4050)

* (110, ~4200)

* (120, ~4350)

* (130, ~4450)

* (140, ~4500)

* (150, ~4750)

* (160, ~4900)

* (170, ~4850)

* (180, ~5200)

* (190, ~5450)

* (200, ~5600)
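The change in slope around step 100 can be checked numerically. The following is a minimal sketch, not part of the original figure: it hard-codes the approximate Token Length readings listed above (visual estimates, not exact data) and fits an ordinary least-squares slope to each half. The helper name `ols_slope` and the choice to split at step 100 are assumptions made for illustration.

```python
# Approximate Token Length readings transcribed from the list above
# (visual estimates from the chart, one reading every 10 steps).
steps = list(range(0, 201, 10))
token_length = [3150, 3050, 3450, 3300, 3400, 3400, 3600, 3650, 3800, 4050,
                4050, 4200, 4350, 4450, 4500, 4750, 4900, 4850, 5200, 5450, 5600]


def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


# Split at step 100 (index 10); that point is included in both halves.
early_slope = ols_slope(steps[:11], token_length[:11])
late_slope = ols_slope(steps[10:], token_length[10:])

print(f"tokens per step, steps 0-100:   {early_slope:.1f}")
print(f"tokens per step, steps 100-200: {late_slope:.1f}")
```

On these readings the fitted slope is about 9.5 tokens per step for the first half and about 15 tokens per step for the second, which supports the description of a steeper rise late in training.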

**Data Series 2: Reproduced Rate (%) (Red Line, Circular Markers)**

* **Trend:** Exhibits high volatility, with sharp peaks and troughs around an overall upward trend. In the listed points, local dips occur at steps 30, 50, 70, 90, 120, 150, and 180; the deepest are at steps 50 and 150.

* **Approximate Data Points (RL Step, Reproduced Rate %):**

* (0, ~18.2%)

* (10, ~18.5%)

* (20, ~20.0%)

* (30, ~19.0%)

* (40, ~22.0%)

* (50, ~18.5%)

* (60, ~21.5%)

* (70, ~19.8%)

* (80, ~21.5%)

* (90, ~20.2%)

* (100, ~20.5%)

* (110, ~21.5%)

* (120, ~20.2%)

* (130, ~21.5%)

* (140, ~24.0%)

* (150, ~19.5%)

* (160, ~22.0%)

* (170, ~24.0%)

* (180, ~22.5%)

* (190, ~24.5%)

* (200, ~26.5%)
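The dips in the Reproduced Rate series can be cross-checked against the listed points. The sketch below is illustrative only: it hard-codes the approximate readings from the list above (visual estimates, not exact data) and treats a "dip" as an interior point strictly lower than both of its neighbours, which is an assumed definition rather than one given by the chart.

```python
# Approximate Reproduced Rate (%) readings transcribed from the list above.
steps = list(range(0, 201, 10))
reproduced_rate = [18.2, 18.5, 20.0, 19.0, 22.0, 18.5, 21.5, 19.8, 21.5, 20.2,
                   20.5, 21.5, 20.2, 21.5, 24.0, 19.5, 22.0, 24.0, 22.5, 24.5, 26.5]

# Local minima: interior points strictly below both neighbours.
dips = [
    steps[i]
    for i in range(1, len(steps) - 1)
    if reproduced_rate[i] < reproduced_rate[i - 1]
    and reproduced_rate[i] < reproduced_rate[i + 1]
]

# Step-to-step changes, paired with the step at which each change lands.
changes = [
    (reproduced_rate[i] - reproduced_rate[i - 1], steps[i])
    for i in range(1, len(steps))
]
largest_drop, drop_step = min(changes)  # most negative change

print("local dips at steps:", dips)
print(f"largest single decline: {largest_drop:.1f} points, landing at step {drop_step}")
```

On these readings the local minima fall at steps 30, 50, 70, 90, 120, 150, and 180, and the largest single decline is the 4.5-point fall into step 150.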

### Key Observations

1. **Divergent Volatility:** The most striking feature is the contrast between the smooth, predictable growth of Token Length and the erratic, sawtooth pattern of the Reproduced Rate.

2. **Correlation at Extremes:** Despite the volatility, both metrics reach their highest values at the final data point (Step 200: Token Length ~5600, Reproduced Rate ~26.5%).

3. **Significant Drop:** The Reproduced Rate experiences its most severe drop at step 150, falling about 4.5 percentage points to ~19.5% from a peak of ~24.0% at step 140, before recovering to ~22.0% at step 160 and ~24.0% at step 170.

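4. **Mid-Training Visual Crossing:** Between steps 90 and 110, the two lines appear to converge and cross on the chart. This is an artifact of plotting the metrics on two independent y-axis scales and does not mean their values were numerically similar: near step 100, Token Length is ~4050 while Reproduced Rate is ~20.5%. Where the lines cross depends entirely on the chosen axis ranges.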
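To illustrate why line crossings on a dual-axis chart carry no numerical meaning, the sketch below converts each reading to its vertical position as a fraction of the axis height and counts how often the two lines swap order. It rests on several assumptions: the data are the approximate readings listed under Detailed Analysis, the axis limits are taken to equal the outermost labeled ticks (3000 to 5500 and 18 to 26), and the alternative right-axis range of 0 to 30 is purely hypothetical.

```python
# Approximate readings transcribed from the Detailed Analysis lists.
token_length = [3150, 3050, 3450, 3300, 3400, 3400, 3600, 3650, 3800, 4050,
                4050, 4200, 4350, 4450, 4500, 4750, 4900, 4850, 5200, 5450, 5600]
reproduced_rate = [18.2, 18.5, 20.0, 19.0, 22.0, 18.5, 21.5, 19.8, 21.5, 20.2,
                   20.5, 21.5, 20.2, 21.5, 24.0, 19.5, 22.0, 24.0, 22.5, 24.5, 26.5]


def axis_fraction(value, lo, hi):
    """Vertical position of a value as a fraction of the axis height."""
    return (value - lo) / (hi - lo)


def count_crossings(token_limits, rate_limits):
    """Count how often the two lines swap vertical order between adjacent points."""
    gaps = [
        axis_fraction(t, *token_limits) - axis_fraction(r, *rate_limits)
        for t, r in zip(token_length, reproduced_rate)
    ]
    return sum(1 for a, b in zip(gaps, gaps[1:]) if a * b < 0)


# Assumed limits: the outermost labeled ticks described for each axis.
as_described = count_crossings((3000, 5500), (18, 26))
# Hypothetical alternative: same data, right axis redrawn from 0 to 30.
rescaled = count_crossings((3000, 5500), (0, 30))

print("crossings with assumed chart limits:", as_described)
print("crossings with right axis at 0-30:  ", rescaled)
```

The data are identical in both calls, yet the number of crossings changes with the right-axis range, so a crossing says something about the axis choice rather than about the metrics.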

### Interpretation

The chart likely visualizes the performance of a Reinforcement Learning (RL) model during training. "Token Length" probably refers to the length of sequences generated by the model, while "Reproduced Rate (%)" is a performance metric, possibly indicating how often the model successfully reproduces a target output or meets a specific criterion.

The data suggests that as training progresses, the model generates longer sequences (increasing Token Length). Reproduced Rate also rises overall, from ~18.2% to ~26.5%, but unevenly, with frequent setbacks and recoveries. The sharp drop at step 150 could indicate a period of catastrophic forgetting, a challenging batch of training data, or an adjustment in the training process; the chart alone does not distinguish between these. Both metrics peak at the final data point, so despite the instability, training appears to have increased both the length of the model's outputs and, to the extent the reproduction rate measures it, their quality. Because both metrics rise with training steps, their co-movement does not by itself show that longer outputs drive the higher rate: the two may be linked, or both may be separate effects of continued training.
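One way to probe the relationship between the two metrics is to compare the correlation of their levels with the correlation of their step-to-step changes. The sketch below is a rough check under stated assumptions: it uses the approximate readings listed under Detailed Analysis (visual estimates, not exact data), a hand-written Pearson coefficient (`pearson` is an illustrative helper), and first differences as a simple way to remove the shared upward trend.

```python
import math

# Approximate readings transcribed from the Detailed Analysis lists.
token_length = [3150, 3050, 3450, 3300, 3400, 3400, 3600, 3650, 3800, 4050,
                4050, 4200, 4350, 4450, 4500, 4750, 4900, 4850, 5200, 5450, 5600]
reproduced_rate = [18.2, 18.5, 20.0, 19.0, 22.0, 18.5, 21.5, 19.8, 21.5, 20.2,
                   20.5, 21.5, 20.2, 21.5, 24.0, 19.5, 22.0, 24.0, 22.5, 24.5, 26.5]


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def first_differences(values):
    """Change from each reading to the next."""
    return [b - a for a, b in zip(values, values[1:])]


# Correlation of the raw levels: dominated by the shared upward trend.
r_levels = pearson(token_length, reproduced_rate)
# Correlation of step-to-step changes: the shared trend is largely removed.
r_changes = pearson(first_differences(token_length),
                    first_differences(reproduced_rate))

print(f"correlation of levels:  {r_levels:.2f}")
print(f"correlation of changes: {r_changes:.2f}")
```

On these approximate readings the levels are strongly positively correlated, while the step-to-step changes are close to uncorrelated. That pattern is consistent with both metrics rising over training without short-term movements in one tracking the other, though with only 21 estimated points it is suggestive at best.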