## Chart Type: Bar Chart - Model Performance Comparison

### Overview

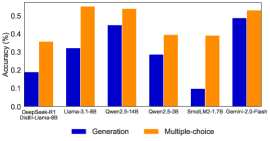

This image displays a grouped bar chart comparing the "Accuracy (%)" of six language models on two task types: "Generation" and "Multiple-choice". Each model on the x-axis has two vertical bars, one for "Generation" (blue) and one for "Multiple-choice" (orange), so the two task types can be compared within a model and across models.

### Components/Axes

* **Chart Title**: Not explicitly provided, but the content suggests "Model Performance Comparison by Task Type".

* **Y-axis**:

* **Label**: "Accuracy (%)"

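* **Scale**: Labeled major tick marks at 0.0, 0.1, 0.2, 0.3, 0.4, and 0.5, with minor tick marks at 0.05, 0.15, etc. Despite the "(%)" in the axis label, the values appear to be fractions between 0 and 1 (so 0.5 would correspond to 50%). The tallest bars are read at slightly above 0.5, so the axis extends a little past the last labeled tick.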

* **X-axis**:

* **Label**: Not explicitly labeled, but represents different language models or model configurations.

* **Categories (from left to right)**:

1. DeepSeek-R1 / Distil-Llama-8B (a single category with one pair of bars)

2. Llama-3.1-8B

3. Qwen2.5-14B

4. Qwen2.5-3B

5. SnoLM2-1.7B

6. Gemini-2.0-Flash

* **Legend**:

* **Position**: Bottom-center of the chart.

* **Entries**:

* A blue square represents "Generation".

* An orange square represents "Multiple-choice".

### Detailed Analysis

Each model has a pair of bars showing its accuracy on "Generation" (blue) and "Multiple-choice" (orange) tasks. The values below are approximate readings of the bar heights.

1. **DeepSeek-R1 / Distil-Llama-8B**:

* **Generation (blue)**: The bar reaches approximately 0.19.

* **Multiple-choice (orange)**: The bar reaches approximately 0.36.

* **Trend**: Multiple-choice accuracy is about 0.17 higher than generation accuracy. This model shows the lowest multiple-choice accuracy among all models.

2. **Llama-3.1-8B**:

* **Generation (blue)**: The bar reaches approximately 0.32.

* **Multiple-choice (orange)**: The bar reaches approximately 0.54.

* **Trend**: Multiple-choice accuracy is about 0.22 higher than generation accuracy. This model shows the highest multiple-choice accuracy among all models.

3. **Qwen2.5-14B**:

* **Generation (blue)**: The bar reaches approximately 0.45.

* **Multiple-choice (orange)**: The bar reaches approximately 0.53.

* **Trend**: Multiple-choice accuracy is about 0.08 higher than generation accuracy, the second-smallest gap between the two task types.

4. **Qwen2.5-3B**:

* **Generation (blue)**: The bar reaches approximately 0.29.

* **Multiple-choice (orange)**: The bar reaches approximately 0.39.

* **Trend**: Multiple-choice accuracy is about 0.10 higher than generation accuracy.

5. **SnoLM2-1.7B**:

* **Generation (blue)**: The bar reaches approximately 0.10.

* **Multiple-choice (orange)**: The bar reaches approximately 0.39.

* **Trend**: Multiple-choice accuracy is about 0.29 higher than generation accuracy, the largest gap of any model. This model shows the lowest generation accuracy among all models.

6. **Gemini-2.0-Flash**:

* **Generation (blue)**: The bar reaches approximately 0.49.

* **Multiple-choice (orange)**: The bar reaches approximately 0.52.

* **Trend**: Multiple-choice accuracy is about 0.03 higher than generation accuracy, the smallest gap between the two task types. This model achieves the highest generation accuracy among all models.

### Key Observations

* **Consistent Pattern**: For every model, the "Multiple-choice" accuracy (orange bar) is higher than the "Generation" accuracy (blue bar).

* **Highest Performers**:

* Llama-3.1-8B achieves the highest "Multiple-choice" accuracy at approximately 0.54.

* Gemini-2.0-Flash achieves the highest "Generation" accuracy at approximately 0.49.

* **Lowest Performers**:

* SnoLM2-1.7B shows the lowest "Generation" accuracy at approximately 0.10.

* DeepSeek-R1 / Distil-Llama-8B shows the lowest "Multiple-choice" accuracy at approximately 0.36.

* **Performance Gap Variation**: The difference between "Multiple-choice" and "Generation" accuracy varies widely across models. The largest gaps are observed in SnoLM2-1.7B (approx. 0.29 difference) and Llama-3.1-8B (approx. 0.22 difference). The smallest gaps are seen in Gemini-2.0-Flash (approx. 0.03 difference) and Qwen2.5-14B (approx. 0.08 difference). DeepSeek-R1 / Distil-Llama-8B (approx. 0.17) and Qwen2.5-3B (approx. 0.10) fall in between.
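
The observations above can be re-derived with a few lines of Python. This is a minimal sketch: the numbers are the approximate bar readings from the Detailed Analysis, not exact data behind the chart, and the variable names (`readings`, `gaps`, `ranked`) are illustrative.

```python
# Approximate bar heights read from the chart: (generation, multiple_choice).
# These are eyeballed values, so results are only good to about two decimals.
readings = {
    "DeepSeek-R1 / Distil-Llama-8B": (0.19, 0.36),
    "Llama-3.1-8B": (0.32, 0.54),
    "Qwen2.5-14B": (0.45, 0.53),
    "Qwen2.5-3B": (0.29, 0.39),
    "SnoLM2-1.7B": (0.10, 0.39),
    "Gemini-2.0-Flash": (0.49, 0.52),
}

# Gap = multiple-choice minus generation, rounded to the precision of the readings.
gaps = {model: round(mc - gen, 2) for model, (gen, mc) in readings.items()}

# The "consistent pattern" holds if every gap is positive.
consistent_pattern = all(gap > 0 for gap in gaps.values())

# Highest and lowest performers per task type.
best_generation = max(readings, key=lambda m: readings[m][0])
worst_generation = min(readings, key=lambda m: readings[m][0])
best_multiple_choice = max(readings, key=lambda m: readings[m][1])
worst_multiple_choice = min(readings, key=lambda m: readings[m][1])

# Models ordered from largest to smallest gap.
ranked = sorted(gaps.items(), key=lambda item: item[1], reverse=True)
for model, gap in ranked:
    print(f"{model:32s} gap = {gap:.2f}")
```

Because the inputs are visual estimates, differences of 0.01 or 0.02 between models should not be over-interpreted.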

### Interpretation

In this chart, every evaluated model scores higher on multiple-choice tasks than on generation tasks. Several factors could contribute to this:

1. **Task Complexity**: Generation tasks often require more nuanced understanding, creativity, coherence, and adherence to specific constraints, making them inherently more challenging for models. Multiple-choice tasks, conversely, might primarily test comprehension and retrieval: the correct answer is explicitly present among the options, and even a random guess has a nonzero chance of being scored as correct.

2. **Evaluation Metrics**: The "Accuracy (%)" metric might be more straightforward to calculate for multiple-choice (binary correct/incorrect) than for generation, where evaluating the quality of generated text can be subjective and complex, potentially leading to lower scores even for reasonable outputs.

3. **Model Strengths**: The varying gaps between task types indicate that some models are relatively better at generation than others. Models like Gemini-2.0-Flash and Qwen2.5-14B, with smaller performance differences, appear to be more balanced in their capabilities across both task types, suggesting stronger generative abilities relative to their multiple-choice performance. Conversely, models like SnoLM2-1.7B and Llama-3.1-8B exhibit a larger disparity, implying a greater proficiency in discriminative (multiple-choice) tasks over generative ones.

4. **Implications for Benchmarking**: This consistent trend highlights a critical consideration for benchmarking language models. A model's performance on multiple-choice benchmarks may not directly translate to its real-world utility in generative applications. It underscores the need for diverse evaluation methodologies that accurately reflect the intended use cases of these models.
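
One way to make the "balance" comparison in point 3 concrete is to look at generation accuracy as a fraction of multiple-choice accuracy, instead of the absolute gap. The sketch below is an illustration under assumptions: the ratio is not a metric taken from the chart, the inputs are the approximate bar readings from the Detailed Analysis, and the names `readings` and `ratios` are hypothetical.

```python
# Approximate bar heights read from the chart: (generation, multiple_choice).
readings = {
    "DeepSeek-R1 / Distil-Llama-8B": (0.19, 0.36),
    "Llama-3.1-8B": (0.32, 0.54),
    "Qwen2.5-14B": (0.45, 0.53),
    "Qwen2.5-3B": (0.29, 0.39),
    "SnoLM2-1.7B": (0.10, 0.39),
    "Gemini-2.0-Flash": (0.49, 0.52),
}

# Ratio of generation to multiple-choice accuracy.
# A value near 1.0 means the model is about equally accurate on both task types.
ratios = {model: gen / mc for model, (gen, mc) in readings.items()}

# Models ordered from most balanced to least balanced.
by_balance = sorted(ratios, key=ratios.get, reverse=True)
for model in by_balance:
    print(f"{model:32s} generation / multiple-choice = {ratios[model]:.2f}")
```

On these readings, Gemini-2.0-Flash and Qwen2.5-14B remain the most balanced and SnoLM2-1.7B the least. The ratio view also places DeepSeek-R1 / Distil-Llama-8B below Llama-3.1-8B, even though its absolute gap is smaller, because both of its scores are lower.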