## Horizontal Bar Chart: E-CARE: Avg. Proof Depth

### Overview

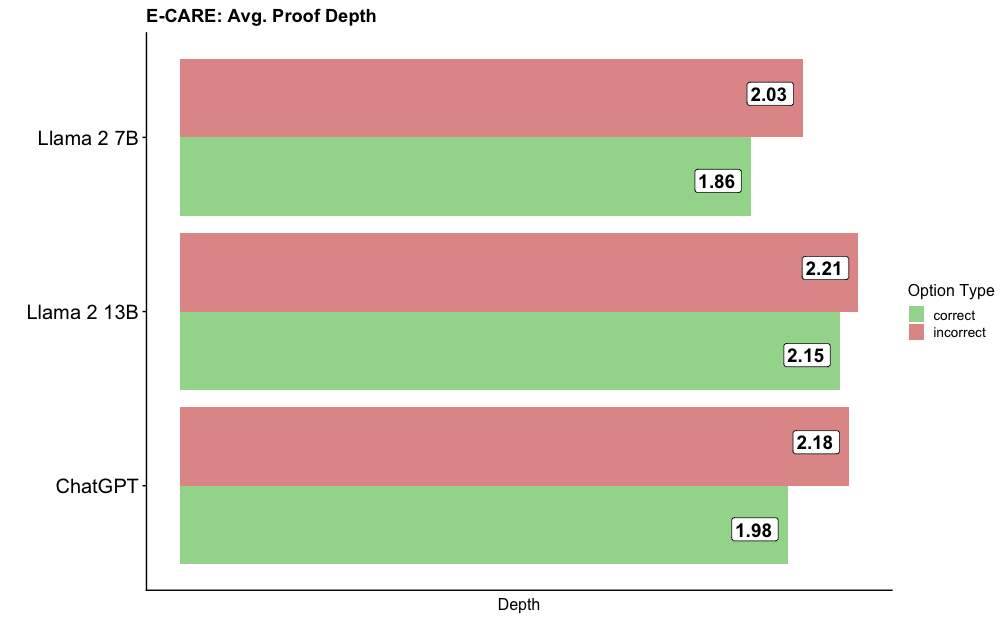

The image displays a horizontal bar chart titled "E-CARE: Avg. Proof Depth." It compares the average proof depth of three large language models (LLMs) on the E-CARE benchmark, split by whether the answer option is correct or incorrect. Each model has a pair of bars: green for "correct" options and red for "incorrect" options.

### Components/Axes

* **Chart Title:** "E-CARE: Avg. Proof Depth" (located at the top-left).

* **Y-Axis (Vertical):** Lists the three models being compared, from top to bottom:
    * Llama 2 7B
    * Llama 2 13B
    * ChatGPT

* **X-Axis (Horizontal):** Labeled "Depth" at the bottom. It represents average proof depth on a numerical scale, but no tick marks are shown, so values are read from the data labels on the bars.

* **Legend:** Located on the right side of the chart, titled "Option Type."
    * A green square corresponds to "correct."
    * A red square corresponds to "incorrect."

* **Data Labels:** Each bar has a numerical value displayed at its end, indicating the precise average proof depth.

### Detailed Analysis

The chart presents the following data points for each model:

1. **Llama 2 7B:**
    * **Correct (Green Bar):** Average proof depth = 1.86.
    * **Incorrect (Red Bar):** Average proof depth = 2.03.

2. **Llama 2 13B:**
    * **Correct (Green Bar):** Average proof depth = 2.15.
    * **Incorrect (Red Bar):** Average proof depth = 2.21.

3. **ChatGPT:**
    * **Correct (Green Bar):** Average proof depth = 1.98.
    * **Incorrect (Red Bar):** Average proof depth = 2.18.

**Trend Verification:** For all three models (Llama 2 7B, Llama 2 13B, and ChatGPT), the visual trend is consistent: the red bar (incorrect) is always longer than the green bar (correct). This indicates a higher average proof depth for incorrect answers across the board.
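The trend check can be reproduced from the data labels with a short sketch. The values below are transcribed from the chart; the names `AVG_PROOF_DEPTH` and `incorrect_deeper_for_all` are hypothetical and exist only for this illustration.

```python
# Average proof depth per model, transcribed from the chart's data labels.
# Each entry maps model name -> (correct, incorrect).
AVG_PROOF_DEPTH = {
    "Llama 2 7B": (1.86, 2.03),
    "Llama 2 13B": (2.15, 2.21),
    "ChatGPT": (1.98, 2.18),
}


def incorrect_deeper_for_all(data):
    """Return True if every model's incorrect-option depth exceeds its correct-option depth."""
    return all(incorrect > correct for correct, incorrect in data.values())


print(incorrect_deeper_for_all(AVG_PROOF_DEPTH))  # True
```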

### Key Observations

* **Consistent Pattern:** The most notable pattern is that for every model listed, the average proof depth for incorrect answers is higher than for correct answers.

* **Model Comparison:**
    * Llama 2 13B shows the highest average proof depth values for both correct (2.15) and incorrect (2.21) options among the three models.
    * Llama 2 7B shows the lowest average proof depth for both correct (1.86) and incorrect (2.03) options.
    * The difference between incorrect and correct proof depth is smallest for Llama 2 13B (0.06), intermediate for Llama 2 7B (0.17), and largest for ChatGPT (0.20).

* **Value Range:** All extracted average proof depth values fall within a narrow range, from 1.86 to 2.21 (a spread of 0.35).
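The comparisons above are simple arithmetic on the six data labels. The sketch below recomputes them; the dictionary and variable names are hypothetical, and gaps are rounded to two decimals to match the precision of the labels.

```python
# Average proof depth per model, transcribed from the chart's data labels.
# Each entry maps model name -> (correct, incorrect).
AVG_PROOF_DEPTH = {
    "Llama 2 7B": (1.86, 2.03),
    "Llama 2 13B": (2.15, 2.21),
    "ChatGPT": (1.98, 2.18),
}

# Incorrect-minus-correct gap per model, rounded to hide float noise.
gaps = {m: round(inc - cor, 2) for m, (cor, inc) in AVG_PROOF_DEPTH.items()}

# Model with the extreme value in each column (index 0 = correct, 1 = incorrect).
highest_correct = max(AVG_PROOF_DEPTH, key=lambda m: AVG_PROOF_DEPTH[m][0])
highest_incorrect = max(AVG_PROOF_DEPTH, key=lambda m: AVG_PROOF_DEPTH[m][1])
lowest_correct = min(AVG_PROOF_DEPTH, key=lambda m: AVG_PROOF_DEPTH[m][0])
lowest_incorrect = min(AVG_PROOF_DEPTH, key=lambda m: AVG_PROOF_DEPTH[m][1])

smallest_gap = min(gaps, key=gaps.get)
largest_gap = max(gaps, key=gaps.get)

# Overall range across all six values.
all_values = [v for pair in AVG_PROOF_DEPTH.values() for v in pair]
value_range = (min(all_values), max(all_values))

print(gaps)                        # {'Llama 2 7B': 0.17, 'Llama 2 13B': 0.06, 'ChatGPT': 0.2}
print(smallest_gap, largest_gap)   # Llama 2 13B ChatGPT
print(value_range)                 # (1.86, 2.21)
```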

### Interpretation

The data suggests a possible correlation between the depth of the reasoning chain (as measured by "proof depth") and the correctness of the model's output. Specifically, **incorrect answers tend to be associated with deeper proof chains** than correct answers for these models on the E-CARE benchmark. The gaps are small, however (0.06 to 0.20), and because the chart reports only averages, it does not show whether these differences are statistically significant.

Several explanations are possible:

1. **Overthinking/Confabulation:** Models may generate longer, more convoluted justifications when they are uncertain or "hallucinating," leading to incorrect conclusions.

2. **Error Propagation:** A longer chain of reasoning provides more opportunities for a logical error to occur, which could result in an incorrect final answer.

3. **Task Difficulty:** Questions that elicit incorrect answers might inherently be more difficult, prompting the model to attempt a longer, but ultimately flawed, reasoning process.
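The error-propagation explanation can be made concrete with a toy model. Assume, purely for illustration, that each reasoning step fails independently with the same probability p; then a chain of depth d is error-free with probability (1 − p)^d, which shrinks as d grows. The function name and the value p = 0.1 are hypothetical and are not fitted to the chart.

```python
def chain_success_probability(depth, step_error_rate):
    """Probability that all `depth` steps are correct, ASSUMING each step
    fails independently with probability `step_error_rate` (a toy model)."""
    if depth < 0 or not 0.0 <= step_error_rate <= 1.0:
        raise ValueError("depth must be >= 0 and step_error_rate must be in [0, 1]")
    return (1.0 - step_error_rate) ** depth


# Hypothetical per-step error rate of 10%.
for d in range(1, 5):
    print(d, round(chain_success_probability(d, 0.1), 4))
# 1 0.9
# 2 0.81
# 3 0.729
# 4 0.6561
```

The model shows only the direction of the effect. The observed depth gaps are well under one step, so this sketch says nothing about how much of the chart's pattern error propagation actually explains.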

The fact that this pattern holds across three models from two families (two sizes of Llama 2, plus ChatGPT) suggests it may be a general characteristic of how these LLMs perform on this type of reasoning task, rather than an artifact of a single model's architecture. The chart does not define "proof depth," but the metric is central to this analysis; it likely counts the number of logical steps or inferences in a generated explanation.