
## Diagram: Direct Preference Optimization (DPO) and Step-DPO

### Overview

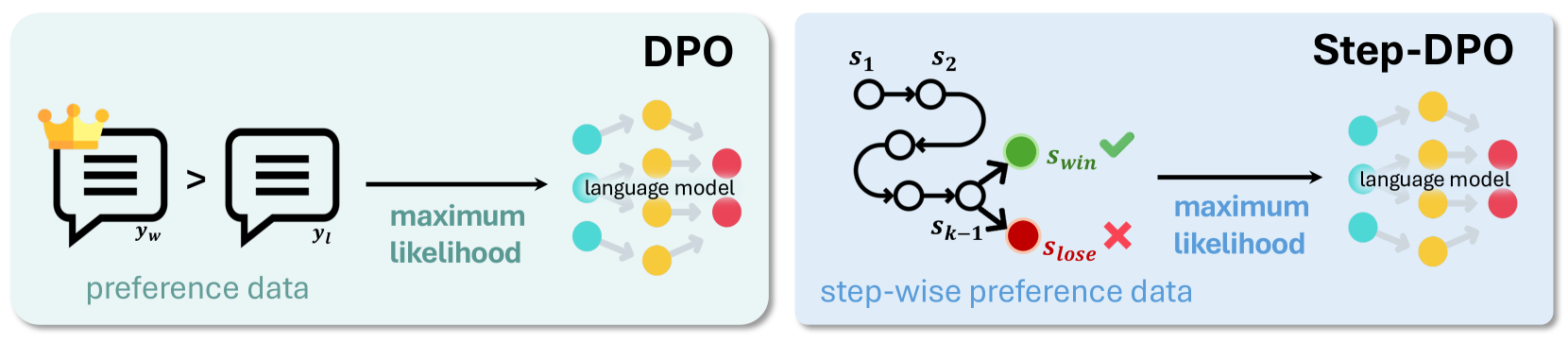

The image is a diagram illustrating the concepts of Direct Preference Optimization (DPO) and Step-DPO, two methods for training language models based on preference data. It visually represents how preference data is used to refine a language model through a maximum likelihood approach. The diagram is divided into three main sections: preference data input, DPO process, and Step-DPO process.

### Components/Axes

The diagram consists of the following components:

* **Preference Data:** Represented by two speech bubble icons, one with a crown (y<sub>w</sub> - "win") and the other without (y<sub>l</sub> - "lose"). An arrow indicates the preference relationship (y<sub>w</sub> > y<sub>l</sub>).

* **Language Model:** Represented by a cluster of colored circles (yellow, orange, light blue, pink, purple, and red).

* **DPO:** A section showing the transformation of preference data into language model updates via "maximum likelihood".

* **Step-DPO:** A section showing step-wise preference data and its impact on the language model, also via "maximum likelihood".

* **Step-wise Preference Data:** Represented by a series of connected circles (s<sub>1</sub>, s<sub>2</sub>, ..., s<sub>k-1</sub>, s<sub>lose</sub>, s<sub>win</sub>) with arrows indicating the flow of preference. A green checkmark indicates the "win" (s<sub>win</sub>) and a red 'X' indicates the "lose" (s<sub>lose</sub>).

* **Text Labels:** "preference data", "DPO", "Step-DPO", "maximum likelihood", "step-wise preference data".

* **Mathematical Notation:** y<sub>w</sub>, y<sub>l</sub>, s<sub>1</sub>, s<sub>2</sub>, s<sub>k-1</sub>, s<sub>lose</sub>, s<sub>win</sub>.
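
To make the two kinds of preference data listed above concrete, here is a minimal Python sketch of how such records might be stored. Only the symbols y<sub>w</sub>, y<sub>l</sub>, s<sub>1</sub> ... s<sub>k-1</sub>, s<sub>win</sub>, and s<sub>lose</sub> come from the diagram. The class names, the `prompt` field, the `context` helper, and the arithmetic example are hypothetical and invented for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PreferencePair:
    """DPO-style record: two complete responses, one preferred over the other."""
    prompt: str  # assumed field; the diagram does not show a prompt
    y_w: str     # preferred ("win") response
    y_l: str     # dispreferred ("lose") response


@dataclass
class StepPreferencePair:
    """Step-DPO-style record: two candidate next steps after shared earlier steps."""
    prompt: str                                            # assumed field
    prefix_steps: List[str] = field(default_factory=list)  # s_1 ... s_{k-1}
    s_win: str = ""                                        # preferred step
    s_lose: str = ""                                       # dispreferred step

    def context(self) -> str:
        """Text that both candidate steps are conditioned on."""
        return "\n".join([self.prompt, *self.prefix_steps])


# Toy records, made up for illustration.
pair = PreferencePair(prompt="What is 2 + 3 * 4?", y_w="14", y_l="20")
step_pair = StepPreferencePair(
    prompt="What is 2 + 3 * 4?",
    prefix_steps=["Step 1: Multiply first: 3 * 4 = 12."],
    s_win="Step 2: Add 2 + 12 = 14.",
    s_lose="Step 2: Add 2 + 12 = 15.",
)
```

The design point the sketch tries to capture is that a step-wise record carries the shared earlier steps explicitly, so the preference applies to one step rather than to the whole response.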

### Detailed Analysis / Content Details

The diagram illustrates a process flow.

1. **Preference Data:** The process begins with preference data, where one response (y<sub>w</sub>) is preferred over another (y<sub>l</sub>). This is indicated by the arrow pointing from y<sub>l</sub> to y<sub>w</sub>.

2. **DPO:** The preference data is then fed into the DPO process, which uses a "maximum likelihood" approach to update the language model. The language model is represented by a cluster of colored circles.

3. **Step-DPO:** The Step-DPO process takes step-wise preference data (s<sub>1</sub>, s<sub>2</sub>, ..., s<sub>k-1</sub>, s<sub>lose</sub>, s<sub>win</sub>). The preference is indicated by the green checkmark on s<sub>win</sub> and the red 'X' on s<sub>lose</sub>. This data is also used with a "maximum likelihood" approach to update the language model.

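The language model in both DPO and Step-DPO appears to be the same, represented by the same color scheme of circles. The two branches differ in the unit that carries the preference: DPO compares whole responses (y<sub>w</sub> versus y<sub>l</sub>), while Step-DPO compares a single pair of candidate steps (s<sub>win</sub> versus s<sub>lose</sub>) that follow the shared steps s<sub>1</sub> through s<sub>k-1</sub>.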
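
The "maximum likelihood" update in both branches can be sketched numerically. The diagram does not show a loss function, so the code below is an assumption based on the commonly published DPO objective: a logistic loss on the difference of log-probability ratios between the model being trained and a frozen reference model. The function names, the reference model, the `beta` parameter, and the toy numbers are not taken from the diagram.

```python
import math


def log_sigmoid(z: float) -> float:
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))


def dpo_loss(logp_w: float, logp_l: float,
             ref_logp_w: float, ref_logp_l: float,
             beta: float = 0.1) -> float:
    """Negative log-likelihood of one preference pair.

    logp_* are log-probabilities under the model being trained;
    ref_logp_* are log-probabilities under a frozen reference model.
    """
    margin = beta * ((logp_w - ref_logp_w) - (logp_l - ref_logp_l))
    return -log_sigmoid(margin)


def step_dpo_loss(logp_s_win: float, logp_s_lose: float,
                  ref_logp_s_win: float, ref_logp_s_lose: float,
                  beta: float = 0.1) -> float:
    """Same loss, applied to one step pair.

    Each log-probability is assumed to be that of a single step,
    conditioned on the prompt and the shared steps s_1 ... s_{k-1}.
    """
    return dpo_loss(logp_s_win, logp_s_lose,
                    ref_logp_s_win, ref_logp_s_lose, beta)


# Toy log-probabilities, invented for illustration only.
unchanged = dpo_loss(-5.0, -7.0, -5.0, -7.0)  # model identical to the reference
improved = dpo_loss(-4.0, -8.0, -5.0, -7.0)   # model favors the winner more than the reference does
```

In this sketch the loss equals log 2 when the model matches the reference and falls as the model shifts probability toward the preferred output. The only difference between the two functions is what the log-probabilities refer to: whole responses for DPO, single steps for Step-DPO.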

### Key Observations

* Both DPO and Step-DPO utilize a "maximum likelihood" approach for updating the language model.

* Step-DPO explicitly models step-wise preference data, suggesting that preferences are expressed between individual steps of a response rather than between whole responses.

* The color scheme of the language model circles is consistent across both DPO and Step-DPO, indicating that the underlying model is the same.

* The diagram does not provide any numerical data or specific values. It is a conceptual illustration of the processes.

### Interpretation

The diagram demonstrates two approaches to refining a language model based on preference data. DPO optimizes the model on pairwise preferences between complete responses, while Step-DPO applies the same kind of optimization to pairwise preferences between individual steps that share the preceding steps s<sub>1</sub> through s<sub>k-1</sub>. The "maximum likelihood" label in both branches indicates that training maximizes the likelihood of the observed preferences, so the model is pushed to assign higher probability to the preferred output than to the rejected one.
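
As a hedged gloss on the "maximum likelihood" label, which the diagram does not spell out: in the usual DPO formulation, the probability that y<sub>w</sub> is preferred over y<sub>l</sub> is modeled as shown below, and training maximizes the logarithm of this probability over the preference data. The symbols x (the prompt), π<sub>θ</sub> (the model being trained), π<sub>ref</sub> (a frozen reference model), β (a scaling constant), and σ (the logistic function) do not appear in the diagram and are assumptions taken from that formulation.

$$
p_\theta(y_w \succ y_l \mid x) = \sigma\!\left(\beta \log \frac{\pi_\theta(y_w \mid x)}{\pi_{\mathrm{ref}}(y_w \mid x)} - \beta \log \frac{\pi_\theta(y_l \mid x)}{\pi_{\mathrm{ref}}(y_l \mid x)}\right)
$$

Under the same assumptions, the step-wise version would replace y<sub>w</sub> and y<sub>l</sub> with s<sub>win</sub> and s<sub>lose</sub>, and condition every probability on both x and the shared steps s<sub>1</sub> through s<sub>k-1</sub>.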

Step-level preferences plausibly suit multi-step outputs such as chains of reasoning, where a single wrong step can invalidate an otherwise sound response and a response-level label does not reveal which step was at fault. Response-level DPO is the simpler fit when preferences are only available for complete responses.

The diagram highlights the role of preference data in aligning language models with what is judged to be the better output. It suggests that by incorporating such feedback, these models can be trained to generate more desirable and helpful responses. It remains a conceptual overview: it does not show the loss functions, the training procedure, or any performance metrics.