
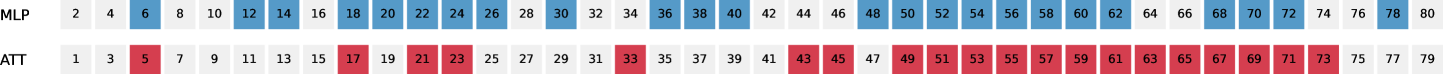

## Table Diagram: MLP and ATT Layer Activation Indices

### Overview

The image displays a two-row table or diagram comparing two sequences of numerical indices, labeled "MLP" and "ATT". The numbers are arranged horizontally in ascending order. Specific numbers in each row are highlighted with a colored background, suggesting a selection, activation, or grouping of those particular indices. The visual presentation is simple, using a monospaced font on a white background with colored cell highlights.

### Components/Axes

* **Row Labels (Left-aligned):**
  * Top Row: `MLP`
  * Bottom Row: `ATT`
* **Data Series:** Each row contains a sequence of integers.
  * **MLP Row:** Contains even numbers from 2 to 80.
  * **ATT Row:** Contains odd numbers from 1 to 79.
* **Highlighting Scheme:**
  * **MLP:** Selected numbers are highlighted with a medium blue background.
  * **ATT:** Selected numbers are highlighted with a medium red background.
* **Spatial Layout:** The numbers are presented in a single, continuous horizontal line for each row, starting from the left edge. The highlighting is applied to the background of individual number cells.

### Detailed Analysis

**1. MLP Row (Even Numbers, 2-80):**

* **Full Sequence:** 2, 4, 6, 8, 10, 12, 14, 16, 18, 20, 22, 24, 26, 28, 30, 32, 34, 36, 38, 40, 42, 44, 46, 48, 50, 52, 54, 56, 58, 60, 62, 64, 66, 68, 70, 72, 74, 76, 78, 80.

* **Highlighted (Blue) Indices:** 6, 18, 20, 22, 24, 26, 28, 30, 36, 38, 40, 42, 44, 46, 48, 50, 52, 54, 56, 58, 60, 62, 64, 66, 68, 70, 72, 74, 76, 78, 80.

* **Pattern:** The first highlighted index is 6 (2 and 4 are not highlighted). After a gap (8-16), a contiguous block from 18 to 30 is highlighted. A second, shorter gap follows (32, 34). From 36 onward, every even number up to 80 is highlighted.
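The run structure described above can be checked mechanically. The sketch below assumes the highlighted set is exactly the transcribed list (it is not derived from any source data), and `contiguous_runs` is a hypothetical helper written only for this illustration: it groups highlighted entries into maximal runs of consecutive row positions.

```python
# Even indices in the MLP row, as transcribed from the figure.
MLP_ALL = list(range(2, 81, 2))
# Highlighted (blue) MLP indices: 6, 18-30 and 36-80 (even numbers only).
MLP_HI = {6, *range(18, 31, 2), *range(36, 81, 2)}

def contiguous_runs(all_indices, highlighted):
    """Return (first, last) pairs for each maximal run of consecutive
    entries of `all_indices` that are all in `highlighted`."""
    runs, current = [], []
    for idx in all_indices:
        if idx in highlighted:
            current.append(idx)
        elif current:
            # A non-highlighted entry ends the current run.
            runs.append((current[0], current[-1]))
            current = []
    if current:  # close a run that reaches the end of the row
        runs.append((current[0], current[-1]))
    return runs

print(len(MLP_HI))                              # 31
print(contiguous_runs(MLP_ALL, MLP_HI))         # [(6, 6), (18, 30), (36, 80)]
print([i for i in MLP_ALL if i not in MLP_HI])  # [2, 4, 8, 10, 12, 14, 16, 32, 34]
```

The three runs and the nine unhighlighted indices together account for all 40 positions in the row.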

**2. ATT Row (Odd Numbers, 1-79):**

* **Full Sequence:** 1, 3, 5, 7, 9, 11, 13, 15, 17, 19, 21, 23, 25, 27, 29, 31, 33, 35, 37, 39, 41, 43, 45, 47, 49, 51, 53, 55, 57, 59, 61, 63, 65, 67, 69, 71, 73, 75, 77, 79.

* **Highlighted (Red) Indices:** 5, 17, 21, 23, 33, 43, 45, 49, 53, 63, 67, 73.

* **Pattern:** The highlighting is more sporadic than in the MLP row, with no contiguous block longer than two adjacent indices. The only adjacent pairs are 21 & 23 and 43 & 45; elsewhere the gaps between consecutive highlights range from 4 to 12.
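To quantify how irregular the ATT highlights are, one can list the distance between consecutive highlighted indices. This is a minimal sketch that assumes the transcribed list above is complete; because the ATT row contains only odd numbers, a gap of 2 means two neighbouring cells are both highlighted.

```python
# Highlighted (red) ATT indices, as transcribed from the figure.
ATT_HI = [5, 17, 21, 23, 33, 43, 45, 49, 53, 63, 67, 73]

# Distance between consecutive highlights; 2 is the smallest possible step
# because the ATT row contains only odd numbers.
gaps = [b - a for a, b in zip(ATT_HI, ATT_HI[1:])]
# Pairs of highlights that sit in neighbouring cells of the row.
adjacent_pairs = [(a, b) for a, b in zip(ATT_HI, ATT_HI[1:]) if b - a == 2]

print(gaps)            # [12, 4, 2, 10, 10, 2, 4, 4, 10, 4, 6]
print(adjacent_pairs)  # [(21, 23), (43, 45)]
```

The gaps show no steady trend (they rise and fall between 2 and 12), which is what distinguishes this row from the MLP row's single long run.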

### Key Observations

1. **Complementary Sequences:** The two rows form complementary sets of integers: MLP uses even numbers, ATT uses odd numbers.

2. **Divergent Highlighting Patterns:** The MLP row shows a clear trend towards **increasing density** of highlighted indices as the numbers increase, culminating in a solid block from 36 to 80. The ATT row maintains a **sparse and irregular** highlighting pattern throughout its range.

3. **Potential Correlation:** There is a loose visual correlation: an index highlighted in one row often has a highlighted neighbour in the other (e.g., MLP 6 / ATT 5, MLP 18 / ATT 17, MLP 42/44 / ATT 43/45). However, this is not a strict one-to-one mapping; for example, ATT 33 is highlighted while both of its MLP neighbours (32 and 34) are not.
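The neighbour correlation can be tested directly by asking, for each highlighted ATT index `a`, whether MLP index `a - 1` or `a + 1` is highlighted. This is a sketch under the assumption that "nearby" means immediately adjacent in the combined 1-80 numbering; `has_mlp_neighbor` is a hypothetical helper, and both sets are transcribed from the lists above.

```python
# Highlighted indices transcribed from the figure.
MLP_HI = {6, *range(18, 31, 2), *range(36, 81, 2)}
ATT_HI = [5, 17, 21, 23, 33, 43, 45, 49, 53, 63, 67, 73]

def has_mlp_neighbor(att_idx, mlp_hi):
    """True if the even index directly before or after att_idx is highlighted in MLP."""
    return (att_idx - 1) in mlp_hi or (att_idx + 1) in mlp_hi

unmatched = [a for a in ATT_HI if not has_mlp_neighbor(a, MLP_HI)]
matched = len(ATT_HI) - len(unmatched)
print(matched, unmatched)  # 11 [33]
```

The 11-of-12 match rate overstates the evidence: since every MLP index from 36 to 80 is highlighted, any ATT index of 35 or above has a highlighted neighbour automatically. Only the lower indices (5, 17, 21, 23 matched; 33 unmatched) carry information about a genuine pairing.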

4. **Data Density:** The MLP row contains 40 total numbers with 31 highlighted (77.5% highlighted). The ATT row contains 40 total numbers with 12 highlighted (30% highlighted).
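The density figures above, and the claim that MLP highlighting grows denser with index, can be reproduced by counting highlights over each whole row and over its lower and upper halves. The sketch assumes the transcribed sets are exact; `pct_highlighted` is a hypothetical helper.

```python
# Full rows and highlighted sets, transcribed from the figure.
MLP_ALL = list(range(2, 81, 2))
ATT_ALL = list(range(1, 80, 2))
MLP_HI = {6, *range(18, 31, 2), *range(36, 81, 2)}
ATT_HI = {5, 17, 21, 23, 33, 43, 45, 49, 53, 63, 67, 73}

def pct_highlighted(indices, highlighted):
    """Percentage of `indices` that appear in `highlighted`."""
    hits = sum(1 for i in indices if i in highlighted)
    return 100 * hits / len(indices)

for name, all_idx, hi in [("MLP", MLP_ALL, MLP_HI), ("ATT", ATT_ALL, ATT_HI)]:
    half = len(all_idx) // 2
    # Columns: whole row, lower half, upper half.
    print(name,
          pct_highlighted(all_idx, hi),
          pct_highlighted(all_idx[:half], hi),
          pct_highlighted(all_idx[half:], hi))
# MLP 77.5 55.0 100.0
# ATT 30.0 25.0 35.0
```

The split makes the divergence concrete: MLP coverage rises from 55% in the lower half to 100% in the upper half, while ATT coverage stays between 25% and 35%.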

### Interpretation

This diagram likely represents the **activation pattern or selection of layers/units** within two different components of a neural network architecture, specifically a Multi-Layer Perceptron (MLP) and an Attention mechanism (ATT).

* **What the data suggests:** If the indices correspond to network depth, the pattern implies that MLP layers are selected almost everywhere in the deeper part of the network (every index from 36 onward), while attention layers are selected only at scattered depths. This suggests that attention contributes through specific, targeted computations at certain layers rather than through broad engagement that increases with depth.

* **Relationship between elements:** The complementary even/odd numbering may simply be a labeling convention to distinguish between the two parallel sequences. The loose correlation between highlighted indices in MLP and ATT could indicate points in the network where both components are actively processing information, potentially for integrated feature transformation.
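One alternative reading of the even/odd split, offered here only as an assumption the figure does not confirm, is that the 80 indices number interleaved sublayers: in a standard Transformer block an attention sublayer is followed by an MLP sublayer, so consecutive numbering from 1 would put attention at odd indices and MLP at even ones, giving 40 blocks. The hypothetical `sublayer_to_block` helper below encodes that mapping and lists the blocks in which both sublayers are highlighted.

```python
# Highlighted indices transcribed from the figure.
MLP_HI = {6, *range(18, 31, 2), *range(36, 81, 2)}
ATT_HI = {5, 17, 21, 23, 33, 43, 45, 49, 53, 63, 67, 73}

def sublayer_to_block(idx):
    """Hypothetical mapping: odd index -> ATT sublayer, even -> MLP sublayer,
    with 1-based block b holding ATT at 2b-1 and MLP at 2b."""
    kind = "ATT" if idx % 2 == 1 else "MLP"
    return (idx + 1) // 2, kind

# Blocks whose attention AND MLP sublayers are both highlighted.
both = [b for b in range(1, 41) if (2 * b - 1) in ATT_HI and (2 * b) in MLP_HI]

print(sublayer_to_block(5), sublayer_to_block(6))  # (3, 'ATT') (3, 'MLP')
print(len(both), both)  # 11 [3, 9, 11, 12, 22, 23, 25, 27, 32, 34, 37]
```

Under this reading, 11 of the 12 highlighted attention sublayers share a block with a highlighted MLP sublayer; block 17 (ATT 33, MLP 34) is the exception.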

* **Notable anomaly/trend:** The most significant trend is the **phase shift** in the MLP row. The transition from sporadic highlighting (indices 6, 18-30) to complete saturation (36-80) suggests a potential architectural boundary or a change in the role of the MLP subnetwork at a certain depth. The ATT row, by contrast, stays sparse across its whole range (30% highlighted), so whatever criterion drives the selection picks out only a few attention layers at any depth.

**In summary, the figure is a categorical index map rather than a plot of continuous values: it marks which indices of two model components are selected. Read as layer indices, it indicates that MLP layers are selected almost uniformly in the later stages of the network, while attention layers are selected sparsely and selectively throughout.**