
## Charts: Training Dynamics of a Neural Network

### Overview

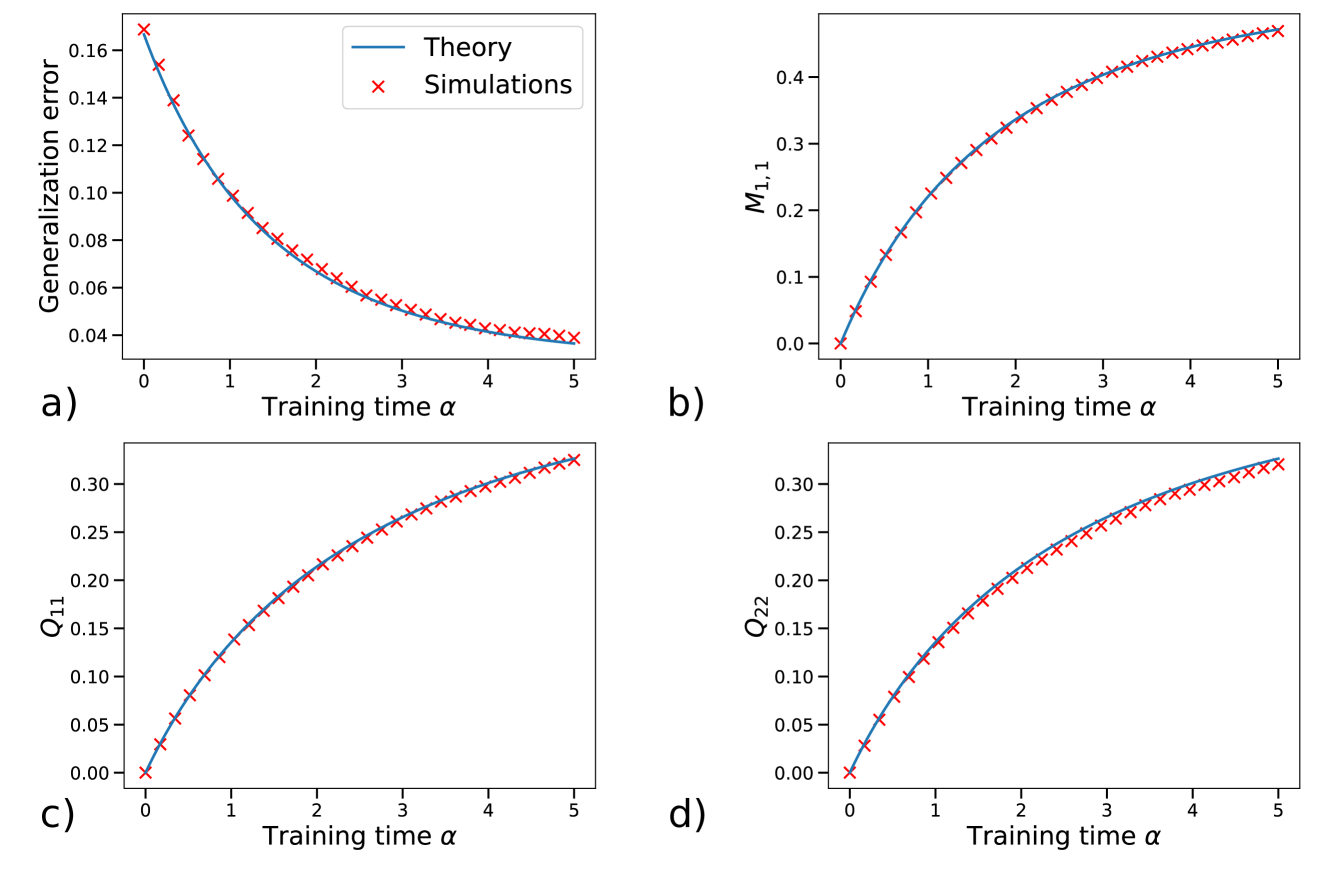

The figure contains four charts (a, b, c, and d) illustrating the training dynamics of a neural network. Each chart plots a different metric against training time, denoted α. The charts compare theoretical predictions (blue solid lines) with simulation results (red 'x' markers).

### Components/Axes

All four charts share the following components:

* **X-axis:** Labeled "Training time α", ranging from 0 to 5.

* **Y-axis:** Each chart has a unique Y-axis label representing the metric being plotted.

* **Legend:** Located in the top-left corner of each chart, distinguishing between "Theory" (blue solid line) and "Simulations" (red 'x' markers).

* **Chart Labels:** Each chart is labeled with a letter (a, b, c, d) in the bottom-left corner.

Specifically:

* **Chart a:** Y-axis labeled "Generalization error", ranging from 0 to 0.18.

* **Chart b:** Y-axis labeled "M<sub>1,1</sub>", ranging from 0 to 0.5.

* **Chart c:** Y-axis labeled "Q<sub>1,1</sub>", ranging from 0 to 0.35.

* **Chart d:** Y-axis labeled "Q<sub>2,2</sub>", ranging from 0 to 0.35.

### Detailed Analysis

**Chart a: Generalization Error vs. Training Time**

* **Trend:** Both the "Theory" line and the "Simulations" markers show a decreasing trend, indicating that the generalization error decreases as training time increases. The rate of decrease slows down as training time progresses.

* **Data Points (approximate):**

    * α = 0: Theory ≈ 0.165, Simulations ≈ 0.165
    * α = 1: Theory ≈ 0.11, Simulations ≈ 0.11
    * α = 2: Theory ≈ 0.075, Simulations ≈ 0.07
    * α = 3: Theory ≈ 0.05, Simulations ≈ 0.048
    * α = 4: Theory ≈ 0.035, Simulations ≈ 0.03
    * α = 5: Theory ≈ 0.025, Simulations ≈ 0.022
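The slowing decrease in chart a can be checked directly from the approximate "Theory" readings listed above. The following Python sketch computes the change in generalization error over each unit interval of α. The numbers are the eyeballed readings from this description, not the underlying data, and the variable names are illustrative.

```python
# Approximate "Theory" readings for chart a, taken from the list above.
alphas = [0, 1, 2, 3, 4, 5]
gen_error = [0.165, 0.11, 0.075, 0.05, 0.035, 0.025]

# Change in generalization error over each unit interval of alpha.
steps = [round(b - a, 3) for a, b in zip(gen_error, gen_error[1:])]

for start, step in zip(alphas, steps):
    print(f"alpha {start} -> {start + 1}: change = {step:+.3f}")

# The error keeps falling, but by a smaller amount on each interval.
is_decreasing = all(s < 0 for s in steps)
is_slowing = all(abs(later) < abs(earlier)
                 for earlier, later in zip(steps, steps[1:]))
print("decreasing:", is_decreasing, "| slowing:", is_slowing)
```

Because the inputs are read off a plot, the individual differences are only indicative; the ordering of their magnitudes is the part that supports the stated trend.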

**Chart b: M<sub>1,1</sub> vs. Training Time**

* **Trend:** Both the "Theory" line and the "Simulations" markers show an increasing trend, indicating that M<sub>1,1</sub> increases as training time increases. The rate of increase slows down as training time progresses.

* **Data Points (approximate):**

    * α = 0: Theory ≈ 0.02, Simulations ≈ 0.02
    * α = 1: Theory ≈ 0.1, Simulations ≈ 0.09
    * α = 2: Theory ≈ 0.2, Simulations ≈ 0.19
    * α = 3: Theory ≈ 0.28, Simulations ≈ 0.27
    * α = 4: Theory ≈ 0.34, Simulations ≈ 0.33
    * α = 5: Theory ≈ 0.38, Simulations ≈ 0.37

**Chart c: Q<sub>1,1</sub> vs. Training Time**

* **Trend:** Both the "Theory" line and the "Simulations" markers show an increasing trend, indicating that Q<sub>1,1</sub> increases as training time increases. The rate of increase slows down as training time progresses.

* **Data Points (approximate):**

    * α = 0: Theory ≈ 0.03, Simulations ≈ 0.03
    * α = 1: Theory ≈ 0.08, Simulations ≈ 0.07
    * α = 2: Theory ≈ 0.15, Simulations ≈ 0.14
    * α = 3: Theory ≈ 0.21, Simulations ≈ 0.20
    * α = 4: Theory ≈ 0.26, Simulations ≈ 0.25
    * α = 5: Theory ≈ 0.30, Simulations ≈ 0.29

**Chart d: Q<sub>2,2</sub> vs. Training Time**

* **Trend:** Both the "Theory" line and the "Simulations" markers show an increasing trend, indicating that Q<sub>2,2</sub> increases as training time increases. The rate of increase slows down as training time progresses.

* **Data Points (approximate):**

    * α = 0: Theory ≈ 0.02, Simulations ≈ 0.02
    * α = 1: Theory ≈ 0.07, Simulations ≈ 0.06
    * α = 2: Theory ≈ 0.14, Simulations ≈ 0.13
    * α = 3: Theory ≈ 0.20, Simulations ≈ 0.19
    * α = 4: Theory ≈ 0.25, Simulations ≈ 0.24
    * α = 5: Theory ≈ 0.29, Simulations ≈ 0.28

### Key Observations

* Across all four charts, the simulation markers closely track the theoretical curves. In the approximate readings listed under Detailed Analysis, the two differ by at most about 0.01, with simulations at or slightly below theory.

* The generalization error (Chart a) decreases monotonically with training time, as expected.

* M<sub>1,1</sub>, Q<sub>1,1</sub>, and Q<sub>2,2</sub> all increase monotonically with training time over the plotted range.

* The rate of change of every metric diminishes toward the end of the plotted range, which is consistent with a gradual approach to a stable state.
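The agreement between theory and simulations can be quantified from the approximate readings in the Detailed Analysis section. The Python sketch below computes the largest absolute theory-simulation gap per chart. The values are estimates read from the plots, so the result is only as precise as those readings, and the dictionary keys and function name are illustrative.

```python
# Approximate readings at alpha = 0, 1, ..., 5, copied from the lists above.
# Each entry is (theory, simulations).
readings = {
    "a": ([0.165, 0.11, 0.075, 0.05, 0.035, 0.025],
          [0.165, 0.11, 0.07, 0.048, 0.03, 0.022]),
    "b": ([0.02, 0.1, 0.2, 0.28, 0.34, 0.38],
          [0.02, 0.09, 0.19, 0.27, 0.33, 0.37]),
    "c": ([0.03, 0.08, 0.15, 0.21, 0.26, 0.30],
          [0.03, 0.07, 0.14, 0.20, 0.25, 0.29]),
    "d": ([0.02, 0.07, 0.14, 0.20, 0.25, 0.29],
          [0.02, 0.06, 0.13, 0.19, 0.24, 0.28]),
}


def max_gap(theory, sims):
    """Largest absolute theory-simulation difference, rounded to 3 decimals."""
    return round(max(abs(t - s) for t, s in zip(theory, sims)), 3)


gaps = {chart: max_gap(theory, sims)
        for chart, (theory, sims) in readings.items()}

for chart, gap in gaps.items():
    print(f"chart {chart}: max |theory - simulations| = {gap:.3f}")
```

A gap of this size is comparable to the precision with which values can be read off the axes, so it should not be over-interpreted as a systematic deviation.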

### Interpretation

The charts track a neural network during training and compare theoretical predictions with simulation results. The falling generalization error shows that performance on unseen data improves as training proceeds. The figure does not define M<sub>1,1</sub>, Q<sub>1,1</sub>, or Q<sub>2,2</sub>. The indexed notation is consistent with order parameters (overlap matrices) of the kind used in teacher-student analyses of learning, where M commonly denotes student-teacher overlaps and Q student-student overlaps, but that reading is an assumption rather than something the charts state. The close agreement between "Theory" and "Simulations" suggests that the theoretical model captures the essential training dynamics over the plotted range. The slowing rate of change suggests that the network is approaching convergence, although none of the curves has fully flattened by α = 5, so further training may still yield modest improvements.
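One possible reading of the M and Q notation, which the figure itself does not confirm, is that they are overlap order parameters: normalized dot products between weight vectors. Under that assumption, the Python sketch below shows how such quantities could be computed. All names (`student`, `teacher`, `overlap`), the sizes `N` and `K`, and the random weights are hypothetical and chosen only for illustration; nothing here reproduces the figure's model or data.

```python
import random


def overlap(u, v):
    """Normalized dot product u.v / N for two weight vectors of length N."""
    return sum(a * b for a, b in zip(u, v)) / len(u)


random.seed(0)
N = 1000  # input dimension (hypothetical)
K = 2     # number of hidden units (hypothetical)

# Hypothetical weights: one row per hidden unit, standard normal entries.
teacher = [[random.gauss(0, 1) for _ in range(N)] for _ in range(K)]
student = [[random.gauss(0, 1) for _ in range(N)] for _ in range(K)]

# Assumed definitions (not taken from the figure):
#   M[k][m] = student_k . teacher_m / N   (student-teacher overlap)
#   Q[k][l] = student_k . student_l / N   (student-student overlap)
M = [[overlap(w, w_star) for w_star in teacher] for w in student]
Q = [[overlap(w, w_other) for w_other in student] for w in student]

print("M_1,1 =", M[0][0])
print("Q_1,1 =", Q[0][0], " Q_2,2 =", Q[1][1])
```

For independent random weights such as these, M<sub>1,1</sub> is close to zero and the diagonal entries of Q are close to the weight variance. How these quantities evolve during training depends on the model and learning rule, which the figure does not specify.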