## Diagram: LLM Response Quality Evaluation via Uncertainty Estimation

### Overview

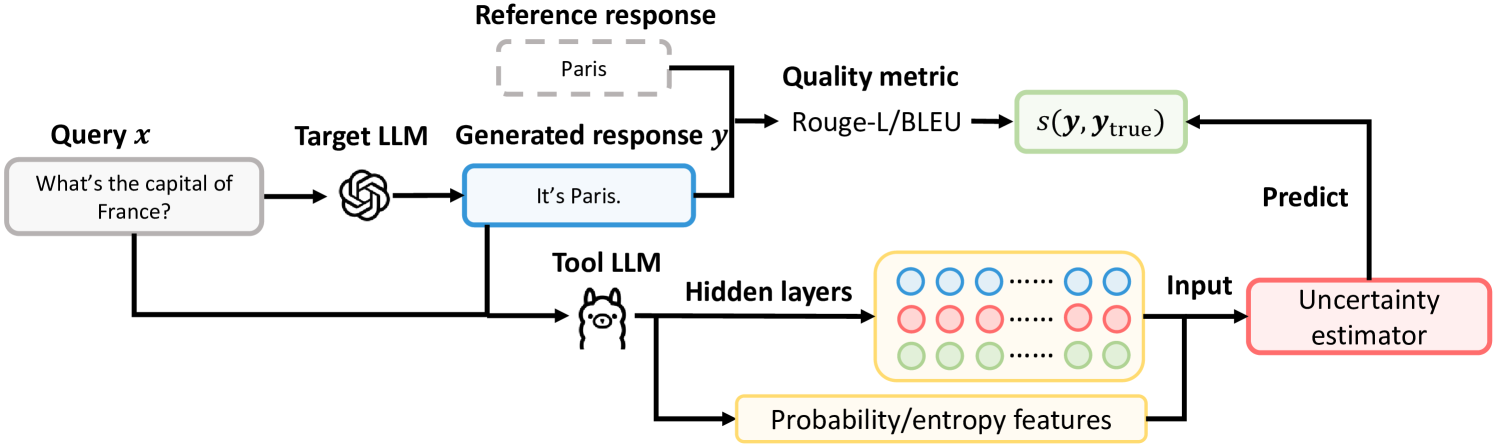

This diagram illustrates a process for evaluating the quality of a response generated by a Large Language Model (LLM), using a secondary "Tool LLM" to estimate uncertainty. One path scores the generated response against a reference answer with a standard metric (ROUGE-L or BLEU, labeled "Rouge-L/BLEU" in the figure). A second path extracts internal features from the Tool LLM and feeds them to an uncertainty estimator that predicts that same score.

### Components/Axes

The diagram is a flowchart with labeled components connected by directional arrows indicating data flow. The primary language is English.

**Key Components (from left to right):**

1. **Query x**: A gray, rounded rectangle containing the example text: "What's the capital of France?".

2. **Target LLM**: Represented by a stylized brain/gear icon. It receives the query.

3. **Generated response y**: A blue, rounded rectangle containing the text: "It's Paris.".

4. **Reference response**: A dashed gray box containing the text: "Paris". This is the ground truth.

5. **Quality metric**: A label above a process arrow. The specific metrics listed are "Rouge-L/BLEU".

6. **s(y, y_true)**: A green, rounded rectangle representing the calculated similarity score between the generated response (y) and the true reference (y_true).

7. **Tool LLM**: Represented by a cat-like icon. It receives two inputs: the original "Query x" and the "Generated response y".

8. **Hidden layers**: A yellow box containing a grid of circles (blue, red, green) representing neural network activations. An arrow from the Tool LLM points to this box.

9. **Probability/entropy features**: A yellow, rounded rectangle below the "Hidden layers" box. An arrow from the Tool LLM also points here.

10. **Input**: A label on an arrow combining data from "Hidden layers" and "Probability/entropy features".

11. **Uncertainty estimator**: A red, rounded rectangle. It receives the combined "Input".

12. **Predict**: A label on an arrow pointing from the "Uncertainty estimator" back to the "s(y, y_true)" score box.

### Detailed Analysis

The process flow is as follows:

1. **Primary Generation Path (Top Flow):**

* A **Query x** ("What's the capital of France?") is fed into a **Target LLM**.

* The Target LLM produces a **Generated response y** ("It's Paris.").

* This generated response is compared to a **Reference response** ("Paris") using a **Quality metric** (Rouge-L/BLEU).

* The output of this comparison is a similarity score, denoted as **s(y, y_true)**.

2. **Uncertainty Estimation Path (Bottom Flow):**

* The same **Query x** and the **Generated response y** are both fed into a separate **Tool LLM**.

* The Tool LLM processes these inputs. Its internal states are tapped at two points:

  * **Hidden layers**: The activations from the model's neural network layers.

  * **Probability/entropy features**: Derived statistical features from the model's output distribution.

* These two data streams are combined as an **Input** to an **Uncertainty estimator** module.

* The **Uncertainty estimator** produces a prediction (**Predict**).

* This prediction points at the **s(y, y_true)** score box. The most direct reading is that the estimator's output is an estimate of the quality score, with the metric-based score serving as the value it tries to match.
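The top flow can be made concrete with a small sketch of a ROUGE-L-style score for the example in the figure. This is a simplified illustration, not the scoring code behind the diagram: the tokenizer (lowercased alphanumeric runs) and the use of a balanced F1 are assumptions, and the function names are hypothetical.

```python
import re


def tokenize(text):
    # Assumption: lowercase alphanumeric runs; real ROUGE tooling may tokenize differently.
    return re.findall(r"[a-z0-9]+", text.lower())


def lcs_length(a, b):
    # Dynamic-programming length of the longest common subsequence of two token lists.
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(candidate, reference):
    # Balanced F-measure over LCS-based precision and recall (an assumed weighting).
    cand, ref = tokenize(candidate), tokenize(reference)
    if not cand or not ref:
        return 0.0
    lcs = lcs_length(cand, ref)
    if lcs == 0:
        return 0.0
    precision = lcs / len(cand)
    recall = lcs / len(ref)
    return 2 * precision * recall / (precision + recall)


# s(y, y_true) for the figure's example: generated "It's Paris." vs reference "Paris".
score = rouge_l_f1("It's Paris.", "Paris")
```

Under these assumptions the generated response tokenizes to three tokens and the reference to one, so recall is 1 and precision is 1/3, giving a score of 0.5. A correct answer can therefore receive a middling score when it is phrased more verbosely than the reference.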

### Key Observations

* **Dual Evaluation**: The system has two paths to the same quantity. The reference-based metric (Rouge-L/BLEU) computes `s(y, y_true)` directly, while the uncertainty path estimates it indirectly from model internals.

* **Tool LLM Role**: The "Tool LLM" acts as a diagnostic model, analyzing both the input query and the output response to gauge confidence. Its icon (a cat) is distinct from the Target LLM's icon (a brain/gear), suggesting it may be a different, specialized model.

* **Feature Extraction**: The uncertainty estimator doesn't use the raw text but relies on abstract features: hidden layer activations and probability/entropy metrics.

* **Prediction Target**: The "Predict" arrow runs from the Uncertainty estimator to the quality score `s(y, y_true)`. Nothing in the diagram feeds back into generation, so this is better read as a prediction relationship than a feedback loop: the estimator's output is meant to approximate the quality score.
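The diagram does not say which probability/entropy features are used. As one plausible reading, the sketch below summarizes per-token output distributions into a few scalar features. The feature names, the input layout, and the toy distributions are all assumptions made for illustration.

```python
import math


def entropy(probs):
    # Shannon entropy in nats; zero-probability entries contribute nothing.
    return -sum(p * math.log(p) for p in probs if p > 0)


def probability_features(step_distributions, chosen_ids):
    """Summarize per-token distributions into a small feature dict.

    step_distributions: one probability list per response token (hypothetical input).
    chosen_ids: index of the token that actually appears at each step;
                its probability must be greater than zero.
    """
    token_probs = [dist[i] for dist, i in zip(step_distributions, chosen_ids)]
    entropies = [entropy(dist) for dist in step_distributions]
    n = len(token_probs)
    return {
        "mean_log_prob": sum(math.log(p) for p in token_probs) / n,
        "min_token_prob": min(token_probs),
        "mean_entropy": sum(entropies) / n,
        "max_entropy": max(entropies),
    }


# Toy distributions over a 3-token vocabulary, invented for illustration.
dists = [[0.9, 0.05, 0.05], [0.4, 0.3, 0.3]]
features = probability_features(dists, chosen_ids=[0, 0])
```

In the pipeline shown, features like these would be combined with hidden-layer activations to form the estimator's input. Here the second step has a flatter distribution, so it dominates `max_entropy` and sets `min_token_prob`.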

### Interpretation

This diagram depicts a framework for making LLM outputs more reliable. The core idea is that signals from inside a model (here, the Tool LLM's hidden states and output probabilities or entropy) can serve as a proxy for the correctness or quality of a response.
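To illustrate the proxy idea, the sketch below fits a one-feature linear model that maps an uncertainty feature to a quality score. It is a deliberately small stand-in: the estimator in the diagram also receives hidden-layer activations and its model class is not specified, and the training pairs here are invented numbers, not results.

```python
def fit_line(xs, ys):
    # Ordinary least squares for a single feature: y ~ slope * x + intercept.
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    var_x = sum((x - mean_x) ** 2 for x in xs)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = cov_xy / var_x
    return slope, mean_y - slope * mean_x


def predict(model, x):
    slope, intercept = model
    # Clamp to [0, 1] because ROUGE-L and BLEU scores lie in that range.
    return min(1.0, max(0.0, slope * x + intercept))


# Invented training pairs: (mean entropy from the Tool LLM, reference-based score).
mean_entropies = [0.1, 0.4, 0.8, 1.2]
quality_scores = [0.9, 0.7, 0.4, 0.2]
model = fit_line(mean_entropies, quality_scores)

# Estimated quality for a new response, using only its uncertainty feature.
estimated = predict(model, 0.3)
```

Training needs reference answers to compute the target scores, but prediction does not. On this toy data the fitted slope is negative, so higher entropy maps to a lower predicted score.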

* **How it works**: For a given query and response, the reference path asks "Is this answer similar to the correct one?" (Rouge-L/BLEU). The uncertainty path asks a different question: "Do the Tool LLM's internal signals, computed on this query and response, indicate confidence?" Low uncertainty is treated as evidence that the reference-based score would be high.

* **Significance**: This approach is valuable for deployed AI systems, where reference answers are not always available at inference time. The uncertainty estimator could flag responses that are fluent but receive a low predicted quality score, prompting a human review or a fallback mechanism. It moves beyond surface-level text matching to probe a model's internal state for signs of potential error or hallucination.

* **Notable Design**: The use of a separate "Tool LLM" implies that estimating uncertainty might be a task best handled by a model different from the one generating the answer, possibly to avoid bias or to leverage a model fine-tuned specifically for diagnostic tasks.
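The flagging behavior described under Significance could look like the sketch below. The threshold value, the routing labels, and the predicted scores are hypothetical and chosen only to show the control flow.

```python
def route_response(predicted_score, threshold=0.5):
    # threshold is a hypothetical cutoff; in practice it would be tuned on held-out data.
    return "accept" if predicted_score >= threshold else "needs_review"


# Invented (response, predicted quality score) pairs for illustration.
responses = [
    ("It's Paris.", 0.92),
    ("It's Lyon.", 0.18),
]
decisions = {text: route_response(score) for text, score in responses}
```

No reference answer is consulted at this stage. The decision rests entirely on the estimator's predicted score, so the usefulness of the flag depends on how well that prediction tracks the reference-based metric.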