TECHNICAL ASSET FINGERPRINT

805556ed92c08228ef6f9b09



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Chart: Answer Accuracy vs. Layer for Llama-3 Models

### Overview

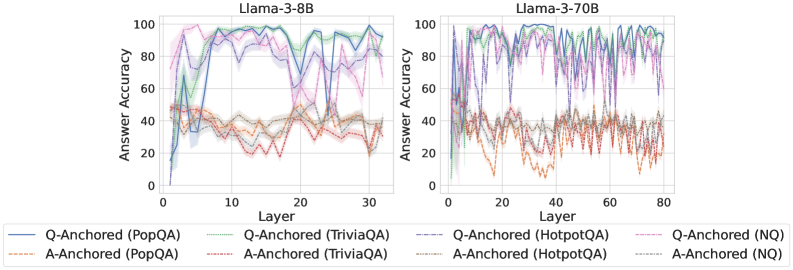

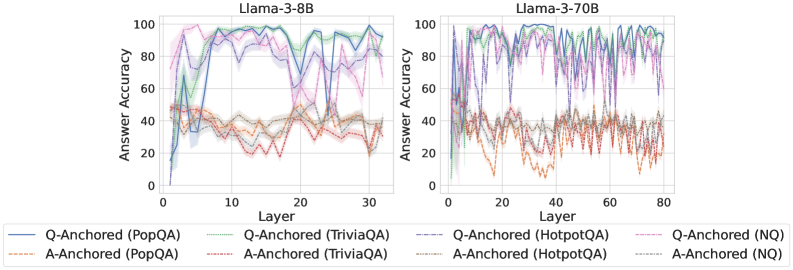

The image presents two line charts comparing the answer accuracy of the Llama-3-8B and Llama-3-70B models across layers. The x-axis represents the layer number, and the y-axis represents the answer accuracy. Each chart displays eight data series: the Q-Anchored and A-Anchored approaches for each of four question-answering datasets (PopQA, TriviaQA, HotpotQA, and NQ).
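The series count follows from crossing the two anchoring methods with the four datasets. A minimal sketch of that enumeration is given here; the constant and function names are illustrative assumptions, and only the label strings come from the legend described in this section.

```python
from itertools import product

# Label components as they appear in the chart legend.
ANCHORINGS = ["Q-Anchored", "A-Anchored"]
DATASETS = ["PopQA", "TriviaQA", "HotpotQA", "NQ"]


def series_labels():
    """Return legend labels grouped by dataset, Q-Anchored before A-Anchored."""
    return [
        f"{anchoring} ({dataset})"
        for dataset, anchoring in product(DATASETS, ANCHORINGS)
    ]


if __name__ == "__main__":
    labels = series_labels()
    print(len(labels))
    for label in labels:
        print(label)
```

Two anchoring methods times four datasets yields the eight entries listed in the legend.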

### Components/Axes

* **Titles:**

  * Left Chart: Llama-3-8B

  * Right Chart: Llama-3-70B

* **X-axis:**

  * Label: Layer

  * Left Chart: Scale from 0 to 30, with tick marks at intervals of 10.

  * Right Chart: Scale from 0 to 80, with tick marks at intervals of 20.

* **Y-axis:**

  * Label: Answer Accuracy

  * Scale: 0 to 100, with tick marks at intervals of 20.

* **Legend:** Located at the bottom of the image, it identifies the data series by color and line style:

  * Blue solid line: Q-Anchored (PopQA)

  * Brown dashed line: A-Anchored (PopQA)

  * Green dotted line: Q-Anchored (TriviaQA)

  * Brown dotted-dashed line: A-Anchored (TriviaQA)

  * Purple solid line: Q-Anchored (HotpotQA)

  * Brown solid line: A-Anchored (HotpotQA)

  * Pink dashed line: Q-Anchored (NQ)

  * Grey dotted line: A-Anchored (NQ)

### Detailed Analysis

**Left Chart: Llama-3-8B**

* **Q-Anchored (PopQA) - Blue solid line:** Starts at approximately 10% accuracy, rapidly increases to around 60% by layer 5, and then rises to approximately 90% by layer 10. It fluctuates between 80% and 100% for the remaining layers.

* **A-Anchored (PopQA) - Brown dashed line:** Starts at approximately 40% accuracy and remains relatively stable between 30% and 50% across all layers.

* **Q-Anchored (TriviaQA) - Green dotted line:** Starts at approximately 50% accuracy, increases to around 80% by layer 10, and then fluctuates between 70% and 90% for the remaining layers.

* **A-Anchored (TriviaQA) - Brown dotted-dashed line:** Starts at approximately 40% accuracy and remains relatively stable between 30% and 50% across all layers.

* **Q-Anchored (HotpotQA) - Purple solid line:** Starts at approximately 50% accuracy, increases to around 90% by layer 10, and then fluctuates between 80% and 100% for the remaining layers.

* **A-Anchored (HotpotQA) - Brown solid line:** Starts at approximately 40% accuracy and remains relatively stable between 30% and 50% across all layers.

* **Q-Anchored (NQ) - Pink dashed line:** Starts at approximately 50% accuracy, increases to around 90% by layer 10, and then fluctuates between 80% and 100% for the remaining layers.

* **A-Anchored (NQ) - Grey dotted line:** Starts at approximately 40% accuracy and remains relatively stable between 30% and 50% across all layers.

**Right Chart: Llama-3-70B**

* **Q-Anchored (PopQA) - Blue solid line:** Starts at approximately 50% accuracy, increases to around 90% by layer 10, and then fluctuates between 80% and 100% for the remaining layers.

* **A-Anchored (PopQA) - Brown dashed line:** Starts at approximately 40% accuracy and remains relatively stable between 20% and 50% across all layers.

* **Q-Anchored (TriviaQA) - Green dotted line:** Starts at approximately 60% accuracy, increases to around 90% by layer 10, and then fluctuates between 80% and 100% for the remaining layers.

* **A-Anchored (TriviaQA) - Brown dotted-dashed line:** Starts at approximately 40% accuracy and remains relatively stable between 20% and 50% across all layers.

* **Q-Anchored (HotpotQA) - Purple solid line:** Starts at approximately 60% accuracy, increases to around 90% by layer 10, and then fluctuates between 80% and 100% for the remaining layers.

* **A-Anchored (HotpotQA) - Brown solid line:** Starts at approximately 40% accuracy and remains relatively stable between 20% and 50% across all layers.

* **Q-Anchored (NQ) - Pink dashed line:** Starts at approximately 60% accuracy, increases to around 90% by layer 10, and then fluctuates between 80% and 100% for the remaining layers.

* **A-Anchored (NQ) - Grey dotted line:** Starts at approximately 40% accuracy and remains relatively stable between 20% and 50% across all layers.

### Key Observations

* For both models, the Q-Anchored approach consistently outperforms the A-Anchored approach across all datasets.

* The Q-Anchored lines (blue, green, purple, pink) show a rapid increase in accuracy in the initial layers, followed by fluctuations at a high accuracy level.

* The A-Anchored lines (brown dashed, brown dotted-dashed, brown solid, grey dotted) remain relatively stable at a lower accuracy level throughout all layers.

* The Llama-3-70B model generally shows slightly higher initial accuracy for the Q-Anchored approaches compared to the Llama-3-8B model.

* The fluctuations in accuracy for the Q-Anchored approaches appear more pronounced in the Llama-3-70B model.
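The observation that Q-Anchored outperforms A-Anchored could be quantified as a mean per-layer gap. The sketch below assumes the per-layer accuracies are available as plain lists; `mean_gap` is a hypothetical helper, and the example values are placeholders, not numbers read from the chart.

```python
from statistics import mean


def mean_gap(q_acc, a_acc):
    """Mean per-layer difference (Q-Anchored minus A-Anchored), in percentage points."""
    if len(q_acc) != len(a_acc):
        raise ValueError("both series must cover the same layers")
    return mean(q - a for q, a in zip(q_acc, a_acc))


# Hypothetical placeholder accuracies (percent), NOT taken from the figure.
q_example = [10.0, 60.0, 90.0, 85.0, 95.0]
a_example = [40.0, 35.0, 45.0, 40.0, 30.0]

if __name__ == "__main__":
    print(mean_gap(q_example, a_example))
```

A positive result means the Q-Anchored series sits above the A-Anchored series on average; a per-dataset comparison would call the helper once per dataset.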

### Interpretation

The data suggests that anchoring the question (Q-Anchored) is a more effective strategy for improving answer accuracy in Llama-3 models than anchoring the answer (A-Anchored). The rapid increase in accuracy for the Q-Anchored approaches in the initial layers indicates that the question information becomes usable for answering within the first few layers. The relatively stable and lower accuracy of the A-Anchored approaches suggests that anchoring the answer alone is not sufficient for achieving high accuracy.

The Llama-3-70B model, being larger, generally starts with a slightly higher accuracy for the Q-Anchored approaches, indicating that it has a better initial understanding of the question answering task. However, the more pronounced fluctuations in accuracy for the Llama-3-70B model could suggest that it is more sensitive to the specific characteristics of each layer or that it is exploring a wider range of potential solutions.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Line Chart: Answer Accuracy vs. Layer for Llama Models

### Overview

The image presents two line charts comparing answer accuracy on several question-answering (QA) datasets across the layers of two Llama models: Llama-3-8B and Llama-3-70B. The x-axis represents the layer number, and the y-axis represents the answer accuracy, ranging from 0 to 100. Each line represents one combination of QA dataset and anchoring method.

### Components/Axes

* **X-axis:** Layer (ranging from approximately 0 to 30 for Llama-3-8B and 0 to 80 for Llama-3-70B).

* **Y-axis:** Answer Accuracy (ranging from 0 to 100).

* **Left Chart Title:** Llama-3-8B

* **Right Chart Title:** Llama-3-70B

* **Legend:**

  * Q-Anchored (PopQA) - Blue line

  * A-Anchored (PopQA) - Light Brown line

  * Q-Anchored (TriviaQA) - Green line

  * A-Anchored (TriviaQA) - Purple line

  * Q-Anchored (HotpotQA) - Dashed Purple line

  * A-Anchored (HotpotQA) - Dashed Brown line

  * Q-Anchored (NQ) - Light Blue line

  * A-Anchored (NQ) - Orange line

### Detailed Analysis

**Llama-3-8B Chart:**

* **Q-Anchored (PopQA):** The blue line starts at approximately 10% accuracy at layer 0, rapidly increases to around 90% by layer 5, fluctuates between 80% and 95% for layers 5-25, and then decreases to around 85% by layer 30.

* **A-Anchored (PopQA):** The light brown line starts at approximately 20% accuracy at layer 0, increases to around 40% by layer 5, and remains relatively stable between 30% and 50% for the rest of the layers.

* **Q-Anchored (TriviaQA):** The green line starts at approximately 20% accuracy at layer 0, increases to around 90% by layer 5, fluctuates between 80% and 95% for layers 5-25, and then decreases to around 80% by layer 30.

* **A-Anchored (TriviaQA):** The purple line starts at approximately 20% accuracy at layer 0, increases to around 60% by layer 5, and remains relatively stable between 50% and 70% for the rest of the layers.

* **Q-Anchored (HotpotQA):** The dashed purple line starts at approximately 10% accuracy at layer 0, increases to around 80% by layer 5, fluctuates between 70% and 90% for layers 5-25, and then decreases to around 75% by layer 30.

* **A-Anchored (HotpotQA):** The dashed brown line starts at approximately 10% accuracy at layer 0, increases to around 40% by layer 5, and remains relatively stable between 30% and 50% for the rest of the layers.

* **Q-Anchored (NQ):** The light blue line starts at approximately 10% accuracy at layer 0, increases to around 70% by layer 5, fluctuates between 60% and 80% for layers 5-25, and then decreases to around 65% by layer 30.

* **A-Anchored (NQ):** The orange line starts at approximately 10% accuracy at layer 0, increases to around 30% by layer 5, and remains relatively stable between 20% and 40% for the rest of the layers.

**Llama-3-70B Chart:**

The trends are similar to the Llama-3-8B chart, but the fluctuations are more pronounced and the layer range is extended to 80. All lines exhibit similar oscillatory behavior, peaking around 80-100% accuracy at various points and dipping to lower values. The A-Anchored lines consistently remain lower in accuracy than the Q-Anchored lines across all datasets.

### Key Observations

* **Q-Anchored consistently outperforms A-Anchored:** Across all datasets and both models, the Q-Anchored lines generally exhibit higher answer accuracy than the A-Anchored lines.

* **Initial Accuracy Increase:** All lines show a significant increase in accuracy within the first 5 layers.

* **Fluctuations:** The accuracy fluctuates significantly across layers, particularly in the Llama-3-70B model.

* **Model Size Impact:** The Llama-3-70B model exhibits more pronounced fluctuations in accuracy compared to the Llama-3-8B model.
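"More pronounced fluctuations" can be made measurable with a simple layer-to-layer volatility score. The sketch below uses the mean absolute change between consecutive layers, which is one reasonable choice among several and is not a metric named in the source; `layer_volatility` and the example series are hypothetical.

```python
def layer_volatility(acc):
    """Mean absolute change in accuracy between consecutive layers."""
    if len(acc) < 2:
        raise ValueError("need at least two layers")
    steps = [abs(later - earlier) for earlier, later in zip(acc, acc[1:])]
    return sum(steps) / len(steps)


# Hypothetical placeholder series (percent), NOT taken from the figure.
smooth_example = [80.0, 82.0, 81.0, 83.0]
jagged_example = [80.0, 95.0, 70.0, 90.0]

if __name__ == "__main__":
    print(layer_volatility(smooth_example))
    print(layer_volatility(jagged_example))
```

Comparing the score for the same series in the 8B and 70B models would put a number on the visual impression, although the two models have different depths, so a per-layer average like this one is easier to compare than a total.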

### Interpretation

The data suggests that question anchoring (Q-Anchored) is a more effective method for improving answer accuracy in both Llama models compared to answer anchoring (A-Anchored). The initial rapid increase in accuracy across all datasets indicates that the early layers of the models are crucial for capturing basic question-answering capabilities. The subsequent fluctuations in accuracy suggest that deeper layers may be more sensitive to the specific characteristics of each dataset. The more pronounced fluctuations in the Llama-3-70B model could be attributed to its larger size and increased complexity, allowing it to capture more nuanced patterns but also making it more susceptible to overfitting or noise in the data. The consistent lower performance of A-Anchored methods suggests that focusing on the question itself, rather than the answer, is more beneficial for improving the model's ability to retrieve accurate information. The oscillatory behavior could be due to the model learning and unlearning patterns as it progresses through the layers, or it could be an artifact of the training process. Further investigation would be needed to determine the underlying cause of these fluctuations.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Charts: Llama-3 Model Answer Accuracy by Layer

### Overview

The image displays two side-by-side line charts comparing the "Answer Accuracy" of two Large Language Models (Llama-3-8B and Llama-3-70B) across their internal layers. The performance is measured on four question-answering datasets (PopQA, TriviaQA, HotpotQA, NQ) using two different methods: "Q-Anchored" and "A-Anchored". The charts visualize how accuracy evolves as information propagates through the model's layers.

### Components/Axes

* **Chart Titles:** "Llama-3-8B" (left chart), "Llama-3-70B" (right chart).

* **Y-Axis (Both Charts):** Label: "Answer Accuracy". Scale: 0 to 100, with major tick marks at 0, 20, 40, 60, 80, 100.

* **X-Axis (Left Chart - Llama-3-8B):** Label: "Layer". Scale: 0 to 30, with major tick marks at 0, 10, 20, 30.

* **X-Axis (Right Chart - Llama-3-70B):** Label: "Layer". Scale: 0 to 80, with major tick marks at 0, 20, 40, 60, 80.

* **Legend (Bottom, spanning both charts):** Contains 8 entries, each with a unique color and line style.

  * **Q-Anchored Series (Solid Lines):**

    * `Q-Anchored (PopQA)`: Blue solid line.

    * `Q-Anchored (TriviaQA)`: Green solid line.

    * `Q-Anchored (HotpotQA)`: Purple solid line.

    * `Q-Anchored (NQ)`: Pink solid line.

  * **A-Anchored Series (Dashed Lines):**

    * `A-Anchored (PopQA)`: Orange dashed line.

    * `A-Anchored (TriviaQA)`: Red dashed line.

    * `A-Anchored (HotpotQA)`: Brown dashed line.

    * `A-Anchored (NQ)`: Gray dashed line.

* **Data Representation:** Each series is plotted as a line with a shaded region around it, likely representing confidence intervals or variance across multiple runs.
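The description only guesses at what the shaded regions mean. As an illustration of one common construction, the sketch below computes a per-layer mean with a band of one sample standard deviation across runs. This is an assumption, not something the figure confirms; `accuracy_band` and the example runs are hypothetical.

```python
from statistics import mean, stdev


def accuracy_band(runs):
    """Per-layer (mean, lower, upper) with a band of +/- one sample standard deviation.

    `runs` is a list of per-run accuracy lists, each with one value per layer.
    """
    band = []
    for layer_values in zip(*runs):
        center = mean(layer_values)
        # A standard deviation needs at least two runs; fall back to a zero-width band.
        spread = stdev(layer_values) if len(layer_values) > 1 else 0.0
        band.append((center, center - spread, center + spread))
    return band


# Hypothetical placeholder runs (percent), NOT taken from the figure:
# three runs, two layers each.
example_runs = [[10.0, 50.0], [20.0, 70.0], [30.0, 60.0]]

if __name__ == "__main__":
    for layer, (center, lower, upper) in enumerate(accuracy_band(example_runs)):
        print(layer, center, lower, upper)
```

If the original plot used confidence intervals instead, the spread term would be replaced by the appropriate interval half-width.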

### Detailed Analysis

**Llama-3-8B Chart (Left):**

* **Q-Anchored Lines (Solid):** All four lines show a rapid increase in accuracy from layer 0 to approximately layer 5-7, reaching a plateau between ~80-100% accuracy. They exhibit significant volatility, with sharp dips and recoveries throughout the layers. The `Q-Anchored (TriviaQA)` (green) and `Q-Anchored (PopQA)` (blue) lines frequently reach the highest accuracy values, often near 100%. The `Q-Anchored (NQ)` (pink) line shows a notable dip around layer 20.

* **A-Anchored Lines (Dashed):** These lines cluster in a lower accuracy band, primarily between 20% and 50%. They are more stable than the Q-Anchored lines but still show fluctuations. The `A-Anchored (PopQA)` (orange) and `A-Anchored (TriviaQA)` (red) lines are generally at the top of this cluster, while `A-Anchored (NQ)` (gray) is often at the bottom.

**Llama-3-70B Chart (Right):**

* **Q-Anchored Lines (Solid):** Similar to the 8B model, these lines rise sharply in the early layers (0-10) to a high-accuracy plateau (80-100%). The volatility is even more pronounced, with frequent, deep oscillations across all layers. The lines for different datasets are tightly interwoven, making it difficult to declare a consistent top performer, though `Q-Anchored (TriviaQA)` (green) and `Q-Anchored (HotpotQA)` (purple) often spike highest.

* **A-Anchored Lines (Dashed):** These lines again occupy a lower accuracy range, roughly 10% to 50%. The `A-Anchored (PopQA)` (orange) line shows a distinct downward trend from layer 0 to about layer 20 before stabilizing. The other A-Anchored lines fluctuate within their band without a clear directional trend.

**Cross-Model Comparison:**

* The fundamental pattern is consistent: Q-Anchored methods dramatically outperform A-Anchored methods across all datasets and both model sizes.

* The larger model (70B) operates over more layers (80 vs. 30) and exhibits greater volatility in the Q-Anchored accuracy scores.

* The performance gap between Q-Anchored and A-Anchored methods appears slightly wider in the 70B model.

### Key Observations

1. **Method Dominance:** The most striking pattern is the clear and consistent superiority of the Q-Anchored approach over the A-Anchored approach for all tested datasets.

2. **Layer Sensitivity:** Accuracy is highly sensitive to the specific layer within the model, especially for Q-Anchored methods, as shown by the jagged lines.

3. **Early Layer Convergence:** Both models achieve near-peak accuracy for Q-Anchored methods within the first 10-20% of their layers.

4. **Dataset Variability:** While Q-Anchored is always better, the relative ranking of datasets (e.g., TriviaQA vs. NQ) varies between layers and models, suggesting dataset-specific characteristics interact with the model's internal processing.

5. **Stability Contrast:** A-Anchored methods, while lower performing, show less dramatic layer-to-layer variance than Q-Anchored methods.
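The "early layer convergence" observation can be checked by locating the first layer whose accuracy reaches a chosen fraction of the series' peak and expressing it as a fraction of model depth. The sketch below is one way to do that; the 90% threshold, the `convergence_fraction` helper, and the example series are all assumptions for illustration.

```python
def convergence_fraction(acc, tolerance=0.9):
    """Fraction of depth at which accuracy first reaches `tolerance` times its peak."""
    if not acc:
        raise ValueError("series is empty")
    threshold = tolerance * max(acc)
    # The peak itself always satisfies the threshold, so a match is guaranteed
    # for non-negative accuracies and tolerance <= 1.
    first_layer = next(i for i, value in enumerate(acc) if value >= threshold)
    return first_layer / (len(acc) - 1) if len(acc) > 1 else 0.0


# Hypothetical placeholder series (percent) over layers 0..10, NOT taken from the figure.
example_series = [10.0, 40.0, 85.0, 95.0, 90.0, 92.0, 88.0, 91.0, 93.0, 90.0, 89.0]

if __name__ == "__main__":
    print(convergence_fraction(example_series))
```

Normalizing by depth makes the result comparable between the 8B and 70B models, which have different layer counts.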

### Interpretation

The data suggests that the **"anchoring" strategy is a critical factor** in determining the answer accuracy extracted from intermediate layers of Llama-3 models. The Q-Anchored method (likely using the question as a prompt or reference) is far more effective at eliciting correct answers from the model's internal representations than the A-Anchored method (likely using the answer itself).

The high volatility in Q-Anchored accuracy indicates that **different layers specialize in different types of knowledge or reasoning steps**. The sharp dips could represent layers where information is being transformed or re-represented in a way that is temporarily less directly accessible for answer extraction. The early plateau suggests that the core information needed to answer these factual questions is encoded relatively early in the network's processing pipeline.

The greater volatility in the larger 70B model might reflect a more complex and specialized internal organization, where knowledge is distributed across more layers, leading to more pronounced peaks and valleys in accessibility. The consistent underperformance of A-Anchored methods implies that using the answer as an anchor does not effectively tap into the model's knowledge retrieval mechanism in the intermediate layers, possibly because it creates a mismatch with how the model naturally processes and stores information. This has practical implications for techniques like model probing or interpretability, highlighting the importance of choosing the correct prompt or anchor to query a model's internal state.


EXPERT: nemotron-free VERSION 2

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: Answer Accuracy Across Layers for Llama-3-8B and Llama-3-70B Models

### Overview

The image contains two side-by-side line graphs comparing answer accuracy across neural network layers for two versions of the Llama-3 model (8B and 70B parameters). Each graph tracks performance across multiple anchoring methods (Q-Anchored and A-Anchored) and datasets (PopQA, TriviaQA, HotpotQA, NQ). The graphs show significant variability in accuracy across layers, with distinct patterns emerging between model sizes.

### Components/Axes

- **X-axis (Layer)**:

  - Llama-3-8B: 0–30 layers

  - Llama-3-70B: 0–80 layers

- **Y-axis (Answer Accuracy)**: 0–100% scale

- **Legends**:

  - **Line styles/colors**:

    - Solid lines: Q-Anchored methods

    - Dashed lines: A-Anchored methods

    - Colors correspond to datasets:

      - Blue: PopQA

      - Green: TriviaQA

      - Purple: HotpotQA

      - Red: NQ

  - Legend positioned at bottom center, spanning both charts

### Detailed Analysis

#### Llama-3-8B (Left Chart)

- **Q-Anchored (PopQA)**: Starts at ~80% accuracy, drops sharply to ~20% at layer 5, then fluctuates between 40–80% with peaks at layers 10, 15, and 25.

- **A-Anchored (PopQA)**: Begins at ~50%, dips to ~30% at layer 5, then stabilizes between 30–50% with minor oscillations.

- **Q-Anchored (TriviaQA)**: Peaks at ~90% at layer 10, crashes to ~10% at layer 15, then recovers to ~70% by layer 30.

- **A-Anchored (TriviaQA)**: Starts at ~60%, drops to ~40% at layer 10, then stabilizes between 40–60%.

- **Q-Anchored (HotpotQA)**: Sharp drop from ~70% to ~20% at layer 5, followed by erratic fluctuations between 30–70%.

- **A-Anchored (HotpotQA)**: Starts at ~50%, dips to ~30% at layer 5, then stabilizes between 30–50%.

- **Q-Anchored (NQ)**: Begins at ~60%, drops to ~20% at layer 5, then fluctuates between 40–60%.

- **A-Anchored (NQ)**: Starts at ~50%, dips to ~30% at layer 5, then stabilizes between 30–50%.

#### Llama-3-70B (Right Chart)

- **Q-Anchored (PopQA)**: Starts at ~90%, drops to ~30% at layer 10, then fluctuates between 50–90% with peaks at layers 20, 40, and 60.

- **A-Anchored (PopQA)**: Begins at ~60%, dips to ~40% at layer 10, then stabilizes between 40–60%.

- **Q-Anchored (TriviaQA)**: Peaks at ~95% at layer 20, crashes to ~15% at layer 30, then recovers to ~80% by layer 70.

- **A-Anchored (TriviaQA)**: Starts at ~70%, dips to ~50% at layer 20, then stabilizes between 50–70%.

- **Q-Anchored (HotpotQA)**: Sharp drop from ~80% to ~25% at layer 10, followed by erratic fluctuations between 40–80%.

- **A-Anchored (HotpotQA)**: Starts at ~60%, dips to ~40% at layer 10, then stabilizes between 40–60%.

- **Q-Anchored (NQ)**: Begins at ~70%, drops to ~20% at layer 10, then fluctuates between 50–70%.

- **A-Anchored (NQ)**: Starts at ~60%, dips to ~40% at layer 10, then stabilizes between 40–60%.

### Key Observations

1. **Model Size Impact**: The 70B model shows more pronounced fluctuations in accuracy across layers compared to the 8B model.

2. **Anchoring Method Differences**:

   - Q-Anchored methods generally start with higher accuracy but experience sharper drops in early layers.

   - A-Anchored methods exhibit more stability but lower baseline accuracy.

3. **Dataset Variability**:

   - HotpotQA and NQ datasets show the most erratic patterns, particularly in the 70B model.

   - TriviaQA demonstrates the highest peaks in accuracy for both models.

4. **Confidence Intervals**: Shaded regions (not explicitly labeled) suggest wider uncertainty in the 70B model’s predictions.

### Interpretation

The data suggests that larger models (70B) exhibit greater layer-to-layer variability in answer accuracy, potentially due to increased complexity or overfitting. Q-Anchored methods outperform A-Anchored methods in early layers but become less reliable in deeper layers for certain datasets. The TriviaQA dataset consistently shows the highest accuracy peaks, indicating it may be better aligned with the models’ training data. The sharp drops in accuracy for Q-Anchored methods at specific layers (e.g., layer 5 in 8B, layer 10 in 70B) could reflect architectural bottlenecks or dataset-specific challenges. The stability of A-Anchored methods across layers implies they may be more robust to model size changes but sacrifice peak performance. Further investigation into dataset-model alignment and anchoring strategy trade-offs is warranted.
