## Flowchart: LLM-based QA Agent Architecture

### Overview

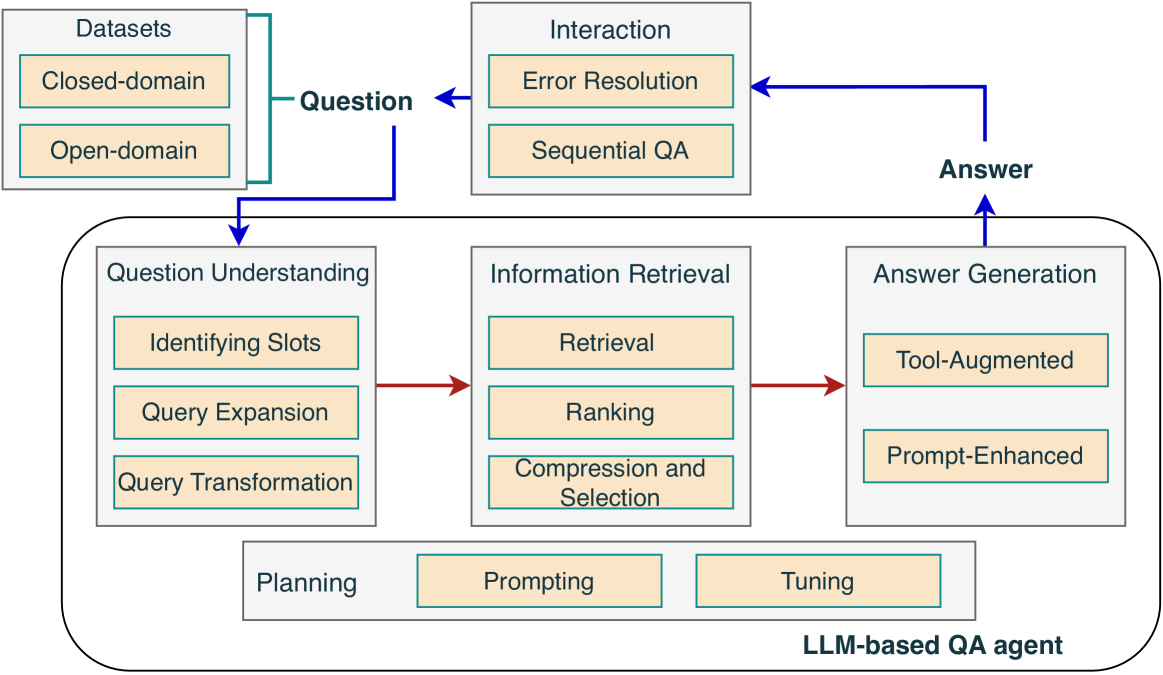

The diagram illustrates the workflow of an LLM-based question-answering (QA) agent, detailing its components, data flow, and iterative processes. It emphasizes modularity, feedback loops, and integration of closed-domain/open-domain datasets.

### Components

1. **Datasets**:

   - **Closed-domain** and **Open-domain** (input sources).

2. **Question Understanding**:

   - Sub-components: **Identifying Slots**, **Query Expansion**, **Query Transformation**.

3. **Information Retrieval**:

   - Sub-components: **Retrieval**, **Ranking**, **Compression and Selection**.

4. **Interaction**:

   - Sub-components: **Error Resolution**, **Sequential QA**.

5. **Answer Generation**:

   - Sub-components: **Tool-Augmented**, **Prompt-Enhanced**.

6. **Planning, Prompting, Tuning**:

   - Positioned at the bottom as part of the LLM-based QA agent.
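The component hierarchy listed above can be written down as a small lookup structure. This is only an illustrative sketch: the names `COMPONENTS`, `AGENT_TECHNIQUES`, and `parent_of` are hypothetical and do not come from the diagram; only the component labels do.

```python
# Hypothetical registry mirroring the component list in this section.
# Keys are the major components; values are their sub-components.
COMPONENTS = {
    "Datasets": ["Closed-domain", "Open-domain"],
    "Question Understanding": [
        "Identifying Slots",
        "Query Expansion",
        "Query Transformation",
    ],
    "Information Retrieval": ["Retrieval", "Ranking", "Compression and Selection"],
    "Interaction": ["Error Resolution", "Sequential QA"],
    "Answer Generation": ["Tool-Augmented", "Prompt-Enhanced"],
}

# Shown at the bottom of the diagram as part of the LLM-based QA agent.
AGENT_TECHNIQUES = ["Planning", "Prompting", "Tuning"]


def parent_of(sub_component):
    """Return the major component that contains sub_component, or None."""
    for parent, subs in COMPONENTS.items():
        if sub_component in subs:
            return parent
    return None
```

For example, `parent_of("Ranking")` looks up which major component the **Ranking** box belongs to.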

### Flow and Relationships

- **Data Flow**:

  - Datasets → Question → Question Understanding → Information Retrieval → Answer Generation → Interaction → Answer.

  - Feedback loops: **Interaction** routes through its sub-components **Error Resolution** and **Sequential QA**, which lead back to **Answer**.

- **Modular Design**:

  - Each major component (e.g., Question Understanding) contains sub-tasks (e.g., Identifying Slots).

  - **Planning, Prompting, Tuning** are foundational to the LLM agent’s operation.
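The data flow above can be sketched as a chain of stage functions. This is a minimal toy under stated assumptions, not the system in the diagram: every name (`QAState`, `CORPUS`, `understand`, `retrieve`, `generate`, `answer_question`) is hypothetical, word overlap stands in for real retrieval and ranking, and the generation step simply returns the top passage instead of calling an LLM.

```python
from dataclasses import dataclass, field

# Tiny in-memory corpus standing in for a closed- or open-domain dataset.
CORPUS = [
    "Paris is the capital of France.",
    "Berlin is the capital of Germany.",
]


@dataclass
class QAState:
    question: str
    query: str = ""
    passages: list = field(default_factory=list)
    answer: str = ""
    history: list = field(default_factory=list)


def understand(state):
    # Placeholder for Question Understanding: normalise the question text.
    state.query = state.question.lower().strip(" ?")
    return state


def retrieve(state, top_k=1):
    # Placeholder for Information Retrieval: rank by word overlap, keep top_k.
    terms = set(state.query.split())

    def overlap(passage):
        return len(terms & set(passage.lower().strip(".").split()))

    state.passages = sorted(CORPUS, key=overlap, reverse=True)[:top_k]
    return state


def generate(state):
    # Placeholder for Answer Generation: no LLM call, just the best passage.
    state.answer = state.passages[0] if state.passages else ""
    return state


def answer_question(question, max_rounds=2):
    # Interaction is reduced to a retry loop: widen retrieval if no answer yet.
    state = QAState(question=question)
    for round_no in range(1, max_rounds + 1):
        state = generate(retrieve(understand(state), top_k=round_no))
        state.history.append(state.answer)
        if state.answer:
            break
    return state
```

Calling `answer_question("What is the capital of France?")` runs the stages in the order shown in the diagram and records each attempted answer in `state.history`.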

### Key Observations

1. **Iterative Refinement**:

   - The **Interaction** stage routes through **Error Resolution** and **Sequential QA**, enabling error correction and follow-up (multi-turn) questions.

2. **Domain Flexibility**:

   - Datasets are split into **Closed-domain** (restricted to a specific subject area) and **Open-domain** (unrestricted topics).

3. **Hybrid Answer Generation**:

   - Lists both **Tool-Augmented** (external tools) and **Prompt-Enhanced** (improved prompt design) methods.

4. **Optimization Layers**:

   - **Planning, Prompting, Tuning** sit beneath the other stages, which suggests they are techniques applied across the LLM agent rather than a separate pipeline step.
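One way to read the Hybrid Answer Generation observation is as a router that tries a tool first and otherwise falls back to a prompt built from retrieved context. The sketch below assumes that reading; the diagram does not specify how the two methods are combined. All names (`calculator_tool`, `build_prompt`, `generate_answer`) are hypothetical, and `llm` is any callable that maps a prompt string to a reply string.

```python
import re


def calculator_tool(question):
    """Tool-augmented path: evaluate a simple 'a op b' integer expression."""
    match = re.search(r"(\d+)\s*([+\-*])\s*(\d+)", question)
    if match is None:
        return None
    a, op, b = int(match.group(1)), match.group(2), int(match.group(3))
    return str({"+": a + b, "-": a - b, "*": a * b}[op])


def build_prompt(question, passages):
    """Prompt-enhanced path: wrap the question with retrieved context."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}\n"
        "Answer:"
    )


def generate_answer(question, passages, llm):
    # Prefer the tool when it applies; otherwise ask the (assumed) LLM callable.
    tool_result = calculator_tool(question)
    if tool_result is not None:
        return tool_result
    return llm(build_prompt(question, passages))
```

Passing the model in as a plain callable keeps the sketch independent of any particular LLM library.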

### Interpretation

The diagram presents a modular QA pipeline rather than a single monolithic model. Distinguishing **Closed-domain** from **Open-domain** datasets allows processing strategies tailored to each. The **Interaction** stage lets the agent correct errors and handle follow-up questions instead of treating every question in isolation. Listing both **Tool-Augmented** and **Prompt-Enhanced** methods under answer generation indicates a hybrid approach that draws on external tools as well as prompt design. The **Planning, Prompting, Tuning** layer indicates that these LLM techniques support the whole agent rather than a single stage.

No numerical data or trends are present; the focus is on architectural design and workflow logic.