# Technical Document Extraction: Neural Architecture and Routing Decisions

## Diagram Analysis: Mixture-of-Depths Architecture

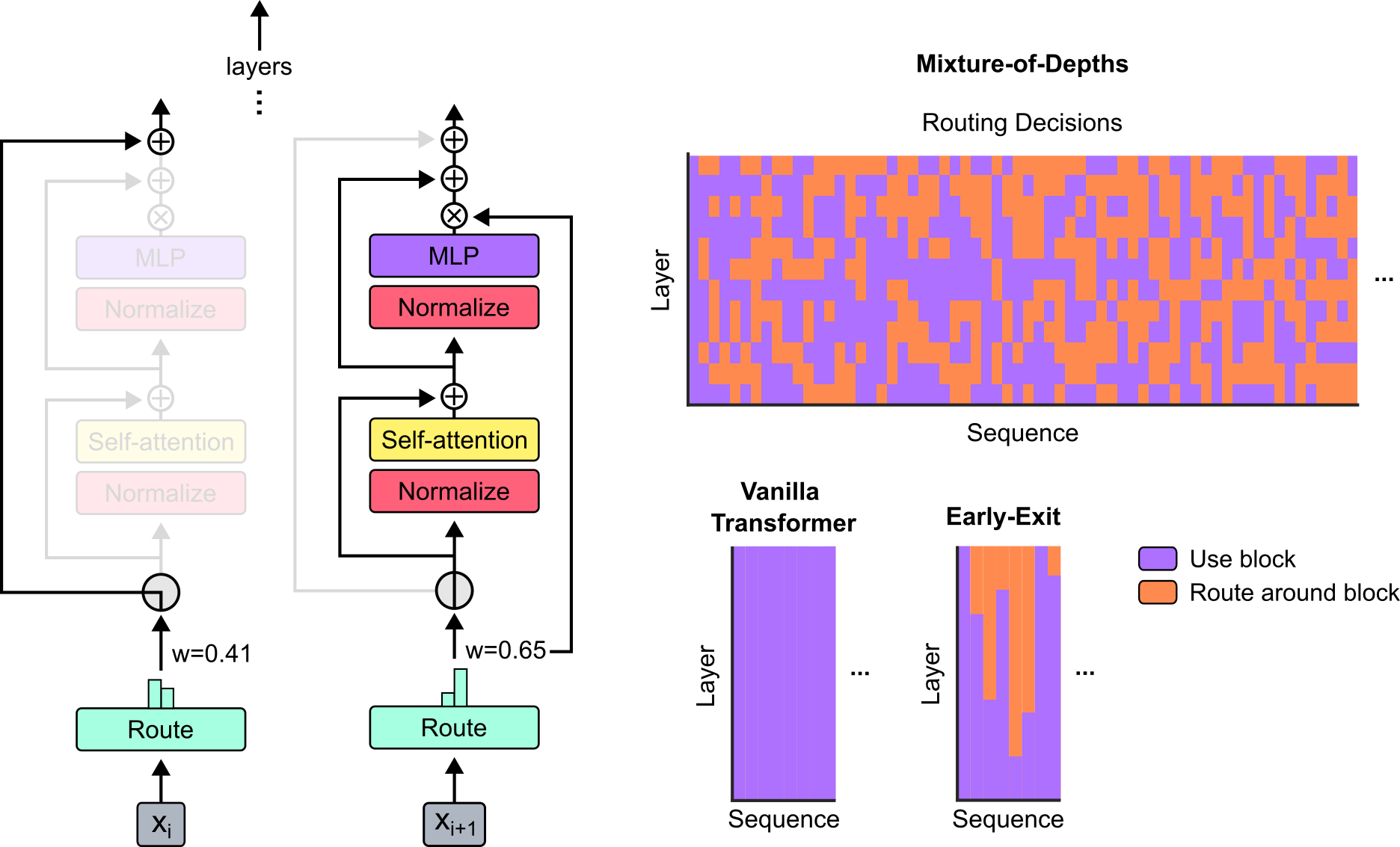

### Left Diagram: Component Flow

1. **Inputs**
   - `X_i` (left) and `X_{i+1}` (right)
   - Position: bottom of the diagram

2. **Left Pathway (w=0.41)**
   - **Layer Stack**:
     - MLP (purple)
     - Normalize (pink)
     - Self-attention (yellow)
     - Normalize (pink)
   - **Output**: Route (cyan) → `X_{i+1}`

3. **Right Pathway (w=0.65)**
   - **Layer Stack**:
     - MLP (purple)
     - Normalize (pink)
     - Self-attention (yellow)
     - Normalize (pink)
   - **Output**: Route (cyan) → `X_{i+1}`

4. **Routing Logic**
   - Weights:
     - Left pathway: `w=0.41`
     - Right pathway: `w=0.65`
   - Position: each weight is labeled at the bottom of its pathway
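The component flow above can be sketched as a single Mixture-of-Depths layer in plain Python. This is a minimal, hypothetical sketch and not the figure's implementation. Several assumptions are not taken from the figure:

- The stack is read as pre-normalization (Normalize → Self-attention, then Normalize → MLP), with a residual connection around each sublayer.
- Normalization is RMS-style.
- Attention uses identity query/key/value projections, and a ReLU stands in for the MLP.
- The two weights are per-token router weights, and routing is top-k with a hypothetical `capacity` parameter. Selected tokens receive the block's update scaled by their weight; all other tokens route around the block unchanged.

All function names are illustrative.

```python
import math

def normalize(x, eps=1e-6):
    # RMS-style normalization (assumption: the figure only says "Normalize")
    rms = math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [v / rms for v in x]

def self_attention(xs):
    # Single-head dot-product attention with identity Q/K/V projections (simplification)
    d = len(xs[0])
    out = []
    for q in xs:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in xs]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        probs = [e / total for e in exps]
        out.append([sum(p * v[j] for p, v in zip(probs, xs)) for j in range(d)])
    return out

def mlp(x):
    # Hypothetical stand-in for the MLP: elementwise ReLU, no learned weights
    return [max(0.0, v) for v in x]

def block(xs):
    # Assumed pre-norm ordering: Normalize -> Self-attention (+ residual),
    # then Normalize -> MLP (+ residual)
    attn = self_attention([normalize(x) for x in xs])
    xs = [[a + b for a, b in zip(x, y)] for x, y in zip(xs, attn)]
    return [[a + b for a, b in zip(x, mlp(normalize(x)))] for x in xs]

def mod_layer(xs, router_weights, capacity):
    # Hypothetical top-k routing: only the `capacity` highest-weighted tokens use the block
    ranked = sorted(range(len(xs)), key=lambda i: router_weights[i], reverse=True)
    chosen = sorted(ranked[:capacity])
    processed = block([xs[i] for i in chosen])  # attention only sees the chosen tokens
    out = [list(x) for x in xs]  # unchosen tokens route around the block unchanged
    for i, y in zip(chosen, processed):
        # Residual plus the block's update (output minus input), scaled by the router weight
        out[i] = [x + router_weights[i] * (b - x) for x, b in zip(xs[i], y)]
    return out, chosen

# Two tokens carrying the figure's weights; capacity=1 is an illustrative choice
xs = [[1.0, 2.0], [0.5, -1.0]]
out, chosen = mod_layer(xs, [0.41, 0.65], capacity=1)
print(chosen)  # [1]
```

Under these assumptions, the 0.65-weighted token is processed and the 0.41-weighted token passes through unchanged. With `capacity=2`, both would be processed. The figure itself does not state which input uses the block.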

### Right Diagram: Routing Decisions Heatmap

1. **Title**: "Mixture-of-Depths Routing Decisions"

2. **Axes**:
   - X-axis: "Sequence"
   - Y-axis: "Layer"

3. **Color Coding** (per the legend):
   - Purple: "Use block"
   - Orange: "Route around block"

4. **Legend**:
   - Position: bottom right
   - Legend colors match the heatmap squares exactly
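A layer-by-sequence grid like this heatmap can be produced from per-layer router weights. The sketch below is illustrative only, and several parts of it are assumptions:

- The weights are made-up toy values, not values read from the figure.
- The routing rule is top-k selection with a hypothetical `capacity` parameter.
- `#` and `.` are text stand-ins for the purple ("Use block") and orange ("Route around block") cells.

```python
USE_BLOCK = "#"     # stands in for purple ("Use block")
ROUTE_AROUND = "."  # stands in for orange ("Route around block")

def routing_decisions(weights, capacity):
    # weights[layer][position]; True means the token uses the block at that layer
    grid = []
    for layer_weights in weights:
        order = sorted(range(len(layer_weights)), key=lambda i: layer_weights[i], reverse=True)
        top = set(order[:capacity])
        grid.append([pos in top for pos in range(len(layer_weights))])
    return grid

def render(grid):
    # One text row per layer (Y-axis), one character per sequence position (X-axis)
    return "\n".join(
        f"layer {n}: " + "".join(USE_BLOCK if used else ROUTE_AROUND for used in row)
        for n, row in enumerate(grid)
    )

toy_weights = [
    [0.9, 0.1, 0.5, 0.3],  # layer 0 (toy values)
    [0.2, 0.8, 0.4, 0.7],  # layer 1 (toy values)
]
grid = routing_decisions(toy_weights, capacity=2)
print(render(grid))
# layer 0: #.#.
# layer 1: .#.#
```

Because each layer selects its own top-k positions, the pattern of used and skipped cells can differ from row to row, as it does in the heatmap.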

## Component Isolation

### Left Diagram Regions

1. **Header**: "layers" label with an arrow (top)

2. **Main Diagram**: dual-pathway architecture with layer stacks

3. **Footer**: inputs (`X_i`, `X_{i+1}`) and routing weights

### Right Diagram Regions

1. **Header**: "Mixture-of-Depths Routing Decisions"

2. **Main Chart**: Heatmap visualization

3. **Footer**: Legend

## Trend Verification

- **Left Pathway**:
  - Visual trend: same layer stack as the right pathway, with the lower routing weight (0.41)
- **Right Pathway**:
  - Visual trend: same layer stack as the left pathway, with the higher routing weight (0.65)
- **Heatmap**:
  - No numerical trend; each cell records a categorical routing decision (use block or route around block)

## Data Extraction

### Legend Cross-Reference

| Color  | Label              | Matches Heatmap Squares |
|--------|--------------------|-------------------------|
| Purple | Use block          | Confirmed               |
| Orange | Route around block | Confirmed               |

### Axis Markers

- Left Diagram (schematic; directions rather than labeled axes):
  - Horizontal: inputs (`X_i`, `X_{i+1}`)
  - Vertical: layer stacking
- Right Diagram:
  - X-axis: Sequence
  - Y-axis: Layer

## Language Analysis

- **Primary Language**: English

- **No additional languages detected**

## Critical Observations

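1. The differing routing weights (0.41 vs 0.65) suggest adaptive computation: each input receives its own weight, so inputs need not be processed identically.

2. The heatmap shows non-uniform routing: whether a cell uses the block or routes around it varies across both layers and sequence positions.

3. In each pathway, both the MLP and the self-attention component are paired with an adjacent normalization layer.

4. Both pathways contain the same components (self-attention and MLP, each with a normalization layer); only the routing weight differs.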
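Observations 1 and 2 can be made concrete by summarizing a routing-decision grid of layers × sequence positions, where `True` means "use block". The sketch assumes such a grid is available. The values below are toy data, not read from the figure, and the function names are hypothetical.

```python
def effective_depth(grid):
    # For each sequence position, count the layers whose block it actually uses
    return [sum(row[pos] for row in grid) for pos in range(len(grid[0]))]

def executed_fraction(grid):
    # Share of (layer, position) block applications that run instead of being skipped
    cells = sum(len(row) for row in grid)
    return sum(sum(row) for row in grid) / cells

toy_grid = [
    [True, False, True, False],   # layer 0
    [True, True, False, False],   # layer 1
    [True, False, False, True],   # layer 2
]
print(effective_depth(toy_grid))    # [3, 1, 1, 1]
print(executed_fraction(toy_grid))  # 0.5
```

Unequal per-position depths are one way to read "adaptive computation" here. An executed fraction below 1.0 means part of the block computation is skipped.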

## Final Notes

- All textual elements in both diagrams have been transcribed.

- Legend colors and axis labels were checked against their positions in the figure.

- The figure contains no data tables; all diagram components are transcribed above.