TECHNICAL ASSET FINGERPRINT

811c0e69681c210614e1f96a

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: CoE Tuning and R-GRPO Stages

### Overview

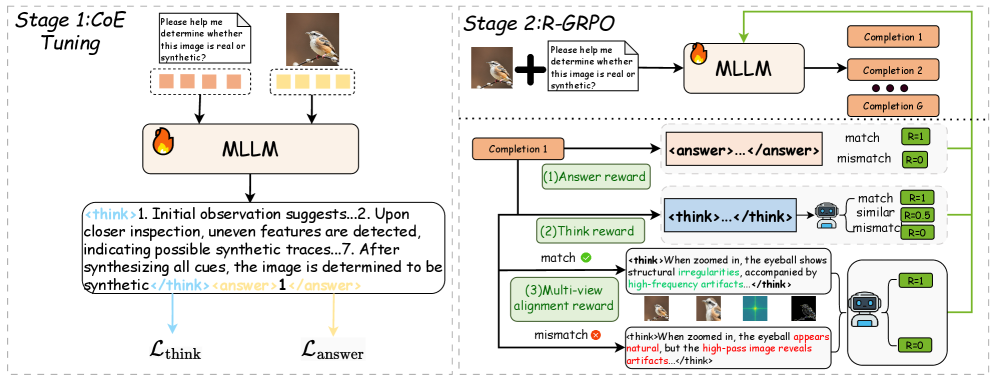

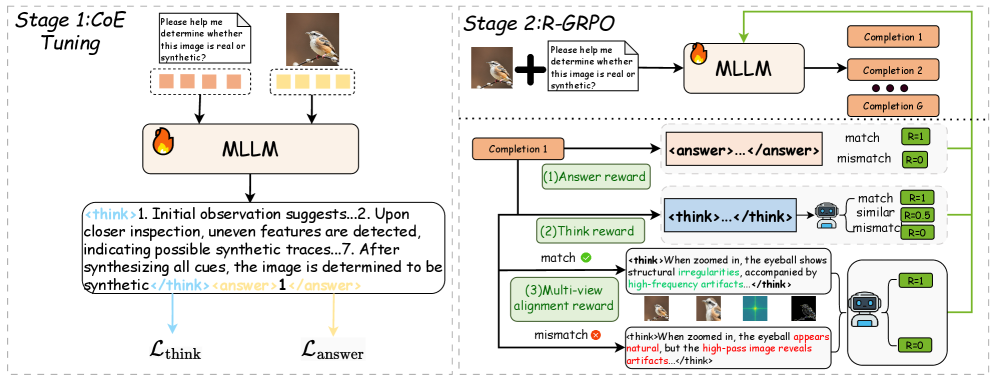

The image illustrates a two-stage process: CoE (Chain of Evidence) Tuning followed by R-GRPO, a variant of GRPO (Group Relative Policy Optimization). The diagram outlines the flow of information and the training signals used in each stage (losses in Stage 1, rewards in Stage 2) to teach an MLLM to determine whether an image is real or synthetic.

### Components/Axes

**Stage 1: CoE Tuning (Left Side)**

* **Title:** Stage 1: CoE Tuning

* **Input:** Two images (a bird on a branch and a prompt "Please help me determine whether this image is real or synthetic?"), each associated with a set of colored squares (orange and yellow).

* **Process:** The images and prompt are fed into an MLLM (Multi-modal Large Language Model).

* **Output:** The MLLM generates a text output containing both "think" and "answer" components.

* **Loss Functions:** L_think and L_answer are associated with the "think" and "answer" components, respectively.

**Stage 2: R-GRPO (Right Side)**

* **Title:** Stage 2: R-GRPO

* **Input:** An image (a bird on a branch) combined with the prompt "Please help me determine whether this image is real or synthetic?".

* **Process:** The combined input is fed into an MLLM, which generates multiple completions (Completion 1, Completion 2, ..., Completion G).

* **Reward Mechanisms:**

    * **(1) Answer Reward:** Compares the "answer" component of a completion to a ground truth.

        * Match: R = 1

        * Mismatch: R = 0

    * **(2) Think Reward:** Evaluates the "think" component of a completion.

        * Match: R = 1

        * Similar: R = 0.5

        * Mismatch: R = 0

    * **(3) Multi-view Alignment Reward:** Compares the "think" component with visual evidence.

        * Match (example: structural irregularities and high-frequency artifacts): R = 1

        * Mismatch (example: natural appearance but high-pass artifacts): R = 0
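The answer reward described above is a binary comparison, which can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the function names `extract_answer` and `answer_reward` are hypothetical, and scoring a completion with no `<answer>` block as 0 is an assumption.

```python
import re
from typing import Optional

# Capture the text between <answer> tags, ignoring surrounding whitespace.
ANSWER_RE = re.compile(r"<answer>\s*(.*?)\s*</answer>", re.DOTALL)


def extract_answer(completion: str) -> Optional[str]:
    """Return the text inside the first <answer>...</answer> block, or None."""
    match = ANSWER_RE.search(completion)
    return match.group(1) if match else None


def answer_reward(completion: str, ground_truth: str) -> float:
    """Return 1.0 when the extracted answer equals the ground truth, else 0.0.

    Assumption: a completion without an <answer> block counts as a mismatch.
    """
    predicted = extract_answer(completion)
    if predicted is None:
        return 0.0
    return 1.0 if predicted == ground_truth.strip() else 0.0


print(answer_reward("<think>...</think><answer>1</answer>", "1"))
print(answer_reward("<think>...</think><answer>0</answer>", "1"))
```

Exact string comparison is enough here because the diagram shows a single-token label inside the answer tags.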

### Detailed Analysis

**Stage 1: CoE Tuning**

* The input images are accompanied by colored squares. The left image has four orange squares, and the right image has four yellow squares.

* The MLLM generates a text output:

* `<think>1. Initial observation suggests...2. Upon closer inspection, uneven features are detected, indicating possible synthetic traces...7. After synthesizing all cues, the image is determined to be synthetic</think>`

* `<answer>1</answer>`

**Stage 2: R-GRPO**

* The MLLM generates multiple completions.

* **(1) Answer Reward:** The completion's answer is compared to a ground truth. A match results in a reward of 1, while a mismatch results in a reward of 0.

* **(2) Think Reward:** The completion's reasoning is evaluated. A match results in a reward of 1, a similar reasoning results in a reward of 0.5, and a mismatch results in a reward of 0.

* **(3) Multi-view Alignment Reward:** The completion's reasoning is compared to visual evidence.

    * Example of a match: `<think>When zoomed in, the eyeball shows structural irregularities, accompanied by high-frequency artifacts...</think>` This is rewarded with R = 1.

    * Example of a mismatch: `<think>When zoomed in, the eyeball appears natural, but the high-pass image reveals artifacts...</think>` This is rewarded with R = 0.
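The three-level think reward amounts to a lookup from a judgment label to a score. The sketch below assumes some external evaluator has already produced one of the labels "match", "similar", or "mismatch"; how that evaluator works is not specified here, and the names `THINK_REWARDS` and `think_reward` are hypothetical.

```python
# Scores as stated in the diagram description: match, similar, mismatch.
THINK_REWARDS = {"match": 1.0, "similar": 0.5, "mismatch": 0.0}


def think_reward(judge_label: str) -> float:
    """Map an evaluator's label to its reward.

    Assumption: the label comes from a separate judging step that is
    outside this sketch. Unknown labels raise instead of defaulting to 0,
    so judge failures are not silently scored as mismatches.
    """
    label = judge_label.strip().lower()
    if label not in THINK_REWARDS:
        raise ValueError(f"unexpected judge label: {judge_label!r}")
    return THINK_REWARDS[label]


for label in ("match", "similar", "mismatch"):
    print(label, think_reward(label))
```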

### Key Observations

* The diagram highlights the use of MLLMs in determining the authenticity of images.

* The R-GRPO stage uses multiple reward mechanisms to guide the MLLM towards accurate and well-reasoned conclusions.

* The multi-view alignment reward incorporates visual evidence into the reward process.

### Interpretation

The diagram illustrates a sophisticated approach to image authentication using MLLMs and reward-guided policy optimization. The CoE tuning stage likely serves to pre-train the MLLM, while the R-GRPO stage refines the model's reasoning and decision-making process. The use of multiple reward mechanisms, including answer reward, think reward, and multi-view alignment reward, ensures that the MLLM not only provides accurate answers but also generates sound reasoning that aligns with visual evidence. The system aims to mimic human reasoning by considering multiple cues and synthesizing them to arrive at a conclusion. The comparison of zoomed-in views of the eyeball, along with high-pass filtered images, suggests a focus on detecting subtle artifacts that may indicate synthetic image generation.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Two-Stage Real/Synthetic Image Determination Pipeline

### Overview

The image depicts a two-stage pipeline for determining whether an image is real or synthetic. The pipeline utilizes a Multi-modal Large Language Model (MLLM) and incorporates a reward system based on both answer accuracy and reasoning quality. The stages are labeled "Stage 1: CoE Tuning" and "Stage 2: R-GRPO".

### Components/Axes

The diagram consists of two main stages, each with several components. Key elements include:

* **MLLM:** Present in both stages, acting as the core processing unit.

* **Input Images:** Represented by a series of orange rectangles in Stage 1 and individual images in Stage 2.

* **Question Prompt:** A text box within each stage asking "Please help me determine whether this image is real or synthetic?".

* **Completion Outputs:** In Stage 2, a series of "Completion" boxes (Completion 1 to Completion G) represent potential answers.

* **Reward Signals:** Represented by green "match" and red "mismatch" signals.

* **Reasoning Blocks:** Text enclosed in `<think>...</think>` tags, representing the MLLM's reasoning process.

* **Answer Blocks:** Text enclosed in `<answer>...</answer>` tags, representing the MLLM's final answer.

* **Loss Functions:** Labeled as `L_think` and `L_answer` at the bottom of Stage 1.

* **Legend:** Located in the top-right corner, associating colors with reward outcomes: R=1 (match), R=0 (mismatch), R=0.5 (similar).

### Detailed Analysis or Content Details

**Stage 1: CoE Tuning**

* Input: A sequence of 8 orange rectangles representing images.

* Question: "Please help me determine whether this image is real or synthetic?".

* MLLM processes the input and generates reasoning and an answer.

* Reasoning: `<think>...</think>`.

* Answer: `<answer>1</answer>`.

* Outputs: Two loss functions, `L_think` and `L_answer`.

**Stage 2: R-GRPO**

* Input: A single image.

* Question: "Please help me determine whether this image is real or synthetic?".

* MLLM processes the input and generates reasoning and an answer.

* Completion Outputs: A series of "Completion" boxes (Completion 1 to Completion G) are shown.

* **(1) Answer Reward:**

    * Input: Completion 1.

    * Reasoning: `<think>...</think>`.

    * Answer: `<answer>...</answer>`.

    * Reward: Green "match" signal, labeled "R=1".

* **(2) Think Reward:**

    * Input: A green "match" signal.

    * Reasoning: `<think>...</think>`.

    * Reward: Green "match" signal, labeled "R=1".

* **(3) Multi-view alignment reward:**

    * Input: A red "mismatch" signal.

    * Reasoning: `<think>...</think>`.

    * Reward: Red "mismatch" signal, labeled "R=0".

**Legend:**

* Green: "match" (R=1, R=0.5)

* Red: "mismatch" (R=0)

### Key Observations

* The pipeline uses a two-stage approach, starting with CoE Tuning and refining with R-GRPO.

* The R-GRPO stage incorporates multiple reward signals based on both answer accuracy and the quality of the reasoning process.

* The reasoning blocks provide insight into the MLLM's decision-making process.

* The reward signals are color-coded (green for match, red for mismatch) and associated with numerical values (R=1, R=0, R=0.5).

* The diagram highlights the importance of both high-level reasoning and low-level image analysis (zooming in on details).

### Interpretation

The diagram illustrates a sophisticated approach to detecting synthetic images. The two-stage pipeline aims to improve the reliability of the detection process by combining initial coarse-grained analysis (Stage 1) with more refined, multi-faceted evaluation (Stage 2). The use of reward signals for both answer accuracy and reasoning quality suggests a focus on not only *what* the MLLM predicts but also *why* it makes that prediction. The inclusion of detailed reasoning examples (within the `<think>` tags) demonstrates the importance of explainability in this context. The different reward values (R=1, R=0, R=0.5) suggest a graded reward system, allowing partial credit for reasoning that is partially correct or insightful. The example of the eyeball analysis highlights the use of fine-grained image features to detect subtle artifacts that might indicate synthetic origin. This pipeline is likely designed to address the challenges of increasingly realistic synthetic images, where traditional detection methods may fail.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Two-Stage Training Process for Multimodal Large Language Model (MLLM) Image Authenticity Detection

### Overview

The image is a technical flowchart illustrating a two-stage training methodology for a Multimodal Large Language Model (MLLM) designed to determine whether a given image is real or synthetic. The process is divided into "Stage 1: CoE Tuning" and "Stage 2: R-GRPO," showing the flow of data, model processing, and reward mechanisms.

### Components/Axes

The diagram is split into two primary panels by a vertical dashed line.

**Left Panel: Stage 1: CoE Tuning**

* **Input Prompt:** A text box at the top reads: "Please help me determine whether this image is real or synthetic?"

* **Input Image:** A photograph of a small bird (appears to be a sparrow or similar species) is shown to the right of the prompt.

* **Visual Tokens:** Below the prompt and image are two rows of colored squares, likely representing visual embeddings or tokens.

* Top row: 5 orange squares.

* Bottom row: 5 yellow squares.

* **Model:** A rounded rectangle labeled "MLLM" with a flame icon (🔥) on its left side. Arrows from the prompt, image, and tokens point into this box.

* **Model Output:** A large text box below the MLLM contains a structured response:

    * `<think>...</think>`

    * `<answer> 1 </answer>`

    * The phrase "synthetic traces" is highlighted in blue text.

    * The number "1" in the answer tag is highlighted in orange text.

* **Loss Functions:** Two arrows point downward from the output box:

    * A blue arrow labeled `L_think` originates from the `<think>` block.

[...]

**Right Panel: Stage 2: R-GRPO**

* **Think Reward:**

    * Input: The `<think>` block from Completion 1.

    * Evaluation: A robot icon evaluates similarity. A decision diamond checks for "match", "similar", or "mismatch".

    * Output: Green box "R=1" for match, yellow box "R=0.5" for similar, red box "R=0" for mismatch.

* **Multi-view alignment reward:**

    * **Match Scenario (Green Checkmark):**

        * Input Text: `<think>...</think>`

        * The phrases "structural irregularities" and "high-frequency artifacts" are in blue text.

        * Input Images: Four small thumbnail images showing different views/processing of the bird's eye (original, zoomed, possibly filtered).

        * Evaluation: A robot icon assesses alignment between the textual description and the visual evidence across views.

        * Output: Green box "R=1".

    * **Mismatch Scenario (Red X):**

        * Input Text: `<think>...</think>`

        * The phrase "appears natural" is in red text, and "artifacts" is in blue text.

        * Evaluation: The same robot icon assesses alignment.

        * Output: Red box "R=0".

* **Feedback Loop:** A green arrow loops from the reward outputs back to the MLLM in the input stage, indicating a reinforcement learning update.

### Detailed Analysis

The diagram meticulously outlines a training pipeline.

**Stage 1 (CoE Tuning):** This stage focuses on teaching the MLLM to produce a structured "Chain of Evidence" (CoE) reasoning process (`<think>` tag) before giving a final binary classification (`<answer>` tag, where 1 likely means "synthetic"). The separate loss functions (`L_think` and `L_answer`) suggest the model is trained to optimize both the quality of its reasoning and the accuracy of its final answer.
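One common way to realize two separate losses over a single generated sequence is to mask the token-level negative log-likelihood by span. The sketch below is an assumption about how `L_think` and `L_answer` could be computed, not a statement of the method in the diagram; the helper names, the weights `w_think` and `w_answer`, and the toy log-probabilities are all hypothetical.

```python
from typing import Sequence, Tuple


def masked_nll(token_logprobs: Sequence[float], mask: Sequence[bool]) -> float:
    """Mean negative log-likelihood over the tokens selected by the mask."""
    selected = [-lp for lp, keep in zip(token_logprobs, mask) if keep]
    return sum(selected) / len(selected) if selected else 0.0


def coe_loss(
    token_logprobs: Sequence[float],
    think_mask: Sequence[bool],
    answer_mask: Sequence[bool],
    w_think: float = 1.0,   # hypothetical weight, not given in the source
    w_answer: float = 1.0,  # hypothetical weight, not given in the source
) -> Tuple[float, float, float]:
    """Return (total, L_think, L_answer) for one target sequence."""
    l_think = masked_nll(token_logprobs, think_mask)
    l_answer = masked_nll(token_logprobs, answer_mask)
    return w_think * l_think + w_answer * l_answer, l_think, l_answer


# Toy sequence: two tokens inside <think>, one token inside <answer>.
logprobs = [-0.5, -1.5, -0.25]
total, l_think, l_answer = coe_loss(
    logprobs,
    think_mask=[True, True, False],
    answer_mask=[False, False, True],
)
print(total, l_think, l_answer)
```

Keeping the two terms separate makes it possible to weight the short answer span so it is not swamped by the much longer reasoning span.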

**Stage 2 (R-GRPO):** This stage employs a reinforcement learning technique, likely "Reinforcement Learning with Group Relative Policy Optimization" (R-GRPO). It generates multiple candidate responses (Completions 1 to G) for a given input. Each completion is then scored by three complementary reward models:

1. **Answer Reward:** A simple binary check for factual correctness of the final answer.

2. **Think Reward:** Evaluates the quality and similarity of the reasoning chain against a reference or ideal reasoning path, allowing for partial credit (R=0.5).

3. **Multi-view Alignment Reward:** This is the most complex component. It verifies if the model's textual reasoning (e.g., "eyeball shows structural irregularities") is grounded in and consistent with visual evidence from multiple processed views of the image (e.g., zoomed, high-pass filtered). A mismatch between the textual claim and the visual evidence results in a zero reward.
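The scoring of G completions can be sketched as follows. GRPO-style methods compare each completion's reward with the mean and standard deviation of its own group; the unweighted sum of the three rewards and the use of the population standard deviation are assumptions made for this illustration, and the function names are hypothetical.

```python
from statistics import mean, pstdev
from typing import List


def total_reward(answer: float, think: float, alignment: float) -> float:
    """Combine the three rewards. Assumption: a plain unweighted sum."""
    return answer + think + alignment


def group_relative_advantages(rewards: List[float], eps: float = 1e-8) -> List[float]:
    """Normalize each reward by its group's mean and standard deviation.

    eps avoids division by zero when every completion gets the same reward.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Three hypothetical completions for the same image and prompt.
group = [
    total_reward(1.0, 1.0, 1.0),  # correct answer, grounded reasoning
    total_reward(1.0, 0.5, 0.0),  # correct answer, reasoning not grounded
    total_reward(0.0, 0.0, 0.0),  # wrong answer
]
print(group_relative_advantages(group))
```

Because the advantage is relative to the group, a completion with the right answer but ungrounded reasoning can still receive a non-positive advantage when a sibling completion does better on all three rewards.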

### Key Observations

* **Structured Output Mandate:** The model is explicitly trained to separate its reasoning (`<think>`) from its conclusion (`<answer>`).

* **Multi-Faceted Evaluation:** The system doesn't just check if the answer is right; it scrutinizes *how* the model arrived at the answer, rewarding coherent, evidence-based reasoning.

* **Visual Grounding is Critical:** The "Multi-view alignment reward" is a key innovation. It forces the model's textual reasoning to be verifiable against visual data, combating hallucination. The example shows that claiming an eyeball looks "natural" while visual filters show artifacts leads to a penalty.

* **Color Coding for Clarity:** The diagram uses consistent color coding: green for correct/match (R=1), yellow/orange for partial credit or components (R=0.5, tokens, answer), and red for incorrect/mismatch (R=0). Blue text highlights key evidence phrases in the reasoning.
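To make the idea of multiple views concrete, the sketch below derives a zoomed crop and a high-pass view from a grayscale image stored as nested lists. It is illustrative only: the source does not say which filter or crop the pipeline uses, so the 3x3 box blur, the function names, and the toy image are all assumptions.

```python
from typing import List

Image = List[List[float]]


def box_blur(img: Image) -> Image:
    """3x3 mean filter; border pixels average over the neighbours that exist."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            vals = [
                img[j][i]
                for j in range(max(0, y - 1), min(h, y + 2))
                for i in range(max(0, x - 1), min(w, x + 2))
            ]
            out[y][x] = sum(vals) / len(vals)
    return out


def high_pass(img: Image) -> Image:
    """High-pass view: the original minus its low-pass (blurred) version."""
    low = box_blur(img)
    return [[p - q for p, q in zip(row, low_row)] for row, low_row in zip(img, low)]


def zoom_crop(img: Image, top: int, left: int, size: int) -> Image:
    """Square crop standing in for a zoomed-in view of a region."""
    return [row[left:left + size] for row in img[top:top + size]]


# Toy 4x4 image: flat background with one bright pixel.
spike = [[5.0] * 4 for _ in range(4)]
spike[1][2] = 9.0
print(high_pass(spike)[1][2])
print(zoom_crop(spike, 1, 2, 2))
```

A flat region yields zeros in the high-pass view, while an isolated bright pixel stands out, which is the kind of cue a reasoning trace about "high-frequency artifacts" would need to agree with.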

### Interpretation

This diagram describes a sophisticated training framework aimed at creating a more reliable and interpretable AI for detecting synthetic media. The core innovation lies in moving beyond simple answer-based training.

The **CoE Tuning** stage instills a habit of explicit, step-by-step reasoning. The **R-GRPO** stage then refines this behavior using reinforcement learning with a multi-dimensional reward signal. The most significant aspect is the **Multi-view Alignment Reward**, which directly addresses a major weakness of large language models: the potential for generating plausible-sounding but visually ungrounded text. By requiring the model's described evidence ("structural irregularities") to align with what can be seen in different image views, the system encourages the development of genuine visual understanding rather than pattern-matching on text alone.

The process suggests that for high-stakes tasks like authenticity detection, it is insufficient for an AI to simply be accurate. It must also be *explainable* in a way that is *verifiable* against the source data. This framework aims to produce models whose reasoning can be audited and trusted because it is tied to observable visual features.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

# Technical Document Extraction: Image Analysis

## Overview

The image depicts a **two-stage computational framework** for image authenticity verification using a **Multimodal Large Language Model (MLLM)**. The process involves **CoE (Chain of Evidence) tuning** and **reward-guided reasoning** (R-GRPO) to distinguish real vs. synthetic images.

---

### Stage 1: CoE Tuning

**Purpose**: Initial training of the MLLM to detect synthetic artifacts.

#### Components:

1. **Input Prompt**:

- Text: `"Please help me determine whether this image is real or synthetic?"`

- Example images:

- A bird on a branch (real)

- A flame icon (synthetic)

2. **MLLM Processing**:

- **Think Block**:

- Reasoning steps:

1. Initial observation suggests...

2. Upon closer inspection, uneven features are detected...

7. After synthesizing all cues, the image is determined to be synthetic.

- **Output**:

- Answer: `1` (synthetic)

3. **Loss Functions**:

- `L_think`: Optimizes reasoning coherence.

- `L_answer`: Penalizes incorrect synthetic/real classification.

#### Flow:

```mermaid
graph LR
A[Input Prompt] --> B[MLLM]
B --> C[Think Block]
C --> D[Answer]
```

---

### Stage 2: R-GRPO (Reward-Guided Reasoning)

**Purpose**: Refine the MLLM using multi-view alignment and completion rewards.

#### Components:

1. **Input Prompt**:

- Text: `"Please help me determine whether this image is real or synthetic?"`

- Example image: Bird with zoom-ins showing:

- Natural eye details

- High-frequency artifacts (e.g., "high-pass image reveals artifacts")

2. **MLLM Processing**:

- **Completion Steps** (1 to G):

- Each completion generates a reasoning trace (e.g., `<think>...</think>`).

- **Reward Evaluation**:

- **Answer Reward**:

- `R=1` if completion matches ground truth (`<answer>...</answer>`).

- `R=0` if mismatch.

- **Think Reward**:

- `R=1` for coherent reasoning (e.g., "When zoomed in, the eyeball shows structural irregularities...").

- `R=0` for mismatched logic.

- **Multi-View Alignment Reward**:

- `R=1` for consistency across zoom levels.

- `R=0` for discrepancies (e.g., "eye appears natural" vs. "artifacts in high-pass image").

3. **Example Flow**:

- Bird image → Zoom-ins reveal artifacts → Mismatch → `R=0`.

#### Flow:

```mermaid
graph LR
E[Input Prompt] --> F[MLLM]
F --> G[Completion 1]
G --> H["Answer Reward (R=1)"]
G --> I["Think Reward (R=1)"]
G --> J["Multi-View Alignment Reward (R=0)"]
```

---

### Key Trends & Data Points

1. **Synthetic Detection**:

- The MLLM identifies uneven features (e.g., flame icon) as synthetic.

- Zoom-ins reveal high-frequency artifacts in synthetic images.

2. **Reward System**:

- **Binary Rewards**: `R=1` (match), `R=0` (mismatch).

- **Multi-View Alignment**: Ensures consistency across zoom levels.

3. **Loss Optimization**:

- `L_think` and `L_answer` drive the MLLM to refine reasoning and classification accuracy.

---

### Spatial Grounding & Component Isolation

- **Stage 1 (Left)**: Focuses on initial tuning with single-image prompts.

- **Stage 2 (Right)**: Expands to multi-view reasoning with reward signals.

- **Legend**: Not explicitly present; rewards (`R=1`, `R=0`) are implicitly tied to color-coded blocks (green for match, red for mismatch).

---

### Critical Observations

- **Flowchart Logic**:

1. Input prompts are processed by the MLLM.

2. Reasoning traces (`<think>...</think>`) guide synthetic detection.

3. Rewards (`R=1/R=0`) refine the model’s decision-making.

- **Example Artifacts**:

- Flame icon (synthetic) vs. bird (real).

- High-pass image artifacts in zoomed bird images.

---

### Final Notes

- **Language**: All text is in English.

- **No Data Tables**: The diagram uses flowcharts and textual annotations instead of numerical tables.

- **Trend Verification**:

- Synthetic images show uneven features and artifacts.

- Reward signals (`R=1/R=0`) correlate with match/mismatch outcomes.
