## [Chart Type]: Dual Scatter Plots with Line Segment - Model Accuracy Analysis

### Overview

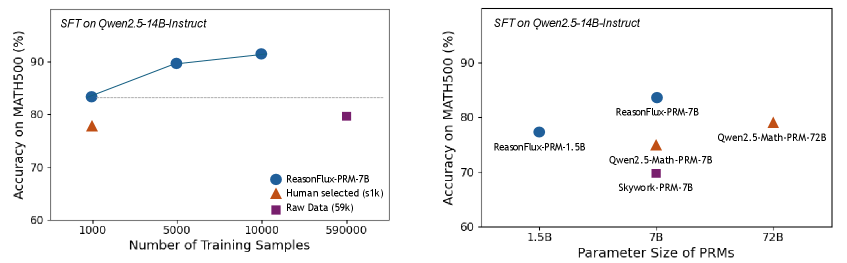

The image displays two side-by-side scatter plots comparing the performance of different Process Reward Models (PRMs) on the MATH500 benchmark. Both charts share the same y-axis metric: "Accuracy on MATH500 (%)". The left chart analyzes the impact of training data size, while the right chart analyzes the impact of model parameter size. The overarching title for both plots is "SFT on Qwen2.5-14B-Instruct".

### Components/Axes

**Common Elements:**

* **Main Title (Top of both plots):** "SFT on Qwen2.5-14B-Instruct"

* **Y-Axis (Both plots):** Label: "Accuracy on MATH500 (%)". Scale ranges from 60 to 90+ with major ticks at 60, 70, 80, 90.

* **Horizontal Reference Line:** A dashed gray line at approximately 82% accuracy appears in both plots.

**Left Plot:**

* **X-Axis:** Label: "Number of Training Samples". Scale is logarithmic with labeled ticks at 1000, 5000, 10000, and 59000.

* **Legend (Bottom Right):**

    * Blue Circle: "ReasonFlux-PRM-7B"

    * Orange Triangle: "Human selected (s1k)"

    * Purple Square: "Raw Data (59k)"

**Right Plot:**

* **X-Axis:** Label: "Parameter Size of PRMs". Scale is logarithmic with labeled ticks at 1.5B, 7B, and 72B.

* **Legend (Embedded as labels next to data points):**

    * Blue Circle: "ReasonFlux-PRM-7B"

    * Orange Triangle: "Qwen2.5-Math-PRM-72B"

    * Purple Square: "Skywork-PRM-7B"

    * Orange Triangle (smaller): "Qwen2.5-Math-PRM-7B"

    * Blue Circle (smaller): "ReasonFlux-PRM-1.5B"

### Detailed Analysis

**Left Chart: Accuracy vs. Training Samples**

* **Trend Verification:**

    * **ReasonFlux-PRM-7B (Blue Line):** The line connecting the three blue circles slopes upward, indicating that accuracy increases with the number of training samples.

    * **Human selected (s1k) (Orange Triangle):** Single data point, no trend.

    * **Raw Data (59k) (Purple Square):** Single data point, no trend.

* **Data Points (Approximate):**

    * **ReasonFlux-PRM-7B:**

        * At 1000 samples: ~83.5% accuracy.

        * At 5000 samples: ~89.5% accuracy.

        * At 10000 samples: ~91.5% accuracy.

    * **Human selected (s1k):** At 1000 samples: ~77.5% accuracy.

    * **Raw Data (59k):** At 59000 samples: ~79.5% accuracy.
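The approximate readings for the left chart can be turned into a quick calculation of the gain along each segment of the ReasonFlux-PRM-7B line and its margin over the two single-point baselines. This is a minimal sketch: the numbers are visual estimates from the chart, and the function and variable names are illustrative, not taken from the source.

```python
# Approximate values read off the left chart (visual estimates, not exact results).
reasonflux_7b = {1000: 83.5, 5000: 89.5, 10000: 91.5}  # training samples -> accuracy (%)
baselines = {"Human selected (s1k)": 77.5, "Raw Data (59k)": 79.5}  # accuracy (%)


def stepwise_gains(curve):
    """Return (from_samples, to_samples, gain in points) for each line segment."""
    points = sorted(curve.items())
    return [
        (x0, x1, round(y1 - y0, 1))
        for (x0, y0), (x1, y1) in zip(points, points[1:])
    ]


def margins_over(baseline_accuracies, accuracy):
    """Return by how many points `accuracy` exceeds each baseline."""
    return {
        name: round(accuracy - value, 1)
        for name, value in baseline_accuracies.items()
    }


gains = stepwise_gains(reasonflux_7b)
margins = margins_over(baselines, reasonflux_7b[1000])

for x0, x1, gain in gains:
    print(f"{x0} -> {x1} samples: +{gain} points")
for name, margin in margins.items():
    print(f"ReasonFlux-PRM-7B at 1000 samples vs {name}: +{margin} points")
```

With these estimates, the first segment contributes about 6 points and the second about 2, so most of the gain arrives by 5,000 samples.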

**Right Chart: Accuracy vs. Parameter Size**

* **Trend Verification:** All data series are single points; no lines connect them. Accuracy rises with parameter size within each family that has two points (ReasonFlux-PRM from 1.5B to 7B, Qwen2.5-Math-PRM from 7B to 72B), but there is no consistent upward trend across families: the highest point sits at 7B, and the other two 7B models score below the 1.5B model.

* **Data Points (Approximate):**

    * **ReasonFlux-PRM-1.5B (Blue Circle, leftmost):** At 1.5B parameters: ~77.5% accuracy.

    * **ReasonFlux-PRM-7B (Blue Circle, center):** At 7B parameters: ~83.5% accuracy.

    * **Skywork-PRM-7B (Purple Square, center):** At 7B parameters: ~70.0% accuracy.

    * **Qwen2.5-Math-PRM-7B (Orange Triangle, center):** At 7B parameters: ~74.0% accuracy.

    * **Qwen2.5-Math-PRM-72B (Orange Triangle, rightmost):** At 72B parameters: ~79.5% accuracy.
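One way to check how accuracy relates to parameter size is to test whether it is non-decreasing when the points are ordered by size, both across all models and within each model family. The sketch below does this with the approximate readings listed above; the family grouping and the helper names are assumptions made for illustration.

```python
# Approximate values read off the right chart (visual estimates, not exact results).
# Each row: (model name, family, parameters in billions, accuracy in %).
points = [
    ("ReasonFlux-PRM-1.5B", "ReasonFlux-PRM", 1.5, 77.5),
    ("ReasonFlux-PRM-7B", "ReasonFlux-PRM", 7.0, 83.5),
    ("Skywork-PRM-7B", "Skywork-PRM", 7.0, 70.0),
    ("Qwen2.5-Math-PRM-7B", "Qwen2.5-Math-PRM", 7.0, 74.0),
    ("Qwen2.5-Math-PRM-72B", "Qwen2.5-Math-PRM", 72.0, 79.5),
]


def is_non_decreasing(values):
    """True if every value is at least as large as the one before it."""
    return all(a <= b for a, b in zip(values, values[1:]))


def accuracy_by_size(rows):
    """Accuracies ordered by parameter size (ties ordered by accuracy)."""
    return [acc for _, _, _, acc in sorted(rows, key=lambda r: (r[2], r[3]))]


# Group rows by model family.
families = {}
for row in points:
    families.setdefault(row[1], []).append(row)

within_family = {
    family: is_non_decreasing(accuracy_by_size(rows))
    for family, rows in families.items()
}
across_all = is_non_decreasing(accuracy_by_size(points))
best_model = max(points, key=lambda r: r[3])[0]

print("Non-decreasing within each family:", within_family)
print("Non-decreasing across all models:", across_all)
print("Highest accuracy:", best_model)
```

A family with a single point (Skywork-PRM here) passes the check trivially, so only the two-point families carry information about scaling.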

### Key Observations

1. **Training Data Efficiency (Left Chart):** ReasonFlux-PRM-7B gains about 8 points of accuracy (from ~83.5% to ~91.5%) as training samples increase from 1,000 to 10,000. The 59k-sample "Raw Data" baseline (~79.5%) scores about 2 points above the 1,000-sample "Human selected" baseline (~77.5%), but both fall below ReasonFlux-PRM-7B at only 1,000 samples (~83.5%).

2. **Parameter Size vs. Performance (Right Chart):** Among the 7B parameter models, ReasonFlux-PRM-7B (~83.5%) significantly outperforms both Qwen2.5-Math-PRM-7B (~74.0%) and Skywork-PRM-7B (~70.0%).

3. **Scale Comparison:** The largest model shown, Qwen2.5-Math-PRM-72B (~79.5%), performs worse than the much smaller ReasonFlux-PRM-7B (~83.5%) on this specific benchmark, suggesting architecture or training data quality may be more critical than sheer parameter count.

4. **Consistency:** The ReasonFlux-PRM-7B accuracy in the right chart (~83.5%) matches its 1,000-sample point in the left chart.
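The right-chart comparisons in these observations reduce to two numbers per competitor: the accuracy gap to ReasonFlux-PRM-7B and the ratio of parameter counts. The following sketch computes both from the approximate chart readings; the dictionary layout and the `compare` helper are hypothetical and serve only as an illustration.

```python
# Approximate accuracies (%) and parameter counts (billions) read from the right chart.
reference = {"params_b": 7.0, "acc": 83.5}  # ReasonFlux-PRM-7B
others = {
    "Qwen2.5-Math-PRM-7B": {"params_b": 7.0, "acc": 74.0},
    "Skywork-PRM-7B": {"params_b": 7.0, "acc": 70.0},
    "Qwen2.5-Math-PRM-72B": {"params_b": 72.0, "acc": 79.5},
}


def compare(ref, other):
    """Return (accuracy gap in points, parameter ratio other/ref)."""
    gap = round(ref["acc"] - other["acc"], 1)
    ratio = round(other["params_b"] / ref["params_b"], 1)
    return gap, ratio


comparison = {name: compare(reference, spec) for name, spec in others.items()}

for name, (gap, ratio) in comparison.items():
    print(f"{name}: ReasonFlux-PRM-7B leads by {gap} points at {ratio}x the parameters")
```

On these estimates the 72B model has about 10.3 times the parameters of ReasonFlux-PRM-7B and still trails it by about 4 points.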

### Interpretation

The data suggests two key findings for improving model performance on the MATH500 benchmark when using Supervised Fine-Tuning (SFT) on Qwen2.5-14B-Instruct:

1. **Data Quality and Curation Trump Quantity:** In the left chart, the ReasonFlux-PRM-7B series at 1,000 samples (~83.5%) outperforms both the 1,000 human-selected samples of s1k (~77.5%) and the full raw dataset of 59k samples (~79.5%). The human-selected subset does not beat the raw data here, so the advantage appears to come from how the data is selected rather than from a small dataset size as such. The ReasonFlux series also keeps improving as more data is added (up to 10k samples), indicating that the training process or data selection method behind it is effective.

2. **Model Architecture/Training is a Dominant Factor:** The right chart shows that at the same parameter size (7B), the ReasonFlux variant achieves substantially higher accuracy than its competitors. This implies that the specific design, training procedure, or data used to create ReasonFlux-PRM-7B provides a significant advantage. It even surpasses a model with roughly 10x more parameters (72B vs. 7B), which suggests that how a PRM is built can matter more than its scale alone.

**Overall Implication:** Within this evaluation, better data curation and PRM training (as exemplified by ReasonFlux) yield larger MATH500 gains than increasing raw data volume or PRM size. ReasonFlux-PRM-7B is the strongest option among the models and data settings shown.