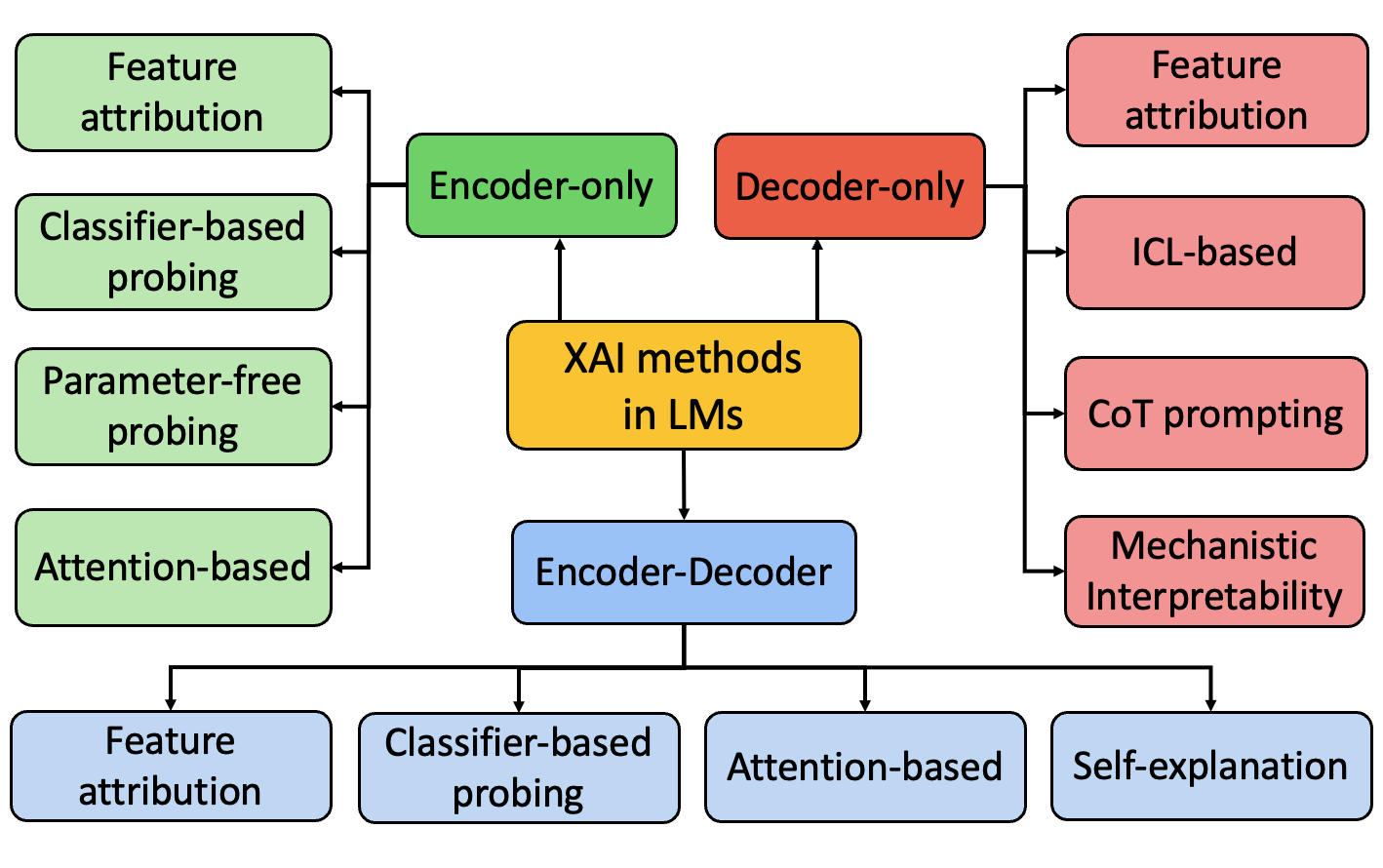

## Diagram: Taxonomy of Explainable AI (XAI) Methods in Language Models (LMs)

### Overview

This image is a hierarchical diagram illustrating the categorization of Explainable AI (XAI) methods as applied to different architectures of Language Models (LMs). The diagram uses color-coded boxes and directional arrows to show the relationships between a central concept, three primary model architectures, and the specific XAI techniques associated with each architecture.

### Components/Axes

The diagram is structured with a central node and three main branches. There are no numerical axes or scales.

**Central Node:**

* **Label:** "XAI methods in LMs"

* **Color:** Yellow/Gold

* **Position:** Center of the diagram.

**Primary Architecture Branches (connected to the central node):**

1. **Encoder-only**

    * **Color:** Green

    * **Position:** Top-left, connected by an upward arrow from the central node.

2. **Decoder-only**

    * **Color:** Red/Orange

    * **Position:** Top-right, connected by an upward arrow from the central node.

3. **Encoder-Decoder**

    * **Color:** Light Blue

    * **Position:** Bottom-center, connected by a downward arrow from the central node.

**Associated XAI Methods (connected to each architecture branch):**

* **For "Encoder-only" (Green branch, left side):**

    * Feature attribution

    * Classifier-based probing

    * Parameter-free probing

    * Attention-based

    * *All these boxes are light green and connected via a single vertical line with arrows pointing left from the "Encoder-only" box.*

* **For "Decoder-only" (Red/Orange branch, right side):**

    * Feature attribution

    * ICL-based

    * CoT prompting

    * Mechanistic Interpretability

    * *All these boxes are light red/pink and connected via a single vertical line with arrows pointing right from the "Decoder-only" box.*

* **For "Encoder-Decoder" (Light Blue branch, bottom):**

    * Feature attribution

    * Classifier-based probing

    * Attention-based

    * Self-explanation

    * *All these boxes are light blue and connected via a horizontal line with arrows pointing down from the "Encoder-Decoder" box.*

### Detailed Analysis

The diagram presents a two-level taxonomy rooted at the central concept, "XAI methods in LMs." The root branches into three language model architectures, each represented by a different color. The XAI techniques associated with each architecture are then listed in boxes of a lighter shade of that architecture's color, creating a visual grouping.

**Text Transcription (All text is in English):**

* Central: XAI methods in LMs

* Top-left (Green): Encoder-only

    * Sub-items: Feature attribution, Classifier-based probing, Parameter-free probing, Attention-based

* Top-right (Red/Orange): Decoder-only

    * Sub-items: Feature attribution, ICL-based, CoT prompting, Mechanistic Interpretability

* Bottom (Light Blue): Encoder-Decoder

    * Sub-items: Feature attribution, Classifier-based probing, Attention-based, Self-explanation

### Key Observations

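1. **Method Overlap:** "Feature attribution" is the only method listed under all three architectures (Encoder-only, Decoder-only, and Encoder-Decoder). "Classifier-based probing" and "Attention-based" each appear under both Encoder-only and Encoder-Decoder, but not under Decoder-only.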

2. **Architecture-Specific Methods:** Some methods are unique to a single architecture in this diagram. "Parameter-free probing" is only listed under Encoder-only. "ICL-based," "CoT prompting," and "Mechanistic Interpretability" are only listed under Decoder-only. "Self-explanation" is only listed under Encoder-Decoder.

3. **Visual Grouping:** The use of color (green for Encoder-only, red/orange for Decoder-only, light blue for Encoder-Decoder) groups the methods with their parent architecture, making the taxonomy easy to follow.

4. **Flow Direction:** Arrows consistently point from the general category (e.g., "XAI methods in LMs") to the more specific sub-categories (architectures, then methods). This indicates a general-to-specific hierarchy, even though the arrows run up, down, left, and right on the page rather than strictly top to bottom.
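The overlap and uniqueness observations above can be checked mechanically. The following is a minimal sketch, assuming the taxonomy is transcribed by hand into a plain Python dictionary; the names `TAXONOMY`, `architectures_by_method`, `shared_by_all`, and `unique_methods` are illustrative and do not come from the diagram or its source.

```python
# Hand transcription of the diagram: architecture -> listed XAI methods.
# (Assumption: labels are copied verbatim from the boxes in the figure.)
TAXONOMY = {
    "Encoder-only": [
        "Feature attribution",
        "Classifier-based probing",
        "Parameter-free probing",
        "Attention-based",
    ],
    "Decoder-only": [
        "Feature attribution",
        "ICL-based",
        "CoT prompting",
        "Mechanistic Interpretability",
    ],
    "Encoder-Decoder": [
        "Feature attribution",
        "Classifier-based probing",
        "Attention-based",
        "Self-explanation",
    ],
}


def architectures_by_method(taxonomy):
    """Invert the mapping: method -> list of architectures that list it."""
    index = {}
    for arch, methods in taxonomy.items():
        for method in methods:
            index.setdefault(method, []).append(arch)
    return index


def shared_by_all(taxonomy):
    """Methods that appear under every architecture in the taxonomy."""
    index = architectures_by_method(taxonomy)
    return sorted(m for m, archs in index.items() if len(archs) == len(taxonomy))


def unique_methods(taxonomy):
    """For each architecture, the methods listed under it and nowhere else."""
    index = architectures_by_method(taxonomy)
    return {
        arch: sorted(m for m in methods if len(index[m]) == 1)
        for arch, methods in taxonomy.items()
    }


if __name__ == "__main__":
    print("Shared by all:", shared_by_all(TAXONOMY))
    for arch, methods in unique_methods(TAXONOMY).items():
        print(f"Only under {arch}:", methods)
```

Inverting the mapping once keeps both queries simple: a method is shared by all architectures when its list has one entry per architecture, and unique when its list has exactly one entry.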

### Interpretation

This diagram serves as a conceptual map for understanding the landscape of explainability techniques in the context of different language model designs. It suggests that the choice of XAI method is not universal but is contingent upon the underlying architecture of the language model being analyzed.

The diagram implies that:

* **Encoder-only models** (like BERT) are often analyzed using probing and attention-based techniques that examine internal representations, alongside feature attribution, which traces predictions back to input features.

* **Decoder-only models** (like GPT) are associated with methods that leverage their generative nature, such as in-context learning (ICL) and chain-of-thought (CoT) prompting, alongside mechanistic analysis.

* **Encoder-Decoder models** (like T5) share some methods with encoder-only models (classifier-based probing and attention-based methods) but also have one approach listed only for them, "Self-explanation," which may involve the model generating its own rationale.

The repetition of "Feature attribution" across all three architectures suggests that it is a broadly applicable, architecture-agnostic XAI technique. The diagram is a useful reference for researchers or practitioners who need to identify which explainability tools are most relevant for a given type of language model.