## Flowchart: XAI Methods in Large Language Models (LLMs)

### Overview

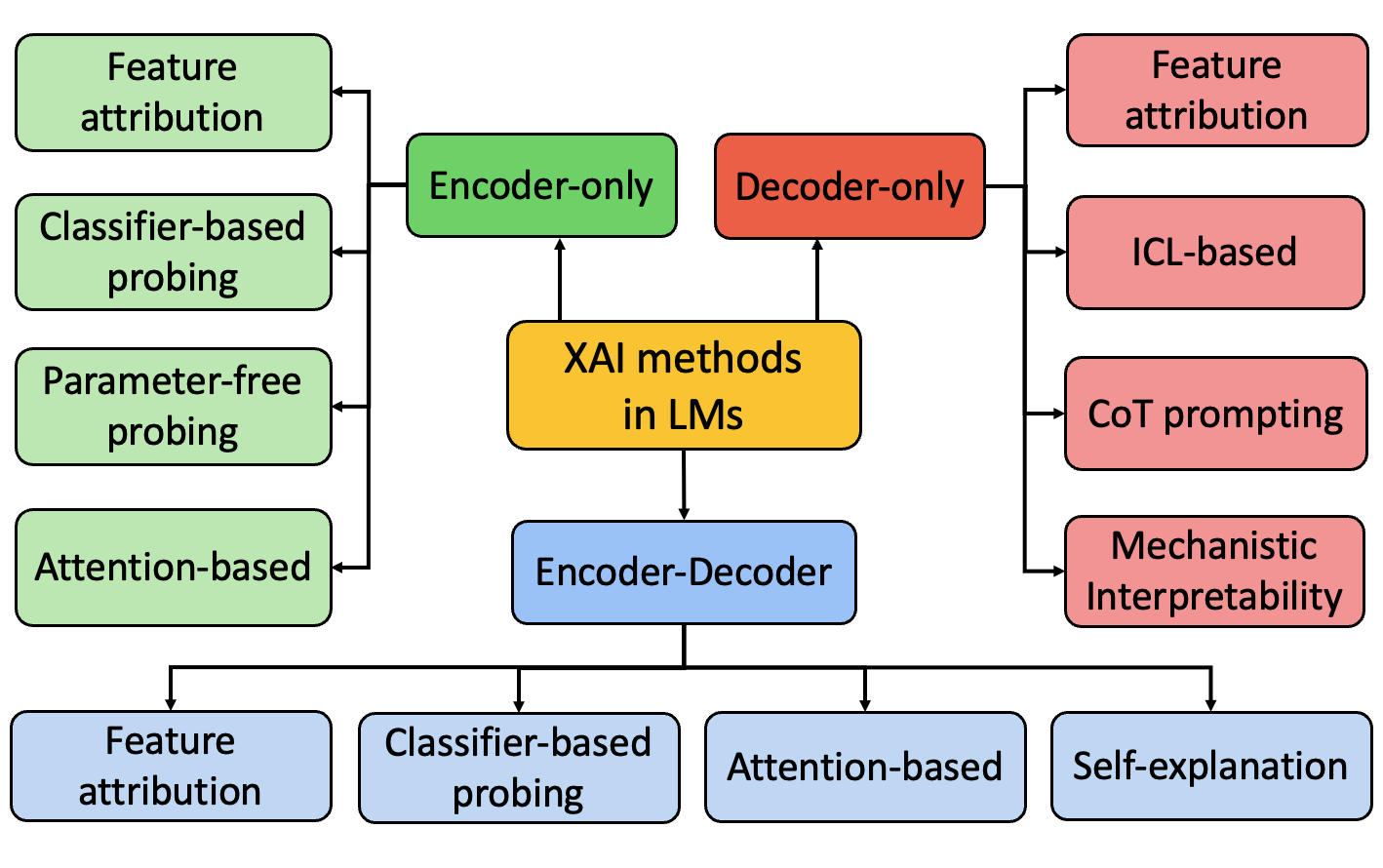

The flowchart groups Explainable AI (XAI) methods for Large Language Models (LLMs) by three model architectures: **Encoder-only**, **Decoder-only**, and **Encoder-Decoder**. Each architecture lists four XAI techniques; some techniques appear under more than one architecture, and others appear under only one.

### Components/Axes

- **Main Sections** (color-coded):
  - **Encoder-only** (Green)
  - **Decoder-only** (Red)
  - **Encoder-Decoder** (Blue)
- **Subcategories** (text labels within boxes):
  - **Encoder-only**:
    - Feature attribution
    - Classifier-based probing
    - Parameter-free probing
    - Attention-based
  - **Decoder-only**:
    - Feature attribution
    - ICL-based
    - CoT prompting
    - Mechanistic Interpretability
  - **Encoder-Decoder**:
    - Feature attribution
    - Classifier-based probing
    - Attention-based
    - Self-explanation
- **Arrows**: Connect subcategories to their parent architectural sections.

### Detailed Analysis

1. **Encoder-only (Green)**:
   - **Feature attribution**: Appears in all three sections.
   - **Classifier-based probing**: Shared with Encoder-Decoder.
   - **Parameter-free probing**: Unique to Encoder-only.
   - **Attention-based**: Shared with Encoder-Decoder.
2. **Decoder-only (Red)**:
   - **Feature attribution**: Appears in all three sections.
   - **ICL-based**: Unique to Decoder-only (In-Context Learning).
   - **CoT prompting**: Unique to Decoder-only (Chain-of-Thought).
   - **Mechanistic Interpretability**: Unique to Decoder-only.
3. **Encoder-Decoder (Blue)**:
   - **Feature attribution**: Appears in all three sections.
   - **Classifier-based probing**: Shared with Encoder-only.
   - **Attention-based**: Shared with Encoder-only.
   - **Self-explanation**: Unique to Encoder-Decoder.

### Key Observations

- **Feature attribution** is the only method listed under all three architectures.

- The **Encoder-only** and **Decoder-only** sections each contain methods that appear nowhere else in the chart: Parameter-free probing for Encoder-only, and ICL-based, CoT prompting, and Mechanistic Interpretability for Decoder-only.

- **Self-explanation** is exclusive to the Encoder-Decoder architecture, suggesting it relies on interactions between encoder and decoder components.

- **Attention-based** and **Classifier-based probing** are shared between Encoder-only and Encoder-Decoder, indicating their relevance to both single-component and dual-component architectures.
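
The observations above can be checked mechanically. The following is a minimal sketch that transcribes the chart's labels into a Python dictionary and derives the shared and unique methods with set operations. The names `TAXONOMY`, `shared_by_all`, `unique_to`, and `architectures_using` are hypothetical helpers introduced here for illustration; they are not part of the flowchart or of any library.

```python
# Method labels transcribed from the flowchart, keyed by architecture.
TAXONOMY = {
    "Encoder-only": {
        "Feature attribution",
        "Classifier-based probing",
        "Parameter-free probing",
        "Attention-based",
    },
    "Decoder-only": {
        "Feature attribution",
        "ICL-based",
        "CoT prompting",
        "Mechanistic Interpretability",
    },
    "Encoder-Decoder": {
        "Feature attribution",
        "Classifier-based probing",
        "Attention-based",
        "Self-explanation",
    },
}


def shared_by_all(taxonomy):
    """Return the methods listed under every architecture."""
    return set.intersection(*taxonomy.values())


def unique_to(taxonomy, arch):
    """Return the methods listed under `arch` and under no other architecture."""
    others = set().union(*(m for a, m in taxonomy.items() if a != arch))
    return taxonomy[arch] - others


def architectures_using(taxonomy, method):
    """Return the architectures (sorted by name) that list `method`."""
    return sorted(a for a, methods in taxonomy.items() if method in methods)


if __name__ == "__main__":
    print("Shared by all:", shared_by_all(TAXONOMY))
    for arch in TAXONOMY:
        print(f"Unique to {arch}:", sorted(unique_to(TAXONOMY, arch)))
```

Keeping the taxonomy as plain sets means adding or moving a label in the chart only requires editing the dictionary; the shared and unique groupings are then recomputed rather than maintained by hand.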

### Interpretation

The flowchart suggests that XAI methods are matched to LLM architectures:

- **Encoder-only** methods focus on input processing (e.g., probing, attention mechanisms).

- **Decoder-only** methods emphasize output generation and reasoning (e.g., CoT prompting, mechanistic interpretability).

- **Encoder-Decoder** methods bridge both components, with **Self-explanation** likely requiring cross-component analysis.

- **Feature attribution** appears under all three architectures, and **Attention-based** methods appear under the two architectures that include an encoder, which points to their foundational role in LLM interpretability.

- Unique methods in each section (e.g., ICL-based in Decoder-only) reflect architectural constraints and opportunities for explainability.