
## Diagram: State Transition Diagram with Reward and Penalty Mechanisms

### Overview

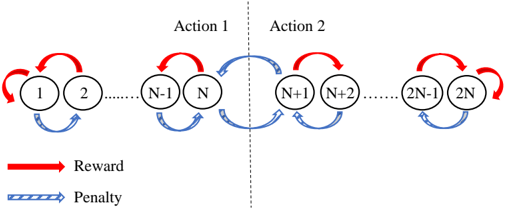

The image is a technical diagram illustrating a sequential process or state machine divided into two phases, labeled "Action 1" and "Action 2." It depicts a series of states (represented by numbered circles) connected by two types of transitions: "Reward" (red solid arrows) and "Penalty" (blue striped arrows). The diagram suggests a cyclical or iterative process within each phase, with a transition between phases.

### Components/Axes

* **Main Structure:** A horizontal sequence of circles (states) connected by arrows (transitions).

* **Phase Division:** A vertical dashed line separates the diagram into two sections.

* **Left Section Label:** "Action 1" (centered above the left half).

* **Right Section Label:** "Action 2" (centered above the right half).

* **States (Circles):** Numbered sequentially from 1 to 2N.

    * **Action 1 States:** 1, 2, ..., N-1, N. (Ellipsis "......" indicates omitted states between 2 and N-1).

    * **Action 2 States:** N+1, N+2, ..., 2N-1, 2N. (Ellipsis "......" indicates omitted states between N+2 and 2N-1).

* **Legend (Bottom-Left Corner):**

    * **Red Solid Arrow:** Labeled "Reward".

    * **Blue Striped Arrow:** Labeled "Penalty".

### Detailed Analysis

**Flow and Connections:**

1. **Within Action 1:**

    * **Reward (Red) Flow:** Arrows point forward (to the right) from state 1 to 2, and from state N-1 to N. A red arrow also loops back from state 1 to itself.

    * **Penalty (Blue) Flow:** Arrows point backward (to the left) from state 2 to 1, and from state N to N-1.

2. **Transition from Action 1 to Action 2:**

    * A blue "Penalty" arrow points from state N (last state of Action 1) to state N+1 (first state of Action 2).

3. **Within Action 2:**

    * **Reward (Red) Flow:** Arrows point forward from state N+1 to N+2, and from state 2N-1 to 2N. A red arrow also loops back from state 2N to itself.

    * **Penalty (Blue) Flow:** Arrows point backward from state N+2 to N+1, and from state 2N to 2N-1.
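The arrows listed above can be written down as a small edge list, which makes it easy to check structural properties such as which arrows cross the phase boundary. The following is a minimal sketch, not a reconstruction of the full diagram: it encodes only the arrows explicitly listed, does not guess at arrows hidden behind the ellipses, and the names `described_edges`, `action_of`, `crossing_edges`, and `self_loops` are hypothetical helpers introduced for illustration.

```python
from typing import List, Tuple

# (source state, target state, arrow label)
Edge = Tuple[int, int, str]


def described_edges(n: int) -> List[Edge]:
    """Return only the arrows explicitly listed in the description.

    Assumption: arrows between the elided middle states are NOT filled in.
    """
    if n < 3:
        raise ValueError("n must be at least 3 so the listed arrows are distinct")
    return [
        # Within Action 1
        (1, 2, "reward"),
        (n - 1, n, "reward"),
        (1, 1, "reward"),       # self-loop on state 1
        (2, 1, "penalty"),
        (n, n - 1, "penalty"),
        # Transition from Action 1 to Action 2
        (n, n + 1, "penalty"),
        # Within Action 2
        (n + 1, n + 2, "reward"),
        (2 * n - 1, 2 * n, "reward"),
        (2 * n, 2 * n, "reward"),  # self-loop on state 2N
        (n + 2, n + 1, "penalty"),
        (2 * n, 2 * n - 1, "penalty"),
    ]


def action_of(state: int, n: int) -> int:
    """States 1..N belong to Action 1, states N+1..2N to Action 2."""
    return 1 if state <= n else 2


def crossing_edges(edges: List[Edge], n: int) -> List[Edge]:
    """Arrows whose source and target lie in different action phases."""
    return [e for e in edges if action_of(e[0], n) != action_of(e[1], n)]


def self_loops(edges: List[Edge]) -> List[Edge]:
    """Arrows that start and end on the same state."""
    return [e for e in edges if e[0] == e[1]]


if __name__ == "__main__":
    n = 4  # hypothetical value chosen only for the example
    edges = described_edges(n)
    print("crossing:", crossing_edges(edges, n))
    print("self-loops:", self_loops(edges))
```

With the hypothetical choice `n = 4`, the only crossing arrow is the Penalty arrow from state 4 to state 5, and the only self-loops are the Reward arrows on states 1 and 8. The edge list also shows that, as listed, state N has two outgoing Penalty arrows (to N-1 and to N+1), so the description alone does not say which one is taken.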

**Spatial Grounding:**

* The **legend** is positioned in the bottom-left corner of the image.

* The **"Action 1"** label is centered above the left cluster of states (1 through N).

* The **"Action 2"** label is centered above the right cluster of states (N+1 through 2N).

* The **vertical dashed line** is centered in the image, acting as the boundary between the two action phases.

* The **ellipsis ("......")** is placed horizontally between circles 2 and N-1, and again between circles N+2 and 2N-1, indicating a continuous sequence.

### Key Observations

* **Symmetrical Structure:** The connection pattern (forward Reward, backward Penalty) is mirrored between Action 1 and Action 2.

* **Self-Loop Rewards:** The first state of Action 1 (State 1) and the last state of Action 2 (State 2N) have self-looping "Reward" arrows, suggesting a potential start/end or stable state condition.

* **Inter-Action Transition:** The only connection between the two action phases is a single "Penalty" arrow from state N to N+1. There is no direct "Reward" path shown between the phases.

* **Cyclical Nature:** The combination of forward and backward arrows within each action block creates local cycles (e.g., 1 -> 2 via Reward, then 2 -> 1 via Penalty).

### Interpretation

This diagram models a sequential decision-making or learning process, likely from the field of **reinforcement learning** or **Markov Decision Processes (MDPs)**.

* **States and Actions:** The numbered circles represent discrete states in an environment. "Action 1" and "Action 2" likely represent two distinct strategies, policies, or phases of operation.

* **Reward/Penalty Mechanism:** The arrows define the dynamics of moving between states. A "Reward" transition (red) is presumably desirable, leading to a positive outcome or progression. A "Penalty" transition (blue) is undesirable, leading to a regression or negative outcome.

* **Process Logic:** The system progresses forward within a phase via rewards but can be pushed backward by penalties. The transition from Action 1 to Action 2 is triggered by a penalty, which might indicate that exhausting the options in Action 1 (reaching state N) leads to a forced or suboptimal shift to a new phase (Action 2).

* **Overall Narrative:** The diagram suggests an iterative process where an agent attempts to maximize rewards within a given action framework. Failure or penalty within one framework (Action 1) may necessitate switching to another (Action 2), where a similar reward-penalty dynamic plays out. The self-loops at the boundaries (State 1 and State 2N) could represent absorbing states, reset points, or states where the agent can choose to persist.