## Diagram: Sequential Action-Reward/Penalty System

### Overview

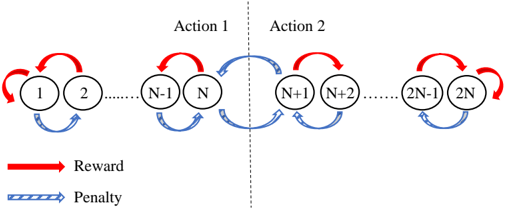

The diagram shows a chain of 2N nodes, numbered 1 to 2N, split between two actions (Action 1 and Action 2). Arrows mark transitions between nodes: red arrows represent rewards and blue arrows represent penalties. A dashed vertical line separates the nodes that belong to Action 1 from those that belong to Action 2.

### Components/Axes

- **Nodes**: Labeled sequentially from 1 to 2N, divided into two groups:

  - **Action 1**: Nodes 1 to N

  - **Action 2**: Nodes N+1 to 2N

- **Arrows**:

  - **Red (Reward)**: Connect consecutive nodes within each action (e.g., 1→2, N-1→N, N+1→N+2, 2N-1→2N).

  - **Blue (Penalty)**: Connect nodes across the dashed line (e.g., N→N+1, N+1→N).

- **Legend**: Located at the bottom, explicitly labeling red as "Reward" and blue as "Penalty".

- **Dashed Line**: Vertically divides the diagram into "Action 1" (left) and "Action 2" (right).

### Detailed Analysis

- **Action 1 (Nodes 1–N)**:

  - Nodes are connected in a linear sequence via red arrows, indicating a reward-driven flow.

  - Example transitions: 1→2, 2→3, ..., N-1→N.

- **Action 2 (Nodes N+1–2N)**:

  - Similarly structured with red arrows connecting consecutive nodes: N+1→N+2, ..., 2N-1→2N.

- **Cross-Action Transitions**:

  - Blue arrows (penalties) link the terminal node of Action 1 (N) to the initial node of Action 2 (N+1) and vice versa.

  - Example transitions: N→N+1 (penalty), N+1→N (penalty).
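The arrows listed above can be written out as an explicit edge list. The following is a minimal sketch that encodes only the transitions named in this description; the function names `build_edges` and `action_of` and the `(source, target, label)` tuple layout are illustrative assumptions, not something taken from the diagram.

```python
from typing import List, Tuple

# (source node, target node, label); the tuple layout is an illustrative choice.
Edge = Tuple[int, int, str]


def build_edges(n: int) -> List[Edge]:
    """Return the arrows named in the description for a 2N-node diagram."""
    if n < 1:
        raise ValueError("n must be at least 1")
    edges: List[Edge] = []
    # Red (reward) arrows inside Action 1: 1→2, ..., N-1→N.
    for i in range(1, n):
        edges.append((i, i + 1, "reward"))
    # Red (reward) arrows inside Action 2: N+1→N+2, ..., 2N-1→2N.
    for i in range(n + 1, 2 * n):
        edges.append((i, i + 1, "reward"))
    # Blue (penalty) arrows across the dashed line, one in each direction.
    edges.append((n, n + 1, "penalty"))
    edges.append((n + 1, n, "penalty"))
    return edges


def action_of(node: int, n: int) -> int:
    """Map a node label to its action: 1 for nodes 1..N, 2 for N+1..2N."""
    if not 1 <= node <= 2 * n:
        raise ValueError("node must lie in 1..2N")
    return 1 if node <= n else 2
```

For example, `build_edges(3)` yields four reward edges (1→2, 2→3, 4→5, 5→6) and two penalty edges (3→4, 4→3).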

### Key Observations

1. **Symmetry**: Both actions have identical internal reward structures (N nodes with sequential red arrows).

2. **Penalty Mechanism**: Switching between actions incurs penalties, represented by bidirectional blue arrows between nodes N and N+1.

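3. **Sequential Flow**: Within each action, the red arrows chain the nodes in order (e.g., 1→2→...→N). The arrows listed above do not include a return edge such as N→1, so a closed loop within an action would be an inference rather than something the listed arrows show.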

### Interpretation

This diagram likely models a **reinforcement learning environment** in which an agent's current node determines which of two actions (Action 1 or Action 2) it takes, and reward and penalty signals move it between nodes. Because the only arrows that cross the dashed line are penalty arrows, switching actions always comes at a cost, which suggests a trade-off between continuing to exploit the current action and exploring the other one. The symmetry implies that neither action is favored by the structure itself, while the penalty on switching discourages frequent changes and favors staying with one action. The finite set of nodes and the single labeled arrow per transition are consistent with a Markov decision process with finite states and deterministic transitions.
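To make the deterministic-transition reading concrete, the sketch below steps through the nodes using only the arrows named in this description. The names `build_transitions`, `step`, and `rollout` are hypothetical, and one behavior is an explicit assumption: when no arrow with the given label leaves a node (for example, a reward at node N), the state is left unchanged, since the description does not say what happens in that case.

```python
from typing import Dict, Iterable, List, Tuple


def build_transitions(n: int) -> Dict[Tuple[int, str], int]:
    """Lookup table (node, outcome) -> next node for the described arrows."""
    if n < 1:
        raise ValueError("n must be at least 1")
    table: Dict[Tuple[int, str], int] = {}
    # Reward arrows: consecutive nodes within each action.
    for i in list(range(1, n)) + list(range(n + 1, 2 * n)):
        table[(i, "reward")] = i + 1
    # Penalty arrows: the two boundary nodes point at each other.
    table[(n, "penalty")] = n + 1
    table[(n + 1, "penalty")] = n
    return table


def step(table: Dict[Tuple[int, str], int], state: int, outcome: str) -> int:
    """Follow the matching arrow out of `state`.

    ASSUMPTION: if the description names no such arrow, stay in place.
    """
    return table.get((state, outcome), state)


def rollout(n: int, start: int, outcomes: Iterable[str]) -> List[int]:
    """Return the sequence of nodes visited, starting at `start`."""
    table = build_transitions(n)
    path = [start]
    for outcome in outcomes:
        path.append(step(table, path[-1], outcome))
    return path


print(rollout(3, 1, ["reward", "reward", "penalty", "reward"]))
# → [1, 2, 3, 4, 5]
```

In this run the agent stays within Action 1 (nodes 1 to 3) until a penalty at node 3 moves it across the dashed line to node 4, after which it continues within Action 2.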