## Algorithm: Three-factor learning with PCM-trace

### Overview

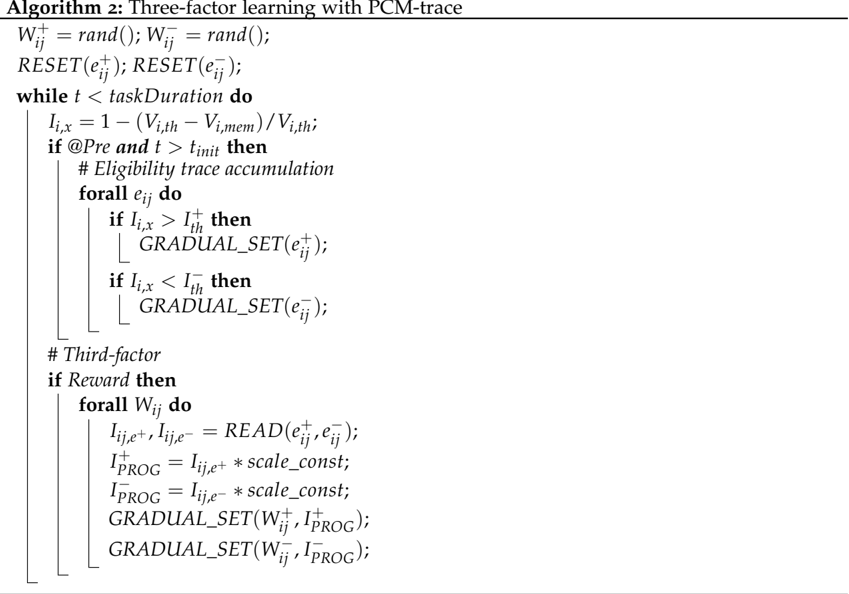

The image presents Algorithm 2, which describes a three-factor learning process using PCM-trace. The algorithm outlines the steps for updating weights and eligibility traces based on reward signals and temporal conditions.

### Components

The algorithm consists of the following components:

- Initialization of weights (W) using a random function.

- Resetting eligibility traces (e).

- A main loop that iterates while time (t) is less than taskDuration.

- Calculation of `I_{i,x}` from `V_{i,th}` and `V_{i,mem}`.

- Eligibility trace accumulation, which runs only when `@Pre` holds and `t > t_{init}`.

- Updating eligibility traces by comparing `I_{i,x}` against the thresholds `I_{th}^+` and `I_{th}^-`.

- A third-factor component that executes if Reward is true.

- Reading `I_{ij,e^+}` and `I_{ij,e^-}` from the eligibility traces `e_{ij}^+` and `e_{ij}^-`.

- Calculating `I_{PROG}^+` and `I_{PROG}^-` by multiplying the read values by `scale_const`.

- Gradual setting of the weights `W_{ij}^+` and `W_{ij}^-` using `I_{PROG}^+` and `I_{PROG}^-`.

### Detailed Analysis

The algorithm is presented as pseudocode. Here's a breakdown of the code:

1. **Initialization:**

* `W_{ij}^+ = rand();`

* `W_{ij}^- = rand();`

* `RESET(e_{ij}^+);`

* `RESET(e_{ij}^-);`

2. **Main Loop:**

* `while t < taskDuration do`

* `I_{i,x} = 1 - (V_{i,th} - V_{i,mem}) / V_{i,th};`

* `if @Pre and t > t_{init} then`

* `# Eligibility trace accumulation`

* `forall e_{ij} do`

* `if I_{i,x} > I_{th}^+ then`

* `GRADUAL_SET(e_{ij}^+);`

* `if I_{i,x} < I_{th}^- then`

* `GRADUAL_SET(e_{ij}^-);`

3. **Third-factor:**

* `# Third-factor`

* `if Reward then`

* `forall W_{ij} do`

* `I_{ij,e^+}, I_{ij,e^-} = READ(e_{ij}^+, e_{ij}^-);`

* `I_{PROG}^+ = I_{ij,e^+} * scale_const;`

* `I_{PROG}^- = I_{ij,e^-} * scale_const;`

* `GRADUAL_SET(W_{ij}^+, I_{PROG}^+);`

* `GRADUAL_SET(W_{ij}^-, I_{PROG}^-);`
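
The steps above can be sketched in Python for a single synapse. This is only an illustrative model under stated assumptions, not the source's implementation: the listing does not define how `RESET`, `GRADUAL_SET`, or `READ` behave, so the functions `reset` and `gradual_set`, the normalized conductance range, the discrete time steps, and all default parameter values below are hypothetical.

```python
import random

G_MAX = 1.0  # assumed: each cell is modeled as a conductance in [0, 1]


def reset():
    """RESET: return a cell to its lowest-conductance state (assumed to be 0)."""
    return 0.0


def gradual_set(g, step=0.1):
    """GRADUAL_SET: one bounded increment toward G_MAX (assumed update form)."""
    return min(G_MAX, g + step * (G_MAX - g))


def normalized_input(v_th, v_mem):
    """I_{i,x} = 1 - (V_th - V_mem) / V_th, as written in the pseudocode."""
    return 1.0 - (v_th - v_mem) / v_th


def run(task_duration, t_init, pre_spikes, v_mem_trace, reward_times,
        v_th=1.0, i_th_pos=0.7, i_th_neg=0.3, scale_const=0.5, seed=0):
    """Simulate one synapse; time advances in integer steps (an assumption)."""
    rng = random.Random(seed)
    # Initialization: random weights, cleared eligibility traces.
    w_pos, w_neg = rng.random(), rng.random()
    e_pos, e_neg = reset(), reset()

    for t in range(task_duration):
        i_x = normalized_input(v_th, v_mem_trace[t])

        # Eligibility trace accumulation on @Pre events after t_init.
        if t in pre_spikes and t > t_init:
            if i_x > i_th_pos:
                e_pos = gradual_set(e_pos)
            if i_x < i_th_neg:
                e_neg = gradual_set(e_neg)

        # Third factor: commit the traces to the weights when Reward is true.
        if t in reward_times:
            i_e_pos, i_e_neg = e_pos, e_neg  # READ, modeled as a plain read-out
            i_prog_pos = i_e_pos * scale_const
            i_prog_neg = i_e_neg * scale_const
            w_pos = gradual_set(w_pos, i_prog_pos)
            w_neg = gradual_set(w_neg, i_prog_neg)

    return w_pos, w_neg, e_pos, e_neg


if __name__ == "__main__":
    # Two presynaptic events while the neuron is close to threshold,
    # followed by a reward at the last step.
    print(run(task_duration=6, t_init=1, pre_spikes={2, 3},
              v_mem_trace=[0.9] * 6, reward_times={5}))
```

In this sketch, only the positive trace accumulates when `I_{i,x}` stays above `i_th_pos`, so a later reward raises `w_pos` and leaves `w_neg` unchanged. Whether traces are cleared after a reward is not stated in the listing, so the sketch leaves them as they are.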

### Key Observations

- The algorithm uses both positive and negative weights and eligibility traces, indicated by the "+" and "-" superscripts.

- The `GRADUAL_SET` function is used to update both eligibility traces and weights, suggesting a gradual adjustment mechanism.

- The third-factor component is triggered by a `Reward` signal, indicating a reinforcement learning aspect.

- The variable `I_{i,x}` is computed from `V_{i,th}` and `V_{i,mem}`, which most plausibly denote the firing threshold and the membrane potential of neuron `i`; the listing itself does not define them.
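
As a short worked step, the expression for `I_{i,x}` in the listing simplifies algebraically, assuming `V_{i,th}` is nonzero:

$$
I_{i,x} = 1 - \frac{V_{i,th} - V_{i,mem}}{V_{i,th}} = \frac{V_{i,mem}}{V_{i,th}}
$$

So `I_{i,x}` is the ratio of `V_{i,mem}` to `V_{i,th}`: it equals 0 when `V_{i,mem}` is 0 and 1 when `V_{i,mem}` equals `V_{i,th}`. The thresholds `I_{th}^+` and `I_{th}^-` are therefore compared against this normalized value rather than against a raw voltage.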

### Interpretation

The algorithm describes a three-factor learning rule in which eligibility traces record recent activity and a reward signal decides when those traces are committed to the weights. The name PCM-trace suggests that phase-change memory (PCM) devices store the eligibility traces, which is consistent with the `RESET`, `GRADUAL_SET`, and `READ` operations in the pseudocode. Traces accumulate only on `@Pre` events (presumably presynaptic spikes) after `t_{init}`, and the value of `I_{i,x}` relative to `I_{th}^+` and `I_{th}^-` selects whether the positive or the negative trace is incremented. In the listing, `Reward` acts as a gate rather than a graded quantity: when it is true, each trace is read, scaled by `scale_const`, and used to program the corresponding weight, so the size of the update follows the trace rather than the magnitude of the reward. Because `GRADUAL_SET` changes traces and weights incrementally, it may also contribute to the stability of learning.