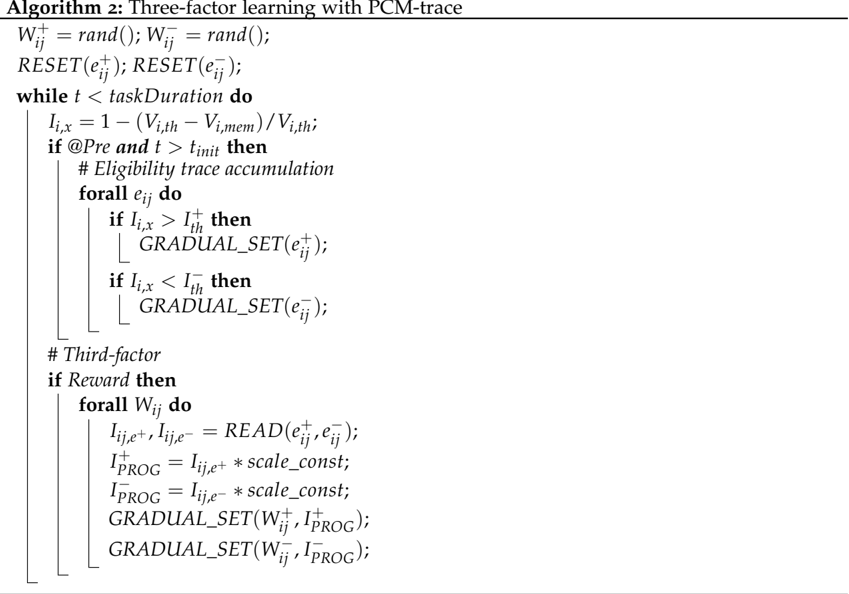

## Pseudocode: Three-factor learning with PCM-trace algorithm

### Overview

The image shows pseudocode for a three-factor learning algorithm that stores eligibility traces with the PCM-trace mechanism. It initializes weights and traces, then runs a time loop in which presynaptic events update the eligibility traces and a reward signal triggers weight updates scaled by those traces.

### Components

- **Variables**:

  - `W_ij^+ = rand()`: Random initialization of the positive weight component

  - `W_ij^- = rand()`: Random initialization of the negative weight component

  - `e_ij^+`, `e_ij^-`: Positive and negative eligibility traces for the synapse indexed by `ij`

  - `V_i,th`, `V_i,mem`: Threshold and membrane potential of neuron `i`

  - `I_i,x`: Normalized measure of how close `V_i,mem` is to `V_i,th`

  - `I_th^+`, `I_th^-`: Upper and lower thresholds that `I_i,x` is compared against

  - `scale_const`: Scaling constant applied to the trace readouts before the weight update

- **Control Structures**:

  - `while t < taskDuration`: Main iteration loop

  - `if @Pre and t > t_init`: Gate for trace accumulation, requiring a presynaptic event (`@Pre`) after time `t_init`

  - `if Reward`: Reward signal processing block

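- **Functions**:

  - `GRADUAL_SET(e_ij)`: Incremental update of a stored value; applied to eligibility traces here and, with an added amplitude argument, to weights in the reward block

  - `READ(e_ij^+, e_ij^-)`: Reads the current values of both eligibility traces

  - `RESET(e_ij)`: Returns an eligibility trace to its initial state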
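
The three operations listed under Functions can be pictured as methods on a single memory cell. The sketch below is a toy model, not the device behavior from the source: the class name `PcmCell`, the normalized `[0, 1]` state, the `step` size, and the `amplitude` parameter are all assumptions made for illustration, and device non-idealities are ignored.

```python
from dataclasses import dataclass


@dataclass
class PcmCell:
    """Toy stand-in for one memory cell; every number here is an assumption."""

    g: float = 0.0     # stored state, normalized to [0, 1]
    step: float = 0.1  # state gained per full-amplitude GRADUAL_SET pulse

    def gradual_set(self, amplitude: float = 1.0) -> None:
        # GRADUAL_SET: raise the state by a small increment, saturating at 1.
        self.g = min(1.0, self.g + self.step * amplitude)

    def read(self) -> float:
        # READ: return the stored state without changing it.
        return self.g

    def reset(self) -> None:
        # RESET: return the cell to its initial state.
        self.g = 0.0
```

Under these assumptions, repeated `gradual_set()` calls accumulate until the cell saturates, and `reset()` clears it, which matches how the pseudocode uses one primitive for both traces and weights.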

### Detailed Analysis

1. **Initialization Phase**:

   - Random initialization of positive/negative weights (`W_ij^+`, `W_ij^-`)

   - Reset of eligibility traces (`e_ij^+`, `e_ij^-`)

2. **Main Iteration Loop**:

   - Continues while time `t` is less than `taskDuration`

   - Calculates `I_i,x = 1 - (V_i,th - V_i,mem)/V_i,th`, which simplifies to `V_i,mem / V_i,th`

   - Accumulates eligibility traces only when both conditions hold:

     - The `@Pre` condition is met (a presynaptic event occurs)

     - Time `t` exceeds `t_init`

3. **Eligibility Trace Updates**:

   - For each eligibility trace `e_ij`:

     - If `I_i,x > I_th^+`: Update the positive trace with `GRADUAL_SET(e_ij^+)`

     - If `I_i,x < I_th^-`: Update the negative trace with `GRADUAL_SET(e_ij^-)`

4. **Third-Factor Processing**:

   - Runs when the reward signal is present (`if Reward`)

   - For each weight `W_ij`:

     - Reads eligibility traces: `I_ij,e^+, I_ij,e^- = READ(e_ij^+, e_ij^-)`

     - Calculates scaled traces:

       - `I_PRG^+ = I_ij,e^+ * scale_const`

       - `I_PRG^- = I_ij,e^- * scale_const`

     - Updates weights with scaled traces:

       - `GRADUAL_SET(W_ij^+, I_PRG^+)`

       - `GRADUAL_SET(W_ij^-, I_PRG^-)`
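
The four phases above can be sketched end to end in plain Python. This is a minimal illustration under stated assumptions, not the original implementation: `gradual_set` is modeled as a small saturating increment, `i` is taken as the postsynaptic index and `j` as the presynaptic index, and all default values (`v_th`, `i_th_pos`, `i_th_neg`, `scale_const`, the `0.1` step) are made up. The description does not say whether traces are reset after a reward, so this sketch leaves them untouched.

```python
import random


def gradual_set(state, amplitude=1.0, step=0.1):
    """Hypothetical model of one GRADUAL_SET pulse: a small, saturating increment."""
    return min(1.0, state + step * amplitude)


def run_task(pre, v_mem, reward, v_th=1.0, i_th_pos=0.8, i_th_neg=0.2,
             t_init=0, scale_const=0.5, seed=0):
    """Run the loop for n_post neurons (index i) and n_pre inputs (index j).

    pre[t][j]   -- True if input j has a presynaptic event at step t
    v_mem[t][i] -- membrane potential of neuron i at step t
    reward[t]   -- True if the reward signal is present at step t
    """
    rng = random.Random(seed)
    task_duration = len(reward)
    n_post, n_pre = len(v_mem[0]), len(pre[0])

    # 1. Initialization: random weights, traces in the reset state.
    w_pos = [[rng.random() for _ in range(n_pre)] for _ in range(n_post)]
    w_neg = [[rng.random() for _ in range(n_pre)] for _ in range(n_post)]
    e_pos = [[0.0] * n_pre for _ in range(n_post)]
    e_neg = [[0.0] * n_pre for _ in range(n_post)]

    t = 0
    while t < task_duration:  # 2. Main iteration loop
        for i in range(n_post):
            i_x = 1.0 - (v_th - v_mem[t][i]) / v_th
            for j in range(n_pre):
                if pre[t][j] and t > t_init:
                    # 3. Threshold comparison selects which trace to update.
                    if i_x > i_th_pos:
                        e_pos[i][j] = gradual_set(e_pos[i][j])
                    if i_x < i_th_neg:
                        e_neg[i][j] = gradual_set(e_neg[i][j])
        if reward[t]:  # 4. Third-factor processing
            for i in range(n_post):
                for j in range(n_pre):
                    # READ both traces, scale them, then program the weights.
                    i_prg_pos = e_pos[i][j] * scale_const
                    i_prg_neg = e_neg[i][j] * scale_const
                    w_pos[i][j] = gradual_set(w_pos[i][j], i_prg_pos)
                    w_neg[i][j] = gradual_set(w_neg[i][j], i_prg_neg)
        t += 1
    return {"w_pos": w_pos, "w_neg": w_neg, "e_pos": e_pos, "e_neg": e_neg}
```

Running the function twice with the same `seed`, once with a reward step and once without, shows the gating: traces accumulate in both runs, but only the run with a reward changes any weight.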

### Key Observations

- The algorithm combines eligibility trace learning with reward-modulated weight updates

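- The three factors, in the usual sense of three-factor learning, map onto the pseudocode as follows:

  1. Presynaptic activity: the `@Pre` condition

  2. Postsynaptic state: `I_i,x`, derived from `V_i,mem` and `V_i,th`

  3. A global modulatory signal: `Reward`

- Traces accumulate throughout the task, but weights change only when `Reward` is present, at which point the traces are read, scaled, and applied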

### Interpretation

This pseudocode represents a hybrid learning architecture that:

1. Maintains separate positive/negative eligibility traces for each synapse

2. Compares `I_i,x` against two thresholds to decide which trace, if any, to update

3. Implements reward-based weight adjustments through scaled trace values

4. Employs gradual updates for both eligibility traces and weights

The PCM in PCM-trace refers to phase-change memory, which is consistent with the device-style operations `GRADUAL_SET`, `READ`, and `RESET`. In the pseudocode:

- Positive traces (`e_ij^+`) are incremented when `I_i,x` exceeds `I_th^+`

- Negative traces (`e_ij^-`) are incremented when `I_i,x` falls below `I_th^-`

- On reward, `W_ij^+` and `W_ij^-` are each updated from their own scaled trace

The algorithm appears designed for reinforcement learning scenarios requiring:

- Temporal credit assignment (via eligibility traces)

- Reward-modulated weight updates

- Separate positive and negative weight components, each with its own eligibility trace