
## Line Chart: RM@K Accuracy vs. Number of Samples

### Overview

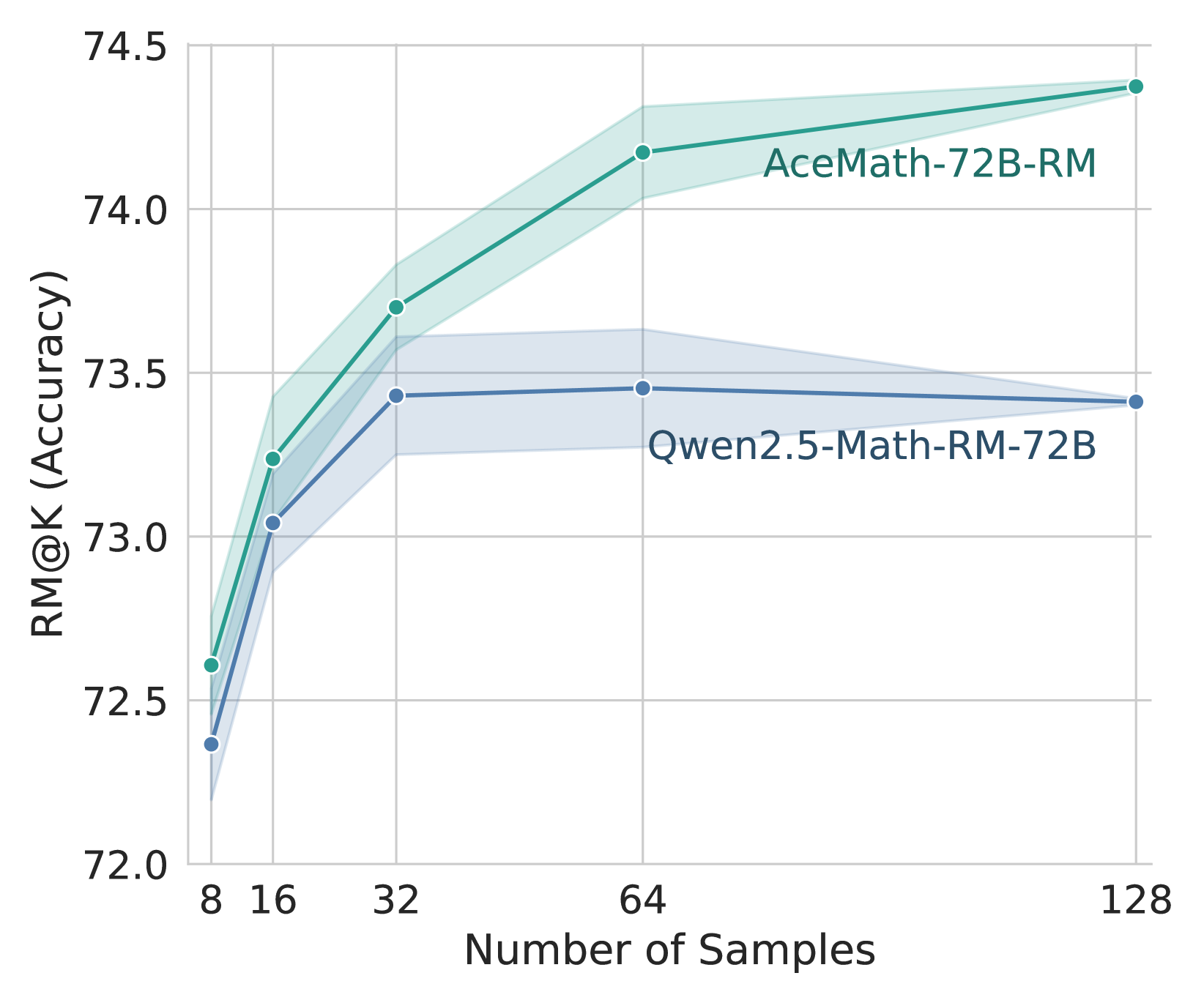

The image is a line chart comparing two reward models for mathematical reasoning, "AceMath-72B-RM" and "Qwen2.5-Math-RM-72B," as a function of the number of samples. It plots the RM@K (Accuracy) metric on the vertical axis against the Number of Samples on the horizontal axis. Each data series is drawn as a line with markers, accompanied by a shaded region indicating a confidence interval or variance.

### Components/Axes

* **Chart Type:** Line chart with confidence intervals.

* **Y-Axis (Vertical):**

    * **Label:** "RM@K (Accuracy)"

    * **Scale:** Linear, ranging from 72.0 to 74.5.

    * **Major Ticks:** 72.0, 72.5, 73.0, 73.5, 74.0, 74.5.

* **X-Axis (Horizontal):**

    * **Label:** "Number of Samples"

    * **Scale:** Appears to be logarithmic or categorical, with discrete markers.

    * **Data Points (Markers):** 8, 16, 32, 64, 128.

* **Legend:**

    * **Position:** Top-right quadrant of the chart area.

    * **Series 1:** "AceMath-72B-RM" - Represented by a teal/green line and markers.

    * **Series 2:** "Qwen2.5-Math-RM-72B" - Represented by a blue line and markers.

* **Data Series & Shaded Regions:**

    * Each line has a corresponding semi-transparent shaded area of the same color, likely representing a confidence interval (e.g., standard deviation or standard error) around the mean accuracy.
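The x-axis tick values double at each step, which is why a logarithmic axis and an evenly spaced categorical axis look the same here. A minimal sketch of that check, using only the tick values listed above (the variable names are illustrative):

```python
import math

# Tick values read from the x-axis of the chart.
sample_counts = [8, 16, 32, 64, 128]

# On a base-2 logarithmic axis, each tick sits at its exponent.
positions = [math.log2(n) for n in sample_counts]

# Equal differences between neighbours mean the ticks are evenly spaced.
spacings = [b - a for a, b in zip(positions, positions[1:])]

print(positions)
print(spacings)
```

Because the spacing is constant, the chart alone cannot distinguish a log-scaled axis from a categorical one.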

### Detailed Analysis

**Data Series: AceMath-72B-RM (Teal/Green Line)**

* **Trend:** The line rises at every step, with diminishing gains. The largest increase comes between 8 and 16 samples (~0.65 points); later doublings add less (~0.45, ~0.45, and ~0.25 points).

* **Data Points (Approximate):**

    * At 8 samples: ~72.6

    * At 16 samples: ~73.25

    * At 32 samples: ~73.7

    * At 64 samples: ~74.15

    * At 128 samples: ~74.4

* **Confidence Interval:** The shaded teal region widens as the number of samples increases, suggesting greater variance or uncertainty in the accuracy estimate at higher sample counts.

**Data Series: Qwen2.5-Math-RM-72B (Blue Line)**

* **Trend:** The line shows initial growth that plateaus. Accuracy increases from 8 to 32 samples, then remains relatively flat between 32 and 128 samples, with a very slight downward trend at the final point.

* **Data Points (Approximate):**

    * At 8 samples: ~72.4

    * At 16 samples: ~73.05

    * At 32 samples: ~73.45

    * At 64 samples: ~73.45

    * At 128 samples: ~73.4

* **Confidence Interval:** The shaded blue region is narrower than AceMath's and remains relatively constant in width across the sample range.

### Key Observations

1. **Performance Gap:** AceMath-72B-RM outperforms Qwen2.5-Math-RM-72B at every measured sample count. The gap is roughly 0.2 points through 16 samples, then widens to about 0.7 at 64 samples and about 1.0 at 128.

2. **Scaling Behavior:** The two models exhibit fundamentally different scaling behaviors. AceMath continues to benefit from more samples (positive slope throughout), while Qwen's performance saturates after 32 samples (slope approaches zero).

3. **Uncertainty:** The confidence interval for AceMath is wider, especially at higher sample counts (64, 128), indicating its performance metric may be more variable or less certain in those conditions compared to Qwen's more stable, but lower, performance.

4. **Initial Conditions:** At the lowest sample count (8), the models start relatively close in accuracy (~0.2 difference). The gap stays between about 0.2 and 0.25 through 32 samples and opens up only from 64 samples onward.
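The gap and scaling observations can be checked directly against the approximate values read from the chart. The sketch below does that arithmetic; the helper names `gaps` and `gains_per_doubling` are illustrative, and the inputs are the visual estimates listed under Detailed Analysis, not exact measurements.

```python
# Approximate values read from the chart (visual estimates, not exact data).
sample_counts = [8, 16, 32, 64, 128]
acemath = [72.6, 73.25, 73.7, 74.15, 74.4]
qwen = [72.4, 73.05, 73.45, 73.45, 73.4]


def gaps(a, b):
    """Pointwise difference a - b, rounded to two decimals."""
    return [round(x - y, 2) for x, y in zip(a, b)]


def gains_per_doubling(values):
    """Change in accuracy each time the sample count doubles."""
    return [round(b - a, 2) for a, b in zip(values, values[1:])]


print("gap:", dict(zip(sample_counts, gaps(acemath, qwen))))
print("AceMath gains:", gains_per_doubling(acemath))
print("Qwen gains:", gains_per_doubling(qwen))
```

With these estimates the gap is about 0.2 at 8 and 16 samples and reaches about 1.0 at 128, while Qwen's gain per doubling falls to zero or slightly below after 32 samples.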

### Interpretation

This chart shows **differential scaling efficiency** between two reward models for mathematical reasoning. The data suggests that AceMath-72B-RM makes better use of additional inference-time compute (more samples per problem, presumably for best-of-K selection given the RM@K metric) to improve final accuracy. Its upward trajectory implies it has not yet reached its performance ceiling within the tested range.
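The chart does not define RM@K. A common reading is best-of-K selection: for each problem, the reward model scores K candidate solutions, the top-scored one is kept, and accuracy is the share of problems where that pick is correct. The sketch below follows that assumed definition; the function `rm_at_k`, the `(reward, is_correct)` data layout, and the toy values are all hypothetical and not taken from the chart.

```python
from typing import List, Tuple

# Hypothetical layout: one list of (reward_score, is_correct) pairs per problem.
Candidate = Tuple[float, bool]


def rm_at_k(problems: List[List[Candidate]], k: int) -> float:
    """Percentage of problems whose top-scored candidate among the first k is correct.

    Assumes every problem has at least one candidate.
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    hits = 0
    for candidates in problems:
        pool = candidates[:k]
        best = max(pool, key=lambda c: c[0])  # highest reward wins
        hits += int(best[1])
    return 100.0 * hits / len(problems)


# Toy data for illustration only.
toy = [
    [(0.2, False), (0.9, True), (0.5, False)],
    [(0.8, False), (0.3, True), (0.95, True)],
]

for k in (1, 2, 3):
    print(k, rm_at_k(toy, k))
```

Under this definition a larger K helps only if the reward model ranks a correct candidate above the incorrect ones it has already seen, which is one way a curve can flatten even as more samples are drawn.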

In contrast, the Qwen2.5-Math-RM-72B model hits a performance plateau relatively early. Providing it with more than 32 samples yields negligible benefit, which suggests a limit on how reliably it ranks a correct solution first as the candidate pool grows, a limit that more attempts alone do not overcome.

The wider confidence interval for AceMath at high sample counts is an important nuance. It suggests that while its *average* performance is superior, the *consistency* of that performance across different runs or subsets of data may be lower than Qwen's. This could be a factor in model selection for applications where predictability is as important as peak performance.
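The chart does not say how the shaded bands were computed. One plausible construction, assumed here for illustration, is the mean accuracy plus or minus one sample standard deviation across repeated runs. The function `band` and the toy numbers below are hypothetical and are not read from the chart.

```python
import statistics


def band(run_accuracies):
    """Return (lower, mean, upper) as mean -/+ one sample standard deviation."""
    mean = statistics.mean(run_accuracies)
    std = statistics.stdev(run_accuracies)  # needs at least two runs
    return mean - std, mean, mean + std


# Toy values: same mean, different spread across runs.
narrow_runs = [1.0, 2.0, 3.0]
wide_runs = [0.0, 2.0, 4.0]

print(band(narrow_runs))
print(band(wide_runs))
```

Two series can share the same mean yet have bands of different widths, which is the distinction between average performance and run-to-run consistency drawn above.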

**In summary, on the RM@K metric the chart indicates that AceMath-72B-RM is the higher-performing model and scales better with sample count, while Qwen2.5-Math-RM-72B gives more predictable, stable results at a lower accuracy level.**