TECHNICAL ASSET FINGERPRINT

8240fd98a09275b0e587aa37

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

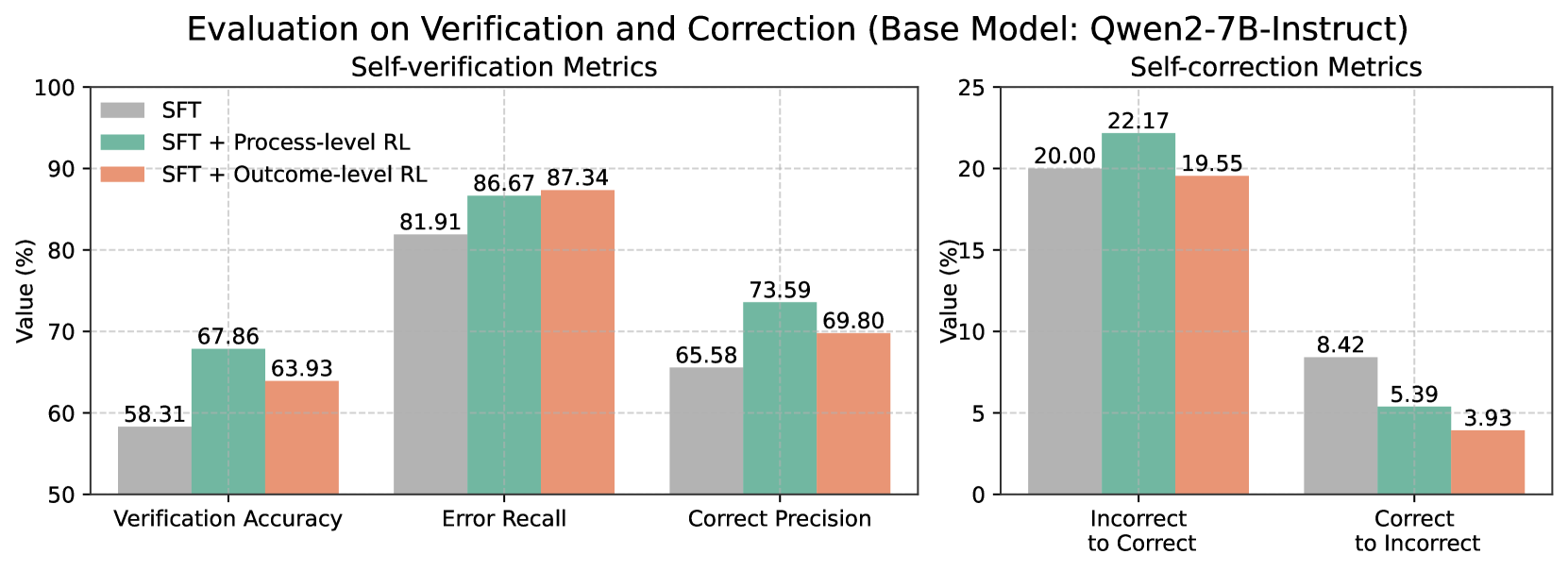

## Bar Chart: Evaluation on Verification and Correction (Base Model: Qwen2-7B-Instruct)

### Overview

The image presents two bar charts comparing the performance of different models (SFT, SFT + Process-level RL, and SFT + Outcome-level RL) on self-verification and self-correction metrics. The left chart focuses on self-verification, showing Verification Accuracy, Error Recall, and Correct Precision. The right chart focuses on self-correction, showing Incorrect to Correct and Correct to Incorrect ratios.

### Components/Axes

**Overall Title:** Evaluation on Verification and Correction (Base Model: Qwen2-7B-Instruct)

**Left Chart:**

* **Title:** Self-verification Metrics

* **Y-axis:** Value (%)

* Scale: 50 to 100, incrementing by 10.

* **X-axis:**

* Verification Accuracy

* Error Recall

* Correct Precision

* **Legend:** Located in the top-left corner.

* SFT (Gray)

* SFT + Process-level RL (Teal)

* SFT + Outcome-level RL (Salmon)

**Right Chart:**

* **Title:** Self-correction Metrics

* **Y-axis:** Value (%)

* Scale: 0 to 25, incrementing by 5.

* **X-axis:**

* Incorrect to Correct

* Correct to Incorrect

* **Legend:** (Same as left chart, located in the top-left corner of the left chart)

* SFT (Gray)

* SFT + Process-level RL (Teal)

* SFT + Outcome-level RL (Salmon)

### Detailed Analysis

**Left Chart (Self-verification Metrics):**

* **Verification Accuracy:**

* SFT (Gray): 58.31%

* SFT + Process-level RL (Teal): 67.86%

* SFT + Outcome-level RL (Salmon): 63.93%

* Trend: SFT + Process-level RL performs best, followed by SFT + Outcome-level RL, and then SFT.

* **Error Recall:**

* SFT (Gray): 81.91%

* SFT + Process-level RL (Teal): 86.67%

* SFT + Outcome-level RL (Salmon): 87.34%

* Trend: SFT + Outcome-level RL performs best, closely followed by SFT + Process-level RL, and then SFT.

* **Correct Precision:**

* SFT (Gray): 65.58%

* SFT + Process-level RL (Teal): 73.59%

* SFT + Outcome-level RL (Salmon): 69.80%

* Trend: SFT + Process-level RL performs best, followed by SFT + Outcome-level RL, and then SFT.

**Right Chart (Self-correction Metrics):**

* **Incorrect to Correct:**

* SFT (Gray): 20.00%

* SFT + Process-level RL (Teal): 22.17%

* SFT + Outcome-level RL (Salmon): 19.55%

* Trend: SFT + Process-level RL performs best, followed by SFT, and then SFT + Outcome-level RL.

* **Correct to Incorrect:**

* SFT (Gray): 8.42%

* SFT + Process-level RL (Teal): 5.39%

* SFT + Outcome-level RL (Salmon): 3.93%

* Trend: Lower is better for this metric. SFT has the highest rate, followed by SFT + Process-level RL; SFT + Outcome-level RL has the lowest.
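The per-metric trends listed above can be reproduced with a short Python sketch. The numbers are transcribed from the chart description; the `RESULTS` and `LOWER_IS_BETTER` names and the `rank_methods` helper are illustrative, and treating "Correct to Incorrect" as the only lower-is-better metric is an assumption drawn from how the metric is described.

```python
# Values transcribed from the chart description (percentages).
RESULTS = {
    "Verification Accuracy": {
        "SFT": 58.31,
        "SFT + Process-level RL": 67.86,
        "SFT + Outcome-level RL": 63.93,
    },
    "Error Recall": {
        "SFT": 81.91,
        "SFT + Process-level RL": 86.67,
        "SFT + Outcome-level RL": 87.34,
    },
    "Correct Precision": {
        "SFT": 65.58,
        "SFT + Process-level RL": 73.59,
        "SFT + Outcome-level RL": 69.80,
    },
    "Incorrect to Correct": {
        "SFT": 20.00,
        "SFT + Process-level RL": 22.17,
        "SFT + Outcome-level RL": 19.55,
    },
    "Correct to Incorrect": {
        "SFT": 8.42,
        "SFT + Process-level RL": 5.39,
        "SFT + Outcome-level RL": 3.93,
    },
}

# Assumption: a smaller "Correct to Incorrect" rate is the desirable direction.
LOWER_IS_BETTER = {"Correct to Incorrect"}


def rank_methods(metric):
    """Return the method names for one metric, ordered from best to worst."""
    scores = RESULTS[metric]
    best_is_highest = metric not in LOWER_IS_BETTER
    return sorted(scores, key=scores.get, reverse=best_is_highest)


for metric in RESULTS:
    print(f"{metric}: {' > '.join(rank_methods(metric))}")
```

Keeping the direction of each metric in one place avoids reading the shortest bar in the right panel as the worst result.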

### Key Observations

* On all three self-verification metrics, both SFT + Process-level RL and SFT + Outcome-level RL outperform the SFT baseline.

* For self-correction metrics, SFT + Process-level RL shows the highest rate of correcting incorrect answers.

* SFT + Outcome-level RL has the lowest rate of correct answers becoming incorrect.

### Interpretation

The charts suggest that adding reinforcement learning (RL) on top of SFT improves the self-verification of the Qwen2-7B-Instruct model: both RL variants beat the SFT baseline on all three verification metrics. For self-correction the picture is mixed. Process-level RL is the most effective at correcting mistakes (22.17% Incorrect to Correct), while Outcome-level RL is best at preserving already correct answers (3.93% Correct to Incorrect) but falls slightly below SFT on Incorrect to Correct (19.55% vs. 20.00%). SFT is therefore the weakest configuration on four of the five metrics rather than on all of them.

EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite

INTEL_VERIFIED

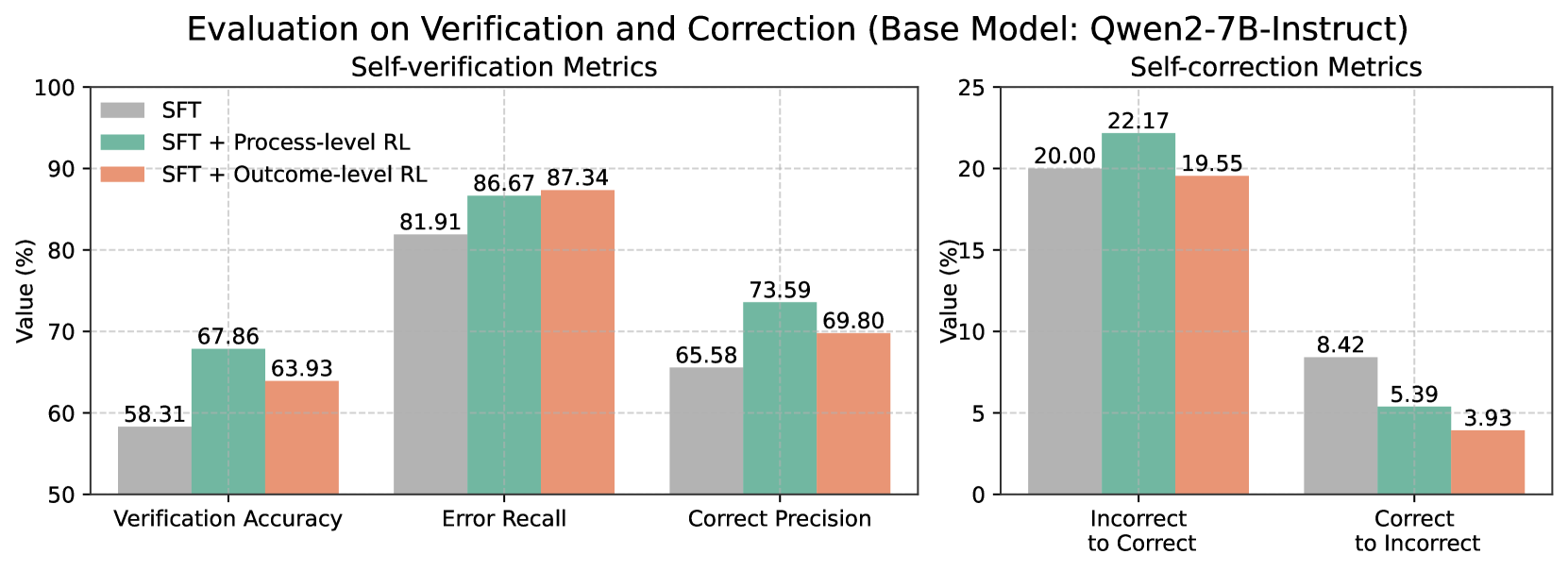

## Bar Chart: Evaluation on Verification and Correction (Base Model: Qwen2-7B-Instruct)

### Overview

This image contains two bar charts side-by-side, presenting evaluation metrics for a base model named "Qwen2-7B-Instruct". The left chart displays "Self-verification Metrics", and the right chart displays "Self-correction Metrics". Both charts compare three different configurations: "SFT", "SFT + Process-level RL", and "SFT + Outcome-level RL". The y-axis for both charts represents "Value (%)".

### Components/Axes

**Overall Title:** Evaluation on Verification and Correction (Base Model: Qwen2-7B-Instruct)

**Left Chart: Self-verification Metrics**

* **Title:** Self-verification Metrics

* **Y-axis Title:** Value (%)

* **Y-axis Scale:** 50 to 100, with major ticks at 50, 60, 70, 80, 90, 100.

* **X-axis Categories:** Verification Accuracy, Error Recall, Correct Precision.

* **Legend:** Located in the top-left quadrant of the left chart.

* **SFT:** Represented by a light grey rectangle.

* **SFT + Process-level RL:** Represented by a teal/mint green rectangle.

* **SFT + Outcome-level RL:** Represented by a coral/light orange rectangle.

**Right Chart: Self-correction Metrics**

* **Title:** Self-correction Metrics

* **Y-axis Title:** Value (%)

* **Y-axis Scale:** 0 to 25, with major ticks at 0, 5, 10, 15, 20, 25.

* **X-axis Categories:** Incorrect to Correct, Correct to Incorrect.

* **Legend:** The legend from the left chart is applicable to both charts.

### Detailed Analysis

**Left Chart: Self-verification Metrics**

* **Verification Accuracy:**

* SFT (Grey): 58.31%

* SFT + Process-level RL (Teal): 67.86%

* SFT + Outcome-level RL (Coral): 63.93%

* **Trend:** SFT + Process-level RL shows the highest Verification Accuracy, followed by SFT + Outcome-level RL, and then SFT.

* **Error Recall:**

* SFT (Grey): 81.91%

* SFT + Process-level RL (Teal): 86.67%

* SFT + Outcome-level RL (Coral): 87.34%

* **Trend:** SFT + Outcome-level RL shows the highest Error Recall, closely followed by SFT + Process-level RL, and then SFT.

* **Correct Precision:**

* SFT (Grey): 65.58%

* SFT + Process-level RL (Teal): 73.59%

* SFT + Outcome-level RL (Coral): 69.80%

* **Trend:** SFT + Process-level RL shows the highest Correct Precision, followed by SFT + Outcome-level RL, and then SFT.

**Right Chart: Self-correction Metrics**

* **Incorrect to Correct:**

* SFT (Grey): 20.00%

* SFT + Process-level RL (Teal): 22.17%

* SFT + Outcome-level RL (Coral): 19.55%

* **Trend:** SFT + Process-level RL shows the highest rate of correcting incorrect predictions, followed by SFT, and then SFT + Outcome-level RL.

* **Correct to Incorrect:**

* SFT (Grey): 8.42%

* SFT + Process-level RL (Teal): 5.39%

* SFT + Outcome-level RL (Coral): 3.93%

* **Trend:** SFT shows the highest rate of incorrectly correcting correct predictions, while SFT + Outcome-level RL shows the lowest rate. The SFT + Process-level RL is in between.

### Key Observations

* **Self-verification:** The "SFT + Process-level RL" configuration generally performs best across "Verification Accuracy" and "Correct Precision". "SFT + Outcome-level RL" performs best for "Error Recall". All RL-enhanced configurations ("SFT + Process-level RL" and "SFT + Outcome-level RL") outperform the base "SFT" model in all self-verification metrics.

* **Self-correction:** For "Incorrect to Correct", "SFT + Process-level RL" is the best. For "Correct to Incorrect", "SFT + Outcome-level RL" is the best, indicating it is least likely to make a correct prediction incorrect.

* **Trade-offs:** There appears to be a trade-off between "Incorrect to Correct" and "Correct to Incorrect" rates. While "SFT + Process-level RL" excels at correcting errors, it also has a higher rate of making correct predictions incorrect compared to "SFT + Outcome-level RL". Conversely, "SFT + Outcome-level RL" is better at preserving correct predictions but is slightly less effective at correcting incorrect ones compared to "SFT + Process-level RL".

### Interpretation

The data suggests that applying Reinforcement Learning (RL), specifically "Process-level RL" and "Outcome-level RL", on top of "SFT" improves self-verification for models built on the "Qwen2-7B-Instruct" base model, and improves most of the self-correction metrics.

The "Self-verification Metrics" indicate that both RL enhancements raise "Verification Accuracy", "Error Recall", and "Correct Precision" relative to "SFT". The "SFT + Process-level RL" configuration appears to be the strongest overall for self-verification, particularly in accuracy and precision.

The "Self-correction Metrics" reveal nuanced performance. "SFT + Process-level RL" is most effective at turning incorrect predictions into correct ones. However, "SFT + Outcome-level RL" demonstrates a superior ability to avoid degrading correct predictions into incorrect ones. This suggests that "Outcome-level RL" might be more conservative or robust in maintaining correctness, while "Process-level RL" might be more aggressive in error correction, potentially at the cost of introducing new errors.

In essence, the choice between "SFT + Process-level RL" and "SFT + Outcome-level RL" might depend on the specific priorities of the application. If the primary goal is to maximize the correction of errors, "SFT + Process-level RL" is favored. If the priority is to minimize the degradation of correct predictions, "SFT + Outcome-level RL" is the better choice. Both RL approaches improve on the baseline "SFT" model on most metrics; the one exception is "Incorrect to Correct", where "SFT + Outcome-level RL" (19.55%) is slightly below "SFT" (20.00%).
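The trade-off between the two RL variants can be made concrete with a rough Python sketch. It rests on assumptions that the chart does not state: that "Incorrect to Correct" is measured over initially incorrect answers, that "Correct to Incorrect" is measured over initially correct answers, and that a single correction pass is applied. The `initial_accuracy` parameter and both helper functions are hypothetical.

```python
# (Incorrect to Correct %, Correct to Incorrect %) transcribed from the chart.
RATES = {
    "SFT": (20.00, 8.42),
    "SFT + Process-level RL": (22.17, 5.39),
    "SFT + Outcome-level RL": (19.55, 3.93),
}


def net_accuracy_change(method, initial_accuracy):
    """Expected accuracy change in percentage points after one correction pass.

    Assumption: the first rate applies to the initially incorrect share and
    the second to the initially correct share; initial_accuracy is in [0, 1].
    """
    fixed_rate, broken_rate = RATES[method]
    gained = (1.0 - initial_accuracy) * fixed_rate
    lost = initial_accuracy * broken_rate
    return gained - lost


def break_even_accuracy(method_a, method_b):
    """Initial accuracy at which both methods yield the same net change.

    Assumes method_a has both the higher fix rate and the higher break rate,
    so the denominator is positive.
    """
    fixed_a, broken_a = RATES[method_a]
    fixed_b, broken_b = RATES[method_b]
    gain_gap = fixed_a - fixed_b
    loss_gap = broken_a - broken_b
    return gain_gap / (gain_gap + loss_gap)


threshold = break_even_accuracy("SFT + Process-level RL", "SFT + Outcome-level RL")
print(f"Break-even initial accuracy: {threshold:.3f}")
for accuracy in (0.5, 0.8):
    for method in RATES:
        change = net_accuracy_change(method, accuracy)
        print(f"initial accuracy {accuracy:.1f}, {method}: {change:+.2f} points")
```

Under these assumptions, Process-level RL yields the larger net gain when initial accuracy is below roughly 64%, and Outcome-level RL yields the larger net gain above that point.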

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Bar Charts: Evaluation on Verification and Correction

### Overview

The image presents two sets of bar charts comparing the performance of a base model (Qwen2-7B-Instruct) with different training approaches: Supervised Fine-Tuning (SFT), SFT + Process-level Reinforcement Learning (RL), and SFT + Outcome-level RL. The left chart focuses on "Self-verification Metrics," while the right chart focuses on "Self-correction Metrics." Both charts display values as percentages.

### Components/Axes

* **Title:** "Evaluation on Verification and Correction (Base Model: Qwen2-7B-Instruct)" - positioned at the top-center of the image.

* **Left Chart Title:** "Self-verification Metrics" - positioned above the left chart.

* **Right Chart Title:** "Self-correction Metrics" - positioned above the right chart.

* **Y-axis Label (Both Charts):** "Value (%)" - positioned on the left side of both charts. The scale ranges from 50 to 100 for the left chart and from 0 to 25 for the right chart.

* **X-axis Labels (Left Chart):** "Verification Accuracy", "Error Recall", "Correct Precision" - positioned along the bottom of the left chart.

* **X-axis Labels (Right Chart):** "Incorrect to Correct", "Correct to Incorrect" - positioned along the bottom of the right chart.

* **Legend (Top-Left of Left Chart):**

* SFT (Gray)

* SFT + Process-level RL (Teal)

* SFT + Outcome-level RL (Salmon)

### Detailed Analysis or Content Details

**Left Chart: Self-verification Metrics**

* **Verification Accuracy:**

* SFT: Approximately 58.31%

* SFT + Process-level RL: Approximately 67.86%

* SFT + Outcome-level RL: Approximately 63.93%

* Trend: The SFT + Process-level RL shows the highest value, indicating improved verification accuracy.

* **Error Recall:**

* SFT: Approximately 81.91%

* SFT + Process-level RL: Approximately 86.67%

* SFT + Outcome-level RL: Approximately 87.34%

* Trend: SFT + Outcome-level RL shows the highest value, indicating improved error recall.

* **Correct Precision:**

* SFT: Approximately 65.58%

* SFT + Process-level RL: Approximately 73.59%

* SFT + Outcome-level RL: Approximately 69.80%

* Trend: SFT + Process-level RL shows the highest value, indicating improved correct precision.

**Right Chart: Self-correction Metrics**

* **Incorrect to Correct:**

* SFT: Approximately 20.00%

* SFT + Process-level RL: Approximately 22.17%

* SFT + Outcome-level RL: Approximately 19.55%

* Trend: SFT + Process-level RL shows the highest value, indicating improved ability to correct incorrect statements.

* **Correct to Incorrect:**

* SFT: Approximately 8.42%

* SFT + Process-level RL: Approximately 5.39%

* SFT + Outcome-level RL: Approximately 3.93%

* Trend: SFT + Outcome-level RL shows the lowest value, indicating improved ability to avoid incorrectly altering correct statements.

### Key Observations

* In the Self-verification Metrics chart, SFT + Process-level RL consistently performs well in Verification Accuracy and Correct Precision, while SFT + Outcome-level RL excels in Error Recall.

* In the Self-correction Metrics chart, SFT + Process-level RL shows the highest value for Incorrect to Correct, while SFT + Outcome-level RL shows the lowest value for Correct to Incorrect.

* The addition of Reinforcement Learning (both process and outcome level) improves on the SFT baseline in every case except one: SFT + Outcome-level RL is slightly below SFT on Incorrect to Correct (19.55% vs. 20.00%).
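Whether RL improves on SFT for every metric can be checked directly from the reported values. The following Python sketch lists each (method, metric) pair where an RL variant does not beat the baseline; the variable names and the `regressions` helper are illustrative, and "Correct to Incorrect" is assumed to be the only metric where lower is better.

```python
# SFT baseline and RL variants, transcribed from the chart (percentages).
BASELINE = {
    "Verification Accuracy": 58.31,
    "Error Recall": 81.91,
    "Correct Precision": 65.58,
    "Incorrect to Correct": 20.00,
    "Correct to Incorrect": 8.42,
}
RL_VARIANTS = {
    "SFT + Process-level RL": {
        "Verification Accuracy": 67.86,
        "Error Recall": 86.67,
        "Correct Precision": 73.59,
        "Incorrect to Correct": 22.17,
        "Correct to Incorrect": 5.39,
    },
    "SFT + Outcome-level RL": {
        "Verification Accuracy": 63.93,
        "Error Recall": 87.34,
        "Correct Precision": 69.80,
        "Incorrect to Correct": 19.55,
        "Correct to Incorrect": 3.93,
    },
}

# Assumption: only "Correct to Incorrect" is a lower-is-better metric.
LOWER_IS_BETTER = {"Correct to Incorrect"}


def regressions():
    """Return (method, metric, delta) for every pair that fails to beat SFT."""
    found = []
    for method, scores in RL_VARIANTS.items():
        for metric, value in scores.items():
            delta = value - BASELINE[metric]
            improved = delta < 0 if metric in LOWER_IS_BETTER else delta > 0
            if not improved:
                found.append((method, metric, round(delta, 2)))
    return found


for method, metric, delta in regressions():
    print(f"{method} does not improve on SFT for {metric}: {delta:+.2f} points")
```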

### Interpretation

The data suggests that incorporating Reinforcement Learning into the training of the Qwen2-7B-Instruct model enhances its self-verification and most aspects of its self-correction. The choice between Process-level RL and Outcome-level RL appears to depend on the metric being optimized. Process-level RL is more effective at improving Verification Accuracy, Correct Precision, and the Incorrect to Correct rate, while Outcome-level RL is better at Error Recall and at minimizing the introduction of errors during correction (Correct to Incorrect). The gain is not uniform: Outcome-level RL is marginally below SFT on Incorrect to Correct. The model's ability to both verify its own outputs and correct errors is crucial for building reliable and trustworthy AI systems.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Bar Chart: Evaluation on Verification and Correction (Base Model: Qwen2-7B-Instruct)

### Overview

The image displays a comparative bar chart evaluating the performance of three different training methods applied to the base language model "Qwen2-7B-Instruct". The evaluation is split into two distinct metric groups: "Self-verification Metrics" (left panel) and "Self-correction Metrics" (right panel). The chart compares the performance of Supervised Fine-Tuning (SFT) alone against SFT combined with two types of Reinforcement Learning (RL): Process-level RL and Outcome-level RL.

### Components/Axes

* **Main Title:** "Evaluation on Verification and Correction (Base Model: Qwen2-7B-Instruct)"

* **Left Panel Title:** "Self-verification Metrics"

* **Right Panel Title:** "Self-correction Metrics"

* **Y-Axis (Both Panels):** Labeled "Value (%)". The left panel's axis ranges from 50 to 100 in increments of 10. The right panel's axis ranges from 0 to 25 in increments of 5.

* **X-Axis (Left Panel):** Three metric categories: "Verification Accuracy", "Error Recall", and "Correct Precision".

* **X-Axis (Right Panel):** Two metric categories: "Incorrect to Correct" and "Correct to Incorrect".

* **Legend (Top-Left of Left Panel):** A color-coded legend identifies the three training methods:

* **Grey Bar:** SFT

* **Teal/Green Bar:** SFT + Process-level RL

* **Salmon/Orange Bar:** SFT + Outcome-level RL

### Detailed Analysis

**Self-verification Metrics (Left Panel):**

This panel shows the model's ability to verify its own outputs. For all three metrics, the RL-enhanced methods outperform the SFT baseline.

1. **Verification Accuracy:**

* SFT (Grey): 58.31%

* SFT + Process-level RL (Teal): 67.86%

* SFT + Outcome-level RL (Salmon): 63.93%

* *Trend:* Both RL methods improve accuracy, with Process-level RL showing the largest gain.

2. **Error Recall:**

* SFT (Grey): 81.91%

* SFT + Process-level RL (Teal): 86.67%

* SFT + Outcome-level RL (Salmon): 87.34%

* *Trend:* All methods score highly. The RL methods provide a modest improvement over SFT, with Outcome-level RL performing slightly better.

3. **Correct Precision:**

* SFT (Grey): 65.58%

* SFT + Process-level RL (Teal): 73.59%

* SFT + Outcome-level RL (Salmon): 69.80%

* *Trend:* RL methods improve precision. Process-level RL shows the most significant improvement.

**Self-correction Metrics (Right Panel):**

This panel measures the model's ability to correct its own outputs. The trends here are more varied.

1. **Incorrect to Correct:**

* SFT (Grey): 20.00%

* SFT + Process-level RL (Teal): 22.17%

* SFT + Outcome-level RL (Salmon): 19.55%

* *Trend:* Process-level RL improves the rate of correcting incorrect answers. Outcome-level RL performs slightly worse than the SFT baseline.

2. **Correct to Incorrect:**

* SFT (Grey): 8.42%

* SFT + Process-level RL (Teal): 5.39%

* SFT + Outcome-level RL (Salmon): 3.93%

* *Trend:* This is a negative metric (lower is better). Both RL methods significantly reduce the rate of corrupting correct answers, with Outcome-level RL showing the best (lowest) result.

### Key Observations

* **Consistent Improvement in Verification:** All three verification metrics (Accuracy, Error Recall, Correct Precision) show improvement when RL is applied to the SFT baseline.

* **Divergent Impact on Correction:** The effect of RL on correction is metric-dependent. Process-level RL improves the "Incorrect to Correct" rate, while both RL methods excel at reducing the "Correct to Incorrect" error rate.

* **Process-level vs. Outcome-level RL:** Process-level RL generally provides the largest boost to verification metrics and the "Incorrect to Correct" correction metric. Outcome-level RL is particularly effective at minimizing the "Correct to Incorrect" error.

* **Scale Difference:** The values for self-verification metrics (roughly 58-87%) are substantially higher than those for self-correction metrics (roughly 4-22%). The two panels measure different quantities on different axes, so bar heights are not directly comparable across panels.

### Interpretation

The data suggests that integrating Reinforcement Learning (RL) with Supervised Fine-Tuning (SFT) enhances the self-evaluation capabilities of the Qwen2-7B-Instruct model. The core finding is that RL training, whether focused on the process or the outcome, makes the model more reliable at identifying errors (higher Verification Accuracy and Error Recall) and more precise in its judgments (higher Correct Precision).
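The chart does not define the three verification metrics. The Python sketch below shows one plausible reading and is an assumption throughout: Verification Accuracy as the share of verification judgments that are right, Error Recall as the share of truly incorrect answers that the verifier flags, and Correct Precision as the share of answers judged correct that really are correct. The counts in the example are hypothetical and unrelated to the reported results.

```python
from dataclasses import dataclass


@dataclass
class VerificationCounts:
    """Confusion counts for a verifier judging answers (hypothetical layout)."""

    wrong_flagged_wrong: int    # answer incorrect, verifier says incorrect
    wrong_judged_correct: int   # answer incorrect, verifier says correct
    right_flagged_wrong: int    # answer correct, verifier says incorrect
    right_judged_correct: int   # answer correct, verifier says correct


def verification_accuracy(c):
    """Assumed definition: fraction of all judgments that match the truth."""
    total = (c.wrong_flagged_wrong + c.wrong_judged_correct
             + c.right_flagged_wrong + c.right_judged_correct)
    return (c.wrong_flagged_wrong + c.right_judged_correct) / total


def error_recall(c):
    """Assumed definition: fraction of incorrect answers flagged as incorrect."""
    return c.wrong_flagged_wrong / (c.wrong_flagged_wrong + c.wrong_judged_correct)


def correct_precision(c):
    """Assumed definition: fraction of 'correct' verdicts that are truly correct."""
    return c.right_judged_correct / (c.right_judged_correct + c.wrong_judged_correct)


# Hypothetical counts, for illustration only.
counts = VerificationCounts(
    wrong_flagged_wrong=40,
    wrong_judged_correct=10,
    right_flagged_wrong=20,
    right_judged_correct=30,
)
print(f"Verification Accuracy: {verification_accuracy(counts):.2%}")
print(f"Error Recall: {error_recall(counts):.2%}")
print(f"Correct Precision: {correct_precision(counts):.2%}")
```

Under this reading, a verifier that flags most answers as incorrect can combine high Error Recall with modest Verification Accuracy, which is at least consistent with the pattern of values in the left panel.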

The correction metrics reveal a more nuanced picture. The model's ability to fix its own mistakes ("Incorrect to Correct") sees a moderate boost primarily from Process-level RL. More importantly, both RL methods drastically reduce the harmful behavior of changing correct answers into incorrect ones ("Correct to Incorrect"). This indicates that RL training instills a more conservative and confident correction behavior, making the model less likely to "over-correct" and introduce new errors.

In summary, the chart demonstrates that RL-augmented training produces a model that is not only better at judging the correctness of its outputs but also safer and more reliable when attempting to correct them, with a notable reduction in harmful interventions. The choice between Process-level and Outcome-level RL may depend on whether the primary goal is improving active correction or minimizing correction-induced errors.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Charts: Self-verification and Self-correction Metrics (Base Model: Qwen2-7B-Instruct)

### Overview

The image contains two side-by-side bar charts comparing performance metrics for a base model (Qwen2-7B-Instruct) under three training conditions:

1. **SFT** (Supervised Fine-Tuning)

2. **SFT + Process-level RL** (Reinforcement Learning)

3. **SFT + Outcome-level RL**

The left chart focuses on **Self-verification Metrics** (Verification Accuracy, Error Recall, Correct Precision), while the right chart evaluates **Self-correction Metrics** (Incorrect to Correct, Correct to Incorrect). All values are expressed as percentages.

---

### Components/Axes

#### Left Chart (Self-verification Metrics)

- **X-axis**:

- Verification Accuracy

- Error Recall

- Correct Precision

- **Y-axis**: Value (%) from 50 to 100.

- **Legend**:

- **Gray**: SFT

- **Teal**: SFT + Process-level RL

- **Orange**: SFT + Outcome-level RL

#### Right Chart (Self-correction Metrics)

- **X-axis**:

- Incorrect to Correct

- Correct to Incorrect

- **Y-axis**: Value (%) from 0 to 25.

- **Legend**: Same color coding as the left chart.

---

### Detailed Analysis

#### Left Chart (Self-verification Metrics)

1. **Verification Accuracy**:

- SFT: 58.31%

- SFT + Process-level RL: 67.86%

- SFT + Outcome-level RL: 63.93%

2. **Error Recall**:

- SFT: 81.91%

- SFT + Process-level RL: 86.67%

- SFT + Outcome-level RL: 87.34%

3. **Correct Precision**:

- SFT: 65.58%

- SFT + Process-level RL: 73.59%

- SFT + Outcome-level RL: 69.80%

#### Right Chart (Self-correction Metrics)

1. **Incorrect to Correct**:

- SFT: 20.00%

- SFT + Process-level RL: 22.17%

- SFT + Outcome-level RL: 19.55%

2. **Correct to Incorrect**:

- SFT: 8.42%

- SFT + Process-level RL: 5.39%

- SFT + Outcome-level RL: 3.93%

---

### Key Observations

1. **Process-level RL Improves Verification**:

- Verification Accuracy increases by 9.55 percentage points (58.31% → 67.86%, about 16% in relative terms) with Process-level RL.

- Error Recall and Correct Precision also improve, by 4.76 and 8.01 points respectively (81.91% → 86.67%, 65.58% → 73.59%).

2. **Outcome-level RL Has Mixed Effects**:

- Slightly lower Verification Accuracy (63.93%) compared to Process-level RL.

- Higher Error Recall (87.34%) but lower Correct Precision (69.80%) than Process-level RL.

3. **Self-correction Trade-offs**:

- Process-level RL achieves the highest **Incorrect to Correct** rate (22.17%).

- Outcome-level RL reduces **Correct to Incorrect** errors most effectively (3.93%).
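Because the gains above can be quoted either in percentage points or as a relative change, a small Python sketch helps keep the two apart. The helper names are illustrative; the inputs are the SFT and Process-level RL values from the chart.

```python
def point_gain(before, after):
    """Absolute change in percentage points."""
    return after - before


def relative_gain(before, after):
    """Change relative to the starting value, in percent."""
    return 100.0 * (after - before) / before


# (SFT, SFT + Process-level RL) pairs transcribed from the chart.
PROCESS_RL_VS_SFT = {
    "Verification Accuracy": (58.31, 67.86),
    "Error Recall": (81.91, 86.67),
    "Correct Precision": (65.58, 73.59),
}

for metric, (sft, process_rl) in PROCESS_RL_VS_SFT.items():
    points = point_gain(sft, process_rl)
    relative = relative_gain(sft, process_rl)
    print(f"{metric}: {points:+.2f} points ({relative:+.1f}% relative)")
```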

---

### Interpretation

The data suggests that **Process-level RL** gives the largest gains in Verification Accuracy and Correct Precision and the highest rate of fixing incorrect answers. **Outcome-level RL** also improves on SFT for all three verification metrics and achieves the highest Error Recall, but it trails Process-level RL on Verification Accuracy and Correct Precision. In self-correction, Outcome-level RL is the most effective at avoiding overcorrection (the lowest "Correct to Incorrect" rate), although its "Incorrect to Correct" rate is slightly below the SFT baseline. These results highlight the importance of balancing process- and outcome-focused rewards in reinforcement learning for language models.